Poularikas A. D.

Random Digital Signal Processing

The Handbook of Formulas and Tables for Signal Processing.

Ed. Alexander D. Poularikas

Boca Raton: CRC Press LLC, 1999

© 1999 by CRC Press LLC


35

Random Digital Signal Processing

35.1 Discrete-Random Processes

35.2 Signal Modeling

35.3 The Levinson Recursion

35.4 Lattice Filters

References

35.1 Discrete-Random Processes

35.1.1 Definitions

35.1.1.1 Discrete Stochastic Process

$\{x(1), x(2), \ldots, x(n)\}$ = one realization

35.1.1.2 Cumulative Distribution Function (c.d.f.)

$$F_x(x(n)) = \Pr\{X(n) \le x(n)\}$$

$X(n)$ = continuous r.v. at time $n$ (over the ensemble), $x(n)$ = the value of $X(n)$ at $n$.

35.1.1.3 Probability Density Function (p.d.f.)

$$f_x(x(n)) = \frac{\partial F_x(x(n))}{\partial x(n)}$$

35.1.1.4 Bivariate c.d.f.

$$F_x(x(n_1), x(n_2)) = \Pr\{X(n_1) \le x(n_1),\; X(n_2) \le x(n_2)\}$$

35.1.1.5 Joint p.d.f.

$$f_x(x(n_1), x(n_2)) = \frac{\partial^2 F_x(x(n_1), x(n_2))}{\partial x(n_1)\,\partial x(n_2)}$$

Note:

For multivariate forms extend (35.1.1.4) and (35.1.1.5).

35.1.2 Averages (expectation)

35.1.2.1 Average (mean value)

$$\mu(n) = E\{x(n)\} = \int_{-\infty}^{\infty} x(n)\, f_x(x(n))\, dx(n)$$

Relative frequency interpretation ($x_i(n)$ = value at time $n$ of the $i$th realization):

$$\mu(n) = \lim_{N\to\infty} \frac{1}{N}\sum_{i=1}^{N} x_i(n)$$


35.1.2.2 Correlation

$$R(m,n) = E\{x(m)\,x^*(n)\} \quad \text{(complex r.v.)}$$

$$R(m,n) = E\{x(m)\,x(n)\} = \int\!\!\int x(m)\,x(n)\,f(x(m), x(n))\,dx(m)\,dx(n) \quad \text{(real r.v.)}$$

Relative frequency interpretation:

$$R(m,n) = \lim_{N\to\infty}\frac{1}{N}\sum_{i=1}^{N} x_i(m)\,x_i(n)$$

Autocorrelation at equal times: $R(n,n) = E\{x(n)\,x^*(n)\} = E\{|x(n)|^2\}$

35.1.2.3 Covariance-Variance

$$C(m,n) = E\{[x(m)-\mu(m)][x(n)-\mu(n)]^*\} = E\{x(m)\,x^*(n)\} - \mu(m)\,\mu^*(n)$$

$$C(m,n) = \int\!\!\int [x(m)-\mu(m)][x(n)-\mu(n)]\,f(x(m), x(n))\,dx(m)\,dx(n) \quad \text{(real r.v.)}$$

$$C(n,n) = \sigma^2(n) = E\{|x(n)-\mu(n)|^2\} = \text{variance}$$

$$R(m,n) = C(m,n) \quad \text{if } \mu(m) = \mu(n) = 0$$

Example 1

Given $x(n) = A\,e^{j(n\omega_0 + \phi)}$ with $\phi$ a r.v. uniformly distributed between $-\pi$ and $\pi$. Then

$$E\{x(n)\} = E\{A\exp(j(n\omega_0 + \phi))\} = 0,$$

$$R_x(k,l) = E\{x(k)\,x^*(l)\} = E\{A\,e^{j(k\omega_0+\phi)}\,A^*e^{-j(l\omega_0+\phi)}\} = |A|^2 e^{j(k-l)\omega_0} = R_x(k-l),$$

which implies that the process is at least a wide-sense stationary process.

35.1.2.4 Independent r.v.

If $f(x(m), x(n)) = f(x(m))\,f(x(n))$ (p.d.f.), then $E\{x(m)\,x(n)\} = E\{x(m)\}\,E\{x(n)\}$ and $C(m,n) = 0$, i.e., the r.v.'s are uncorrelated. Independent implies uncorrelated, but the reverse is not always true.

35.1.2.5 Orthogonal r.v.

$$E\{x(m)\,x^*(n)\} = 0$$

35.1.3 Stationary Processes

35.1.3.1 Strict Stationary

$$F(x(n_1), x(n_2), \ldots, x(n_k)) = F(x(n_1+n_0), x(n_2+n_0), \ldots, x(n_k+n_0))$$

for any set $\{n_1, n_2, \ldots, n_k\}$, for any $k$ and any $n_0$.

35.1.3.2 Wide-sense Stationary (or weak)

$\mu_x(n) = \mu_x$ = constant for any $n$, $R_x(k,l) = R_x(k-l)$, and $C_x(0) < \infty$ (variance).
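Example 1 of 35.1.2.3 can be checked numerically. The sketch below averages over many independent draws of the random phase $\phi$, which is an average over the ensemble and not over time. The function name `ensemble_stats`, the amplitude A = 2, the frequency $\omega_0 = 0.3$, and the number of trials are illustrative assumptions and do not come from the text.

```python
import cmath
import math
import random


def ensemble_stats(A, omega0, k, l, trials=20000, seed=1):
    """Monte Carlo ensemble averages for x(n) = A exp(j(n*omega0 + phi)), phi ~ U(-pi, pi).

    Returns estimates of E{x(k)} and of R_x(k, l) = E{x(k) x*(l)}."""
    rng = random.Random(seed)
    mean_acc = 0j
    corr_acc = 0j
    for _ in range(trials):
        phi = rng.uniform(-math.pi, math.pi)        # one realization of the process
        xk = A * cmath.exp(1j * (k * omega0 + phi))
        xl = A * cmath.exp(1j * (l * omega0 + phi))
        mean_acc += xk
        corr_acc += xk * xl.conjugate()
    return mean_acc / trials, corr_acc / trials


A, omega0 = 2.0, 0.3
m, R52 = ensemble_stats(A, omega0, k=5, l=2)
_, R74 = ensemble_stats(A, omega0, k=7, l=4)        # same lag k - l = 3
theory = abs(A) ** 2 * cmath.exp(1j * 3 * omega0)   # |A|^2 e^{j(k-l) omega0}
print(abs(m), R52, R74, theory)
```

The random phase cancels in $x(k)x^*(l)$, so every realization gives the same product. The correlation estimate therefore matches $|A|^2e^{j(k-l)\omega_0}$ to rounding error, while the mean estimate shrinks only like $1/\sqrt{\text{trials}}$.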


35.1.3.3 Correlation Properties

1. Symmetry: $r_x(k) = r_x^*(-k)$; $r_x(k) = r_x(-k)$ for a real process

2. Mean Square Value: $r_x(0) = E\{|x(n)|^2\} \ge 0$

3. Maximum Value: $r_x(0) \ge |r_x(k)|$

4. Periodicity: If $r_x(k_0) = r_x(0)$ for some $k_0$, then $r_x(k)$ is periodic (with period $k_0$)

35.1.3.4 Autocorrelation Matrix

$$\mathbf{R}_x = E\{\mathbf{x}\mathbf{x}^H\} = \begin{bmatrix} r_x(0) & r_x^*(1) & \cdots & r_x^*(p) \\ r_x(1) & r_x(0) & \cdots & r_x^*(p-1) \\ \vdots & \vdots & \ddots & \vdots \\ r_x(p) & r_x(p-1) & \cdots & r_x(0) \end{bmatrix}, \qquad \mathbf{x} = [x(0), x(1), \ldots, x(p)]^T$$

The $H$ superscript indicates the conjugate transpose quantity (Hermitian).

Properties:

1. $\mathbf{R}_x = \mathbf{R}_x^H$ (Hermitian), or $r_x(k) = r_x^*(-k)$

2. $\mathbf{R}_x$ is a Toeplitz matrix

3. $\mathbf{R}_x$ is non-negative definite: $\mathbf{x}^H\mathbf{R}_x\mathbf{x} \ge 0$ for any vector $\mathbf{x}$

4. $E\{\mathbf{x}^B\mathbf{x}^{BH}\} = \mathbf{R}_x^T$, $\mathbf{x}^B = [x(p), x(p-1), \ldots, x(0)]^T$

35.1.3.5 Autocovariance Matrix

$$\mathbf{C}_x = E\{(\mathbf{x}-\mathbf{m}_x)(\mathbf{x}-\mathbf{m}_x)^H\} = \mathbf{R}_x - \mathbf{m}_x\mathbf{m}_x^H, \qquad \mathbf{m}_x = [m_x, m_x, \ldots, m_x]^T$$

35.1.3.6 Ergodic in the Mean

a. $\lim_{N\to\infty}\hat{m}_x(N) = m_x$ (in the mean-square sense), where $\hat{m}_x(N) = \frac{1}{N}\sum_{n=0}^{N-1} x(n)$ and $m_x = E\{x(n)\}$

b. $\lim_{N\to\infty}\frac{1}{N}\sum_{k=0}^{N-1} c_x(k) = 0$ (necessary and sufficient condition)

c. $c_x(0) < \infty$ and $\lim_{k\to\infty} c_x(k) = 0$ (sufficient condition)

35.1.3.7 Autocorrelation Ergodic

$$\lim_{N\to\infty}\frac{1}{N}\sum_{k=0}^{N-1} c_x^2(k) = 0$$

35.1.4 Special Random Signals

35.1.4.1 Independent Identically Distributed

If $f(x(0), x(1), \ldots) = f(x(0))\,f(x(1))\cdots$ for a zero-mean, stationary random signal, the elements $x(n)$ are said to be independent identically distributed (iid).

35.1.4.2 White Noise (sequence)

If $r_v(n-m) = E\{v(n)\,v^*(m)\} = \sigma_v^2\,\delta(n-m)$, with $\delta(\cdot)$ = delta function and $\sigma_v^2$ = variance of the white noise, the sequence $v(n)$ is white noise.
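The white-noise definition (35.1.4.2) and the Toeplitz form of the autocorrelation matrix (35.1.3.4) can be illustrated with a time-average estimate. This sketch assumes the process is ergodic, so that averages over one long realization stand in for the ensemble averages $E\{\cdot\}$. The biased estimator, the helper names, and the value $\sigma_v^2 = 2$ are choices made here for illustration only.

```python
import random


def sample_autocorr(x, max_lag):
    """Time-average (biased) estimate r(k) = (1/N) sum_{n=k}^{N-1} x(n) x(n-k), real data."""
    N = len(x)
    return [sum(x[n] * x[n - k] for n in range(k, N)) / N for k in range(max_lag + 1)]


def toeplitz_from_autocorr(r):
    """Real symmetric Toeplitz autocorrelation matrix with entries R[i][j] = r(|i - j|)."""
    size = len(r)
    return [[r[abs(i - j)] for j in range(size)] for i in range(size)]


rng = random.Random(7)
sigma_v2 = 2.0
v = [rng.gauss(0.0, sigma_v2 ** 0.5) for _ in range(50000)]   # zero-mean Gaussian white noise
r_hat = sample_autocorr(v, 3)
R_hat = toeplitz_from_autocorr(r_hat)   # should be close to sigma_v2 * I
print([round(c, 3) for c in r_hat])
```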


35.1.4.3 Correlation Matrix for White Noise

$$\mathbf{R}_v = \sigma_v^2\,\mathbf{I}$$

35.1.4.4 First-Order Markov Signal

$$f(x(n)\,|\,x(n-1), x(n-2), \ldots, x(0)) = f(x(n)\,|\,x(n-1))$$

35.1.4.5 Gaussian

1. $$f(x(i)) = \frac{1}{\sqrt{2\pi\sigma_i^2}}\exp\left[-\frac{(x(i)-\mu(i))^2}{2\sigma_i^2}\right]$$

2. $$f(x(n_0), x(n_1), \ldots, x(n_{L-1})) = f(\mathbf{x}) = \frac{1}{(2\pi)^{L/2}[\det(\mathbf{C})]^{1/2}}\exp\left[-\frac{1}{2}(\mathbf{x}-\boldsymbol{\mu})^T\mathbf{C}^{-1}(\mathbf{x}-\boldsymbol{\mu})\right],$$

$\mathbf{x} = [x(n_0), x(n_1), \ldots, x(n_{L-1})]^T$, $\boldsymbol{\mu} = [\mu(n_0), \mu(n_1), \ldots, \mu(n_{L-1})]^T$, $\mathbf{C}$ = covariance matrix for the elements of $\mathbf{x}$

3. $$f(\mathbf{x}) = \frac{1}{(2\pi)^{L/2}[\det(\mathbf{R})]^{1/2}}\exp\left[-\frac{1}{2}\mathbf{x}^T\mathbf{R}^{-1}\mathbf{x}\right],$$ $\mathbf{R}$ = correlation matrix, $E\{\mathbf{x}\} = \mathbf{0}$

4. If $x(n)$ is zero-mean Gaussian iid (Gaussian white noise), then $\mathbf{R} = \sigma_v^2\mathbf{I}$, $\mathbf{R}^{-1} = \mathbf{I}/\sigma_v^2$, and

$$f(\mathbf{x}) = \prod_{i=0}^{L-1} f(x(n_i)) = \frac{1}{(2\pi\sigma_v^2)^{L/2}}\exp\left[-\frac{1}{2\sigma_v^2}\sum_{i=0}^{L-1} x^2(n_i)\right]$$

5. Linear operation on a Gaussian signal produces a Gaussian signal.

35.1.5 Complex Random Signals

35.1.5.1 Complex Random Signal

$x = u + jv$, $u$ and $v$ real r.v., $x$ complex r.v.

35.1.5.2 Expectation

$$E\{x\} = E\{u\} + jE\{v\}$$

35.1.5.3 Second Moment

$$E\{|x|^2\} = E\{xx^*\} = E\{u^2\} + E\{v^2\}$$

35.1.5.4 Variance

$$\mathrm{var}(x) = E\{|x - E\{x\}|^2\} = E\{|x|^2\} - |E\{x\}|^2$$

35.1.5.5 Expectation of Two r.v.

$$E\{x_1^*x_2\} = E\{u_1u_2\} + E\{v_1v_2\} + j\left(E\{u_1v_2\} - E\{u_2v_1\}\right),$$

where the real vector $[u_1\; v_1\; u_2\; v_2]^T$ has a joint p.d.f.

35.1.5.6 Covariance of Two r.v.

$$\mathrm{cov}(x_1, x_2) = E\{x_1^*x_2\} - E^*\{x_1\}\,E\{x_2\}$$

Note:

If $[u_1\; v_1]^T$ is independent of $[u_2\; v_2]^T$ (equivalently, $x_1$ is independent of $x_2$), then $\mathrm{cov}(x_1, x_2) = 0$.

35.1.5.7 Expectation of Vectors

If $\mathbf{x} = [x_1\; x_2\; \cdots\; x_n]^T$, then $E\{\mathbf{x}\} = [E\{x_1\}\; E\{x_2\}\; \cdots\; E\{x_n\}]^T$.


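35.1.5.8 Covariance of $\mathbf{x}$

$$\mathbf{C}_x = E\{(\mathbf{x}-E\{\mathbf{x}\})(\mathbf{x}-E\{\mathbf{x}\})^H\} = \begin{bmatrix} \mathrm{var}(x_1) & \mathrm{cov}(x_1,x_2) & \cdots & \mathrm{cov}(x_1,x_n) \\ \mathrm{cov}(x_2,x_1) & \mathrm{var}(x_2) & \cdots & \mathrm{cov}(x_2,x_n) \\ \vdots & \vdots & \ddots & \vdots \\ \mathrm{cov}(x_n,x_1) & \mathrm{cov}(x_n,x_2) & \cdots & \mathrm{var}(x_n) \end{bmatrix}$$

$H$ denotes the complex conjugate transpose of a matrix.

Note:

$\mathbf{C}_x^H = \mathbf{C}_x$ (Hermitian), and $\mathbf{C}_x$ is positive semi-definite.

Example

$\mathbf{y} = \mathbf{A}\mathbf{x} + \mathbf{b}$, $E\{y_i\} = E\left\{\sum_{j=1}^{n} a_{ij}x_j + b_i\right\} = \sum_{j=1}^{n} a_{ij}E\{x_j\} + b_i$, so $E\{\mathbf{y}\} = \mathbf{A}E\{\mathbf{x}\} + \mathbf{b}$, and

$$\mathbf{C}_y = E\{(\mathbf{y}-E\{\mathbf{y}\})(\mathbf{y}-E\{\mathbf{y}\})^H\} = E\{\mathbf{A}(\mathbf{x}-E\{\mathbf{x}\})(\mathbf{x}-E\{\mathbf{x}\})^H\mathbf{A}^H\} = \mathbf{A}\mathbf{C}_x\mathbf{A}^H,$$

$$[\mathbf{C}_y]_{ij} = \sum_{k=1}^{n}\sum_{l=1}^{n} a_{ik}\,[\mathbf{C}_x]_{kl}\,a_{jl}^*$$

35.1.5.9 Complex Gaussian

If we write $x = u + jv$ with $u \sim N(\mu_u, \sigma^2/2)$, $v \sim N(\mu_v, \sigma^2/2)$, and $u$ and $v$ independent, then

$$f(u,v) = \frac{1}{\pi\sigma^2}\exp\left[-\frac{1}{\sigma^2}\left((u-\mu_u)^2 + (v-\mu_v)^2\right)\right].$$

With $\tilde{\mu} = E\{x\} = \mu_u + j\mu_v$, the complex Gaussian p.d.f. is

$$f(x) = \frac{1}{\pi\sigma^2}\exp\left[-\frac{|x-\tilde{\mu}|^2}{\sigma^2}\right], \qquad x \sim \mathcal{CN}(\tilde{\mu}, \sigma^2)$$

35.1.5.10 Complex Gaussian Vector

$\mathbf{x} = [x_1\; x_2\; \cdots\; x_n]^T$, each component of $\mathbf{x}$ is $x_i \sim \mathcal{CN}(\tilde{\mu}_i, \sigma_i^2)$, and the real random vectors $[u_1\; v_1]^T, [u_2\; v_2]^T, \ldots, [u_n\; v_n]^T$ are independent. The multivariate complex Gaussian p.d.f. is

$$f(\mathbf{x}) = \prod_{i=1}^{n} f(x_i) = \frac{1}{\pi^n\prod_{i=1}^{n}\sigma_i^2}\exp\left[-\sum_{i=1}^{n}\frac{|x_i-\tilde{\mu}_i|^2}{\sigma_i^2}\right] = \frac{1}{\pi^n\det(\mathbf{C}_x)}\exp\left[-(\mathbf{x}-\tilde{\boldsymbol{\mu}})^H\mathbf{C}_x^{-1}(\mathbf{x}-\tilde{\boldsymbol{\mu}})\right],$$

$\mathbf{C}_x = \mathrm{diag}(\sigma_1^2, \sigma_2^2, \ldots, \sigma_n^2)$; in general, $\mathbf{x} \sim \mathcal{CN}(\tilde{\boldsymbol{\mu}}, \mathbf{C}_x)$.

Properties

1. Any subvector of $\mathbf{x}$ is complex Gaussian.

2. If the $x_i$'s of $\mathbf{x}$ are uncorrelated, they are also independent, and vice versa.

3. Linear transformations again produce complex Gaussian vectors.

4. If $\mathbf{x} = [x_1\; x_2\; x_3\; x_4]^T \sim \mathcal{CN}(\mathbf{0}, \mathbf{C}_x)$, then

$$E\{x_1^*x_2x_3^*x_4\} = E\{x_1^*x_2\}\,E\{x_3^*x_4\} + E\{x_1^*x_4\}\,E\{x_2x_3^*\}$$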
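The construction in 35.1.5.9 and property 4 of 35.1.5.10 can be checked by simulation. Setting $x_1 = x_2 = x_3 = x_4 = x$ in property 4 gives $E\{|x|^4\} = 2\sigma^4$ for $x \sim \mathcal{CN}(0, \sigma^2)$. The sketch draws $u$ and $v$ independently from $N(0, \sigma^2/2)$, as in 35.1.5.9. The helper `sample_cn` and the value $\sigma^2 = 1.5$ are hypothetical choices for illustration.

```python
import random


def sample_cn(sigma2, n, seed=0):
    """Draw n samples of x = u + jv ~ CN(0, sigma2), with u, v independent N(0, sigma2/2)."""
    rng = random.Random(seed)
    s = (sigma2 / 2.0) ** 0.5
    return [complex(rng.gauss(0.0, s), rng.gauss(0.0, s)) for _ in range(n)]


sigma2 = 1.5
xs = sample_cn(sigma2, 200000)
m2 = sum(abs(z) ** 2 for z in xs) / len(xs)   # estimate of E{|x|^2}; theory: sigma2
m4 = sum(abs(z) ** 4 for z in xs) / len(xs)   # estimate of E{|x|^4}; theory: 2 sigma2^2
print(m2, m4, 2 * sigma2 ** 2)
```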


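35.1.6 Complex Wide Sense Stationary Random Processes

35.1.6.1 Mean

$$E\{x(n)\} = E\{u(n) + jv(n)\} = E\{u(n)\} + jE\{v(n)\}$$

35.1.6.2 Autocorrelation

$$r_{xx}(k) = E\{x^*(n)\,x(n+k)\} = E\{(u(n) - jv(n))(u(n+k) + jv(n+k))\}$$

$$= r_{uu}(k) + jr_{uv}(k) - jr_{vu}(k) + r_{vv}(k) = 2r_{uu}(k) + 2jr_{uv}(k),$$

where the last equality holds when $r_{vv}(k) = r_{uu}(k)$ and $r_{vu}(k) = -r_{uv}(k)$.

35.1.7 Derivatives, Gradients, and Optimization

35.1.7.1 Complex Derivative

$$\frac{\partial J}{\partial\theta} = \frac{1}{2}\left(\frac{\partial J}{\partial\alpha} - j\frac{\partial J}{\partial\beta}\right), \qquad \theta = \alpha + j\beta$$

$J$ = scalar function with respect to a complex parameter $\theta$.

Note:

For real $J$, $\partial J/\partial\theta = 0$ if and only if $\partial J/\partial\alpha = \partial J/\partial\beta = 0$.

Example

$$\frac{\partial\theta}{\partial\theta} = \frac{1}{2}(1 - j\cdot j) = 1, \qquad \frac{\partial\theta^*}{\partial\theta} = \frac{1}{2}(1 - j(-j)) = 0, \qquad \frac{\partial|\theta|^2}{\partial\theta} = \frac{1}{2}(2\alpha - j2\beta) = \theta^*$$

35.1.7.2 Chain Rule

$$\frac{\partial(J_1J_2)}{\partial\theta} = J_1\frac{\partial J_2}{\partial\theta} + J_2\frac{\partial J_1}{\partial\theta}$$

and hence

$$\frac{\partial(\theta\theta^*)}{\partial\theta} = \theta\cdot 0 + \theta^*\cdot 1 = \theta^*$$

35.1.7.3 Complex Conjugate Derivative

$$\frac{\partial J}{\partial\theta^*} = \frac{1}{2}\left(\frac{\partial J}{\partial\alpha} + j\frac{\partial J}{\partial\beta}\right) = \left(\frac{\partial J^*}{\partial\theta}\right)^*$$

Note:

For real $J$, setting $\partial J/\partial\theta^* = 0$ will produce the same solutions as $\partial J/\partial\theta = 0$.

35.1.7.4 Complex Vector Parameter

$$\frac{\partial J}{\partial\boldsymbol{\theta}} = \left[\frac{\partial J}{\partial\theta_1}\;\frac{\partial J}{\partial\theta_2}\;\cdots\;\frac{\partial J}{\partial\theta_p}\right]^T,$$

each element is given by (35.1.7.1).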


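Note:

$\partial J/\partial\boldsymbol{\theta} = \mathbf{0}$ if each element is zero, and hence (for real $J$) if and only if $\partial J/\partial\alpha_i = \partial J/\partial\beta_i = 0$ for all $i = 1, 2, \ldots, p$.

35.1.7.5 Hermitian Forms

1. $\dfrac{\partial(\mathbf{b}^H\boldsymbol{\theta})}{\partial\theta_k} = \dfrac{\partial}{\partial\theta_k}\sum_{i=1}^{p} b_i^*\theta_i = b_k^*$ (see 35.1.7.2), and hence $\dfrac{\partial(\mathbf{b}^H\boldsymbol{\theta})}{\partial\boldsymbol{\theta}} = \mathbf{b}^*$

2. $\dfrac{\partial(\boldsymbol{\theta}^H\mathbf{b})}{\partial\boldsymbol{\theta}} = \mathbf{0}$

3. $J = \boldsymbol{\theta}^H\mathbf{A}\boldsymbol{\theta}$ with $\mathbf{A}^H = \mathbf{A}$ ($J$ real): $\dfrac{\partial J}{\partial\theta_k} = \dfrac{\partial}{\partial\theta_k}\sum_{i=1}^{p}\sum_{j=1}^{p}\theta_i^*a_{ij}\theta_j = \sum_{i=1}^{p}\theta_i^*a_{ik} = \sum_{i=1}^{p}[\mathbf{A}^T]_{ki}\theta_i^*$, and hence $\dfrac{\partial(\boldsymbol{\theta}^H\mathbf{A}\boldsymbol{\theta})}{\partial\boldsymbol{\theta}} = \mathbf{A}^T\boldsymbol{\theta}^* = (\mathbf{A}\boldsymbol{\theta})^*$

35.1.8 Power Spectrum of Wide-Sense Stationary (WSS) Processes

35.1.8.1 Power Spectrum (power spectral density)

$$S_x(e^{j\omega}) = \sum_{k=-\infty}^{\infty} r_x(k)\,e^{-jk\omega}, \qquad r_x(k) = E\{x(n)\,x^*(n-k)\}, \qquad r_x(k) = \frac{1}{2\pi}\int_{-\pi}^{\pi} S_x(e^{j\omega})\,e^{jk\omega}\,d\omega,$$

$x(n)$ WSS.

Properties

1. $S_x(e^{j\omega})$ is a real-valued function

2. $S_x(e^{j\omega})$ is non-negative: $S_x(e^{j\omega}) \ge 0$

3. $r_x(0) = E\{|x(n)|^2\} = \dfrac{1}{2\pi}\displaystyle\int_{-\pi}^{\pi} S_x(e^{j\omega})\,d\omega$

Example

$r_x(k) = a^{|k|}$, $|a| < 1$:

$$S_x(e^{j\omega}) = \sum_{k=-\infty}^{\infty} a^{|k|}e^{-jk\omega} = \sum_{k=1}^{\infty} a^k e^{jk\omega} + \sum_{k=0}^{\infty} a^k e^{-jk\omega} = \frac{ae^{j\omega}}{1 - ae^{j\omega}} + \frac{1}{1 - ae^{-j\omega}} = \frac{1 - a^2}{1 - 2a\cos\omega + a^2}$$

35.1.9 Filtering Wide-Sense Stationary (WSS) Processes and Spectral Factorization

35.1.9.1 Output Autocorrelation

$$r_y(k) = r_x(k) * h(k) * h^*(-k)$$

$r_y(k)$ = autocorrelation of the output of a linear time-invariant system, $h(k)$ = impulse response of the system, $r_x(k)$ = autocorrelation of the WSS input process $x(n)$.

35.1.9.2 Output Power

$$S_y(e^{j\omega}) = S_x(e^{j\omega})\,|H(e^{j\omega})|^2, \qquad S_y(z) = S_x(z)\,H(z)\,H^*(1/z^*) \quad \text{(Z-transforms)}$$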
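The Example in 35.1.8.1 can be checked numerically. Summing the truncated series for $r_x(k) = a^{|k|}$ should reproduce the closed form, and averaging $S_x(e^{j\omega})$ over one period should return $r_x(0) = 1$ (property 3). The sketch assumes a real $a = 0.6$, truncation at $|k| \le 200$, and a rectangle-rule integral. None of these choices come from the text, and the function names are hypothetical.

```python
import math


def psd_truncated(a, omega, K=200):
    """sum_{k=-K}^{K} a^{|k|} e^{-jk omega}; for real a the sine terms cancel, leaving cosines."""
    return 1.0 + 2.0 * sum(a ** k * math.cos(k * omega) for k in range(1, K + 1))


def psd_closed_form(a, omega):
    return (1.0 - a * a) / (1.0 - 2.0 * a * math.cos(omega) + a * a)


def mean_power(a, M=4096):
    """(1/2pi) * integral of S_x over [-pi, pi), by the rectangle rule on M uniform points."""
    return sum(psd_closed_form(a, -math.pi + 2 * math.pi * m / M) for m in range(M)) / M


a = 0.6
for w in (0.0, 0.7, math.pi):
    print(w, psd_truncated(a, w), psd_closed_form(a, w))
print(mean_power(a))   # property 3: should equal r_x(0) = 1
```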


If $h(n)$ is real, then $S_y(z) = S_x(z)\,H(z)\,H(z^{-1})$.

Note:

If $H(z)$ has a zero at $z = z_0$, then $S_y(z)$ will have a zero at $z = z_0$ and another at $z = 1/z_0^*$.

35.1.9.3 Spectral Factorization

$$S_x(z) = \sigma_v^2\,Q(z)\,Q^*(1/z^*), \qquad \sigma_v^2 = \exp\left\{\frac{1}{2\pi}\int_{-\pi}^{\pi}\ln S_x(e^{j\omega})\,d\omega\right\},$$

$S_x(z)$ = Z-transform of the autocorrelation of a WSS process $x(n)$, $Q(z)$ = minimum phase factor with $q(0) = 1$, $\sigma_v^2$ = variance of the white noise.

35.1.9.4 Rational Function

$$S_x(z) = \sigma_v^2\,\frac{B(z)}{A(z)}\,\frac{B^*(1/z^*)}{A^*(1/z^*)},$$

$B(z) = 1 + b(1)z^{-1} + \cdots + b(q)z^{-q}$, $A(z) = 1 + a(1)z^{-1} + \cdots + a(p)z^{-p}$.

Note:

$S_x(z) = S_x^*(1/z^*)$ since $S_x(e^{j\omega})$ is real. This implies that for each pole (or zero) in $S_x(z)$ there will be a matching pole (or zero) at the conjugate reciprocal location.

35.1.9.5 Wold Decomposition

Any random process can be written in the form $x(n) = x_r(n) + x_p(n)$, where $x_r(n)$ = regular random process, $x_p(n)$ = predictable process, and $x_r(n)$ and $x_p(n)$ are orthogonal: $E\{x_r(m)\,x_p^*(n)\} = 0$.

Note:

$$S_x(e^{j\omega}) = S_{x_r}(e^{j\omega}) + \sum_{k=1}^{N}\alpha_k\,\delta(\omega - \omega_k)$$

continuous spectrum + line spectrum

35.1.10 Special Types of Random Processes ($x(n)$ WSS process)

35.1.10.1 Autoregressive Moving Average (ARMA)

$$S_x(z) = \sigma_v^2\,\frac{B_q(z)\,B_q^*(1/z^*)}{A_p(z)\,A_p^*(1/z^*)}, \qquad H(z) = \frac{B_q(z)}{A_p(z)},$$

$\sigma_v^2$ = variance of the input white noise, $H(z)$ = causal linear time-invariant filter, $S_x(z)$ = output power spectrum.

35.1.10.2 Power Spectrum of ARMA Process

$$S_x(e^{j\omega}) = \sigma_v^2\,\frac{|B_q(e^{j\omega})|^2}{|A_p(e^{j\omega})|^2} \qquad (z = e^{j\omega})$$

35.1.10.3 Yule-Walker Equations for ARMA Process

$$r_x(k) + \sum_{l=1}^{p} a_p(l)\,r_x(k-l) = \begin{cases}\sigma_v^2\,c_q(k), & 0 \le k \le q \\ 0, & k > q\end{cases}, \qquad c_q(k) = b_q(k) * h^*(-k) = \sum_{l=0}^{q-k} b_q(l+k)\,h^*(l)$$

Matrix form:


$$\begin{bmatrix} r_x(0) & r_x(-1) & \cdots & r_x(-p) \\ r_x(1) & r_x(0) & \cdots & r_x(-p+1) \\ \vdots & \vdots & & \vdots \\ r_x(q) & r_x(q-1) & \cdots & r_x(q-p) \\ r_x(q+1) & r_x(q) & \cdots & r_x(q-p+1) \\ \vdots & \vdots & & \vdots \\ r_x(q+p) & r_x(q+p-1) & \cdots & r_x(q) \end{bmatrix}\begin{bmatrix} 1 \\ a_p(1) \\ \vdots \\ a_p(p) \end{bmatrix} = \sigma_v^2\begin{bmatrix} c_q(0) \\ c_q(1) \\ \vdots \\ c_q(q) \\ 0 \\ \vdots \\ 0 \end{bmatrix}$$

35.1.10.4 Extrapolation of Correlation

$$r_x(k) = -\sum_{l=1}^{p} a_p(l)\,r_x(k-l), \qquad k > p, \quad \text{for given } r_x(0), \ldots, r_x(p)$$

35.1.10.5 Autoregressive Process (AR)

$$H(z) = \frac{b(0)}{1 + \sum_{k=1}^{p} a_p(k)z^{-k}} = \frac{b(0)}{A_p(z)}, \qquad S_x(z) = \sigma_v^2\,\frac{|b(0)|^2}{A_p(z)\,A_p^*(1/z^*)},$$

$S_x(z)$ = power spectrum of the output of the filter.

35.1.10.6 Power Spectrum of AR Process

$$S_x(e^{j\omega}) = \sigma_v^2\,\frac{|b(0)|^2}{|A_p(e^{j\omega})|^2}$$

35.1.10.7 Yule-Walker Equation for AR Process

Set $q = 0$ in (35.1.10.3), and with $c_0(0) = b(0)\,h^*(0) = |b(0)|^2$ the equations are:

$$r_x(k) + \sum_{l=1}^{p} a_p(l)\,r_x(k-l) = \sigma_v^2\,|b(0)|^2\,\delta(k), \qquad k \ge 0$$

In matrix form:

$$\begin{bmatrix} r_x(0) & r_x^*(1) & \cdots & r_x^*(p) \\ r_x(1) & r_x(0) & \cdots & r_x^*(p-1) \\ \vdots & \vdots & & \vdots \\ r_x(p) & r_x(p-1) & \cdots & r_x(0) \end{bmatrix}\begin{bmatrix} 1 \\ a_p(1) \\ \vdots \\ a_p(p) \end{bmatrix} = \sigma_v^2\,|b(0)|^2\begin{bmatrix} 1 \\ 0 \\ \vdots \\ 0 \end{bmatrix},$$

which is linear in the coefficients $a_p(k)$.

35.1.10.8 Moving Average Process (MA)

$$H(z) = B_q(z) = \sum_{k=0}^{q} b_q(k)z^{-k}, \qquad S_x(z) = \sigma_v^2\,B_q(z)\,B_q^*(1/z^*),$$

$S_x(z)$ = power spectrum of the output of the filter.
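For a real AR(1) process, three results can be checked against the closed-form autocorrelation $r_x(k) = \sigma_v^2 b^2(0)(-a(1))^{|k|}/(1 - a^2(1))$:

- the Yule-Walker equations of 35.1.10.7,
- the extrapolation rule of 35.1.10.4,
- the spectral-factorization formula of 35.1.9.3.

Because $Q(z) = 1/A_1(z)$ is monic, the last of these should return $\sigma_v^2 b^2(0)$. The sketch assumes $|a(1)| < 1$ (so $A_1(z)$ is minimum phase) and real coefficients. It uses the illustrative values $a(1) = -0.8$, $b(0) = 1.5$, $\sigma_v^2 = 2$. The helper names are hypothetical.

```python
import math


def ar1_autocorr(a1, b0, sigma_v2, kmax):
    """Closed-form r_x(0..kmax) of x(n) = -a1 x(n-1) + b0 v(n), |a1| < 1, v white with variance sigma_v2."""
    r0 = sigma_v2 * b0 ** 2 / (1.0 - a1 ** 2)
    return [r0 * (-a1) ** k for k in range(kmax + 1)]


def extrapolate(a, r_init, kmax):
    """35.1.10.4 (real case): r(k) = -sum_{l=1}^{p} a(l) r(k-l) for k > p, given r(0..p)."""
    p = len(a)
    r = list(r_init)
    for k in range(p + 1, kmax + 1):
        r.append(-sum(a[l - 1] * r[k - l] for l in range(1, p + 1)))
    return r


def innovation_variance(S, M=4096):
    """35.1.9.3: exp{(1/2pi) int ln S(e^{jw}) dw}, rectangle rule on M uniform points."""
    return math.exp(sum(math.log(S(-math.pi + 2 * math.pi * m / M)) for m in range(M)) / M)


a1, b0, sigma_v2 = -0.8, 1.5, 2.0
r = ar1_autocorr(a1, b0, sigma_v2, 6)


def S(w):
    """AR(1) power spectrum sigma_v2 |b0|^2 / |1 + a1 e^{-jw}|^2 (35.1.10.6)."""
    return sigma_v2 * b0 ** 2 / abs(1 + a1 * complex(math.cos(w), -math.sin(w))) ** 2


# Yule-Walker with p = 1: r(0) + a1 r(1) = sigma_v2 |b0|^2 and r(1) + a1 r(0) = 0
print(r[0] + a1 * r[1], sigma_v2 * b0 ** 2)
print(r[1] + a1 * r[0])
print(extrapolate([a1], r[:2], 6))   # rebuilds r(2..6) from r(0), r(1)
print(innovation_variance(S))        # close to sigma_v2 * b0**2
```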


35.1.10.9 Power Spectrum of MA Process

35.1.10.10 Yule-Walker Equation of MA Process

From (35.1.10.3) with and noting that then

MA(q) process is zero for

k

outside

35.2 Signal Modeling

35.2.1 The Pade Approximation

35.2.1.1 Pade Approximation

and This means

that the data t exactly to the model over the range See Figure (35.1) for the model. The

system function is (see Section 35.1.10)

35.2.1.2 Matrix Form of Pade Approximation

Note:

Solve for

a

p

(

p

)s rst (the lower part of the matrix) and then solve for

b

q

(

q

)s.

35.2.1.3 Denominator Coefcients (

a

p

(

p

))

From the lower part of 35.2.1.2 we nd

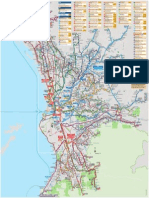

FIGURE 35.1

x

(

n

) modeled as unit sample response of linear shift-invariant system with

p

poles and

q

zeros.

S e B e

x

j

v q

j

( ) ( )

2

2

a k

p

( ) 0 h n b n

q

( ) ( ),

c k b k b r k b k b k b k b

q

q k

q q x v q q v

q k

q q

( ) ( ) ( ), ( ) ( ) ( ) ( ) ( ), + +

l l

l l l l

0

2 2

0

[ , ]. q q

x n a k x n k

b n n q

n q q p

k

p

p

q

( ) ( ) ( )

( ) , , ,

, ,

+

+ +

'

1

0 1

0 1

L

L

h n x n n p q h n n ( ) ( ) , , , , ( ) + < for for 0 1 0 0 L b n n n q

q

( ) . < > 0 0 for and

[ , ]. 0 p q +

H z B z A z

q p

( ) ( ) / ( ).

h(n)

e(n)

+

+

- (n)

x(n)

A

p

(z)

B

q

(z)

H(z) =

x

x x

x x

x q x q x q p

x q x q x q p

x q p x q p x q

( )

( ) ( )

( ) ( )

( ) ( ) ( )

( ) ( ) ( )

( ) ( ) ( )

0 0 0

1 0 0

2 1 0

1

1 1

1

L

L

L

M

L

L

M

L

+ +

+ +

1

]

1

1

1

1

11

1

1

1

1

1

1

1

1

]

1

1

1

1

1

1

1

]

1

1

1

1

1

1

1

1

1

1

1

1

1

2

0

1

0

0

a

a

a p

b

b

b q

p

p

p

q

q

q

( )

( )

( )

( )

( )

( )

M

M

M


$$\begin{bmatrix} x(q) & x(q-1) & \cdots & x(q-p+1) \\ x(q+1) & x(q) & \cdots & x(q-p+2) \\ \vdots & \vdots & & \vdots \\ x(q+p-1) & x(q+p-2) & \cdots & x(q) \end{bmatrix}\begin{bmatrix} a_p(1) \\ a_p(2) \\ \vdots \\ a_p(p) \end{bmatrix} = -\begin{bmatrix} x(q+1) \\ x(q+2) \\ \vdots \\ x(q+p) \end{bmatrix}$$

or equivalently $\mathbf{X}_q\bar{\mathbf{a}}_p = -\mathbf{x}_{q+1}$, where $\mathbf{X}_q$ = nonsymmetric Toeplitz matrix with the $x(q)$ element in the upper left corner, and $\mathbf{x}_{q+1}$ = vector with its first element being $x(q+1)$.

Note:

1. If $\mathbf{X}_q$ is nonsingular, then $\mathbf{X}_q^{-1}$ exists and there is a unique solution for the $a_p(p)$'s: $\bar{\mathbf{a}}_p = -\mathbf{X}_q^{-1}\mathbf{x}_{q+1}$.

2. If $\mathbf{X}_q$ is singular and (35.2.1.3) has a solution $\bar{\mathbf{a}}_p$, then $\mathbf{X}_q\mathbf{z} = \mathbf{0}$ has a nonzero solution and, hence, there is a solution of the form $\hat{\mathbf{a}}_p = \bar{\mathbf{a}}_p + \mathbf{z}$.

3. If $\mathbf{X}_q$ is singular and no solution exists, we must set $a_p(0) = 0$ and solve the equation $\mathbf{X}_q\mathbf{a}_p = \mathbf{0}$.

35.2.1.4 All Pole Model

With $q = 0$, $H(z) = b(0)\big/\left(1 + \sum_{k=1}^{p} a_p(k)z^{-k}\right)$, and (35.2.1.3) becomes

$$\begin{bmatrix} x(0) & 0 & \cdots & 0 \\ x(1) & x(0) & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ x(p-1) & x(p-2) & \cdots & x(0) \end{bmatrix}\begin{bmatrix} a_p(1) \\ a_p(2) \\ \vdots \\ a_p(p) \end{bmatrix} = -\begin{bmatrix} x(1) \\ x(2) \\ \vdots \\ x(p) \end{bmatrix}$$

or $\mathbf{X}_0\bar{\mathbf{a}}_p = -\mathbf{x}_1$, or

$$a_p(k) = -\frac{1}{x(0)}\left[x(k) + \sum_{l=1}^{k-1} a_p(l)\,x(k-l)\right], \qquad b(0) = x(0)$$

Example 1

Given $\mathbf{x} = [1\;\; 1.5\;\; 0.75\;\; 0.21\;\; 0.18\;\; 0.05]^T$, find the Pade approximation of an all-pole, second-order model ($p = 2$, $q = 0$). From (35.2.1.4), $x(0)\,a(1) = -x(1)$, so $a(1) = -1.5$. Also $x(1)\,a(1) + x(0)\,a(2) = -x(2)$, or $1.5(-1.5) + a(2) = -0.75$, so $a(2) = 1.5$. Further, $b(0) = x(0) = 1$ and $H(z) = 1/[1 - 1.5z^{-1} + 1.5z^{-2}]$. Since $\hat{x}(n) = h(n)$, we obtain the approximation

$$\hat{\mathbf{x}} = [1\;\; 1.5\;\; 0.75\;\; -1.125\;\; -2.8125\;\; -2.5312]^T.$$

Note: The model matches $x(n)$ only for $n = 0, 1, 2$ (to the second order only).

35.2.2 Prony's Method

35.2.2.1 Prony's Signal Modeling

Figure 35.2 shows the system representation.

FIGURE 35.2 System interpretation of Prony's method for signal modeling.
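The Pade all-pole Example 1 of 35.2.1.4 can be reproduced with forward substitution, because with $q = 0$ the matrix $\mathbf{X}_0$ is lower triangular. The sketch below assumes real data and uses hypothetical helper names. `impulse_response` simply runs the difference equation of $H(z) = b(0)/A_p(z)$.

```python
def pade_all_pole(x, p):
    """Pade all-pole model (q = 0), 35.2.1.4, solved by forward substitution:
    a(k) = -[x(k) + sum_{l=1}^{k-1} a(l) x(k-l)] / x(0), k = 1..p.
    Returns (b0, [a(1), ..., a(p)])."""
    a = []
    for k in range(1, p + 1):
        acc = x[k] + sum(a[l - 1] * x[k - l] for l in range(1, k))
        a.append(-acc / x[0])
    return x[0], a


def impulse_response(b0, a, n_samples):
    """Unit sample response of H(z) = b0 / (1 + sum_k a(k) z^{-k})."""
    h = []
    for n in range(n_samples):
        val = b0 if n == 0 else 0.0
        val -= sum(a[k - 1] * h[n - k] for k in range(1, len(a) + 1) if n - k >= 0)
        h.append(val)
    return h


x = [1, 1.5, 0.75, 0.21, 0.18, 0.05]
b0, a = pade_all_pole(x, 2)
print(b0, a)                          # a(1) = -1.5, a(2) = 1.5
print(impulse_response(b0, a, 6))     # agrees with x(n) only for n = 0, 1, 2
```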


35.2.2.2 Normal Equations

$$\sum_{l=1}^{p} a_p(l)\,r_x(k,l) = -r_x(k,0), \quad k = 1, 2, \ldots, p; \qquad r_x(k,l) = \sum_{n=q+1}^{\infty} x(n-l)\,x^*(n-k)$$

Note: The infinity indicates a nonfinite (infinite-length) data sequence.

35.2.2.3 Numerator

$$b_q(n) = x(n) + \sum_{k=1}^{p} a_p(k)\,x(n-k), \qquad n = 0, 1, \ldots, q$$

35.2.2.4 Minimum Square Error

$$\epsilon_{p,q} = r_x(0,0) + \sum_{k=1}^{p} a_p(k)\,r_x(0,k)$$

Example 1

Let $x(n) = 1$ for $n = 0, 1, \ldots, N-1$, and $x(n) = 0$ otherwise. Use Prony's method to model $x(n)$ as the unit sample response of a linear time-invariant filter with one pole and one zero, $H(z) = (b(0) + b(1)z^{-1})/(1 + a(1)z^{-1})$.

Solutions

From (35.2.2.2) with $p = q = 1$, $a(1)\,r_x(1,1) = -r_x(1,0)$, where $r_x(1,1) = \sum_{n=2}^{\infty} x^2(n-1) = N-1$ and $r_x(1,0) = \sum_{n=2}^{\infty} x(n)\,x(n-1) = N-2$.

Hence $a(1) = -r_x(1,0)/r_x(1,1) = -(N-2)/(N-1)$, and the denominator becomes

$$A(z) = 1 - \frac{N-2}{N-1}\,z^{-1}.$$

Numerator Coefficients

$$b(0) = x(0) = 1, \qquad b(1) = x(1) + a(1)\,x(0) = 1 - \frac{N-2}{N-1} = \frac{1}{N-1}.$$

The minimum square error is $\epsilon_{1,1} = r_x(0,0) + a(1)\,r_x(0,1)$. But $r_x(0,0) = \sum_{n=2}^{\infty} x^2(n) = N-2$ and $r_x(0,1) = r_x(1,0) = N-2$, which gives $\epsilon_{1,1} = (N-2) - (N-2)^2/(N-1) = (N-2)/(N-1)$.

For $N = 21$, $H(z) = (1 + 0.05z^{-1})/(1 - 0.95z^{-1})$ and $h(n) = \delta(n) + (0.95)^{n-1}u(n-1)$. For comparison, with $e(n) = x(n) - h(n)$, the error is $\sum_{n=0}^{\infty}[e(n)]^2 \approx 4.596$.

35.2.3 Shanks' Method

35.2.3.1 Shanks' Signal Modeling

Figure 35.3 shows the system representation.
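The Prony pole-zero example of 35.2.2.4 ($N = 21$) can be checked with the sketch below. It assumes real data and evaluates the infinite sums $r_x(k,l)$ of 35.2.2.2 over the finite support of $x(n)$. When summing the squared error, it truncates the model response $h(n)$ at 400 samples; the neglected tail is below $10^{-17}$. The function name is hypothetical.

```python
def prony_one_pole_one_zero(x, n_terms):
    """Prony's method with p = 1, q = 1 for real x(n), taken as zero outside 0..len(x)-1.

    Returns a(1), [b(0), b(1)], the minimum error eps_{1,1}, and h(0..n_terms-1)."""
    q = 1

    def X(n):
        return x[n] if 0 <= n < len(x) else 0.0

    def r(k, l):
        # r_x(k, l) = sum_{n=q+1}^{inf} x(n-l) x(n-k); the terms vanish for n > len(x) + 1
        return sum(X(n - l) * X(n - k) for n in range(q + 1, len(x) + 2))

    a1 = -r(1, 0) / r(1, 1)                   # normal equation a(1) r(1,1) = -r(1,0)
    b = [X(0), X(1) + a1 * X(0)]              # numerator, 35.2.2.3
    eps = r(0, 0) + a1 * r(0, 1)              # minimum square error, 35.2.2.4
    h = []                                    # unit sample response of (b0 + b1 z^-1)/(1 + a1 z^-1)
    for n in range(n_terms):
        val = (b[0] if n == 0 else 0.0) + (b[1] if n == 1 else 0.0)
        if n >= 1:
            val -= a1 * h[n - 1]
        h.append(val)
    return a1, b, eps, h


N = 21
x = [1.0] * N
a1, b, eps, h = prony_one_pole_one_zero(x, 400)
total_error = sum(((x[n] if n < N else 0.0) - h[n]) ** 2 for n in range(400))
print(a1, b, eps)       # -(N-2)/(N-1), [1, 1/(N-1)], (N-2)/(N-1)
print(total_error)      # sum of [x(n) - h(n)]^2, about 4.596
```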


35.2.3.2 Numerator of H(z)

$$\sum_{l=0}^{q} b_q(l)\,r_g(k-l) = r_{xg}(k), \quad k = 0, 1, \ldots, q; \qquad r_g(k-l) = \sum_{n=0}^{\infty} g(n-l)\,g^*(n-k),$$

$$r_{xg}(k) = \sum_{n=0}^{\infty} x(n)\,g^*(n-k), \qquad g(n) = \delta(n) - \sum_{k=1}^{p} a_p(k)\,g(n-k)$$

35.2.4 All-Pole Model-Prony's Method

35.2.4.1 Transfer Function

$$H(z) = \frac{b(0)}{1 + \sum_{k=1}^{p} a_p(k)z^{-k}}$$

35.2.4.2 Normal Equations

$$\sum_{l=1}^{p} a_p(l)\,r_x(k-l) = -r_x(k), \quad k = 1, 2, \ldots, p; \qquad r_x(k) = \sum_{n=0}^{\infty} x(n)\,x^*(n-k), \quad x(n) = 0 \text{ for } n < 0$$

35.2.4.3 Numerator

$b(0) = \sqrt{\epsilon_p}$, $\epsilon_p$ = minimum error (see 35.2.4.4)

35.2.4.4 Minimum Error

$$\epsilon_p = r_x(0) + \sum_{k=1}^{p} a_p(k)\,r_x^*(k)$$

35.2.5 Finite Data Record Prony All-Pole Model

35.2.5.1 Normal Equations

$$\sum_{l=1}^{p} a_p(l)\,r_x(k-l) = -r_x(k), \quad k = 1, 2, \ldots, p; \qquad r_x(k) = \sum_{n=k}^{N} x(n)\,x^*(n-k), \quad k \ge 0,$$

with $x(n) = 0$ for $n < 0$ and $n > N$.

FIGURE 35.3 Shanks' method. The denominator $A_p(z)$ is found using Prony's method, and $B_q(z)$ is found by minimizing the sum of the squares of the error $e(n)$. (Block diagram: $\delta(n)$ drives $1/A_p(z)$ to give $g(n)$, which drives $B_q(z)$ to give $\hat{x}(n)$; $e(n) = x(n) - \hat{x}(n)$.)


35.2.5.2 Minimum Error

$$\epsilon_p = r_x(0) + \sum_{k=1}^{p} a_p(k)\,r_x^*(k)$$

35.2.6 Finite Data Record-Covariance Method of All-Pole Model

35.2.6.1 Normal Equation

$$\sum_{l=1}^{p} a_p(l)\,r_x(k,l) = -r_x(k,0), \quad k = 1, 2, \ldots, p; \qquad r_x(k,l) = \sum_{n=p}^{N} x(n-l)\,x^*(n-k)$$

35.2.6.2 Minimum Error

$$[\epsilon_p^C]_{\min} = r_x(0,0) + \sum_{k=1}^{p} a_p(k)\,r_x(0,k)$$

Example

$\mathbf{x} = [1\;\; \alpha\;\; \alpha^2\;\; \cdots\;\; \alpha^N]^T$. Use the first-order model $H(z) = b(0)/(1 + a(1)z^{-1})$, $p = 1$, and 35.2.6.1 becomes $\sum_{l=1}^{1} a(l)\,r_x(k,l) = -r_x(k,0)$. For $k = 1$, 35.2.6.1 becomes $a(1)\,r_x(1,1) = -r_x(1,0)$, and we must find $r_x(1,1)$ and $r_x(1,0)$:

$$r_x(1,1) = \sum_{n=1}^{N} x^2(n-1) = \sum_{n=0}^{N-1}\alpha^{2n} = \frac{1 - \alpha^{2N}}{1 - \alpha^2}, \qquad r_x(1,0) = \sum_{n=1}^{N} x(n)\,x(n-1) = \alpha\,\frac{1 - \alpha^{2N}}{1 - \alpha^2}.$$

From 35.2.6.1 also $a(1) = -r_x(1,0)/r_x(1,1) = -\alpha$. But $x(0) = 1$ and $h(0) = b(0)$, with $b(0) = 1$ so that $x(0) = h(0)$, and the model is $H(z) = 1/[1 - \alpha z^{-1}]$.

35.3 The Levinson Recursion

35.3.1 The Levinson-Durbin Recursion

35.3.1.1 All-Pole Modeling

$$r_x(k) + \sum_{l=1}^{p} a_p(l)\,r_x(k-l) = 0, \quad k = 1, 2, \ldots, p; \qquad \epsilon_p = r_x(0) + \sum_{l=1}^{p} a_p(l)\,r_x^*(l),$$

$$r_x(k) = \sum_{n=k}^{N} x(n)\,x^*(n-k), \quad k \ge 0, \quad x(n) = 0 \text{ for } n < 0 \text{ and } n > N$$


35.3.1.2 All-Pole Matrix Format

$$\begin{bmatrix} r_x(0) & r_x^*(1) & r_x^*(2) & \cdots & r_x^*(p) \\ r_x(1) & r_x(0) & r_x^*(1) & \cdots & r_x^*(p-1) \\ r_x(2) & r_x(1) & r_x(0) & \cdots & r_x^*(p-2) \\ \vdots & \vdots & \vdots & & \vdots \\ r_x(p) & r_x(p-1) & r_x(p-2) & \cdots & r_x(0) \end{bmatrix}\begin{bmatrix} 1 \\ a_p(1) \\ a_p(2) \\ \vdots \\ a_p(p) \end{bmatrix} = \epsilon_p\begin{bmatrix} 1 \\ 0 \\ 0 \\ \vdots \\ 0 \end{bmatrix}$$

or $\mathbf{R}_p\mathbf{a}_p = \epsilon_p\mathbf{u}_1$, $\mathbf{u}_1 = [1, 0, \ldots, 0]^T$, where $\mathbf{R}_p$ is Hermitian Toeplitz (symmetric Toeplitz for real data).

35.3.1.3 Solution of (35.3.1.2)

1. Initialization of the recursion

a) $a_0(0) = 1$

b) $\epsilon_0 = r_x(0)$

2. For $j = 0, 1, \ldots, p-1$

a) $\gamma_j = r_x(j+1) + \sum_{i=1}^{j} a_j(i)\,r_x(j-i+1)$

b) $\Gamma_{j+1} = -\gamma_j/\epsilon_j$, reflection coefficient

c) For $i = 1, 2, \ldots, j$: $a_{j+1}(i) = a_j(i) + \Gamma_{j+1}\,a_j^*(j-i+1)$

d) $a_{j+1}(j+1) = \Gamma_{j+1}$

e) $\epsilon_{j+1} = \epsilon_j\left[1 - |\Gamma_{j+1}|^2\right]$

3. $b(0) = \sqrt{\epsilon_p}$ (see 35.3.1.1)

35.3.1.4 Properties

1. The $\Gamma_j$'s produced by solving the autocorrelation normal equations (see Section 35.2.4) obey the relation $|\Gamma_j| \le 1$.

2. If $|\Gamma_j| < 1$ for all $j$, then $A_p(z) = 1 + \sum_{k=1}^{p} a_p(k)z^{-k}$ is a minimum phase polynomial (all roots lie inside the unit circle).

3. If $\mathbf{a}_p$ is the solution to the Toeplitz normal equations $\mathbf{R}_p\mathbf{a}_p = \epsilon_p\mathbf{u}_1$ (see 35.3.1.2) and $\mathbf{R}_p > 0$ (positive definite), then $A_p(z)$ is minimum phase.

4. If we choose $b(0) = \sqrt{\epsilon_p}$ (energy matching constraint), then the autocorrelation sequences of $x(n)$ and $h(n)$ are equal for $|k| \le p$.
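Here is a direct transcription of the Levinson-Durbin steps in 35.3.1.3. It is a sketch for real-valued autocorrelations, so the conjugate in step 2c drops out. The function name and the test values $r_x(k) = [1, 0.5, 0.5]$ are illustrative assumptions; this sequence gives a positive definite $\mathbf{R}_2$. The result can be checked against the normal equations $\mathbf{R}_p\mathbf{a}_p = \epsilon_p\mathbf{u}_1$ of 35.3.1.2.

```python
def levinson_durbin(r, p):
    """Levinson-Durbin recursion (35.3.1.3) for real autocorrelation values r[0..p].

    Returns a = [1, a_p(1), ..., a_p(p)], the reflection coefficients Gamma_1..Gamma_p,
    and the modeling errors eps_0..eps_p."""
    a = [1.0]                                   # step 1a: a_0(0) = 1
    eps = [float(r[0])]                         # step 1b: eps_0 = r_x(0)
    gammas = []
    for j in range(p):
        gamma_j = r[j + 1] + sum(a[i] * r[j - i + 1] for i in range(1, j + 1))   # step 2a
        g = -gamma_j / eps[j]                                                    # step 2b
        # steps 2c, 2d: a_{j+1}(i) = a_j(i) + Gamma a_j(j-i+1), a_{j+1}(j+1) = Gamma
        a = [1.0] + [a[i] + g * a[j - i + 1] for i in range(1, j + 1)] + [g]
        gammas.append(g)
        eps.append(eps[j] * (1.0 - g * g))                                       # step 2e
    return a, gammas, eps


r = [1.0, 0.5, 0.5]
a, gammas, eps = levinson_durbin(r, 2)
b0 = eps[-1] ** 0.5                 # step 3: b(0) = sqrt(eps_p)
print(a, gammas, eps)               # a is about [1, -1/3, -1/3], eps_2 about 2/3
```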


35.3.2 Step-Up and Step-Down Recursions

35.3.2.1 Step-Up Recursion

The recursion finds the $a_p(i)$'s from the $\Gamma_j$'s.

Steps

1. Initialize the recursion: $a_0(0) = 1$

2. For $j = 0, 1, \ldots, p-1$

a) For $i = 1, 2, \ldots, j$: $a_{j+1}(i) = a_j(i) + \Gamma_{j+1}\,a_j^*(j-i+1)$

b) $a_{j+1}(j+1) = \Gamma_{j+1}$

3. $b(0) = \sqrt{\epsilon_p}$

35.3.2.2 Step-Down Recursion

The recursion finds the $\Gamma_j$'s from the $a_p(i)$'s.

Steps

1. Set $\Gamma_p = a_p(p)$

2. For $j = p-1, p-2, \ldots, 1$

a) For $i = 1, 2, \ldots, j$: $a_j(i) = \dfrac{1}{1 - |\Gamma_{j+1}|^2}\left[a_{j+1}(i) - \Gamma_{j+1}\,a_{j+1}^*(j-i+1)\right]$

b) Set $\Gamma_j = a_j(j)$

c) If $|\Gamma_j| = 1$, quit

3. $\epsilon_p = b^2(0)$

Example

To implement the third-order filter $H(z) = 1 + 0.5z^{-1} - 0.1z^{-2} - 0.5z^{-3}$ in the form of a lattice structure we proceed as follows. Here $\mathbf{a}_3 = [1\;\; 0.5\;\; -0.1\;\; -0.5]^T$ and $\Gamma_3 = a_3(3) = -0.5$. From step 2) we obtain the second-order polynomial

$$\begin{bmatrix} a_2(1) \\ a_2(2) \end{bmatrix} = \frac{1}{1 - \Gamma_3^2}\left(\begin{bmatrix} a_3(1) \\ a_3(2) \end{bmatrix} - \Gamma_3\begin{bmatrix} a_3(2) \\ a_3(1) \end{bmatrix}\right) = \frac{1}{0.75}\left(\begin{bmatrix} 0.5 \\ -0.1 \end{bmatrix} + 0.5\begin{bmatrix} -0.1 \\ 0.5 \end{bmatrix}\right) = \begin{bmatrix} 0.6 \\ 0.2 \end{bmatrix},$$

or $\mathbf{a}_2 = [1\;\; 0.6\;\; 0.2]^T$ and $\Gamma_2 = a_2(2) = 0.2$. Next we find $a_1(1) = [a_2(1) - \Gamma_2\,a_2(1)]/(1 - \Gamma_2^2) = 0.5$, and hence $\Gamma_1 = 0.5$ and $\boldsymbol{\Gamma} = [0.5\;\; 0.2\;\; -0.5]^T$. The lattice filter implementation is shown in Figure 35.4.

FIGURE 35.4 Lattice filter implementation of $H(z) = 1 + 0.5z^{-1} - 0.1z^{-2} - 0.5z^{-3}$: input $x(n)$, output $y(n)$, delays $z^{-1}$, reflection coefficients $\Gamma_1 = 0.5$, $\Gamma_2 = 0.2$, $\Gamma_3 = -0.5$.
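The step-down and step-up recursions of 35.3.2 are inverses of each other, as the Example in 35.3.2.2 illustrates. This sketch covers real coefficients only, so the conjugates drop out, and the function names are hypothetical. The usage line should reproduce $\boldsymbol{\Gamma} = [0.5\;\; 0.2\;\; -0.5]^T$ for $H(z) = 1 + 0.5z^{-1} - 0.1z^{-2} - 0.5z^{-3}$ and then map it back.

```python
def step_up(gammas):
    """Step-up recursion (35.3.2.1), real case: [Gamma_1..Gamma_p] -> [1, a_p(1), ..., a_p(p)]."""
    a = [1.0]
    for j, g in enumerate(gammas):                       # g = Gamma_{j+1}
        a = [1.0] + [a[i] + g * a[j - i + 1] for i in range(1, j + 1)] + [g]
    return a


def step_down(a):
    """Step-down recursion (35.3.2.2), real case: [1, a_p(1), ..., a_p(p)] -> [Gamma_1..Gamma_p]."""
    p = len(a) - 1
    a = list(a)
    gammas = [0.0] * p
    gammas[p - 1] = a[p]                                 # step 1: Gamma_p = a_p(p)
    for j in range(p - 1, 0, -1):                        # step 2: j = p-1, ..., 1
        g = gammas[j]                                    # Gamma_{j+1}
        if abs(g) == 1.0:                                # |Gamma| = 1 would divide by zero (cf. step 2c)
            raise ValueError("|Gamma| = 1: the step-down recursion cannot continue")
        a = [1.0] + [(a[i] - g * a[j - i + 1]) / (1.0 - g * g) for i in range(1, j + 1)]
        gammas[j - 1] = a[j]                             # step 2b: Gamma_j = a_j(j)
    return gammas


a3 = [1.0, 0.5, -0.1, -0.5]        # H(z) = 1 + 0.5 z^-1 - 0.1 z^-2 - 0.5 z^-3
print(step_down(a3))               # approximately [0.5, 0.2, -0.5]
print(step_up(step_down(a3)))      # recovers a3 up to rounding
```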


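35.3.3 Cholesky Decomposition

35.3.3.1 Cholesky Decomposition

$$\mathbf{R}_p = \mathbf{L}_p\mathbf{D}_p\mathbf{L}_p^H$$

$\mathbf{R}_p$ = Hermitian Toeplitz autocorrelation matrix (see 35.3.1.2), $\mathbf{L}_p = (\mathbf{A}_p^H)^{-1}$ = lower triangular,

$$\mathbf{A}_p = \begin{bmatrix} 1 & a_1^*(1) & a_2^*(2) & \cdots & a_p^*(p) \\ 0 & 1 & a_2^*(1) & \cdots & a_p^*(p-1) \\ 0 & 0 & 1 & \cdots & a_p^*(p-2) \\ \vdots & \vdots & \vdots & & \vdots \\ 0 & 0 & 0 & \cdots & 1 \end{bmatrix},$$

$\mathbf{A}_p^H\mathbf{R}_p\mathbf{A}_p = \mathbf{D}_p$, $\mathbf{D}_p = \mathrm{diag}\{\epsilon_0, \epsilon_1, \ldots, \epsilon_p\}$, and $\det\mathbf{R}_p = \det\mathbf{D}_p = \prod_{k=0}^{p}\epsilon_k$.

35.3.4 Inversion of Toeplitz Matrix

35.3.4.1 Inversion of Toeplitz Matrix

$$\mathbf{R}_p\mathbf{x} = \mathbf{b} \;\Rightarrow\; \mathbf{x} = \mathbf{R}_p^{-1}\mathbf{b} = \mathbf{A}_p\mathbf{D}_p^{-1}\mathbf{A}_p^H\mathbf{b},$$

$\mathbf{R}_p$ = nonsingular Hermitian matrix (see 35.3.1.2), $\mathbf{A}_p$ = see 35.3.3.1, $\mathbf{D}_p$ = see 35.3.3.1, $\mathbf{b}$ = arbitrary vector.

35.3.5 Levinson Recursion for Inverting Toeplitz Matrix

35.3.5.1 Levinson Recursion

$\mathbf{R}_p\mathbf{x} = \mathbf{b}$, $\mathbf{R}_p$ = see 35.3.1.2 (known), $\mathbf{b}$ = arbitrary vector, $\mathbf{x}$ = unknown vector

Recursion:

1. Initialize the recursion

a) $a_0(0) = 1$

b) $x_0(0) = b(0)/r_x(0)$

c) $\epsilon_0 = r_x(0)$

2. For $j = 0, 1, \ldots, p-1$

a) $\gamma_j = r_x(j+1) + \sum_{i=1}^{j} a_j(i)\,r_x(j-i+1)$

b) $\Gamma_{j+1} = -\gamma_j/\epsilon_j$

c) For $i = 1, 2, \ldots, j$: $a_{j+1}(i) = a_j(i) + \Gamma_{j+1}\,a_j^*(j-i+1)$

d) $a_{j+1}(j+1) = \Gamma_{j+1}$

e) $\epsilon_{j+1} = \epsilon_j\left[1 - |\Gamma_{j+1}|^2\right]$

f) $\delta_j = \sum_{i=0}^{j} x_j(i)\,r_x(j-i+1)$

g) $q_{j+1} = [b(j+1) - \delta_j]/\epsilon_{j+1}$

h) For $i = 0, 1, \ldots, j$: $x_{j+1}(i) = x_j(i) + q_{j+1}\,a_{j+1}^*(j-i+1)$

i) $x_{j+1}(j+1) = q_{j+1}$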
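The general Levinson recursion of 35.3.5.1 solves $\mathbf{R}_p\mathbf{x} = \mathbf{b}$ in $O(p^2)$ operations. The sketch below is written for a real symmetric Toeplitz $\mathbf{R}_p$, so $a_j^*(\cdot) = a_j(\cdot)$, and the function name is hypothetical. The usage line solves the $3\times 3$ system with first column $[8, 4, 2]^T$ and $\mathbf{b} = [18, 12, 24]^T$.

```python
def levinson_solve(r, b):
    """Solve R_p x = b for a real symmetric Toeplitz R_p with first column r[0..p] (35.3.5.1)."""
    p = len(r) - 1
    a = [1.0]                                    # 1a) a_0(0) = 1
    x = [b[0] / r[0]]                            # 1b) x_0(0) = b(0)/r_x(0)
    eps = float(r[0])                            # 1c) eps_0 = r_x(0)
    for j in range(p):
        gamma_j = r[j + 1] + sum(a[i] * r[j - i + 1] for i in range(1, j + 1))   # 2a
        g = -gamma_j / eps                                                       # 2b: Gamma_{j+1}
        a = [1.0] + [a[i] + g * a[j - i + 1] for i in range(1, j + 1)] + [g]     # 2c, 2d
        eps = eps * (1.0 - g * g)                                                # 2e: eps_{j+1}
        delta = sum(x[i] * r[j - i + 1] for i in range(j + 1))                   # 2f
        q = (b[j + 1] - delta) / eps                                             # 2g
        x = [x[i] + q * a[j - i + 1] for i in range(j + 1)] + [q]                # 2h, 2i
    return x


r = [8.0, 4.0, 2.0]           # R = [[8, 4, 2], [4, 8, 4], [2, 4, 8]]
b = [18.0, 12.0, 24.0]
print(levinson_solve(r, b))   # approximately [2.0, -1.0, 3.0]
```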


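Example

Solve $\mathbf{R}_2\mathbf{x} = \mathbf{b}$:

$$\begin{bmatrix} 8 & 4 & 2 \\ 4 & 8 & 4 \\ 2 & 4 & 8 \end{bmatrix}\begin{bmatrix} x(0) \\ x(1) \\ x(2) \end{bmatrix} = \begin{bmatrix} 18 \\ 12 \\ 24 \end{bmatrix}$$

1. Initialization: $r_x(0) = 8$, $a_0(0) = 1$, $x_0(0) = b(0)/r_x(0) = 18/8 = 9/4$, $\epsilon_0 = r_x(0) = 8$.

2. For $j = 0$:

- $\gamma_0 = r_x(1) = 4$ and $\Gamma_1 = -\gamma_0/\epsilon_0 = -4/8 = -1/2$, so $\mathbf{a}_1 = [1\;\; -1/2]^T$.
- $\epsilon_1 = \epsilon_0(1 - \Gamma_1^2) = 8(1 - 1/4) = 6$.
- $\delta_0 = x_0(0)\,r_x(1) = (9/4)(4) = 9$ and $q_1 = [b(1) - \delta_0]/\epsilon_1 = (12 - 9)/6 = 1/2$.

Hence

$$\mathbf{x}_1 = \begin{bmatrix} x_0(0) \\ 0 \end{bmatrix} + q_1\begin{bmatrix} a_1(1) \\ 1 \end{bmatrix} = \begin{bmatrix} 9/4 \\ 0 \end{bmatrix} + \frac{1}{2}\begin{bmatrix} -1/2 \\ 1 \end{bmatrix} = \begin{bmatrix} 2 \\ 1/2 \end{bmatrix}.$$

3. For $j = 1$:

- $\gamma_1 = r_x(2) + a_1(1)\,r_x(1) = 2 + (-1/2)(4) = 0$, so $\Gamma_2 = 0$, $\mathbf{a}_2 = [1\;\; -1/2\;\; 0]^T$ and $\epsilon_2 = \epsilon_1 = 6$.
- $\delta_1 = [x_1(0)\;\; x_1(1)]\,[r_x(2)\;\; r_x(1)]^T = [2\;\; 1/2]\,[2\;\; 4]^T = 6$ and $q_2 = [b(2) - \delta_1]/\epsilon_2 = (24 - 6)/6 = 3$.

Hence

$$\mathbf{x}_2 = \begin{bmatrix} x_1(0) \\ x_1(1) \\ 0 \end{bmatrix} + q_2\begin{bmatrix} a_2(2) \\ a_2(1) \\ 1 \end{bmatrix} = \begin{bmatrix} 2 \\ 1/2 \\ 0 \end{bmatrix} + 3\begin{bmatrix} 0 \\ -1/2 \\ 1 \end{bmatrix} = \begin{bmatrix} 2 \\ -1 \\ 3 \end{bmatrix}.$$

35.4 Lattice Filters

35.4.1 The FIR Lattice Filter

35.4.1.1 Forward Prediction Error

$$e_p^f(n) = x(n) + \sum_{k=1}^{p} a_p(k)\,x(n-k)$$

$e_p^f(n)$ = forward prediction error, $x(n)$ = data, $a_p(k)$ = all-pole filter coefficients.

35.4.1.2 Square of Error

$$\epsilon_p^f = \sum_{n=0}^{\infty}|e_p^f(n)|^2$$

35.4.1.3 Z-Transform of the $p$th-Order Error

$$E_p^f(z) = A_p(z)\,X(z), \qquad A_p(z) = 1 + \sum_{k=1}^{p} a_p(k)z^{-k}$$

$A_p(z)$ = forward prediction error filter (the inverse of the all-pole model $1/A_p(z)$), see (35.4.1.1).

Note: The output of the forward prediction error filter is $e_p^f(n)$ when the input is $x(n)$.

35.4.1.4 (j+1) Order Coefficient

$$a_{j+1}(i) = a_j(i) + \Gamma_{j+1}\,a_j^*(j-i+1),$$

see Section 35.3.1.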


35.4.1.5 (j+1) Order Coefficient in the Z-domain

$$A_{j+1}(z) = A_j(z) + \Gamma_{j+1}\,z^{-(j+1)}\left[A_j^*(1/z^*)\right]$$

35.4.1.6 (j+1) Order Error in the Z-domain

$$E_{j+1}^f(z) = E_j^f(z) + \Gamma_{j+1}\,z^{-1}E_j^b(z),$$

where $E_j^b(z) = z^{-j}X(z)\,A_j^*(1/z^*)$, $E_{j+1}^f(z) = A_{j+1}(z)\,X(z)$, and $E_j^f(z) = A_j(z)\,X(z)$.

35.4.1.7 (j+1) Order of Error (see 35.4.1.6)

$$e_{j+1}^f(n) = e_j^f(n) + \Gamma_{j+1}\,e_j^b(n-1)$$

(inverse Z-transform of (35.4.1.6)), $e_j^b(n)$ = $j$th order backward prediction error

35.4.1.8 Backward Prediction Error

$$e_j^b(n) = x(n-j) + \sum_{k=1}^{j} a_j^*(k)\,x(n-j+k)$$

35.4.1.9 (j+1) Backward Prediction Error

$$e_{j+1}^b(n) = e_j^b(n-1) + \Gamma_{j+1}^*\,e_j^f(n)$$

35.4.1.10 Single Stage of FIR Lattice Filter

See Figure 35.5

35.4.1.11 $p$th-Order FIR Lattice Filter

See Figure 35.6

Note: $e_0^f(n) = e_0^b(n) = x(n)$

FIGURE 35.5 One-stage FIR lattice filter.

FIGURE 35.6 $p$th-order FIR lattice filter.
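The sketch below implements the $p$th-order FIR lattice of 35.4.1.7 and 35.4.1.9 for real signals, so $\Gamma_{j+1}^* = \Gamma_{j+1}$. It starts from $e_0^f(n) = e_0^b(n) = x(n)$ and assumes zero initial conditions in the delays. It is driven with a unit sample and the reflection coefficients $\boldsymbol{\Gamma} = [0.5\;\; 0.2\;\; -0.5]^T$ from the 35.3.2 example. The forward output should then be the coefficients of $A_3(z)$, and the backward output should be the same coefficients reversed, in line with 35.4.1.12. The function name is hypothetical.

```python
def fir_lattice(x, gammas):
    """p-th order FIR lattice (35.4.1.7 and 35.4.1.9), real case, zero initial state.

    Returns the forward and backward prediction errors e_p^f(n), e_p^b(n) for n = 0..len(x)-1."""
    ef = list(x)                                        # e_0^f(n) = x(n)
    eb = list(x)                                        # e_0^b(n) = x(n)
    for g in gammas:                                    # g = Gamma_{j+1}
        eb_delayed = [0.0] + eb[:-1]                    # e_j^b(n-1)
        ef_new = [f + g * bd for f, bd in zip(ef, eb_delayed)]
        eb_new = [bd + g * f for f, bd in zip(ef, eb_delayed)]
        ef, eb = ef_new, eb_new
    return ef, eb


impulse = [1.0, 0.0, 0.0, 0.0]
ef, eb = fir_lattice(impulse, [0.5, 0.2, -0.5])
print(ef)   # approximately [1, 0.5, -0.1, -0.5], the coefficients of A_3(z)
print(eb)   # approximately [-0.5, -0.1, 0.5, 1], the same coefficients reversed
```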


35.4.1.12 All-Pass Filter

$$H_{ap}(z) = \frac{A_p^R(z)}{A_p(z)} = \frac{z^{-p}A_p^*(1/z^*)}{A_p(z)}, \qquad A_p(z) = 1 + \sum_{k=1}^{p} a_p(k)z^{-k}, \qquad E_p^b(z) = H_{ap}(z)\,E_p^f(z),$$

which indicates that $e_p^b(n)$ is the output of an all-pass filter with input $e_p^f(n)$.

35.4.2 IIR Lattice Filters

35.4.2.1 All-pole Filter

$$\frac{1}{A_p(z)} = \frac{E_0^f(z)}{E_p^f(z)} = \frac{1}{1 + \sum_{k=1}^{p} a_p(k)z^{-k}}$$

produces a response $e_0^f(n) = x(n)$ to the input $e_p^f(n)$ (see Section 35.4.1 for definition).

Forward and Backward Errors

$$e_j^f(n) = e_{j+1}^f(n) - \Gamma_{j+1}\,e_j^b(n-1) \;\;\text{(see 35.4.1.7)}, \qquad e_{j+1}^b(n) = e_j^b(n-1) + \Gamma_{j+1}^*\,e_j^f(n) \;\;\text{(see 35.4.1.9)}.$$

See Figure 35.7 for a pictorial representation of the single stage of an all-pole lattice filter. Cascading $p$ such sections, we obtain the $p$th-order all-pole lattice filter.

35.4.2.2 All-Pass Filter

$$H_{ap}(z) = \frac{z^{-p}A_p^*(1/z^*)}{A_p(z)}$$

35.4.3 Lattice All-Pole Modeling of Signals

35.4.3.1 Forward Covariance Method

35.4.3.1.1 Reflection Coefficients

$$\Gamma_j^f = -\frac{\sum_{n=j}^{N} e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*}{\sum_{n=j}^{N}|e_{j-1}^b(n-1)|^2} = -\frac{\langle\mathbf{e}_{j-1}^f, \mathbf{e}_{j-1}^b\rangle}{\|\mathbf{e}_{j-1}^b\|^2},$$

$\langle\cdot,\cdot\rangle$ = dot product, $\mathbf{e}_{j-1}^f = [e_{j-1}^f(j)\;\; e_{j-1}^f(j+1)\;\; \cdots\;\; e_{j-1}^f(N)]^T$, $\mathbf{e}_{j-1}^b = [e_{j-1}^b(j-1)\;\; e_{j-1}^b(j)\;\; \cdots\;\; e_{j-1}^b(N-1)]^T$

FIGURE 35.7 Single stage of an all-pole lattice filter. (Signals: $e_{j+1}^f(n)$, $e_j^f(n)$, $e_j^b(n)$, $e_{j+1}^b(n)$; one delay $z^{-1}$; coefficients $\Gamma_{j+1}$ and $\Gamma_{j+1}^*$.)


35.4.3.1.2 Forward Covariance Algorithm

1. Given: the first j − 1 reflection coefficients $\boldsymbol{\Gamma}^f = [\Gamma_1^f, \Gamma_2^f, \ldots, \Gamma_{j-1}^f]^T$.
2. Given: the forward and backward prediction errors $e_{j-1}^f(n)$ and $e_{j-1}^b(n)$.
3. The jth reflection coefficient $\Gamma_j^f$ is found from 35.4.3.1.1.
4. Using the lattice filter, the (j − 1)st-order forward and backward prediction errors are updated to form $e_j^f(n)$ and $e_j^b(n)$.
5. Repeat the process.

Example

Given $x(n) = \alpha^n$, $0 \le n \le N$, $0 < \alpha < 1$.

Initialization: $e_0^f(n) = e_0^b(n) = x(n) = \alpha^n$, $n = 0, 1, \ldots, N$. Next, evaluation of the norm:

$$\|\mathbf{e}_0^b\|^2 = \sum_{n=1}^{N}[e_0^b(n-1)]^2 = \sum_{n=0}^{N-1}\alpha^{2n} = \frac{1-\alpha^{2N}}{1-\alpha^2}.$$

Inner product between $e_0^f(n)$ and $e_0^b(n-1)$:

$$\langle \mathbf{e}_0^f, \mathbf{e}_0^b\rangle = \sum_{n=1}^{N} e_0^f(n)\,e_0^b(n-1) = \alpha\sum_{n=0}^{N-1}\alpha^{2n} = \frac{\alpha(1-\alpha^{2N})}{1-\alpha^2}.$$

From 35.4.3.1.1:

$$\Gamma_1^f = -\frac{\langle \mathbf{e}_0^f, \mathbf{e}_0^b\rangle}{\|\mathbf{e}_0^b\|^2} = -\alpha.$$

Updating the forward prediction error (set $j = j-1$ in 4.1.7) gives $e_j^f(n) = e_{j-1}^f(n) + \Gamma_j^f\, e_{j-1}^b(n-1)$. Hence,

$$e_1^f(n) = \alpha^n u(n) - \alpha\,\alpha^{n-1}u(n-1) = \alpha^n[u(n) - u(n-1)] = \delta(n),$$

where $u(n)$ is the unit step function. First-order modeling error (35.4.1.2):

$$\mathcal{E}_1^f = \sum_{n=1}^{N}[e_1^f(n)]^2 = 0,$$

since $e_1^f(1), e_1^f(2), \ldots, e_1^f(N)$ are zero. First-order backward prediction error (set $j = j-1$ in 4.1.9): $e_j^b(n) = e_{j-1}^b(n-1) + (\Gamma_j^f)^*\, e_{j-1}^f(n)$, or

$$e_1^b(n) = e_0^b(n-1) + \Gamma_1^f\, e_0^f(n) = \alpha^{n-1}u(n-1) - \alpha^{n+1}u(n).$$

Second reflection coefficient:

$$\Gamma_2^f = -\frac{\langle \mathbf{e}_1^f, \mathbf{e}_1^b\rangle}{\|\mathbf{e}_1^b\|^2} = 0,$$

since $e_1^f(n) = 0$ for $n > 0$. Similar steps give $e_2^f(n) = e_1^f(n) = \delta(n)$ and $\Gamma_3^f = 0$. Continuing, $\Gamma_j^f = 0$ for all $j > 1$, and hence $\boldsymbol{\Gamma}^f = [-\alpha, 0, \ldots, 0]^T$. To find the $a_i$'s, see Section 35.4.1.
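Steps 1 to 5 can be sketched as a single loop. This is an illustrative implementation, not the handbook's own code. It assumes data x(0), ..., x(N), takes errors outside that range as zero, and uses the hypothetical name `forward_covariance_lattice`. Applied to $x(n) = \alpha^n$, it should reproduce $\Gamma_1^f = -\alpha$, $\Gamma_j^f = 0$ for $j > 1$, and $e_1^f(n) = \delta(n)$ from the example.

```python
import numpy as np


def forward_covariance_lattice(x, p):
    """Forward covariance method: return [Gamma_1^f, ..., Gamma_p^f] and e_p^f(n).

    Data x(0..N); errors outside 0..N are taken as zero, so e_{j-1}^b(-1) = 0.
    """
    ef = np.asarray(x, dtype=complex)  # e_0^f(n) = x(n)
    eb = ef.copy()                     # e_0^b(n) = x(n)
    N = len(ef) - 1
    gammas = []
    for j in range(1, p + 1):
        f = ef[j:N + 1]                # e_{j-1}^f(n),   n = j..N
        b = eb[j - 1:N]                # e_{j-1}^b(n-1), n = j..N
        den = np.vdot(b, b).real
        g = -np.vdot(b, f) / den if den > 0 else 0.0   # step 3 (35.4.3.1.1)
        gammas.append(g)
        # Step 4: lattice update to the jth-order errors.
        eb_delayed = np.concatenate(([0.0], eb[:-1]))
        ef, eb = ef + g * eb_delayed, eb_delayed + np.conj(g) * ef
    return np.array(gammas), ef
```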


35.4.3.2 The Backward Covariance Method

35.4.3.2.1 Reflection Coefficients

$$\Gamma_j^b = -\frac{\sum_{n=j}^{N} e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*}{\sum_{n=j}^{N} |e_{j-1}^f(n)|^2} = -\frac{\langle \mathbf{e}_{j-1}^f, \mathbf{e}_{j-1}^b\rangle}{\|\mathbf{e}_{j-1}^f\|^2}$$

The steps are similar to those in Section 35.4.3.1. Given the first j − 1 reflection coefficients $\boldsymbol{\Gamma}^b = [\Gamma_1^b, \Gamma_2^b, \ldots, \Gamma_{j-1}^b]^T$ and the forward and backward prediction errors $e_{j-1}^f(n)$ and $e_{j-1}^b(n)$, the jth reflection coefficient is computed. Next, using the lattice filter, the (j − 1)st-order forward and backward errors are updated to form the jth-order errors, and the process is repeated.

35.4.3.3 Burg's Method

35.4.3.3.1 Reflection Coefficients

$$\Gamma_j^B = -\frac{2\sum_{n=j}^{N} e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*}{\sum_{n=j}^{N}\left[|e_{j-1}^f(n)|^2 + |e_{j-1}^b(n-1)|^2\right]} = -\frac{2\langle \mathbf{e}_{j-1}^f, \mathbf{e}_{j-1}^b\rangle}{\|\mathbf{e}_{j-1}^f\|^2 + \|\mathbf{e}_{j-1}^b\|^2},$$

where $\langle\,\cdot\,,\cdot\,\rangle$ denotes the dot product, and the $\mathbf{e}$'s are the forward and backward prediction error vectors.

35.4.3.3.2 Burg Error

The Burg error $\mathcal{E}_j^B = \sum_{n=j}^{N}\left[|e_j^f(n)|^2 + |e_j^b(n)|^2\right]$ satisfies

$$\mathcal{E}_j^B = \left[1 - |\Gamma_j^B|^2\right]\left[\mathcal{E}_{j-1}^B - |e_{j-1}^f(j-1)|^2 - |e_{j-1}^b(N)|^2\right],$$

$$\mathcal{E}_0^B = \sum_{n=0}^{N}\left[|e_0^f(n)|^2 + |e_0^b(n)|^2\right] = 2\sum_{n=0}^{N}|x(n)|^2, \qquad e_0^f(n) = e_0^b(n) = x(n).$$

Burg's method uses the same steps for computing the unknowns as Sections 35.4.3.1 and 35.4.3.2.

35.4.3.3.3 Burg's Algorithm

1. Initialize the recursion:
   a) $e_0^f(n) = e_0^b(n) = x(n)$
   b) $D_1 = \sum_{n=1}^{N}\left[|x(n)|^2 + |x(n-1)|^2\right]$
2. For j = 1 to p:
   a) $\Gamma_j^B = -\dfrac{2}{D_j}\sum_{n=j}^{N} e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*$
   For n = j to N:
   b) $e_j^f(n) = e_{j-1}^f(n) + \Gamma_j^B\, e_{j-1}^b(n-1)$, $\quad e_j^b(n) = e_{j-1}^b(n-1) + (\Gamma_j^B)^*\, e_{j-1}^f(n)$
   c) $D_{j+1} = D_j\left(1 - |\Gamma_j^B|^2\right) - |e_j^f(j)|^2 - |e_j^b(N)|^2$
   d) $\mathcal{E}_j^B = D_j\left[1 - |\Gamma_j^B|^2\right]$
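The algorithm maps onto a short loop. The sketch below is one possible transcription. It uses the hypothetical name `burg` and data indexed n = 0, ..., N. It updates the errors for every n, although only n = j, ..., N are used afterward. Step 2d is computed before $D_j$ is overwritten in step 2c, because both steps use $D_j$.

```python
import numpy as np


def burg(x, p):
    """Burg's algorithm (35.4.3.3.3): return [Gamma_1^B..Gamma_p^B] and [E_1^B..E_p^B]."""
    ef = np.asarray(x, dtype=complex)      # step 1a: e_0^f(n) = e_0^b(n) = x(n)
    eb = ef.copy()
    N = len(ef) - 1
    D = np.sum(np.abs(ef[1:]) ** 2 + np.abs(ef[:-1]) ** 2)   # step 1b: D_1
    gammas, eps = [], []
    for j in range(1, p + 1):
        f = ef[j:N + 1]                    # e_{j-1}^f(n),   n = j..N
        b = eb[j - 1:N]                    # e_{j-1}^b(n-1), n = j..N
        g = -2.0 * np.vdot(b, f) / D       # step 2a (vdot conjugates b)
        # Step 2b: lattice update (computed for all n; only n >= j is used later).
        eb_delayed = np.concatenate(([0.0], eb[:-1]))
        ef, eb = ef + g * eb_delayed, eb_delayed + np.conj(g) * ef
        eps.append(D * (1 - abs(g) ** 2))  # step 2d uses D_j
        D = D * (1 - abs(g) ** 2) - abs(ef[j]) ** 2 - abs(eb[N]) ** 2   # step 2c: D_{j+1}
        gammas.append(g)
    return np.array(gammas), np.array(eps)
```

By Cauchy-Schwarz, $|2\langle \mathbf{e}^f, \mathbf{e}^b\rangle| \le \|\mathbf{e}^f\|^2 + \|\mathbf{e}^b\|^2$. So each Burg coefficient satisfies $|\Gamma_j^B| \le 1$.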


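Example 1

Given $x(n) = \alpha^n u(n)$, $n = 0, 1, \ldots, N$, where $u(n)$ is the unit step function. From Example 1 of 35.4.3.1.2,

$$\langle \mathbf{e}_0^f, \mathbf{e}_0^b\rangle = \frac{\alpha(1-\alpha^{2N})}{1-\alpha^2} \quad\text{and}\quad \|\mathbf{e}_0^b\|^2 = \frac{1-\alpha^{2N}}{1-\alpha^2}.$$

Similarly, from Section 35.4.3.2,

$$\|\mathbf{e}_0^f\|^2 = \sum_{n=1}^{N}\alpha^{2n} = \frac{\alpha^2(1-\alpha^{2N})}{1-\alpha^2}.$$

Therefore,

$$\Gamma_1^B = -\frac{2\langle \mathbf{e}_0^f, \mathbf{e}_0^b\rangle}{\|\mathbf{e}_0^f\|^2 + \|\mathbf{e}_0^b\|^2} = -\frac{2\alpha}{1+\alpha^2}.$$

Update the errors:

$$e_1^f(n) = e_0^f(n) + \Gamma_1^B e_0^b(n-1) = \alpha^n u(n) + \Gamma_1^B \alpha^{n-1}u(n-1),$$

$$e_1^b(n) = e_0^b(n-1) + \Gamma_1^B e_0^f(n) = \alpha^{n-1}u(n-1) + \Gamma_1^B \alpha^{n}u(n).$$

For $n \ge 1$ these reduce to $e_1^f(n) = -\alpha^n(1-\alpha^2)/(1+\alpha^2)$ and $e_1^b(n) = \alpha^{n-1}(1-\alpha^2)/(1+\alpha^2)$. Hence,

$$\|\mathbf{e}_1^f\|^2 = \sum_{n=2}^{N}[e_1^f(n)]^2 = \frac{\alpha^4(1-\alpha^2)\left[1-\alpha^{2(N-1)}\right]}{(1+\alpha^2)^2},$$

$$\|\mathbf{e}_1^b\|^2 = \sum_{n=2}^{N}[e_1^b(n-1)]^2 = \frac{(1-\alpha^2)\left[1-\alpha^{2(N-1)}\right]}{(1+\alpha^2)^2},$$

$$\langle \mathbf{e}_1^f, \mathbf{e}_1^b\rangle = \sum_{n=2}^{N} e_1^f(n)\,e_1^b(n-1) = -\frac{\alpha^2(1-\alpha^2)\left[1-\alpha^{2(N-1)}\right]}{(1+\alpha^2)^2},$$

$$\Gamma_2^B = -\frac{2\langle \mathbf{e}_1^f, \mathbf{e}_1^b\rangle}{\|\mathbf{e}_1^f\|^2 + \|\mathbf{e}_1^b\|^2} = \frac{2\alpha^2}{1+\alpha^4}.$$

Zero-order error:

$$\mathcal{E}_0^B = 2\sum_{n=0}^{N}x^2(n) = \frac{2\left(1-\alpha^{2(N+1)}\right)}{1-\alpha^2},$$

first-order error:

$$\mathcal{E}_1^B = \left[1-(\Gamma_1^B)^2\right]\left\{\mathcal{E}_0^B - [e_0^f(0)]^2 - [e_0^b(N)]^2\right\} = \frac{(1-\alpha^2)(1-\alpha^{2N})}{1+\alpha^2}.$$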
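The closed-form values in this example can be checked numerically. The sketch below computes the Burg coefficients from the norm form of 35.4.3.3.1 rather than the $D_j$ recursion. It assumes real data. `burg_direct` is a hypothetical name, and the Burg errors are obtained by summing the updated errors directly.

```python
import numpy as np


def burg_direct(x, p):
    """Burg coefficients via -2<e^f,e^b>/(||e^f||^2 + ||e^b||^2) for real data x(0..N).

    Returns [Gamma_1^B..Gamma_p^B] and E_j^B = sum_{n=j}^{N} [e_j^f(n)^2 + e_j^b(n)^2].
    """
    ef = np.asarray(x, dtype=float)
    eb = ef.copy()
    N = len(ef) - 1
    gammas, eps = [], []
    for j in range(1, p + 1):
        f, b = ef[j:], eb[j - 1:N]         # e_{j-1}^f(n), e_{j-1}^b(n-1), n = j..N
        g = -2.0 * (f @ b) / (f @ f + b @ b)
        eb_delayed = np.concatenate(([0.0], eb[:-1]))
        ef, eb = ef + g * eb_delayed, eb_delayed + g * ef   # real data: conj(g) = g
        gammas.append(g)
        eps.append(np.sum(ef[j:] ** 2 + eb[j:] ** 2))
    return np.array(gammas), np.array(eps)
```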


35.4.3.4 Modified Covariance Method

35.4.3.4.1 Normal Equation

$$\sum_{k=1}^{p}\left[r_x(l,k) + r_x(p-l,p-k)\right]a_p(k) = -\left[r_x(l,0) + r_x(p-l,p)\right], \qquad l = 1, 2, \ldots, p,$$

$$r_x(k,l) = \sum_{n=p}^{N} x(n-l)\,x^*(n-k).$$

Modified covariance error:

$$\mathcal{E}_p^M = r_x(0,0) + r_x(p,p) + \sum_{k=1}^{p} a_p(k)\left[r_x(0,k) + r_x(p,p-k)\right]$$

Example 1

Given data $x(n) = \alpha^n u(n)$, $n = 0, 1, \ldots, N$. For a second-order filter ($p = 2$),

$$r_x(k,l) = \sum_{n=2}^{N}\alpha^{n-l}\alpha^{n-k} = c\,\alpha^{-(k+l)}, \qquad c = \frac{\alpha^4\left[1-\alpha^{2(N-1)}\right]}{1-\alpha^2}, \qquad \text{(a)}$$

and the normal equations are

$$\begin{bmatrix} r_x(1,1)+r_x(1,1) & r_x(1,2)+r_x(1,0) \\ r_x(2,1)+r_x(0,1) & r_x(2,2)+r_x(0,0) \end{bmatrix}\begin{bmatrix} a(1) \\ a(2) \end{bmatrix} = -\begin{bmatrix} r_x(1,0)+r_x(1,2) \\ r_x(2,0)+r_x(0,2) \end{bmatrix}.$$

Inserting the values of $r_x(k,l)$ from (a) and solving for $a(1)$ and $a(2)$, we obtain $a(1) = -(1+\alpha^2)/\alpha$ and $a(2) = 1$. Hence, the all-pole model has

$$A(z) = 1 - \frac{1+\alpha^2}{\alpha}z^{-1} + z^{-2}.$$

35.4.4 Stochastic Modeling

35.4.4.1 Forward Reflection Coefficients

$$\Gamma_j^f = -\frac{E\{e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*\}}{E\{|e_{j-1}^b(n-1)|^2\}},$$

where E stands for expectation.

35.4.4.2 Backward Reflection Coefficients

$$\Gamma_j^b = -\frac{E\{e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*\}}{E\{|e_{j-1}^f(n)|^2\}}$$

35.4.4.3 Burg Reflection Coefficient

$$\Gamma_j^B = -\frac{2E\{e_{j-1}^f(n)\,[e_{j-1}^b(n-1)]^*\}}{E\{|e_{j-1}^f(n)|^2\} + E\{|e_{j-1}^b(n-1)|^2\}}$$
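The normal equations of 35.4.3.4.1 can be assembled and solved directly for real-valued data. This is a minimal sketch. It uses the hypothetical name `modified_covariance` and assumes real x(n), so the conjugate in $r_x(k,l)$ drops out. For $x(n) = \alpha^n$ it should return $a(1) = -(1+\alpha^2)/\alpha$ and $a(2) = 1$, as in Example 1.

```python
import numpy as np


def modified_covariance(x, p):
    """Solve sum_k [r(l,k) + r(p-l,p-k)] a(k) = -[r(l,0) + r(p-l,p)], l = 1..p (real data)."""
    x = np.asarray(x, dtype=float)
    N = len(x) - 1
    n = np.arange(p, N + 1)            # summation range n = p..N

    def r(k, l):                       # r_x(k,l) = sum_{n=p}^{N} x(n-l) x(n-k)
        return np.dot(x[n - l], x[n - k])

    R = np.array([[r(l, k) + r(p - l, p - k) for k in range(1, p + 1)]
                  for l in range(1, p + 1)])
    rhs = -np.array([r(l, 0) + r(p - l, p) for l in range(1, p + 1)])
    return np.linalg.solve(R, rhs)     # [a_p(1), ..., a_p(p)]
```

For this data both prediction errors vanish identically. The reason is that $A(z) = (1 - \alpha z^{-1})(1 - \alpha^{-1}z^{-1})$ annihilates $\alpha^n$ in either time direction.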


References

Hayes, M. H., Statistical Digital Signal Processing and Modeling, John Wiley & Sons Inc., New York, NY, 1996.

Kay, S., Modern Spectrum Estimation: Theory and Applications, Prentice-Hall, Englewood Cliffs, NJ, 1988.

Marple, S. L., Digital Spectral Analysis with Applications, Prentice-Hall, Englewood Cliffs, NJ, 1987.
