International Journal of Emerging Trends & Technology in Computer Science (IJETTCS)
Web Site: www.ijettcs.org Email: editor@ijettcs.org, editorijettcs@gmail.com
Volume 2, Issue 3, May-June 2013, pp. 272-278, ISSN 2278-6856

Kannada Word Sense Disambiguation Using Decision List

Parameswarappa S and Narayana V.N

Department of Computer Science & Engineering,
Malnad College of Engineering, Hassan, Karnataka, 573 202, India

Abstract: Natural Language Processing (NLP) is concerned with the computational aspects of human language. The main difficulty in NLP tasks is perhaps ambiguity, which pervades virtually all aspects of language analysis. Sentence analysis in particular exhibits a large number of ambiguities that must be resolved before the sentence can be understood.

Most language processing applications, such as Information Retrieval (IR), Information Extraction (IE), Question-Answering systems, Text Summarization and Machine Translation (MT), are affected by the highly ambiguous nature of natural language.

Ambiguities in sentence analysis are generally categorized into two types: lexical and structural. The present work describes a methodology to resolve lexical ambiguity in a Kannada sentence. Lexical ambiguity arises when a lexical item has alternate meanings and different Part-Of-Speech (POS) tags.

The paper describes a decision list based algorithm to disambiguate Kannada polysemous words. We built a Kannada corpus from web resources and divided it into training and testing corpora. The decision list required for the disambiguation task is created from the training corpora. The example sentences to be disambiguated are stored in the testing corpora. The proposed algorithm attempts to disambiguate all content words (nouns, verbs, adverbs and adjectives) in an unrestricted Kannada text sentence. The algorithm is based on one powerful assumption: words tend to have one sense per collocation, i.e. nearby words provide strong and consistent clues to the sense of the ambiguous word.

Keywords: Decision list, Lexical ambiguity, Corpus, Machine Translation, Word Sense Disambiguation.

1. INTRODUCTION

One of the fundamental tasks in Natural Language Processing is Word Sense Disambiguation. It is the problem of determining in which sense a word having a number of distinct senses is used in a given sentence. Consider the following sentences.

[raamanu pandithara baLI hoogi yantra haakisikonDu bandanu] 'Rama received an astrological device from the pandit'

[raamana maneya niirettuva yantra haaLaagide] 'The water lifting machine at Rama's house has broken down'

In the above sentences the word [yantra] is ambiguous. It has two distinct meanings: an astrological device (Sense A) and a type of machinery (Sense B). To a human, it is obvious that the first sentence uses [yantra] in the astrological device sense and the second uses it in the type of machinery sense. The process of identifying the correct sense of the word [yantra] in a given context is called Word Sense Disambiguation (WSD). A human being can easily disambiguate the word [yantra] using external world knowledge, but developing algorithms to replicate this human ability is a difficult task.

There are a number of ways to approach this problem. One simple way is to determine which sense occurs most commonly (Most Frequent Sense) and always choose that sense. This technique acts as a baseline for the remaining WSD algorithms.

Initial research on WSD focused on disambiguating a few selected target words in a given sentence. Until recently, research in WSD did not focus on disambiguating all the content words in a text in one go. Disambiguating all the content words is called unrestricted WSD.

In the present work, we propose a machine learning algorithm based on a decision list for unrestricted Kannada text WSD. The algorithm considers multiple types of evidence in the context of the ambiguous word, exploiting the differences in collocation distribution as measured by log-likelihood. It exploits the one sense per collocation property of human language, i.e. nearby words provide strong and consistent clues to the sense of a target word.

Word sense disambiguation is one of the most important problems to be solved in order to achieve high quality output in machine translation. This is the motivation behind the present work. WSD is necessary not only in machine translation but also in almost every application of language technology, including information retrieval and extraction, knowledge mining and acquisition, lexicography and semantic interpretation.

The rest of the paper is organized as follows. Section 2 reviews previous work on word sense disambiguation and presents its current state. Section 3 introduces the linguistic preliminaries of the Kannada language and the basic infrastructure required for the present work. Section 4 discusses the basics of the decision list and its construction using the Kannada corpus. Section 5 describes the methodology and the proposed algorithm used for the disambiguation task. Section 6 provides the detailed information required to implement the proposed algorithm. Section 7 discusses the testing and evaluation of the algorithm and the observations made during the process. Section 8 concludes the paper and provides pointers for future research in this direction.

2. RELATED WORK

Word Sense Disambiguation has been a key task in the field of Natural Language Processing since the late 1940s. Based on how the disambiguation information is acquired, WSD systems are classified as knowledge-based, hybrid and corpus-based systems [1].

Knowledge-based approaches encompass systems that rely on information from an explicit lexicon such as Machine Readable Dictionaries, thesauri, computational lexicons such as WordNet [2] or hand-crafted knowledge bases. Examples of knowledge-based approaches are Lesk's algorithm [3] and Walker's algorithm [4].

Hybrid approaches, such as WSD using Structural Semantic Interconnections [5], combine more than one knowledge source, for example WordNet together with a small amount of tagged corpora.

Unsupervised algorithms work directly from un-annotated raw corpora. They have the potential to overcome the knowledge acquisition bottleneck and have achieved good results; [6] and [7] are examples of unsupervised approaches.

Supervised and semi-supervised methods make use of annotated corpora, either to train from or as seed data in a bootstrapping process. Examples of supervised learning algorithms are WSD using SVM [8] and exemplar-based WSD [9]. The decision list algorithm is an example that can be used in both supervised and semi-supervised settings, and it has been reported to perform well against comparable algorithms [11], [12].

The formal model of decision lists was presented in [10]. The statistical decision procedure for lexical ambiguity resolution was presented in [11]. The algorithm exploits both local syntactic patterns and more distant collocational evidence, generating an efficient, effective and highly perspicuous recipe for resolving a given ambiguity. Yarowsky proposed an algorithm for sense disambiguation based on two powerful constraints, namely one sense per collocation and one sense per discourse [12].

Initial WSD research focused on disambiguating a few selected target words in a given sentence, but the ultimate aim of WSD is to disambiguate all the content words in a sentence. Rada Mihalcea [13] proposed a method based on semantic density for unrestricted WSD.

3. KANNADA LANGUAGE

This section introduces the linguistic preliminaries of the Kannada language and the basic infrastructure required for the present work.

3.1 Linguistic preliminaries of Kannada

The world's languages are classified into two categories: fixed word order and free word order. In the former, the words constituting a sentence are positioned according to grammatical rules in some standard ways. In the latter, no fixed ordering is imposed on the sequence of words in a sentence. English is an example of a fixed word order language and Sanskrit of a pure free word order language. Kannada is generally a free word order language, but it partially lost free word ordering in the course of its evolution: word groups are free in order, but the internal structure of each word group is fixed [14].

Kannada is an agglutinating language of the suffixing type. Nouns are marked for number and case, and verbs are marked, in most cases, for agreement with the subject in number, gender and person. This makes Kannada a relatively free word order language. Kannada exhibits a very rich system of morphology, which includes inflection, derivation, conflation (sandhi) and compounding [15].

Indian languages come from four different language families: the Indo-Aryan, the Tibeto-Burman, the Austro-Asiatic and the Dravidian. Kannada belongs to the Dravidian family. Kannada is one of the technologically least developed languages in India today. This is ironic, since Kannada has a very old and rich literary tradition, is currently spoken by a very large number of people, and Karnataka is at the centre stage of the IT (Information Technology) revolution in the country. As of today, the only corpus available is the roughly 3 million word corpus developed long ago by CIIL (Central Institute of Indian Languages), Mysore. Lack of basic resources such as corpora is one of the major reasons for lagging behind in language technology. Several languages in India today have 30 to 50 million word corpora. There are hardly any electronic dictionaries, morphological analyzers, POS taggers, computational grammars or parsing systems for Kannada worth taking seriously. Naturally, Kannada lags behind in many areas of linguistics as well as in language technologies [16].

3.2 Kannada Corpus

A corpus is a large and representative collection of language material stored in a computer-processable form [17]. It provides realistic, interesting and insightful examples of language use for theory building and for verifying hypotheses [18]. A corpus provides the basic language data from which lexical resources such as dictionaries, thesauri and WordNet can be generated [19]. Language technologies and applications benefit greatly from language corpora.

For testing the proposed algorithm, we used a randomly selected set of sentences from the Kannada web corpus developed using a corpus building tool [20]. The corpus covers a wide variety of sources, such as Kannada newspapers, Wikipedia articles, blogs, books and novels. The selected sentences are grouped into two categories, namely training and testing corpora. Both are parsed with the Kannada Shallow Parser. Given a sentence, the parser assigns it a syntactic structure. This information is used for creating the decision list.

3.3 Kannada Dictionary

Knowledge of language is essential for meaningful communication through language. The words of a language, and the phonological, morphological, syntactic and semantic information associated with them, form a very important part of that knowledge. Knowing the words is an extremely important part of knowing a language. Dictionaries are storehouses of such information and therefore have a key role to play in Natural Language Processing (NLP).

As in [21], a dictionary may be regarded as a lexicographical product that is characterized by three significant features: (1) it has been prepared for one or more functions; (2) it contains data that have been selected for the purpose of fulfilling those functions; and (3) its lexicographic structures link and establish relationships between the data so that they can meet the needs of users and fulfill the functions of the dictionary. Keeping these features in mind, we created a Kannada electronic dictionary of around 50000 entries for our work, built from corpora in a semi-automated way.

3.4 Kannada Shallow Parser

The lexical and syntactic structure of the sentences in the training and testing corpora is extracted by the Kannada Shallow Parser, developed by IIIT Hyderabad [22]. For each parsed sentence, the shallow parser produces eight intermediate-stage outputs: tokenization, morphological analysis, POS tagging, chunking, pruning, pick one morph, head computation and vibhakti computation. These outputs help us to extract the lexical and syntactic structure of the sentence for further processing.

4. DECISION LIST

This section discusses the basics of the decision list as well as its construction using the Kannada corpus.

4.1 One Sense per Collocation

The observation that nearby words provide strong and consistent clues to the sense of the target word is referred to as the One Sense per Collocation property. The effect varies with the type of collocation. It is strongest for immediately adjacent collocations and weakens with distance. It is much stronger for words in an argument-predicate relationship than for arbitrary associations at an equivalent distance, and very much stronger for collocations with content words than for those with function words. In general, the high reliability of this behavior makes it an extremely useful property for sense disambiguation.

4.2 Context Features

A context feature is a way of keeping track of the words that occur around an ambiguous word. The context features can also be viewed as unigrams, bigrams, trigrams, or all words in the context taken as unsorted n-grams. When part-of-speech tags or case tags were provided, the surrounding part-of-speech or case tag n-grams were also used with each respective feature set. Table 1 shows the initial set of context features considered.

Table 1: Initial set of context features

Context feature
Word found in ±k word window
Word immediately to the right (+1 W)
Word immediately to the left (-1 W)
Pair of words at offsets -2 and -1
Pair of words at offsets -1 and +1
Pair of words at offsets +1 and +2
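The features in Table 1 can be illustrated with a short sketch. It is written in Python purely for illustration (the paper's own implementation is in Perl and is not shown); the function name `context_features`, the feature labels and the `<PAD>` symbol are assumptions introduced here, and the input is assumed to be an already tokenized, transliterated sentence.

```python
from typing import List, Tuple

Feature = Tuple[str, ...]


def context_features(tokens: List[str], i: int, k: int = 3) -> List[Feature]:
    """Return Table 1 style features for the ambiguous word at tokens[i]."""
    def at(offset: int) -> str:
        j = i + offset
        # Hypothetical padding symbol for positions outside the sentence.
        return tokens[j] if 0 <= j < len(tokens) else "<PAD>"

    features: List[Feature] = []
    # Any word found in the +/- k word window (its exact position is ignored).
    lo, hi = max(0, i - k), min(len(tokens), i + k + 1)
    for j in range(lo, hi):
        if j != i:
            features.append(("window", tokens[j]))
    # Words immediately to the right and to the left.
    features.append(("+1", at(1)))
    features.append(("-1", at(-1)))
    # Word pairs at offsets (-2, -1), (-1, +1) and (+1, +2).
    features.append(("-2,-1", at(-2), at(-1)))
    features.append(("-1,+1", at(-1), at(1)))
    features.append(("+1,+2", at(1), at(2)))
    return features


tokens = "raamana maneya niirettuva yantra haaLaagide".split()
feats = context_features(tokens, tokens.index("yantra"), k=2)
for f in feats:
    print(f)
```

Each feature is a labelled tuple so that the same word in different positions counts as different evidence, which is one plausible way to keep the feature types of Table 1 apart.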

4.3 Decision List Construction

A decision list is a classifier that can best be described as an extended if-then-else statement. For each matched condition, there is a single classification. Table 3 shows an example decision list for the ambiguous Kannada word [yantra], constructed from the part of the training corpora shown in Table 2. The decision list classifier uses the log-likelihood of correspondence between each context feature and each sense, computed with additive smoothing. The decision list is created by ordering the correspondences from strongest to weakest. If the context of a test sentence does not match any feature in the decision list, the classifier simply chooses the most frequent sense, as calculated from the training data, with the probability of that sense as its confidence. The log-likelihood of correspondence is used as a confidence for classifier combination.
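The role of additive smoothing in the log-likelihood can be seen in a minimal sketch. The function name `smoothed_log_likelihood`, the smoothing constant `alpha = 0.1` and the counts are assumptions made for illustration; the paper does not state the value it used.

```python
import math


def smoothed_log_likelihood(count_a: int, count_b: int, alpha: float = 0.1) -> float:
    """log(Pr(A | feature) / Pr(B | feature)) with additive smoothing.

    alpha is added to both counts so that a feature seen with only one
    sense gives a large but finite score instead of a division by zero.
    """
    total = count_a + count_b + 2 * alpha
    p_a = (count_a + alpha) / total
    p_b = (count_b + alpha) / total
    return math.log(p_a / p_b)


# Hypothetical counts: a feature seen 3 times with Sense A, never with Sense B.
strong_a = smoothed_log_likelihood(3, 0)
# A feature seen equally often with both senses carries no evidence.
neutral = smoothed_log_likelihood(2, 2)
# Swapping the counts flips the sign: negative scores favour Sense B.
strong_b = smoothed_log_likelihood(0, 3)
print(round(strong_a, 3), neutral, round(strong_b, 3))
```

Without smoothing, the first and third cases would divide by zero or take the logarithm of zero, which is why some form of smoothing is needed before the correspondences can be ranked.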


Table 2: Training corpus

Sense   Training sentence (Key Word In Context)
A       [dehaliya yantra mantra] 'Yantra Mantra of Delhi'
B       [tyalavillade calisuva yantra] 'Machinery that moves without oil'
B       [kruShi kelasa sugamagoLisida yantra] 'Machinery that streamlined agricultural work'
A       [raamanu pandithara baLI hoogi yantra haakisikonDu bandanu] 'Rama received an astrological device from the pandit'
B       [raamana maneya niirettuva yantra haaLaagide] 'The water lifting machine at Rama's house has broken down'

Table 3: Decision list

Deduced sense   Collocation                      Sentence number
A               [mantra] 'mantra'                1
B               [calisuva] 'moving'              2
B               [kruShi] 'agricultural'          3
A               [panDita] 'astrologer'           4
B               [niirettuva] 'water lifting'     5
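To show how a decision list such as Table 3 might be applied, the sketch below walks the rules in order and falls back to the most frequent sense when nothing matches. The names `DECISION_LIST`, `MOST_FREQUENT_SENSE` and `classify` are assumptions; the paper lists no scores, so the rule order simply follows the table.

```python
from typing import List, Optional, Tuple

# Rules from Table 3 as (collocation, deduced sense), in table order.
# The paper does not list scores, so this order is illustrative only.
DECISION_LIST: List[Tuple[str, str]] = [
    ("mantra", "A"),
    ("calisuva", "B"),
    ("kruShi", "B"),
    ("panDita", "A"),
    ("niirettuva", "B"),
]
# Sense B labels 3 of the 5 training sentences in Table 2.
MOST_FREQUENT_SENSE = "B"


def classify(tokens: List[str]) -> Tuple[str, Optional[str]]:
    """Return (sense, matched collocation); None means the fallback fired."""
    context = set(tokens)
    for collocation, sense in DECISION_LIST:
        if collocation in context:
            return sense, collocation  # first matching rule wins
    return MOST_FREQUENT_SENSE, None


print(classify("ondu niirettuva yantra tanni".split()))
print(classify("panDitaru yantra koTTaru".split()))
```

The second call falls through to the fallback because the inflected form `panDitaru` does not literally equal the rule `panDita`. Matching on root forms, such as those a morphological analyzer provides, would presumably be needed for the rule to fire.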

5. EXPERIMENTATION

This section describes the methodology used for unrestricted WSD and proposes an algorithm to disambiguate all the content words in a given sentence.

5.1 Methodology

Our proposed algorithm uses a decision list constructed from the training corpora to disambiguate all the content words in the testing corpora. All ambiguous content words in a sentence are disambiguated using the one sense per collocation property of human language.

5.2 Algorithm: Decision List based Kannada WSD

1. Collect a large set of collocations for the ambiguous word.

2. Calculate the word sense probability distribution for each such collocation.

3. Calculate the log-likelihood ratio using the formula
$\log \left( \Pr(\text{Sense A} \mid \text{Collocation}_i) / \Pr(\text{Sense B} \mid \text{Collocation}_i) \right)$

4. A larger magnitude of the log-likelihood ratio means more predictive evidence; its sign indicates whether Sense A or Sense B is favoured.

5. Order the collocations in a decision list with the most predictive collocations ranked highest.
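The five steps can be sketched end to end on the toy corpus of Table 2. This is a Python illustration under stated assumptions, not the authors' Perl implementation: `TRAINING`, `build_decision_list` and `alpha = 0.1` are introduced here, every context word is treated as a collocation, and no stemming is applied.

```python
import math
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

# Toy training data from Table 2: (sense, context words around [yantra]).
# Words are copied as transliterated in the paper, without stemming.
TRAINING: List[Tuple[str, List[str]]] = [
    ("A", ["dehaliya", "mantra"]),
    ("B", ["tyalavillade", "calisuva"]),
    ("B", ["kruShi", "kelasa", "sugamagoLisida"]),
    ("A", ["raamanu", "pandithara", "baLI", "hoogi", "haakisikonDu", "bandanu"]),
    ("B", ["raamana", "maneya", "niirettuva", "haaLaagide"]),
]


def build_decision_list(data, alpha: float = 0.1) -> List[Tuple[float, str, str]]:
    """Return (score, collocation, sense) rules, strongest evidence first."""
    # Steps 1-2: collect collocations and their per-sense counts.
    counts: Dict[str, Counter] = defaultdict(Counter)
    for sense, context in data:
        for word in set(context):
            counts[word][sense] += 1
    rules = []
    for word, by_sense in counts.items():
        # Step 3: smoothed log-likelihood ratio of Sense A against Sense B.
        llr = math.log((by_sense["A"] + alpha) / (by_sense["B"] + alpha))
        if llr == 0:
            continue  # equal counts: the collocation carries no evidence
        # Step 4: the magnitude is the strength, the sign picks the sense.
        rules.append((abs(llr), word, "A" if llr > 0 else "B"))
    # Step 5: most predictive collocations first (ties broken alphabetically).
    rules.sort(key=lambda r: (-r[0], r[1]))
    return rules


rules = build_decision_list(TRAINING)
for score, word, sense in rules[:5]:
    print(f"{score:.3f}  {word:15s} -> Sense {sense}")
```

With only five sentences, every collocation occurs once and all rules tie on score; on a larger corpus the counts differ and the ordering becomes informative. Table 3 keeps one collocation per sentence, whereas this sketch keeps every context word.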

6. IMPLEMENTATION

The algorithm is implemented in Perl. The system architecture, the required files and the modules used to implement the algorithm are discussed here.

Figure 1 shows the architecture of the proposed system. It consists of different modules based on their functionality.

Figure 1: Proposed system architecture (components: Kannada corpora, sentence extractor, Kannada shallow parser, AmbiWord extractor, disambiguator, dictionary, disambiguated sentence)

6.1 Implementation Modules

The program uses the following modules for the disambiguation task.

a) Sentence extractor: This module extracts sentences from the corpora for the disambiguation task and writes them into the training or testing corpora files, depending on the sentence category.

b) Kannada Shallow Parser: It splits the input sentence on spaces, extracts only the valid Kannada words, and performs morphological analysis on them.

c) AmbiWord Extractor: It extracts all the ambiguous words present in a sentence.

d) Disambiguator: This module identifies and extracts the correct sense of an ambiguous word.
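As a rough illustration of module (c), an ambiguous-word extractor can be as simple as a dictionary lookup that keeps words with more than one sense. The miniature `DICTIONARY`, the root forms and the function name `extract_ambiguous_words` are hypothetical; the paper does not describe the dictionary format.

```python
from typing import Dict, List

# Hypothetical miniature dictionary: root word -> list of sense glosses.
# The entries reuse words and senses discussed in the paper.
DICTIONARY: Dict[str, List[str]] = {
    "yantra": ["astrological device", "type of machinery"],
    "nere": ["flood", "gather", "mature biologically"],
    "raama": ["proper noun"],
}


def extract_ambiguous_words(roots: List[str], dictionary: Dict[str, List[str]]) -> List[str]:
    """Return the words of a sentence that have more than one dictionary sense."""
    return [w for w in roots if len(dictionary.get(w, [])) > 1]


# Root forms assumed to come from a morphological analyzer.
sentence_roots = ["raama", "mane", "niirettuva", "yantra", "haaLaagu"]
ambiguous = extract_ambiguous_words(sentence_roots, DICTIONARY)
print(ambiguous)
```

Words missing from the dictionary are treated as unambiguous here, which is a simplifying choice rather than something the paper specifies.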

6.2 Files

The following files are used during program execution.

a) Training corpora: This file contains a randomly selected set of sentences from the Kannada web corpora. The decision list required for content word sense disambiguation is constructed using this file.

b) Testing corpora: This file contains a randomly selected set of sentences from the Kannada web corpora. These are different from the sentences in the training corpora. This file acts as the input file: its sentences are given as input to the algorithm, which disambiguates all the content words in each sentence.
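A random, non-overlapping split of the kind described above could be produced as follows. The function name `split_corpus`, the 20% test fraction and the fixed seed are assumptions; the paper does not report the split sizes.

```python
import random
from typing import List, Tuple


def split_corpus(sentences: List[str], test_fraction: float = 0.2,
                 seed: int = 0) -> Tuple[List[str], List[str]]:
    """Shuffle unique sentences and split them into disjoint train and test lists."""
    unique = sorted(set(sentences))   # drop duplicates and fix a stable order
    rng = random.Random(seed)         # local generator for a reproducible split
    rng.shuffle(unique)
    n_test = max(1, int(len(unique) * test_fraction))
    return unique[n_test:], unique[:n_test]


corpus = [f"sentence {i}" for i in range(10)]  # placeholder sentences
train_set, test_set = split_corpus(corpus)
print(len(train_set), len(test_set))
```

Removing duplicates before splitting keeps the same sentence from appearing in both files, which matches the requirement that the test sentences differ from the training sentences.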

7. EVALUATION

We used the sentences from the testing corpora as a test bed for testing the program.

7.1 Test Document

Table 4 shows a partial list of the sentences used to test the program.

Table 4: Sentences extracted from test corpora.

Table 5 shows the transliteration and English translation of the sentences shown in Table 4.

Table 5: Transliteration and translation.

No.   Test sentence
1     [paakistaana nere santhrastharige neravu] 'Help for Pakistan flood victims'
2     [siite neredaru] 'Seethe matured biologically'
3     [vyavastheya eduru iijuva heNNumakkaLinda naanu aatmavishvaasada paaTha kalitiddeene] 'I learnt a lesson of confidence from females who swim against the system'
4     [raamanige anna iDu] 'Serve rice to Rama'
5     [raajabaag savaarana urusu khyaatavaagide] 'Raajabaag Savaara's fair is popular'
6     [baTTegaLu oNagive] 'The clothes have dried'
7     [ondu niirettuva yantra tanni] 'Bring a water lifting machine'
8     [panDitaru yantra koTTaru] 'The astrologer gave an astrological device'

7.2 Result

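The results obtained for the test sentences are shown in Table 6.

Table 6: The program execution result.

Example sentence                                                                            Comment
[paakistaana nere santhrastharige neravu]                                                   Correct
[siite neredaru]                                                                            Wrong
[vyavastheya eduru iijuva heNNumakkaLinda naanu aatmavishvaasada paaTha kalitiddeene]      Wrong
[raamanige anna iDu]                                                                        Correct
[raajabaag savaarana urusu khyaatavaagide]                                                  Correct
[baTTegaLu oNagive]                                                                         Correct
[ondu niirettuva yantra tanni]                                                              Correct
[panDitaru yantra koTTaru]                                                                  Correct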
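The outcome on this partial list can be tallied with a few lines of Python. The figure applies only to the eight sentences shown in Table 6 and should not be read as the overall accuracy of the system, which the paper does not report.

```python
# Outcomes copied from the Comment column of Table 6, in row order.
outcomes = ["Correct", "Wrong", "Wrong", "Correct",
            "Correct", "Correct", "Correct", "Correct"]

correct = sum(1 for o in outcomes if o == "Correct")
accuracy = correct / len(outcomes)
print(f"{correct} of {len(outcomes)} sentences correct ({accuracy:.0%})")
```

For these rows the tally is 6 correct out of 8, that is 75%.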

7.3 Discussion

During the disambiguation process, the following observations were made.

a) The accuracy of the algorithm depends entirely on the decision list constructed from the training examples in the training corpora.

b) The classifier is word specific.

c) A new classifier needs to be trained for every word to be disambiguated.

d) The decision list algorithm does not need a large tagged corpus. It is simple to implement.

e) It is a simple semi-supervised algorithm which builds on a supervised algorithm.

f) Understanding the decision list is easy.

g) The system assigns the incorrect sense 'gather' to the sentence [siite neredaru] instead of the 'biologically matured' sense. This is because of insufficient context information. Problems of this kind can be addressed by discourse-level analysis, but that is beyond the scope of the present work.

h) It is possible to capture the clues provided by proper nouns in the corpus to disambiguate a word. In the sentence [raajaabaag savaarana urusu khyaatavaagide], [raajaabaag savaarana] is a proper noun. With the help of this clue it is possible to disambiguate the word [savaara] easily.

i) In the sentence [vyavastheya eduru iijuva heNNumakkaLinda naanu aatmavishvaasada paaTha kalitiddeene], [heNNumakkaLinda] and [aatmavishvaasada] are compound words, each formed by combining two words. According to linguistic principles, the meaning of a compound word must be deduced from its constituent words. Because these compounds are not available in the lexical database, our system interprets their constituent parts as separate words, which leads to a wrong result during disambiguation.

8. CONCLUSION AND FUTURE WORK

In this paper, we proposed a Kannada Word Sense Disambiguation system. It is a valuable resource for the resource-poor Kannada language. We constructed a reasonably sized Kannada web corpus using a corpus builder tool and divided it into training and testing corpora. The decision list is constructed from the training corpora and then used to disambiguate the sentences in the testing corpora. Experiments were conducted and the results obtained are described. The performance of the system with respect to applicability and precision is encouraging.

In future, we plan to build a robust Kannada Word Sense Disambiguation system by addressing the compound word and discourse-level issues.

References

[1] Eneko Agirre, Philip Edmonds, Word Sense Disambiguation: Algorithms and Applications, Text, Speech and Language Technology, XXXIII, Springer, 2007.

[2] Christiane Fellbaum, WordNet: An Electronic Lexical Database, MIT Press, 1998.

[3] M. Lesk, "Automatic sense disambiguation using machine readable dictionaries: how to tell a pine cone from an ice cream cone," In Proceedings of SIGDOC, 1986.

[4] D. Walker, R. Amsler, "The Use of Machine Readable Dictionaries in Sublanguage Analysis," In Analyzing Language in Restricted Domains, Grishman and Kittredge (eds), pp. 69-83, LEA Press, 1986.

[5] Roberto Navigli, Paola Velardi, "Structural Semantic Interconnections: A Knowledge-Based Approach to Word Sense Disambiguation," IEEE Transactions on Pattern Analysis and Machine Intelligence, 2005.

[6] Jean Véronis, "HyperLex: Lexical cartography for information retrieval," Computer Speech & Language, XVIII (3), pp. 223-252, 2004.

[7] Hinrich Schütze, "Automatic word sense discrimination," Computational Linguistics, XXIV (1), pp. 97-123, 1998.

[8] Y.K. Lee, H.T. Ng, T.K. Chia, "Supervised word sense disambiguation with support vector machines and multiple knowledge sources," In Proceedings of Senseval-3: Third International Workshop on the Evaluation of Systems for the Semantic Analysis of Text, pp. 137-140, Barcelona, Spain, 2004.

[9] H.T. Ng, H.B. Lee, "Integrating multiple knowledge sources to disambiguate word senses: An exemplar-based approach," In Proceedings of the 34th Annual Meeting of the Association for Computational Linguistics (ACL), pp. 40-47, Santa Cruz, U.S.A., 1996.

[10] R.L. Rivest, "Learning decision lists," Machine Learning, II, pp. 229-246, 1987.

[11] David Yarowsky, "Decision lists for lexical ambiguity resolution: Application to accent restoration in Spanish and French," In Proceedings of the 32nd Annual Meeting of the Association for Computational Linguistics (ACL), pp. 88-95, Las Cruces, U.S.A., 1994.

[12] David Yarowsky, "Unsupervised word sense disambiguation rivaling supervised methods," In Proceedings of the 33rd Annual Meeting of the Association for Computational Linguistics (ACL), pp. 189-196, Cambridge, MA, 1995.

[13] R. Mihalcea, D. Moldovan, "A method for word sense disambiguation of unrestricted text," In Proceedings of the 37th Annual Meeting of the ACL, pp. 152-158, Maryland, 1999.

[14] A. Bharati, V. Chaitanya, R. Sangal, Natural Language Processing: A Paninian Perspective, PHI, 1995.

[15] S.N. Sridhar, Modern Kannada Grammar, Manohar Publications & Distributors, 2007.

[16] K. Narayana Murthy, "Computer processing of Kannada Language," In Proceedings of the KUWH, Hampi, 2001.

[17] J. Sinclair, Corpus, Concordance, Collocation, Oxford University Press, Oxford, 1991.

[18] M. Barlow, "Corpora for theory and Practice," Journal of Corpus Linguistics, I (1), pp. 1-38, 1996.

[19] Niladri Sekhar Dash, Bidyut Baran Chaudhuri, "Relevance of Corpus in Language research and applications," Journal of Dravidian Linguistics, XXXII (2), pp. 101-122, 2002.

[20] S. Parameswarappa, V.N. Narayana, G.N. Bharathi, "A Novel Approach to Build Kannada Web Corpus," In Proceedings of the IEEE co-sponsored International Conference on Computer, Communication and Informatics (ICCCI-2012), pp. 259-264, IEEE Digital Library, SIET, Coimbatore, Jan 2012.

[21] Sandro Nielsen, "The effect of Lexicographical Information cost on Dictionary making and use," In Proceedings of Lexikos 18, pp. 170-189, 2008.

[22] "Kannada Shallow Parser," International Institute of Information Technology, Hyderabad, [Online]. Available: http://ltrc.iiit.ac.in/analyzer/Kannada.


AUTHOR

Parameswarappa S received the B.E. and M.E. degrees in CSE from Bangalore University in 1995 and 1999 respectively. Since 1995, he has been working as a faculty member in the Computer Science & Engineering discipline. He is currently pursuing a Ph.D. in Computer Science & Engineering at Visvesvaraya Technological University, Belgaum, Karnataka, India. His areas of interest include Natural Language Processing, Machine Translation and Word Sense Disambiguation. He has 12 international publications to his credit.

Dr. V.N. Narayana received the B.E. from Mysore University in 1982, the M.E. from IISc Bangalore in 1986 and the Ph.D. from IIT Kanpur in 1995. He has been serving MCE, Hassan since 1983. His areas of interest are A.I., NLP, Machine Translation, Compilers and Operating Systems. He has 18 national and 12 international publications to his credit.


- EN7G-I-b-11 Observe Correct Subject-Verb Agreement.Documento7 páginasEN7G-I-b-11 Observe Correct Subject-Verb Agreement.RANIE VILLAMIN100% (2)

- Impact of Covid-19 On Employment Opportunities For Fresh Graduates in Hospitality &tourism IndustryDocumento8 páginasImpact of Covid-19 On Employment Opportunities For Fresh Graduates in Hospitality &tourism IndustryInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Design and Detection of Fruits and Vegetable Spoiled Detetction SystemDocumento8 páginasDesign and Detection of Fruits and Vegetable Spoiled Detetction SystemInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Experimental Investigations On K/s Values of Remazol Reactive Dyes Used For Dyeing of Cotton Fabric With Recycled WastewaterDocumento7 páginasExperimental Investigations On K/s Values of Remazol Reactive Dyes Used For Dyeing of Cotton Fabric With Recycled WastewaterInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- THE TOPOLOGICAL INDICES AND PHYSICAL PROPERTIES OF n-HEPTANE ISOMERSDocumento7 páginasTHE TOPOLOGICAL INDICES AND PHYSICAL PROPERTIES OF n-HEPTANE ISOMERSInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Detection of Malicious Web Contents Using Machine and Deep Learning ApproachesDocumento6 páginasDetection of Malicious Web Contents Using Machine and Deep Learning ApproachesInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- THE TOPOLOGICAL INDICES AND PHYSICAL PROPERTIES OF n-HEPTANE ISOMERSDocumento7 páginasTHE TOPOLOGICAL INDICES AND PHYSICAL PROPERTIES OF n-HEPTANE ISOMERSInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Study of Customer Experience and Uses of Uber Cab Services in MumbaiDocumento12 páginasStudy of Customer Experience and Uses of Uber Cab Services in MumbaiInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Study of Customer Experience and Uses of Uber Cab Services in MumbaiDocumento12 páginasStudy of Customer Experience and Uses of Uber Cab Services in MumbaiInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Detection of Malicious Web Contents Using Machine and Deep Learning ApproachesDocumento6 páginasDetection of Malicious Web Contents Using Machine and Deep Learning ApproachesInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Customer Satisfaction A Pillar of Total Quality ManagementDocumento9 páginasCustomer Satisfaction A Pillar of Total Quality ManagementInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Experimental Investigations On K/s Values of Remazol Reactive Dyes Used For Dyeing of Cotton Fabric With Recycled WastewaterDocumento7 páginasExperimental Investigations On K/s Values of Remazol Reactive Dyes Used For Dyeing of Cotton Fabric With Recycled WastewaterInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- An Importance and Advancement of QSAR Parameters in Modern Drug Design: A ReviewDocumento9 páginasAn Importance and Advancement of QSAR Parameters in Modern Drug Design: A ReviewInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Customer Satisfaction A Pillar of Total Quality ManagementDocumento9 páginasCustomer Satisfaction A Pillar of Total Quality ManagementInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Performance of Short Transmission Line Using Mathematical MethodDocumento8 páginasPerformance of Short Transmission Line Using Mathematical MethodInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Soil Stabilization of Road by Using Spent WashDocumento7 páginasSoil Stabilization of Road by Using Spent WashInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Analysis of Product Reliability Using Failure Mode Effect Critical Analysis (FMECA) - Case StudyDocumento6 páginasAnalysis of Product Reliability Using Failure Mode Effect Critical Analysis (FMECA) - Case StudyInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- The Mexican Innovation System: A System's Dynamics PerspectiveDocumento12 páginasThe Mexican Innovation System: A System's Dynamics PerspectiveInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- An Importance and Advancement of QSAR Parameters in Modern Drug Design: A ReviewDocumento9 páginasAn Importance and Advancement of QSAR Parameters in Modern Drug Design: A ReviewInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Analysis of Product Reliability Using Failure Mode Effect Critical Analysis (FMECA) - Case StudyDocumento6 páginasAnalysis of Product Reliability Using Failure Mode Effect Critical Analysis (FMECA) - Case StudyInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Staycation As A Marketing Tool For Survival Post Covid-19 in Five Star Hotels in Pune CityDocumento10 páginasStaycation As A Marketing Tool For Survival Post Covid-19 in Five Star Hotels in Pune CityInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- The Impact of Effective Communication To Enhance Management SkillsDocumento6 páginasThe Impact of Effective Communication To Enhance Management SkillsInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- A Digital Record For Privacy and Security in Internet of ThingsDocumento10 páginasA Digital Record For Privacy and Security in Internet of ThingsInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Secured Contactless Atm Transaction During Pandemics With Feasible Time Constraint and Pattern For OtpDocumento12 páginasSecured Contactless Atm Transaction During Pandemics With Feasible Time Constraint and Pattern For OtpInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- A Deep Learning Based Assistant For The Visually ImpairedDocumento11 páginasA Deep Learning Based Assistant For The Visually ImpairedInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- A Comparative Analysis of Two Biggest Upi Paymentapps: Bhim and Google Pay (Tez)Documento10 páginasA Comparative Analysis of Two Biggest Upi Paymentapps: Bhim and Google Pay (Tez)International Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Challenges Faced by Speciality Restaurants in Pune City To Retain Employees During and Post COVID-19Documento10 páginasChallenges Faced by Speciality Restaurants in Pune City To Retain Employees During and Post COVID-19International Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Advanced Load Flow Study and Stability Analysis of A Real Time SystemDocumento8 páginasAdvanced Load Flow Study and Stability Analysis of A Real Time SystemInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Anchoring of Inflation Expectations and Monetary Policy Transparency in IndiaDocumento9 páginasAnchoring of Inflation Expectations and Monetary Policy Transparency in IndiaInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Predicting The Effect of Fineparticulate Matter (PM2.5) On Anecosystemincludingclimate, Plants and Human Health Using MachinelearningmethodsDocumento10 páginasPredicting The Effect of Fineparticulate Matter (PM2.5) On Anecosystemincludingclimate, Plants and Human Health Using MachinelearningmethodsInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- Synthetic Datasets For Myocardial Infarction Based On Actual DatasetsDocumento9 páginasSynthetic Datasets For Myocardial Infarction Based On Actual DatasetsInternational Journal of Application or Innovation in Engineering & ManagementAinda não há avaliações

- English Grammar Worksheet Date: Assignment No. 1 - Goal # 7 Full NameDocumento4 páginasEnglish Grammar Worksheet Date: Assignment No. 1 - Goal # 7 Full NameJavier Martinez9-BAinda não há avaliações

- L3 Using Discourse MarkersDocumento11 páginasL3 Using Discourse MarkersJonalyn MempinAinda não há avaliações

- LAB # 07 Facts and Rules in PROLOG: ObjectiveDocumento6 páginasLAB # 07 Facts and Rules in PROLOG: ObjectiveMuhammad HassaanAinda não há avaliações

- Syntax Test 5Documento2 páginasSyntax Test 5Lệ Huyền MaiAinda não há avaliações

- Giao An Tieng Anh 10Documento242 páginasGiao An Tieng Anh 10Bui Tuyet Chinh TuyetchinhAinda não há avaliações

- GRADE 10 ENGLISH Tabular LessonDocumento11 páginasGRADE 10 ENGLISH Tabular LessonTin Tin IIAinda não há avaliações

- Vocabulary and grammar practice worksheet with animals, recycling, verbs and adjectivesDocumento3 páginasVocabulary and grammar practice worksheet with animals, recycling, verbs and adjectivesmegiAinda não há avaliações

- Multiple Choice PDFDocumento1 páginaMultiple Choice PDFCami CerneanuAinda não há avaliações

- Next Move 4 Unit 3 Test 20230315 143350 PDFDocumento7 páginasNext Move 4 Unit 3 Test 20230315 143350 PDFGOAT CATTYAinda não há avaliações

- MODALS PresentasiDocumento17 páginasMODALS PresentasiArif Tirtana LieAinda não há avaliações

- Yerror Analysis On The Essays of The Education StudentsDocumento21 páginasYerror Analysis On The Essays of The Education StudentsHeaven's Angel LaparaAinda não há avaliações

- TensesDocumento8 páginasTensesKaren KongAinda não há avaliações

- PRACTICE 18. Personal Pronouns: Directions: Underline Each Pronoun. Note How It Is UsedDocumento4 páginasPRACTICE 18. Personal Pronouns: Directions: Underline Each Pronoun. Note How It Is Usedyayan fuhsadAinda não há avaliações

- Time Expressions With The Past PerfectDocumento3 páginasTime Expressions With The Past PerfectMuhanmad FachriAinda não há avaliações

- UNIT 2 - Final Test RevisionDocumento2 páginasUNIT 2 - Final Test Revisionleo2009Ainda não há avaliações

- Waj 3022 English Language Proficiency 1Documento18 páginasWaj 3022 English Language Proficiency 1nuranis nabilahAinda não há avaliações

- Overall TensesDocumento1 páginaOverall TensesSayid ÖzcanAinda não há avaliações

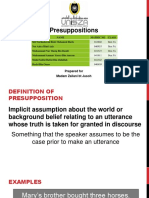

- Presuppositions: Prepared For Madam Zailani BT JusohDocumento17 páginasPresuppositions: Prepared For Madam Zailani BT JusohHazierah HarisAinda não há avaliações

- Stress - WstępDocumento11 páginasStress - WstępbadooagaAinda não há avaliações

- English Task FirstDocumento11 páginasEnglish Task FirstNayeli TipanAinda não há avaliações

- Verb to Be Practice WorksheetDocumento3 páginasVerb to Be Practice WorksheetMaria Cristina FornelliAinda não há avaliações

- Ask Questions With WILL YOU BE - ING?Documento2 páginasAsk Questions With WILL YOU BE - ING?fernandoAinda não há avaliações

- PHD's Degree Arabic Language and LiteratureDocumento5 páginasPHD's Degree Arabic Language and LiteratureEdita HondoziAinda não há avaliações

- Ieo Sample Paper Class-10Documento2 páginasIeo Sample Paper Class-10name nameAinda não há avaliações