Escolar Documentos

Profissional Documentos

Cultura Documentos

Anti-Virus Comparative: Performance Test (AV Products)

Enviado por

fkyouTítulo original

Direitos autorais

Formatos disponíveis

Compartilhar este documento

Compartilhar ou incorporar documento

Você considera este documento útil?

Este conteúdo é inapropriado?

Denunciar este documentoDireitos autorais:

Formatos disponíveis

Anti-Virus Comparative: Performance Test (AV Products)

Enviado por

fkyouDireitos autorais:

Formatos disponíveis

AntiVirusComparativePerformanceTest(AVProducts)October2012

Anti-Virus Comparative

Performance test (AV Products)

Impact of Anti-Virus Software on System Performance

Language: English

October 2012

Last Revision: 19th October 2012

www.av-comparatives.org

www.avcomparatives.org

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

Table of Contents

1. Introduction

2. Tested products

3. Test methods

4. Side notes and comments

5. Test cases

6. Test results

7. Award levels reached in this test

12

8. Copyright and Disclaimer

13

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

Introduction

We want to make clear that the results in this report are intended to give only an indication of the

impact on system performance (mainly by the real-time/on-access components) of the various AntiVirus products in these specific tests. Users are encouraged to try out the software on their own PCs

and form an opinion based on their own observations.

Tested products

The following products were evaluated (with default settings) in this test1:

avast! Free Antivirus 7.0

GFI Vipre Antivirus 2013

AVG Anti-Virus 2013

Kaspersky Anti-Virus 2013

AVIRA Antivirus Premium 2013

McAfee AntiVirus Plus 2013

Bitdefender Antivirus Plus 2013

Microsoft Security Essentials 4.0

BullGuard Antivirus 2013

Panda Cloud Antivirus Free 2.0.1

eScan Anti-Virus 11

PC Tools Spyware Doctor with Antivirus 9.0

ESET NOD32 Antivirus 5.2

Qihoo 360 Antivirus 3.0

Fortinet FortiClient Lite 4.3

Sophos Endpoint Security 10

F-Secure Anti-Virus 2013

Trend Micro Titanium Antivirus Plus 2013

G DATA AntiVirus 2013

Webroot SecureAnywhere Antivirus 2013

Please note that the results in this report apply only to the products/versions listed above (e.g. 64Bit versions, product version, etc.). Also, keep in mind that different vendors offer different (and

differing quantities of) features in their products.

The following activities/tests were performed under an up-to-date Windows 7 Professional SP1 64-Bit:

File copying

Archiving / Unarchiving

Encoding / Transcoding

Installing / Uninstalling applications

Launching applications

Downloading files

PC Mark 7 v1.0.4 Professional Testing Suite

We used the latest available product versions available at time of testing (end of September 2012).

3

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

Test methods

The tests were performed on an Intel Core i5 750 machine with 4GB of RAM and SATAII hard disks.

The performance tests were done on a clean and fully updated Windows 7 Professional SP1 64 Bit

system (English) and then with the installed Anti-Virus software (with default settings). The tests

have been done with an active internet connection to simulate real world impact of cloud services/features.

The hard disks were defragmented before starting the various tests, and care was taken to minimize

other factors that could influence the measurements and/or comparability of the systems. Optimizing

processes/fingerprinting used by the products were also considered this means that the results represent the impact on a system which has already been used by the user for a while. The tests were

repeated several times (with and without fingerprinting) in order to get mean values and filter out

measurement errors. After each run the workstation was defragmented and rebooted. We simulated

various file operations that a computer user would execute: copying2 different types of clean files

from one place to another, archiving and unarchiving files, encoding and transcoding3 audio and video files, converting DVD-Files to iPod format, downloading files from Internet, launching applications,

etc. We also used a third-party industry recognized performance testing suite (PC Mark 7 Professional)

to measure the system impact during real-world product usage. Readers are invited to evaluate the

various products themselves, to see how they impact on their systems (such as software conflicts

and/or user preferences, as well as different system configurations that may lead to varying results).

Security products need to load on systems at an early stage to provide security from the very beginning this load has some impact on the time needed for a system to start up. Measuring boot times

accurately is challenging. The most significant issue is to define exactly when the system is fully

started, as many operating environments may continue to perform start-up activities for some time

after the system appears responsive to the user. It is also important to consider when the protection

provided by the security solution being tested is fully active, as this could be a useful measure of

boot completion as far as the security solution is concerned. Some Anti-Virus products are loading

their services very late (even minutes later) at boot (users may notice that after some time that the

system loaded, the system gets very slow for some moments), so the system looks like loading very

fast, but it just loads its services later and makes the system also insecure/vulnerable. As we do not

want to support such activities, we still do not measure boot times.

We used around 5GB of data consisting of various file types and sizes (pictures, movies, audio files, various

MS Office documents, PDF files, applications/executables, Microsoft Windows 7 system files, archives, etc.).

Converting MP3 files to WAV, MP3 to WMA, AVI to MPG and MPG to AVI, as well as iPod format

4

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

Side notes and comments

The on-access/real-time scanner component of Anti-Virus software runs as a background process to

check all files that are accessed, in order to protect the system continuously against malware threats.

For example, on-access scanners scan files as soon as they are accessed, while (e.g.) behaviourblockers add a different layer of protection and monitor what the file does when it is already executed/running. The services and processes that run in the background to do these tasks also require and

use system resources. Suite products have usually a higher impact on system performance than AntiVirus-only products, as more services/features are included and running in the background.

Security products need to be active deep in the system in order to protect it and e.g. to scan processes and so on that are already active during the system start-up, to identify rootkits and other malware. Those procedures add some extra time and thus a delay in system boot/start up.

If a product takes up too many system resources, users get annoyed and may either disable or uninstall some essential protective features (and considerably compromise the security of their system) or

may switch to security software that is less resource-hungry. Therefore, it is important not only that

Anti-Virus software provides high detection rates and good protection against malware, but also that

it does not degrade system performance or trouble users.

While this report looks at how much impact various Internet Security products have on system performance, it is not always the security software the main factor responsible for a slow system. Other

factors also play a role, and if users follow some simple rules, system performance can be improved

noticeably. The next sections address some of the other factors that may play a part.

A few common problems observed on some user PCs:

-

Old hardware: If a PC already runs at a snails pace because it has ten-year-old hardware, using modern (security) software may make it unusable.

o If possible, buy a new PC that at least meets the minimum recommended requirements of

the software you want to use. Multi-Core processors are preferable.

o Adding more RAM does not hurt. If you use Windows XP or Windows 7, you should use a

minimum of 2GB of RAM. If you use Vista switch to Windows 7. 64-Bit systems are preferable, as especially software which is optimized for such systems will run faster.

o Make sure you have only ONE Anti-Virus program with real-time protection. If your new PC

came with a trial Anti-Virus program, remove this before installing a different AV program.

Keep all your software up-to-date: Using an Anti-Virus version from e.g. 2010 does not protect you as well as the newer version would, even though you may still be able to update the

signatures. Please visit http://update.microsoft.com regularly and keep your operating system

up-to-date by installing the recommended patches. Any software can have vulnerabilities and

bugs, so keep all the software installed on your PC up-to-date: this will not only protect you

against many exploits and vulnerabilities, but also give you any other application improvements that have been introduced.

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

Clean up the content of your hard disk:

o If your hard disk is almost full, your system performance will suffer accordingly. Leave at

least 20% of your disk space free and move your movies and other infrequently accessed

files to another (external) disk. If money is not an issue, consider buying solid state

drives (SSDs).

o Uninstall unneeded software. Often, the slowdown that users notice after installing an

Anti-Virus product is due to other software on the PC running in the background (that is,

due to software conflicts or heavy file access by other programs, each access requiring anti-virus scanning).

o Remove unneeded entries/shortcuts from the Autostart/start-up folder in the program

menu

o if your PC is already messed up by residual files and registry entries left over by hundreds

of applications you installed and uninstalled after trying them out over the past years, reinstall a clean operating system and install only software you really need (fewer software

installations, fewer potential vulnerabilities and conflicts, and so on) and use e.g. an image/backup tool in order to ensure that you do not have to reinstall everything manually

in future.

Defragment your hard disks regularly: A fragmented hard disk can have a very big impact on

system performance as well as considerably increasing the time needed to boot up the system.

Fingerprinting/Optimization: most Anti-Virus products use various technologies to decrease

their impact on system performance. Fingerprinting is such a technology, where already scanned

files do not get rescanned again for a while (or more rarely) or are whitelisted. This increases the

speed considerably (esp. after some time the PC was used), but also adds some little potential

risk, as not all files are scanned anymore. It is up to the user to decide what to prefer. We suggest performing regularly a full-system scan (to be sure that all files are at least currently found

as clean and to further optimize the fingerprinting).

Be patient: a delay of a few additional seconds due to Anti-Virus is not necessarily a big deal.

However, if even with the suggestions above the performance of your PC still annoys you, for instance, after you have installed the Anti-Virus you should consider trying out another Anti-Virus

product. (If you only notice a slow-down after using the Anti-Virus for a long time, there are

probably other factors behind the slowdown). Never reduce your security by disabling essential

protection features, just in the hope of gaining a slightly faster PC!

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

Test cases

File copying

Some Anti-Virus products do not scan all kind of files by design/default (e.g. based on their file

extensions), or use fingerprinting technologies, which may skip already scanned files in order to

increase the speed (see comments on page 6). We copied a set of various common file types from one

physical hard disk to another physical hard disk.

Archiving and unarchiving

Archives are commonly used for file storage, and the impact of Anti-Virus software on the time taken

to create new archives or to unarchive files from existing archives may be of interest for most users.

We archived a set of different file types which are widespread at home and office workstations form

one physical hard disk to another physical hard disk and unzipped them after this again on a third

physical hard disk. The results already consider the fingerprinting/optimization technologies of the

Anti-Virus products, as most users usually make archives of files they have on their disk.

Encoding/transcoding

Music files are often stored and converted on home systems, and converting such files takes system

resources. Due that, many home users may be interested to know if their Anti-Virus product imposes a

slowdown while converting multimedia files from one format to another. We encoded and transcoded

some multimedia files with FFmpeg, and for the iPod conversion we used HandBrakeCLI. The impact

during FFmpeg and iPod converting was almost the same.

Installing/uninstalling applications

We installed several programs with MSI installers, and then uninstalled them and measured how long

it took. We did not consider fingerprinting, because usually an application is only installed once.

Launching applications

Office document files and PDF files are very common. We opened some large document files in Microsoft Office (and closed it) and some large PDF files in Adobe Acrobat Reader (and closed it). Before

each opening, the workstation was rebooted. The time taken for the viewer or editor application to

open and a document to be displayed was measured. Although we list the results for the first opening

and the subsequent openings, we consider the subsequent openings more important, as normally this

operation is done several times by users, and optimization features of the Anti-Virus products take

place, minimizing their impact on the systems.

Downloading files

Files are downloaded from the internet using Internet Explorer.

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

Test results

These specific test results show the impact on system performance that Anti-Virus products have,

compared to the other tested Anti-Virus products. The reported data just give an indication and are

not necessarily applicable in all circumstances, as too many factors can play an additional part.

As we noticed that delivering percentages get easily misinterpreted by users (as well as misused by

marketing departments or the press) and percentages would need adjustments when other hardware

specifications are being used, we grouped the percentage results by clustering them.

The impact within those categories does not statistically differ, also considering error measurements.

The testers defined the categories by consulting statistical methods like hierarchal clustering and

taking into consideration what would be noticed from users perspective or compared to the impact of

the other security products.

Note: As the single performance results (page 9) are built using clusters, if some products are faster/slower than others this reflects in the results. Due to that, the results cannot be directly compared

with results from previous tests, as they can only be compared within the test. This means that it

would be wrong to state that a product got slower (in some areas) compared to last year, while it

would be correct to state that a product was (within the test) slower than those in the higher category.

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

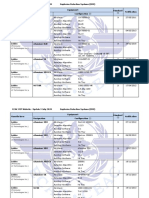

Overview of single AV-C performance scores

File copying

Vendor

On first run

On subsequent runs

Avast

AVG

AVIRA

Bitdefender

BullGuard

eScan

ESET

Fortinet

F-Secure

G DATA

GFI Vipre

Kaspersky

McAfee

Microsoft

Panda

PC Tools

Qihoo

Sophos

Trend Micro

Webroot

Installing/

Encoding/

Archiving/

uninstalling

transcoding

unarchiving

applications

On first run

slow

On subsequent runs

Key:

mediocre

fast

veryfast

Launching applications

OpenWord

OpenPDF

On first run

On subsequent runs

Downloading

files

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

PC Mark Tests

In order to provide an industry-recognized performance test, we used the PC Mark 7 Professional Edition4 testing suite. Users using PC Mark 7 should take care to minimize all external factors which

could affect the testing suite and follow strictly at least the considerations/suggestions documented

inside the PC Mark manual, in order to get consistent and valid/useful results. Furthermore, the tests

should be repeated several times to verify them. For more information about the various consumer

scenarios tests included in PC Mark, please read the whitepaper on their website5.

Without AV is tested on a baseline6 system, which scores 100 points in the PC Mark test.

PC Mark points

without AV

Webroot

ESET

F-Secure

Sophos

Avast

Avira

Microsoft

Qihoo

AVG

Panda

BitDefender

Kaspersky

Fortinet

eScan

McAfee

G DATA

GFI Vipre

Trend Micro

BullGuard

PC Tools

100

99,8

99,7

99,6

99,5

99,3

99,3

99,3

99,3

98,8

98,8

98,7

98,1

97,9

97,8

97,8

97,7

97,4

95,8

95,7

95,3

For more information, see http://www.pcmark.com/benchmarks/pcmark7/

http://www.pcmark.com/wp-content/uploads/2011/05/PCMark7_Whitepaper.pdf (PDF)

6

Baseline system: Intel Core i5 750 machine with 4GB RAM, ATI Radeon HD4300 (512 MB)

5

10

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

Summarized results

Users should weight the various subtests according to their needs. We applied a scoring system in

order to sum up the various results.

For file copying we took the mean values, as well as for launching applications (on subsequent

runs). Like in previous performance reports, very fast gets 15 points, fast gets 10 points, mediocre gets 5 points and slow gets zero points. This leads to the following results:

AV-C Score

PC Mark Score

TOTAL

Webroot

ESET

Avast

Microsoft

88

88

88

88

99,8

99,7

99,3

99,3

187,8

187,7

187,3

187,3

F-Secure

Kaspersky

AVG

Panda

McAfee

AVIRA

Qihoo

BitDefender

Sophos

eScan

83

83

81

80

78

76

76

76

75

75

99,6

98,1

98,8

98,8

97,8

99,3

99,3

98,7

99,5

97,8

182,6

181,1

179,8

178,8

175,8

175,3

175,3

174,7

174,5

172,8

BullGuard

Fortinet

PC Tools

G DATA

GFI Vipre

Trend Micro

73

68

68

65

60

58

95,7

97,9

95,3

97,7

97,4

95,8

168,7

165,9

163,3

162,7

157,4

153,8

11

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

Award levels reached in this test

The following award levels are for the results reached in this performance test report. Please note that

the performance test only tells you how much impact an Anti-Virus product may have on a system

compared to other Anti-Virus products (please read the note on page 8); it does not tell anything

about the effectiveness of the protection a product provides.

PRODUCTS7

AWARDS

Webroot

ESET

Avast

Microsoft

F-Secure

Kaspersky

AVG

Panda

McAfee

AVIRA

Qihoo

BitDefender

Sophos

eScan

BullGuard

Fortinet

PC Tools

G DATA

GFI Vipre

Trend Micro

The above awards have been given based on our assessment of the overall impact results with default

settings under Windows 7 Professional SP1 64 Bit.

We suggest considering products with the same award to be as light as the other products with same award.

12

AntiVirusComparativePerformanceTest(AVProducts)October2012

www.avcomparatives.org

Copyright and Disclaimer

This publication is Copyright 2012 by AV-Comparatives e.V. . Any use of the results, etc. in whole

or in part, is ONLY permitted if the explicit written agreement of the management board of AVComparatives e.V. is given prior to any publication. AV-Comparatives e.V. and its testers cannot be

held liable for any damage or loss, which might occur as a result of, or in connection with, the use of

the information provided in this paper. We take every possible care to ensure the correctness of the

basic data, but no representative of AV-Comparatives e.V. can he held liable for the accuracy of the

test results. We do not give any guarantee of the correctness, completeness, or suitability for a specific purpose of any of the information/content provided at any given time. No one else involved in

creating, producing or delivering test results shall be liable for any indirect, special or consequential

damage, or loss of profits, arising out of, or related to, the use or inability to use, the services provided by the website, test documents or any related data. AV-Comparatives e.V. is a Non-Profit Organization.

AV-Comparatives e.V. (October 2012)

13

Você também pode gostar

- Software Test Plan For A Mobile ApplicationDocumento7 páginasSoftware Test Plan For A Mobile ApplicationKrishna Duvvuri67% (3)

- IPExpert Wireless Workbook Detailed Solutions Guide 3.1a (2 of 2) PDFDocumento785 páginasIPExpert Wireless Workbook Detailed Solutions Guide 3.1a (2 of 2) PDFjamal abki100% (2)

- Understanding Business Continuity ManagementDocumento17 páginasUnderstanding Business Continuity ManagementUsman HamidAinda não há avaliações

- 962-0229 Onan OTPPC Series (Spec A) 300 To 600 Amp Transfer Switch Parts Manual (04-2013)Documento44 páginas962-0229 Onan OTPPC Series (Spec A) 300 To 600 Amp Transfer Switch Parts Manual (04-2013)Willy Alexander RamirezAinda não há avaliações

- Soa Best PracticesDocumento160 páginasSoa Best PracticesKrnv KumarAinda não há avaliações

- McAfee Threat Prevention White PaperDocumento12 páginasMcAfee Threat Prevention White PaperDanilo NascimentoAinda não há avaliações

- WindrunnertornadoDocumento28 páginasWindrunnertornadoAnonymous dti8QzAinda não há avaliações

- Programming Manual For Cheap RFID and Keypad Lock From China Master Code SettingsDocumento3 páginasProgramming Manual For Cheap RFID and Keypad Lock From China Master Code Settingslg_2647100% (1)

- Computer Hardware Servicing Safety and PrecautionsDocumento68 páginasComputer Hardware Servicing Safety and PrecautionsAmir M. Villas63% (8)

- Software Eng..Documento116 páginasSoftware Eng..manjeet_dahiya419910% (1)

- What Is Client-Server and Web Based Testing and How To Test These ApplicationsDocumento9 páginasWhat Is Client-Server and Web Based Testing and How To Test These ApplicationssvelamaAinda não há avaliações

- Anti-Virus Comparative: Performance Test (AV Products)Documento13 páginasAnti-Virus Comparative: Performance Test (AV Products)Lamico DellavvoltoioAinda não há avaliações

- Anti-Virus Comparative: Performance TestDocumento12 páginasAnti-Virus Comparative: Performance Testsanjay sahAinda não há avaliações

- Anti-Virus Comparative: Performance TestDocumento12 páginasAnti-Virus Comparative: Performance TestRichardAinda não há avaliações

- Anti-Virus Comparative: Performance Test (Suite Products)Documento17 páginasAnti-Virus Comparative: Performance Test (Suite Products)Muhammad NavalAinda não há avaliações

- Anti-Virus Comparative: Performance TestDocumento12 páginasAnti-Virus Comparative: Performance TestLuis Eduardo Perez OrtizAinda não há avaliações

- Anti-Virus Comparative: Performance TestDocumento12 páginasAnti-Virus Comparative: Performance TestUbaldito G MonterrosasAinda não há avaliações

- Anti-Virus Comparative: Performance Test (Suite Products)Documento13 páginasAnti-Virus Comparative: Performance Test (Suite Products)HeryAinda não há avaliações

- Av TestDocumento9 páginasAv TestI9 Channel TechAinda não há avaliações

- Anti-Virus Comparative: Performance Test (Suite Products)Documento13 páginasAnti-Virus Comparative: Performance Test (Suite Products)Walter MejiaAinda não há avaliações

- February-June 2017: Whole Product DynamicDocumento15 páginasFebruary-June 2017: Whole Product Dynamickileab5Ainda não há avaliações

- Drivers, Tools, Anti-VirusDocumento103 páginasDrivers, Tools, Anti-Virusmary joy dela cruzAinda não há avaliações

- Anti-Virus Comparative: File Detection Test of Malicious SoftwareDocumento11 páginasAnti-Virus Comparative: File Detection Test of Malicious Softwareyoung-ThucidAinda não há avaliações

- Anti-Virus Comparative: On-Demand Detection of Malicious SoftwareDocumento14 páginasAnti-Virus Comparative: On-Demand Detection of Malicious SoftwarethalhaAinda não há avaliações

- Learning Guide - 32: Information Technology Support ServiceDocumento26 páginasLearning Guide - 32: Information Technology Support ServiceKiya AbdiAinda não há avaliações

- GST 206Documento14 páginasGST 206Osoka VictorAinda não há avaliações

- Installation TestingDocumento12 páginasInstallation TestingNarasimhaVaddeAinda não há avaliações

- Installation TestingDocumento2 páginasInstallation TestingPavan SuryaAinda não há avaliações

- Nur Fiffy Faryanti (System Testing)Documento6 páginasNur Fiffy Faryanti (System Testing)Sharifah Nur izzatiAinda não há avaliações

- Emb SW TST - 5 - 9 - 2009Documento8 páginasEmb SW TST - 5 - 9 - 2009Karuna NidhiAinda não há avaliações

- Contionous DeploymentDocumento19 páginasContionous DeploymentdavidAinda não há avaliações

- Software InstallationDocumento22 páginasSoftware InstallationEmman LabarintoAinda não há avaliações

- Install Software Application LO3 1Documento7 páginasInstall Software Application LO3 1Beriso AbdelaAinda não há avaliações

- Anti-Virus Comparative: On-Demand Detection of Malicious SoftwareDocumento14 páginasAnti-Virus Comparative: On-Demand Detection of Malicious Softwarevhisnu indartoAinda não há avaliações

- Ethiopian TVET-System: Learning Guide # 7Documento7 páginasEthiopian TVET-System: Learning Guide # 7Yohannes Busho100% (1)

- Lesson 1Documento12 páginasLesson 1Angelyn Briones Dela CruzAinda não há avaliações

- Avc Od Aug2011Documento14 páginasAvc Od Aug2011Rodrigo DVAinda não há avaliações

- Unit VI System Implementation: Page 1 of 6Documento6 páginasUnit VI System Implementation: Page 1 of 6Roshan ShresthaAinda não há avaliações

- Testing of Embedded Software ProductsDocumento2 páginasTesting of Embedded Software ProductsrockymadhuAinda não há avaliações

- Basics of Software TestingDocumento15 páginasBasics of Software TestingPratiksha JadhavAinda não há avaliações

- Software Development ProcessDocumento6 páginasSoftware Development Processparth007.uAinda não há avaliações

- Final Exam ReviewDocumento6 páginasFinal Exam ReviewSthephany Rojas SabogalAinda não há avaliações

- SQA Lec 12Documento27 páginasSQA Lec 12Laiba FarooqiAinda não há avaliações

- Avc Prot 2021bDocumento12 páginasAvc Prot 2021bfsrom3Ainda não há avaliações

- Software Maintenance: Corrective Maintenance - Corrective Software Maintenance Is What One WouldDocumento9 páginasSoftware Maintenance: Corrective Maintenance - Corrective Software Maintenance Is What One WouldMd. Fahim SahrierAinda não há avaliações

- System Testing DescriptionDocumento4 páginasSystem Testing DescriptionKiko DoragonAinda não há avaliações

- Oracles Contracts: ScopeDocumento48 páginasOracles Contracts: Scopedesert_05Ainda não há avaliações

- Anti-Virus Comparative: Proactive/retrospective TestDocumento10 páginasAnti-Virus Comparative: Proactive/retrospective TestthalhaAinda não há avaliações

- SE Chapter1Documento41 páginasSE Chapter1JasonDelumenAinda não há avaliações

- Software TestingDocumento31 páginasSoftware TestingDevanshi ShahAinda não há avaliações

- SWE2005 - Software Testing - Review 1.1.2Documento15 páginasSWE2005 - Software Testing - Review 1.1.2Sanjay dbzAinda não há avaliações

- INFS3203 Systems Deployment and Implementation: Dr. Seifeddine BouallegueDocumento37 páginasINFS3203 Systems Deployment and Implementation: Dr. Seifeddine BoualleguefgfdgfdgfdAinda não há avaliações

- Yeye ReportDocumento5 páginasYeye ReportRonna Mae TingcoAinda não há avaliações

- Software Testing - Wikipedia, The Free EncyclopediaDocumento20 páginasSoftware Testing - Wikipedia, The Free EncyclopediaSatheesh SwathiAinda não há avaliações

- 1.4 1 Install App SoftwareDocumento26 páginas1.4 1 Install App SoftwareHanz Albrech AbellaAinda não há avaliações

- Windows 10 Iot Enterprise (14393) : Turn Off Windows Update and Managing UpdatesDocumento3 páginasWindows 10 Iot Enterprise (14393) : Turn Off Windows Update and Managing UpdatesLAI YEEK HRVEAinda não há avaliações

- Firmware Update Best Practices For Immv1 SystemsDocumento17 páginasFirmware Update Best Practices For Immv1 SystemsVimal RajAinda não há avaliações

- Software Engineering Chapter 1Documento60 páginasSoftware Engineering Chapter 1Dnyaneshwar DeshmukhAinda não há avaliações

- Software Testing Is An Investigation Conducted To Provide Stakeholders With Information About TheDocumento23 páginasSoftware Testing Is An Investigation Conducted To Provide Stakeholders With Information About TheVenkat JayAinda não há avaliações

- Chapter 8-Software TestingDocumento5 páginasChapter 8-Software Testinggevanson967Ainda não há avaliações

- Chapter 4 - What Is System TestingDocumento4 páginasChapter 4 - What Is System TestingAamir AfzalAinda não há avaliações

- IBM Autonomic Computing and Solution Installation: David Cole IBM AC Customer and Partner ProgramsDocumento29 páginasIBM Autonomic Computing and Solution Installation: David Cole IBM AC Customer and Partner Programssupriya412Ainda não há avaliações

- LO 1 and 2Documento17 páginasLO 1 and 2bilisummaabokonaaAinda não há avaliações

- Application Software Installation GuidesDocumento6 páginasApplication Software Installation GuidesFuckin LifeAinda não há avaliações

- 7 PN 4 D 54 ZBPF 6Documento9 páginas7 PN 4 D 54 ZBPF 6marmarkk880219Ainda não há avaliações

- Research Paper Cyber ForensicsDocumento7 páginasResearch Paper Cyber Forensicsaflbrpwan100% (1)

- SSRS - How To Use Hive Database (Hortonworks) As Data Source For SSRS Report - TechNet Articles - United States (English) - TechNet WikiDocumento3 páginasSSRS - How To Use Hive Database (Hortonworks) As Data Source For SSRS Report - TechNet Articles - United States (English) - TechNet WikiVincent TacdaAinda não há avaliações

- ts3204 00aDocumento2 páginasts3204 00aziksonAinda não há avaliações

- Preventing SAP Customers From Leaking Secrets On Github - SAP BlogsDocumento5 páginasPreventing SAP Customers From Leaking Secrets On Github - SAP BlogsLohithAinda não há avaliações

- SkyEdge VPN Configuration and Management - 1106 PDFDocumento124 páginasSkyEdge VPN Configuration and Management - 1106 PDFbinod hadaAinda não há avaliações

- AASHTO M323 (Inactiva)Documento2 páginasAASHTO M323 (Inactiva)Stuar TencioAinda não há avaliações

- Quiz 3 ccn2Documento6 páginasQuiz 3 ccn2omarjudaiAinda não há avaliações

- 10 Questions For Assessing Data Security in The EnterpriseDocumento14 páginas10 Questions For Assessing Data Security in The EnterpriseMacloutAinda não há avaliações

- Previously: L3 Technologies Inc.Documento22 páginasPreviously: L3 Technologies Inc.Linssen KongAinda não há avaliações

- Cost of A Data Breach Report 2023Documento78 páginasCost of A Data Breach Report 2023Sazones Perú Gerardo SalazarAinda não há avaliações

- Cbi Qy Series Dat Dec 2014Documento8 páginasCbi Qy Series Dat Dec 2014Jose GallardoAinda não há avaliações

- USD Implementation EDocumento329 páginasUSD Implementation EJosé Antonio Torres ManzanoAinda não há avaliações

- Seclore Data Classification BrochureDocumento4 páginasSeclore Data Classification BrochureJulie SmithAinda não há avaliações

- Wyoming Wings Magazine, October 2011Documento23 páginasWyoming Wings Magazine, October 2011Wyoming Wing, Civil Air PatrolAinda não há avaliações

- Free Proxy ListDocumento2 páginasFree Proxy Listums6120Ainda não há avaliações

- Technology Roadmap PowerPoint TemplateDocumento2 páginasTechnology Roadmap PowerPoint Templatetrúc thuyên nguyễnAinda não há avaliações

- 2006 A Cryptosystem Based On Vigenère Cipher With Varying KeyDocumento6 páginas2006 A Cryptosystem Based On Vigenère Cipher With Varying Keyma za reAinda não há avaliações

- Cyberbullying Synthesis EssayDocumento2 páginasCyberbullying Synthesis EssayJuan Flores100% (2)

- Assign 1Documento4 páginasAssign 1sridharegspAinda não há avaliações

- Cyber TortDocumento21 páginasCyber TortAnonymous nkGJvo3nGJ100% (1)

- Database Design For Private High School Academic Information SystemDocumento8 páginasDatabase Design For Private High School Academic Information SystemIJAR JOURNALAinda não há avaliações

- DoS and DDoS AttackDocumento6 páginasDoS and DDoS AttackVikAinda não há avaliações

- Https WWW - Irctc.co - in Eticketing PrintTicketDocumento2 páginasHttps WWW - Irctc.co - in Eticketing PrintTicketamitcmsAinda não há avaliações

- MS ProtoDocumento365 páginasMS Protomohankumar4399473Ainda não há avaliações