International Journal on Recent and Innovation Trends in Computing and Communication

Volume: 3 Issue: 9

ISSN: 2321-8169

5456 - 5460

_____________________________________________________________________________________

An Experiential Study of SVM and Naïve Bayes for Gender Recognization

Sneha Pradeep Vanjari

Department of Computer Engineering,

SKN-Sinhagad Institute of Technology & Science,

Lonavala,

India

Email_id: snehavanjari9900@gmail.com

Guided By

V. D. Thombre

Department of Computer Engineering,

SKN-Sinhagad Institute of Technology & Science,

Lonavala, India

Email_id: thombrevd@gmail.com

Abstract: Classification and regression are important aspects of data mining, the systematic procedure of extracting useful information from large datasets. Naïve Bayes and SVMs are useful for classification and regression. The naïve Bayes (NB) classifier is a typical generative classifier, while the SVM classifier is a typical discriminative classifier. The NB classifier is one of the simple yet powerful classification methods. It is considered one of the most effective and significant learning algorithms for machine learning and data mining, and it has also been treated as a core technique in information retrieval. Support Vector Machines (SVMs) are supervised learning models with associated learning algorithms that analyze data and recognize patterns, used for classification and regression analysis. Here we attempt to analyze the performance of both algorithms, i.e. Naïve Bayes and SVMs, through the development of a useful application; the accuracy of the developed application is used to estimate their performance. This paper presents a novel approach to comparing the naïve Bayes and SVM algorithms by analyzing human characteristics for the purpose of gender identification.

Keywords: Data Mining, Naive Bayesian, Support Vector Machines.

__________________________________________________*****_________________________________________________

I. Introduction:

With the rapid development of Internet applications, e-commerce and network communication, the volume of information is growing geometrically and has an increasingly important influence on our lives. Almost all the information we want can be found on the network, but what people care about most is how to dig out the most valuable information from this large quantity. The technology of automatic text classification is one of the basic ways to solve this problem, and it is an important research subject in information storage and retrieval. Automatic text classification has many advantages, such as needing no human intervention, saving a lot of manpower and updating quickly. Faster classification and higher precision are needed to meet practical application requirements. In the digital library, accurate and efficient text classification is the basis for good service to readers. Spam filtering, personalized news services and intelligent product recommendation, which is currently popular at shopping sites, are all typical applications of classification. Classification technology is therefore more and more widely applied in real life [1],[2].

Naïve Bayes

The naive Bayes classification algorithm is one of the most effective text classification methods, and in some areas its performance is comparable with that of neural networks and decision tree learning.

Advantages

1. Requires only a small amount of training data to estimate parameters.

2. Good results are obtained in most cases.

3. Easy to implement.

Disadvantages

1. The assumption of class conditional independence leads to a loss of accuracy.

2. In practice, dependencies exist among variables, and sometimes these dependencies cannot be modeled by naive Bayes.

Support Vector Machines (SVMs)

In machine learning, support vector machines (SVMs, also support vector networks) are supervised learning models with associated learning algorithms that analyze data and recognize patterns, used for classification and regression analysis. Given a set of training examples, each marked as belonging to one of two categories, an SVM training algorithm builds a model that assigns new examples into one category or the other, making it a non-probabilistic binary linear classifier. An SVM model is a representation of the examples as points in space, mapped so that the examples of the separate categories are divided by a clear gap that is as wide as possible. New examples are then mapped into that same space and predicted to belong to a category based on which side of the gap they fall on.
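As a minimal sketch of the prediction step described above, assume a linear model has already been trained and is given by a weight vector `w` and a bias `b`. Both values below are hypothetical and hand-picked for illustration; they are not taken from this paper. A new example is assigned to a category according to the side of the separating hyperplane it falls on, and the width of the gap is 2/||w||.

```python
import math

# Hypothetical, hand-picked parameters of an already-trained linear SVM.
w = [1.0, -1.0]
b = 0.0

def decision_value(x):
    """Signed score w.x + b; its sign tells which side of the hyperplane x is on."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def predict(x):
    """Return +1 or -1 depending on the side of the gap the point falls on."""
    return 1 if decision_value(x) >= 0 else -1

def margin_width():
    """Width of the gap between the two margin hyperplanes, 2 / ||w||."""
    return 2.0 / math.sqrt(sum(wi * wi for wi in w))

if __name__ == "__main__":
    print(predict([3.0, 1.0]))   # a point on the positive side
    print(predict([1.0, 3.0]))   # a point on the negative side
    print(margin_width())
```

Training (finding `w` and `b`) is deliberately left out of this sketch; only the "which side of the gap" decision is shown.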


IJRITCC | September 2015, Available @ http://www.ijritcc.org


Advantages

1. It has a regularisation parameter, which makes the user think about avoiding over-fitting.

2. It uses the kernel trick, so expert knowledge about the problem can be built in by engineering the kernel.

3. It is an approximation to a bound on the test error rate.

II. Related Work:

Sentiment analysis has been an interesting research topic for quite a few years [4]. To understand the public's opinions and experiences, sentiment analysis techniques are used in many social media applications [5]. Some known applications are predicting poll ratings [6], stock market prediction and event analysis [3], behavioral targeting [7], etc. Many learning methods are used for analyzing social media, and these methods are similar to the sentiment analysis methods commonly used for reviewing products or movies. The methods can be either supervised learning methods [8] or unsupervised learning methods [6]. Social media sites generate a very large amount of unlabeled data, so unsupervised learning methods are increasingly needed nowadays. Unsupervised learning techniques need no labeled training data, which makes them efficient to use in any domain.

The lexicon-based method is one of the most characteristic ways of performing unsupervised sentiment analysis. In the existing work [4], social media has been used for predicting future outcomes. For instance, the authors used Twitter, one of the most popular sites, to forecast the box-office revenues of movies before their release, using a linear regression method for prediction. This technique has limitations: emotion analysis is not considered, NLP techniques are required to improve the approach, exact entity recognition is not used, and so on. The implemented systems are not specific and are used only for analyzing a single entity, which makes them insufficient for opinion analysis.

III. Implementation Details:

The fundamental focus of our framework is investigating the performance of Naïve Bayes and SVMs when they are used for classification and regression. Identifying the better algorithm is the main motivation behind this work: the performance of the two algorithms is compared, and the more appropriate algorithm is suggested.
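A minimal sketch of such a comparison, assuming each classifier's predictions on a labelled test set are already available. The label lists below are hypothetical placeholders, not results from this study, and `accuracy` and `better_algorithm` are illustrative helper names.

```python
def accuracy(predicted, actual):
    """Fraction of predictions that match the true labels."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("label lists must be non-empty and of equal length")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)

def better_algorithm(scores):
    """Return the name(s) of the algorithm(s) with the highest accuracy."""
    best = max(scores.values())
    return sorted(name for name, s in scores.items() if s == best)

# Hypothetical labels for illustration only (not data from the paper).
actual     = ["male", "female", "female", "male"]
nb_output  = ["male", "female", "male", "male"]
svm_output = ["male", "female", "female", "male"]

scores = {
    "naive_bayes": accuracy(nb_output, actual),
    "svm": accuracy(svm_output, actual),
}

if __name__ == "__main__":
    print(scores)
    print(better_algorithm(scores))
```

Returning a list from `better_algorithm` keeps ties visible instead of silently picking one algorithm.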

In addition, it will be helpful in feature recognition, so it can be used in two ways: as a real-time application and as a novel comparison approach by means of that real-time application.

A. System Architecture:

System design is the process of defining the architecture, modules, interfaces, and data for a system to satisfy specified requirements.

Fig.1: System Architecture

The system architecture is defined as follows. The proposed system consists of four major components, as shown in Fig. 1:

1. Graphical user interface - Allows the user to interact with the system.

2. Mining structure - Uses the SVM and naive Bayes processing algorithms.

3. Data source view - Estimates the relevant characteristics and compares them with the database.

4. Database - Stores the matching parameters [10].

Naive Bayesian Classifier

These classifiers are probabilistic classifiers. The process is based on the maximum a posteriori hypothesis and Bayes' theorem. They estimate class membership probabilities, i.e. the probability that a given test sample belongs to a particular class. The classifier assumes class conditional independence to reduce the training data requirements and the computation cost [11].
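In symbols, the maximum a posteriori rule under the class conditional independence assumption can be written as follows. The notation ($c$ for a class, $x_1,\dots,x_n$ for the feature values of a test sample) is introduced here for illustration and is not taken from the paper.

```latex
\hat{c}
  = \arg\max_{c} P(c \mid x_1,\dots,x_n)
  = \arg\max_{c} \; P(c)\prod_{i=1}^{n} P(x_i \mid c)
```

The second equality holds because the evidence $P(x_1,\dots,x_n)$ is the same for every class, so it does not affect which class attains the maximum.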

Support Vector Machine

It tries to find the maximum-margin separating hyperplane between the data of the two classes, so that it generalizes well to test data points. Basic support vector machines are discriminative classifiers and binary classifiers [12].
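For linearly separable training data, the maximum-margin hyperplane is commonly written as the optimization problem below. The symbols (training pairs $(x_i, y_i)$ with $y_i \in \{-1,+1\}$, weight vector $w$, bias $b$, and $m$ training examples) are standard textbook notation assumed here, not notation from this paper.

```latex
\min_{w,\,b}\ \frac{1}{2}\lVert w\rVert^{2}
\quad\text{subject to}\quad
y_i\,(w^{\top}x_i + b)\ \ge\ 1,\qquad i = 1,\dots,m
```

The width of the resulting margin is $2/\lVert w\rVert$, so minimizing $\lVert w\rVert$ is what makes the gap between the two classes as wide as possible.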

IV. Result and Experiment:

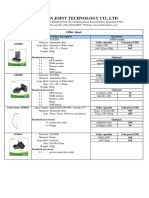

B. Algorithm:

Input: User data (height, weight, foot size, chest size)

Output:

The following steps explain the implementation:

1. Start

2. Input given to the naive Bayes algorithm:

IN = {Ht, Wt, Ft, Ct}

Ht = Height; Wt = Weight; Ft = Foot size; Ct = Chest size

3. Input given to the SVM algorithm:

IS = {Ht, Wt, Ft, Ct}

Ht = Height; Wt = Weight; Ft = Foot size; Ct = Chest size

4. Take the results from SVM and naive Bayes:

On = {ONm, ONf, ONo}

On = Output of naive Bayes.

ONm = Output of naive Bayes, male.

ONf = Output of naive Bayes, female.

ONo = Output of naive Bayes, other.

Os = {OSm, OSf, OSo}

Os = Output of SVM.

OSm = Output of SVM, male.

OSf = Output of SVM, female.

OSo = Output of SVM, other.

5. Compare the results.

7. Stop

TABLE 1: Training Set

| Sex | Height (feet) | Weight (lbs) | Foot size (inches) | Chest size (inches) |
|---|---|---|---|---|
| Male | | 180 | 12 | 36 |
| Male | 5.92 (5'11") | 190 | 11 | 37 |
| Male | 5.58 (5'7") | 170 | 12 | 38 |
| Male | 5.92 (5'11") | 165 | 10 | 36 |
| Female | | 100 | | 31 |
| Female | 5.5 (5'6") | 150 | | 33 |
| Female | 5.42 (5'5") | 130 | | 30 |
| Female | 5.75 (5'9") | 150 | | 31 |

The training set is used to create a classifier under a Gaussian distribution assumption. The examples of the training set are presented in Table 1. The classes are taken to be equiprobable, P(female) = P(male) = 0.5; this prior probability distribution, based on frequencies in the larger population, is presented in Table 3. Consider a sample to be classified with height = 6 feet, weight = 130 lbs and foot size = 8 inches. We want to decide which posterior is greater, male or female.

The posterior for male is given by

$$\text{posterior(male)} = \frac{P(\text{male})\,p(\text{height}\mid\text{male})\,p(\text{weight}\mid\text{male})\,p(\text{foot size}\mid\text{male})}{\text{evidence}}$$

The posterior for female is given by

$$\text{posterior(female)} = \frac{P(\text{female})\,p(\text{height}\mid\text{female})\,p(\text{weight}\mid\text{female})\,p(\text{foot size}\mid\text{female})}{\text{evidence}}$$


TABLE 2: Prior probability distribution

| Sex | Mean (height) | Variance (height) | Mean (weight) | Variance (weight) | Mean (foot size) | Variance (foot size) | Mean (foot size) | Variance (foot size) |
|---|---|---|---|---|---|---|---|---|
| Male | 5.855 | 3.5033e-02 | 176.25 | 1.2292e+02 | 11.25 | 9.1667e-01 | 38.25 | 19.1667e-01 |
| Female | 5.4175 | 9.7225e-02 | 132.5 | 5.5833e+02 | 7.5 | 1.6667e+00 | 27.5 | 12.6667e+00 |
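The calculation for the sample with height 6 feet, weight 130 lbs and foot size 8 inches can be sketched with the height, weight and foot size parameters from Table 2 and equal priors of 0.5. This is an illustrative sketch rather than the authors' implementation: the names `PARAMS`, `gaussian_pdf` and `posterior_numerator` are hypothetical, the height variances are assumed to read 3.5033e-02 and 9.7225e-02, and chest size is left out because the sample gives no chest measurement.

```python
import math

# (mean, variance) per class and feature, read from Table 2.
# Assumption: the height variances are 3.5033e-02 and 9.7225e-02.
PARAMS = {
    "male": {
        "height": (5.855, 3.5033e-02),
        "weight": (176.25, 1.2292e+02),
        "foot": (11.25, 9.1667e-01),
    },
    "female": {
        "height": (5.4175, 9.7225e-02),
        "weight": (132.5, 5.5833e+02),
        "foot": (7.5, 1.6667e+00),
    },
}
PRIOR = {"male": 0.5, "female": 0.5}
SAMPLE = {"height": 6.0, "weight": 130.0, "foot": 8.0}

def gaussian_pdf(x, mean, var):
    """Density of a normal distribution with the given mean and variance."""
    return math.exp(-((x - mean) ** 2) / (2.0 * var)) / math.sqrt(2.0 * math.pi * var)

def posterior_numerator(cls, sample):
    """Prior times the product of per-feature Gaussian likelihoods."""
    value = PRIOR[cls]
    for feature, x in sample.items():
        mean, var = PARAMS[cls][feature]
        value *= gaussian_pdf(x, mean, var)
    return value

numerators = {c: posterior_numerator(c, SAMPLE) for c in PRIOR}
evidence = sum(numerators.values())          # normalizing constant
posteriors = {c: n / evidence for c, n in numerators.items()}
prediction = max(posteriors, key=posteriors.get)

if __name__ == "__main__":
    print(numerators)
    print(posteriors)
    print(prediction)
```

Because the evidence is the same for both classes, comparing the numerators is enough to pick the class; dividing by the evidence only turns them into probabilities that sum to one.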

The evidence is calculated as

$$\text{evidence} = P(\text{male})\,p(\text{height}\mid\text{male})\,p(\text{weight}\mid\text{male})\,p(\text{foot size}\mid\text{male}) + P(\text{female})\,p(\text{height}\mid\text{female})\,p(\text{weight}\mid\text{female})\,p(\text{foot size}\mid\text{female})$$

Now we have to determine the probability distribution of sex:

$$p(\text{height}\mid\text{male}) = \frac{1}{\sqrt{2\pi\sigma^{2}}}\exp\left(\frac{-(6-\mu)^{2}}{2\sigma^{2}}\right)$$

where $\mu = 5.855$ and $\sigma^{2} = 3.5033 \times 10^{-2}$ are the parameters of the normal distribution, previously determined from the training set.

posterior numerator (male) = their product = $6.1984 \times 10^{-9}$

posterior numerator (female) = their product = $5.3778 \times 10^{-4}$

Since the posterior numerator is greater in the female case, we predict the sample is female.

The naive Bayes approach loses accuracy because of the class conditional independence assumption: in practice, dependencies exist among variables, but they are not handled by the naive Bayes algorithm.

Figure 2: Comparison between SVM and Naive Bayes

Acknowledgment:

We are thankful to the teachers for their valuable guidance. We are thankful to the authorities of Savitribai Phule University of Pune and the reviewer for their valuable suggestions. We also thank the college authorities for providing the required infrastructure and support. Finally, we would like to extend heartfelt gratitude to friends and family members.


REFERENCES:

[1] Yuguang Huang, Lei Li, "Naive Bayes classification algorithm based on small sample set," Proceedings of IEEE CCIS2011.

[2] K. Sankar, S. Kannan and P. Jennifer, "Prediction of Code Fault Using Naive Bayes and SVM Classifiers," Middle-East Journal of Scientific Research 20 (1): 108-113, 2014.

[3] Georgios Paltoglou and Mike Thelwall, "Twitter, MySpace, Digg: Unsupervised Sentiment Analysis in Social Media," ACM Transactions on Intelligent Systems and Technology, Vol. 3, No. 4, Article 66, September 2012.

[4] Xujuan Zhou, Xiaohui Tao, Jianming Yong and Zhenyu Yang, "Sentiment Analysis on Tweets for Social Events," in Proceedings of the IEEE 17th International Conference on Computer Supported Cooperative Work in Design, pages 557-562, 2013.

[5] B. J. Jansen, M. Zhang, K. Sobel, and A. Chowdury, "Twitter power: Tweets as electronic word of mouth," J. Am. Soc. Inf. Sci., 60(11):2169-2188, 2009.

[6] K. Dave, S. Lawrence, and D. Pennock, "Opinion extraction and semantic classification of product reviews," in Proceedings of the 12th International World Wide Web Conference (WWW), pages 519-528, 2003.

[7] S. Brody and N. Elhadad, "An unsupervised aspect sentiment model for online reviews," in HLT '10: Human Language Technologies: The 2010 Annual Conference of the North American Chapter of the Association for Computational Linguistics, pages 804-812, Morristown, NJ, USA, 2010. Association for Computational Linguistics.

[8] B. Liu, "Opinion mining," invited contribution to Encyclopedia of Database Systems, 2008.

[9] Alena Neviarouskaya, Masaki Aono, "Sentiment Word Relations with Affect, Judgment, and Appreciation," IEEE Transactions on Affective Computing.

[10] Roger S. Pressman, Software Engineering: A Practitioner's Approach, McGraw-Hill international publication, seventh edition, 2004.

[11] R. R. Yager, "An extension of the naive Bayesian classifier," Information Sciences, vol. 176, no. 5, pp. 577-588, 2006.

[12] C. Cortes and V. Vapnik, "Support-vector networks," Machine Learning, vol. 20, no. 3, pp. 273-297, 1995.
