
International Journal of Advanced Information Science and Technology (IJAIST)

Vol.27, No.27, July 2014

ISSN: 2319:2682

COMPARATIVE STUDY ID3, CART AND C4.5

DECISION TREE ALGORITHM: A SURVEY

Sonia Singh

Assistant Professor

Department of Computer Science

University of Delhi

New Delhi, India

14sonia.singh@gmail.com

Priyanka Gupta

Assistant Professor

Department of Computer Science

University of Delhi

New Delhi, India

shinepriyanka@gmail.com

Abstract: Decision tree learning algorithms have been successfully used in expert systems to capture knowledge. The main task performed in these systems is to apply inductive methods to the given attribute values of an unknown object in order to determine its appropriate classification according to decision tree rules. Decision trees are one of the most effective forms for representing and evaluating the performance of algorithms, owing to their attractive features: simplicity, comprehensibility, absence of parameters, and the ability to handle mixed-type data. Many decision tree algorithms are available, including ID3, C4.5, CART, CHAID, QUEST, GUIDE, CRUISE, and CTREE. In this paper we explain the three most commonly used decision tree algorithms in order to understand their use and scalability on different types of attributes and features. ID3 (Iterative Dichotomiser 3) was developed by J. R. Quinlan in 1986; C4.5 is an evolution of ID3, presented by the same author (Quinlan, 1993); and CART (Classification and Regression Trees) was developed by Breiman et al. (1984).

Keywords: Decision tree, ID3, C4.5, CART, Regression, Information Gain, Gini Index, Gain Ratio, Pruning

I INTRODUCTION

A decision tree is a tree in which each branch node represents a choice between a number of alternatives, and each leaf node represents a classification or decision [8]. Decision trees are commonly used for gaining information for the purpose of decision making. A decision tree starts with a root node, on which users take action. From this node, users split each node recursively according to the decision tree learning algorithm. The final result is a decision tree in which each branch represents a possible scenario of a decision and its outcome [1]. ID3, CART and C4.5 are the most common decision tree algorithms in data mining; they use different splitting criteria for splitting the node at each level so as to form homogeneous nodes (i.e., nodes containing objects that belong to the same category).

II DECISION TREE

A decision tree is a classifier expressed as a recursive partition of the instance space. The decision tree consists of nodes that form a rooted tree, meaning it is a directed tree with a node called the root that has no incoming edges. All other nodes have exactly one incoming edge. A node with outgoing edges is called an internal or test node. All other nodes are called leaves (also known as terminal or decision nodes). In a decision tree, each internal node splits the instance space into two or more sub-spaces according to a certain discrete function of the input attribute values. Each leaf is assigned to one class representing the most appropriate target value. Instances are classified by navigating them from the root of the tree down to a leaf, according to the outcome of the tests along the path [2].

A decision tree, in which each branch node represents a choice between a number of alternatives and each leaf node represents a classification or decision, is shown in Fig: 1 below; each attribute name is represented in a rectangle, attribute values are represented in ovals, and the class (decision) is represented in a diamond. Decision trees are commonly used for gaining information for the purpose of decision making. A decision tree starts with a root node, on which users take action. From this node, users split each node recursively according to the decision tree learning algorithm. The final result is a decision tree in which each branch represents a possible scenario of a decision and its outcome [2].

Decision Tree Learning Algorithm

'Decision tree learning is a method for approximating discrete-valued target functions, in which the learned function is represented by a decision tree. Decision tree learning is one of the most widely used and practical methods for inductive inference' (Tom M. Mitchell, 1997, p. 52). Decision tree learning algorithms have been successfully used in expert systems to capture knowledge. The main task performed in these systems is to apply inductive methods to the given attribute values of an unknown object in order to determine its appropriate classification according to decision tree rules. Decision trees classify instances by traversing from the root node to a leaf node. We start from the root node of the decision tree, test the attribute specified by this node, and then move down the tree branch according to the attribute value in the given set. This process is then repeated at the sub-tree level. Decision tree algorithms are suitable for the following reasons [1]:

1. Instances are represented as attribute-value pairs, for example the attribute 'Temperature' and its values 'hot', 'mild', 'cool', as represented in Fig: 1 below.

2. The target function has discrete output values. It can easily deal with instances that are assigned to a Boolean decision, such as 'true' and 'false', or 'p (positive)' and 'n (negative)'.

3. The training data may contain errors. This can be dealt with by pruning techniques that we will not cover here.

| Day | Outlook | Temperature | Humidity | Wind | Play (CLASS) |
|---|---|---|---|---|---|
| 1 | Sunny | Hot | High | Weak | No |
| 2 | Sunny | Hot | High | Strong | No |
| 3 | Overcast | Hot | High | Weak | Yes |
| 4 | Rain | Mild | High | Weak | Yes |
| 5 | Rain | Cool | Normal | Weak | Yes |
| 6 | Rain | Cool | Normal | Strong | No |
| 7 | Overcast | Cool | Normal | Strong | Yes |
| 8 | Sunny | Mild | High | Weak | No |
| 9 | Sunny | Cool | Normal | Weak | Yes |
| 10 | Rain | Mild | Normal | Weak | Yes |
| 11 | Sunny | Mild | Normal | Strong | Yes |
| 12 | Overcast | Mild | High | Strong | Yes |
| 13 | Overcast | Hot | Normal | Weak | Yes |
| 14 | Rain | Mild | High | Strong | No |

OUTLOOK
- Sunny: HUMIDITY
  - High: NO
  - Normal: YES
- Overcast: YES
- Rain: WIND
  - Strong: NO
  - Weak: YES

Fig: 1 Decision Tree Example
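The root-to-leaf traversal described above can be sketched in a few lines of Python. This is only an illustration and not part of the original paper: the nested-dictionary encoding and the names `TREE` and `classify` are assumptions, while the tree itself is the one given in Fig: 1.

```python
# Decision tree of Fig: 1, encoded as nested dictionaries (an assumed encoding).
# An internal node is {attribute: {value: subtree}}; a leaf is a class label.
TREE = {
    "Outlook": {
        "Sunny": {"Humidity": {"High": "No", "Normal": "Yes"}},
        "Overcast": "Yes",
        "Rain": {"Wind": {"Strong": "No", "Weak": "Yes"}},
    }
}


def classify(tree, instance):
    """Walk from the root to a leaf, following the instance's attribute values."""
    node = tree
    while isinstance(node, dict):
        attribute = next(iter(node))  # the attribute tested at this node
        node = node[attribute][instance[attribute]]
    return node


# Day 1 and Day 5 of the weather table.
day1 = {"Outlook": "Sunny", "Temperature": "Hot", "Humidity": "High", "Wind": "Weak"}
day5 = {"Outlook": "Rain", "Temperature": "Cool", "Humidity": "Normal", "Wind": "Weak"}

print(classify(TREE, day1))
print(classify(TREE, day5))
```

Note that Temperature is never consulted: the tree only tests the attributes that appear on the path from the root to the leaf.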

We will cover the three widely used decision tree learning algorithms: ID3, CART and C4.5.

Decision tree learning is an attractive inductive learning method for the following reasons [1]:

1. A decision tree generalizes well to unobserved instances, but only if the instances are described in terms of features that are correlated with the target class.

2. The methods are computationally efficient: the cost is proportional to the number of observed training instances.

3. The resulting decision tree provides a representation of the concept that appeals to humans because it renders the classification process self-evident.

III SPLITTING CRITERIA

Basically, all decision tree algorithms require splitting criteria for splitting a node to form a tree. In most cases, the discrete splitting functions are univariate, meaning that an internal node is split according to the value of a single attribute [2]. The algorithm searches for the best attribute upon which to split. There are various splitting criteria based on the impurity of a node, and the main aim of a splitting criterion is to reduce that impurity. Many measures can be used to determine the best way to split the records; they are defined in terms of the class distribution of the records before and after splitting. Examples of impurity measures include [3]:

Entropy: It is a measure of the impurity of a node. It is defined as:

$$\text{Entropy}(t) = -\sum_{i} p(i|t) \log_2 p(i|t)$$

Gini Index: It is another measure of impurity that measures the divergences between the probability distributions of the target attribute's values. The Gini Index has been used in various works such as (Breiman et al., 1984) and (Gelfand et al., 1991), and it is defined as [3]:

$$\text{Gini Index}(t) = 1 - \sum_{i} [p(i|t)]^2$$

Classification Error: It is computed as:

$$\text{Classification error}(t) = 1 - \max_{i} [p(i|t)]$$

where p(i|t) denotes the fraction of records belonging to class i at a given node t.

Suppose an attribute A has three values and the class label has two classes; then Entropy, Gini Index and Classification Error are computed as shown in Fig: 2.

From Table 2 in Fig: 2 it is observed that all three measures attain their maximum value when the class distribution is uniform (i.e., when p = 0.5, where p refers to the fraction of records that belong to one of the two classes). The minimum values for the measures are attained when all the records belong to the same class (i.e., when p equals 0 or 1).
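The three impurity measures can be checked numerically with a short Python sketch. The function names and the `NODES` dictionary are illustrative assumptions; the class counts (NO, YES) are those of nodes N1, N2 and N3 in Fig: 2.

```python
from math import log2


def entropy(counts):
    """Entropy of a node given its per-class record counts (0 * log 0 taken as 0)."""
    total = sum(counts)
    return sum(-(c / total) * log2(c / total) for c in counts if c > 0)


def gini(counts):
    """Gini Index: 1 minus the sum of squared class fractions."""
    total = sum(counts)
    return 1 - sum((c / total) ** 2 for c in counts)


def classification_error(counts):
    """Classification error: 1 minus the largest class fraction."""
    total = sum(counts)
    return 1 - max(c / total for c in counts)


# (CLASS NO, CLASS YES) counts for the three child nodes of attribute A.
NODES = {"N1": (0, 6), "N2": (1, 5), "N3": (3, 3)}

for name, counts in NODES.items():
    print(name,
          round(entropy(counts), 3),
          round(gini(counts), 3),
          round(classification_error(counts), 3))
```

The pure node N1 gives zero for every measure, and the evenly mixed node N3 gives the largest value of each, which matches the observation about minimum and maximum impurity.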

Information Gain: Information gain is an impurity-based criterion that uses the entropy measure as the impurity measure (Quinlan, 1987). It is the difference between the entropy of the node before splitting (parent node) and after splitting (child nodes), where the entropy after splitting is the average of the child-node entropies weighted by the fraction of records in each child:

$$\text{Info Gain} = \text{Entropy}(\text{parent node}) - \text{Entropy}(\text{child nodes})$$

Gain Ratio: The gain ratio normalizes the information gain as follows (Quinlan, 1993) [2]:

$$\text{Gain Ratio} = \frac{\text{Information Gain}}{\text{Entropy}}$$

Here the entropy in the denominator is taken with respect to the values of the splitting attribute, that is, over the proportions of records sent to each child.

Impurity measures such as entropy and Gini Index tend to favor attributes that have a large number of distinct values. Therefore the Gain Ratio is computed and used to determine the goodness of a split [3]. Every splitting criterion has its own significance and usage according to its characteristics and the attribute types.
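As a worked example of information gain and gain ratio, the sketch below evaluates a split of the 14-day weather data on Outlook. The function names are assumptions, and the denominator of the gain ratio is taken to be the entropy of the split proportions (the usual C4.5 "split information"), which is an interpretation of the formula above rather than something the paper spells out. Counts are (Yes, No) pairs read from the weather table.

```python
from math import log2


def entropy(counts):
    """Entropy of a list of counts (0 * log 0 taken as 0)."""
    total = sum(counts)
    return sum(-(c / total) * log2(c / total) for c in counts if c > 0)


def information_gain(parent, children):
    """Parent entropy minus the size-weighted average entropy of the children."""
    n = sum(parent)
    weighted = sum(sum(child) / n * entropy(child) for child in children)
    return entropy(parent) - weighted


def gain_ratio(parent, children):
    """Information gain divided by the entropy of the split proportions."""
    split_entropy = entropy([sum(child) for child in children])
    if split_entropy == 0:  # all records fall into one child: nothing to normalize
        return 0.0
    return information_gain(parent, children) / split_entropy


PARENT = (9, 5)                      # 9 Yes, 5 No over all 14 days
OUTLOOK = [(2, 3), (4, 0), (3, 2)]   # Sunny, Overcast, Rain

print(round(information_gain(PARENT, OUTLOOK), 3))
print(round(gain_ratio(PARENT, OUTLOOK), 3))
```

An attribute with many distinct values produces many small children, which raises the split entropy and so lowers its gain ratio relative to its raw information gain.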

Twoing Criteria: The Gini Index, $\text{Gini Index}(t) = 1 - \sum_{i} [p(i|t)]^2$, may encounter problems when the domain of the target attribute is relatively wide (Breiman et al., 1984). In this case it is possible to employ a binary criterion called the twoing criterion. This criterion is defined as:

$$\text{Twoing Criteria}(t) = \frac{P_L P_R}{4} \left( \sum_{i} \left| p(i|t_L) - p(i|t_R) \right| \right)^2$$


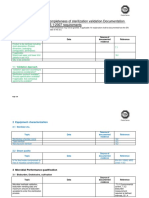

[Tree diagram: attribute A with child nodes N1, N2 and N3]

| Node | CLASS NO | CLASS YES |
|---|---|---|
| N1 | 0 | 6 |
| N2 | 1 | 5 |
| N3 | 3 | 3 |

Table: 1 Showing Class Distribution

| Impurity measure | Node 1 | Node 2 | Node 3 |
|---|---|---|---|
| Entropy | -(0/6)log2(0/6) - (6/6)log2(6/6) = 0 | -(1/6)log2(1/6) - (5/6)log2(5/6) = 0.650 | -(3/6)log2(3/6) - (3/6)log2(3/6) = 1 |
| Gini Index | 1 - (0/6)^2 - (6/6)^2 = 0 | 1 - (1/6)^2 - (5/6)^2 = 0.278 | 1 - (3/6)^2 - (3/6)^2 = 0.5 |
| Classification error | 1 - max[0/6, 6/6] = 0 | 1 - max[1/6, 5/6] = 0.167 | 1 - max[3/6, 3/6] = 0.5 |

Table: 2

Figure: 2 Computing Impurity Measures

In the twoing criterion, L and R refer to the left and right sides of a given split respectively, and p(i|t) is the relative frequency of class i at node t (Breiman, 1996). Twoing attempts to segregate data more evenly than the Gini Index rule, separating whole groups of data and identifying groups that make up 50 percent of the remaining data at each successive node.
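A small Python sketch of the twoing criterion for one candidate binary split follows. It assumes that P_L and P_R are the fractions of the node's records sent to the left and right child (the paper does not define them explicitly), and the function name `twoing` is hypothetical.

```python
def twoing(left_counts, right_counts):
    """Twoing value of a binary split, given per-class counts on each side.

    Assumes both sides are non-empty and list the classes in the same order.
    """
    n_left, n_right = sum(left_counts), sum(right_counts)
    n = n_left + n_right
    p_left, p_right = n_left / n, n_right / n  # assumed meaning of P_L and P_R
    # Sum over classes of |p(i|t_L) - p(i|t_R)|.
    diff = sum(abs(l / n_left - r / n_right)
               for l, r in zip(left_counts, right_counts))
    return p_left * p_right / 4 * diff ** 2


print(twoing([5, 0], [0, 5]))  # balanced split that separates the classes completely
print(twoing([2, 2], [3, 3]))  # both sides have the same class mix
```

The value is largest when the split is balanced and the two sides have very different class distributions, and it is zero when both sides have the same class mix.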

Stopping Criteria

The splitting (growing) phase continues until a stopping criterion is triggered. The following conditions are common stopping rules [1]:

1. All instances in the training set belong to a single value of y.

2. The maximum tree depth has been reached.

3. The number of cases in the terminal node is less than the minimum number of cases for parent nodes.

4. If the node were split, the number of cases in one or more child nodes would be less than the minimum number of cases for child nodes.

5. The best splitting criterion is not greater than a certain threshold.
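The five rules above can be gathered into a single check that a tree-growing routine would call before splitting a node. The sketch below is illustrative only: the function name, its parameters and the default limits are all assumptions, not values taken from the paper.

```python
def should_stop(labels, depth, best_score, child_sizes, *,
                max_depth=5, min_parent=2, min_child=1, min_score=0.0):
    """Return True if any of the five stopping rules is triggered.

    labels      -- class labels of the instances at the current node
    depth       -- depth of the current node (root = 0)
    best_score  -- value of the splitting criterion for the best split found
    child_sizes -- number of instances each child would receive
    """
    if len(set(labels)) <= 1:                       # rule 1: single class
        return True
    if depth >= max_depth:                          # rule 2: maximum depth
        return True
    if len(labels) < min_parent:                    # rule 3: node too small to split
        return True
    if any(size < min_child for size in child_sizes):  # rule 4: a child too small
        return True
    if best_score <= min_score:                     # rule 5: split not good enough
        return True
    return False


print(should_stop(["Yes", "Yes"], 1, 0.3, [1, 1]))
print(should_stop(["Yes", "No", "No"], 1, 0.3, [1, 2]))
```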

IV DECISION TREE ALGORITHMS

ID3

The ID3 algorithm is considered a very simple decision tree algorithm (Quinlan, 1983). It is a decision-tree building algorithm that determines the classification of objects by testing the values of their properties. It builds a decision tree for the given data in a top-down fashion, starting from a set of objects and a specification of properties. At each node of the tree, one property is tested based on maximizing information gain and minimizing entropy, and the results are used to split the object set. This process is done recursively until the set in a given sub-tree is homogeneous (i.e., it contains objects belonging to the same category). This becomes a leaf node of the decision tree [4]. The ID3 algorithm uses a greedy search: it selects a test using the information gain criterion and then never explores the possibility of alternate choices. This makes it a very efficient algorithm in terms of processing time. It has the following advantages and disadvantages [5]:

Advantages

- Understandable prediction rules are created from the training data.
- Builds the fastest tree.
- Builds a short tree.
- Only needs to test enough attributes until all data is classified.
- Finding leaf nodes enables test data to be pruned, reducing the number of tests.
- The whole dataset is searched to create the tree.

Disadvantages

- Data may be over-fitted or over-classified if a small sample is tested.
- Only one attribute at a time is tested for making a decision.
- Does not handle numeric attributes and missing values.
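The greedy, top-down procedure that ID3 follows can be sketched compactly in Python. This is a minimal illustration under stated assumptions, not the authors' or Quinlan's implementation: the names `RAW`, `DATA`, `info_gain` and `id3` are hypothetical, the tree is returned as nested dictionaries, and the data are the 14 rows of the weather table.

```python
from collections import Counter
from math import log2

ATTRS = ["Outlook", "Temperature", "Humidity", "Wind"]
RAW = """
Sunny Hot High Weak No
Sunny Hot High Strong No
Overcast Hot High Weak Yes
Rain Mild High Weak Yes
Rain Cool Normal Weak Yes
Rain Cool Normal Strong No
Overcast Cool Normal Strong Yes
Sunny Mild High Weak No
Sunny Cool Normal Weak Yes
Rain Mild Normal Weak Yes
Sunny Mild Normal Strong Yes
Overcast Mild High Strong Yes
Overcast Hot Normal Weak Yes
Rain Mild High Strong No
"""
DATA = [dict(zip(ATTRS + ["Play"], line.split()))
        for line in RAW.strip().splitlines()]


def entropy(rows):
    """Entropy of the class label over a set of rows."""
    n = len(rows)
    counts = Counter(r["Play"] for r in rows)
    return sum(-(c / n) * log2(c / n) for c in counts.values())


def info_gain(rows, attr):
    """Entropy before the split minus weighted entropy after splitting on attr."""
    n = len(rows)
    remainder = 0.0
    for value in {r[attr] for r in rows}:
        subset = [r for r in rows if r[attr] == value]
        remainder += len(subset) / n * entropy(subset)
    return entropy(rows) - remainder


def id3(rows, attrs):
    """Grow a tree greedily: pick the highest-gain attribute, then recurse."""
    labels = {r["Play"] for r in rows}
    if len(labels) == 1:                 # homogeneous set: make a leaf
        return labels.pop()
    if not attrs:                        # nothing left to test: majority class
        return Counter(r["Play"] for r in rows).most_common(1)[0][0]
    best = max(attrs, key=lambda a: info_gain(rows, a))
    rest = [a for a in attrs if a != best]
    return {best: {v: id3([r for r in rows if r[best] == v], rest)
                   for v in sorted({r[best] for r in rows})}}


tree = id3(DATA, ATTRS)
print(tree)
```

On this data the sketch selects Outlook at the root, then Humidity under Sunny and Wind under Rain, which is the tree of Fig: 1. No choice is ever revisited once made, which is what makes the search greedy.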

CART

CART stands for Classification and Regression Trees (Breiman et al., 1984). It is characterized by the fact that it constructs binary trees, namely each internal node has exactly two outgoing edges. The splits are selected using the twoing criteria and the obtained tree is pruned by cost-complexity pruning. When provided, CART can consider misclassification costs in the tree induction. It also enables users to provide a prior probability distribution. An important feature of CART is its ability to generate regression trees. Regression trees are trees whose leaves predict a real number and not a class. In the case of regression, CART looks for splits that minimize the prediction squared error (the least-squared deviation). The prediction in each leaf is based on the weighted mean for the node [5]. It has the following advantages and disadvantages [6]:

Advantages

- CART can easily handle both numerical and categorical variables.
- The CART algorithm will itself identify the most significant variables and eliminate non-significant ones.
- CART can easily handle outliers.

Disadvantages

- CART may have an unstable decision tree. An insignificant modification of the learning sample, such as eliminating several observations, can cause changes in the decision tree: an increase or decrease of tree complexity, or changes in splitting variables and values.
- CART splits only by one variable.
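For the regression case described above, the search for a least-squares split on one numeric variable can be sketched as follows. The function names and the toy data are assumptions made for illustration; the midpoint between neighbouring values is used as the candidate threshold, which is a common convention rather than something stated in the paper, and each leaf is assumed to predict the plain (unweighted) mean of its target values.

```python
def sse(values):
    """Sum of squared deviations from the mean (the leaf's prediction)."""
    if not values:
        return 0.0
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)


def best_regression_split(xs, ys):
    """Return (total_sse, threshold) of the binary split x <= threshold
    that minimizes the summed squared error of the two children."""
    pairs = sorted(zip(xs, ys))
    best = None
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # no threshold can separate equal x values
        threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        total = sse(left) + sse(right)
        if best is None or total < best[0]:
            best = (total, threshold)
    return best


# Hypothetical toy data: the target jumps from 1 to 5 between x = 3 and x = 10.
xs = [1, 2, 3, 10, 11, 12]
ys = [1.0, 1.0, 1.0, 5.0, 5.0, 5.0]
print(best_regression_split(xs, ys))
```

A full CART implementation would repeat this search over every variable, recurse on the two children, and then apply cost-complexity pruning; none of that is shown here.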

C4.5

C4.5 is an evolution of ID3, presented by the same author (Quinlan, 1993). The C4.5 algorithm generates a decision tree for the given data by recursively splitting that data. The decision tree grows using a depth-first strategy. The C4.5 algorithm considers all the possible tests that can split the data and selects a test that gives the best information gain (i.e., the highest gain ratio). This test removes ID3's bias in favor of wide decision trees [8]. For each discrete attribute, one test is used to produce as many outcomes as the number of distinct values of the attribute. For each continuous attribute, the data is sorted, and the entropy gain is calculated based on binary cuts on each distinct value in one scan of the sorted data. This process is repeated for all continuous attributes. The C4.5 algorithm allows pruning of the resulting decision trees. This increases the error rates on the training data but, importantly, decreases the error rates on unseen testing data. The C4.5 algorithm can also deal with numeric attributes, missing values, and noisy data. It has the following advantages and disadvantages [7]:

Advantages

- C4.5 can handle both continuous and discrete attributes. In order to handle continuous attributes, it creates a threshold and then splits the list into those whose attribute value is above the threshold and those that are less than or equal to it [8].
- C4.5 allows attribute values to be marked as ? for missing. Missing attribute values are simply not used in gain and entropy calculations.
- C4.5 goes back through the tree once it has been created and attempts to remove branches that do not help by replacing them with leaf nodes.

Disadvantages

- C4.5 constructs empty branches; it is the most crucial step for rule generation in C4.5. We have found many nodes with zero values or close to zero values. These values neither contribute to generating rules nor help to construct any class for the classification task. Rather, they make the tree bigger and more complex [7].
- Over-fitting happens when the algorithm model picks up data with uncommon characteristics. Generally, the C4.5 algorithm constructs trees and grows their branches just deep enough to perfectly classify the training examples. This strategy performs well with noise-free data, but most of the time this approach over-fits the training examples with noisy data. Currently, two approaches are widely used to avoid this over-fitting in decision tree learning [7].
- Susceptible to noise.
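The handling of a continuous attribute described above (sort the data, try a binary cut at each distinct value, keep the cut with the best entropy gain) can be sketched as follows. The function names and the sample readings are hypothetical, and for clarity the sketch recomputes the class counts at every cut instead of updating them in a single scan as the text describes.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Entropy of a list of class labels."""
    n = len(labels)
    return sum(-(c / n) * log2(c / n) for c in Counter(labels).values())


def best_threshold(values, labels):
    """Return (threshold, gain) for the best binary cut value <= threshold."""
    pairs = sorted(zip(values, labels))
    n = len(pairs)
    base = entropy([label for _, label in pairs])
    best_gain, best_cut = 0.0, None
    for i in range(1, n):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # cut only between distinct values
        left = [label for _, label in pairs[:i]]    # value <= threshold
        right = [label for _, label in pairs[i:]]   # value >  threshold
        gain = base - len(left) / n * entropy(left) - len(right) / n * entropy(right)
        if gain > best_gain:
            best_gain, best_cut = gain, pairs[i - 1][0]
    return best_cut, best_gain


# Hypothetical humidity readings with their class labels.
humidity = [70, 65, 85, 90]
play = ["Yes", "Yes", "No", "No"]
print(best_threshold(humidity, play))
```

The threshold returned is an actual data value, so the two branches are "less than or equal to the threshold" and "above the threshold", as in the first advantage listed above. C4.5 proper would go on to compare attributes by gain ratio; only the threshold search is shown.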

The basic characteristics of the above three algorithms are summarized in Table 3 below:

| Algorithm | Splitting Criteria | Attribute type | Missing values | Pruning Strategy | Outlier Detection |
|---|---|---|---|---|---|
| ID3 | Information Gain | Handles only categorical values | Does not handle missing values | No pruning is done | Susceptible to outliers |
| CART | Twoing Criteria | Handles both categorical and numeric values | Handles missing values | Cost-complexity pruning is used | Can handle outliers |
| C4.5 | Gain Ratio | Handles both categorical and numeric values | Handles missing values | Error-based pruning is used | Susceptible to outliers |

Table 3: Basic characteristics of decision tree algorithms

V CONCLUSION

In this paper we have studied the basic properties of the decision tree algorithms, which provides a better understanding of these algorithms. We can apply them to different types of data sets having different types of values and properties, and can attain the best result by knowing which algorithm will give the best result on a specific type of data set.

REFERENCES

[1] Wei Peng, Juhua Chen and Haiping Zhou, An Implementation of ID3: Decision Tree Learning Algorithm, University of New South Wales, School of Computer Science & Engineering, Sydney, NSW 2032, Australia.

[2] Lior Rokach and Oded Maimon, Chapter 9: Decision Trees, Department of Industrial Engineering, Tel-Aviv University.

[3] Vipin Kumar, Pang-Ning Tan and Michael Steinbach, Introduction to Data Mining, Pearson.

[4] Ajanthan Rajalingam, Damandeep Matharu, Kobi Vinayagamoorthy, Narinderpal Ghoman, Data Mining Course Project: Income Analysis, SFWR ENG/COM SCI 4TF3, December 10, 2002.

[5] Ahmed Bahgat El Seddawy, Prof. Dr Turkey Sultan, Dr. Ayman Khedr, Applying Classification Technique Using DID3 Algorithm to Improve Decision Support Under Uncertain Situations, Department of Business Information System, Arab Academy for Science and Technology and Department of Information System, Helwan University, Egypt. International Journal of Modern Engineering Research, Vol 3, Issue 4, July-Aug 2013, pp. 2139-2146.

[6] Roman Timofeev to Prof. Dr. Wolfgang Hardle, Classification and Regression Trees (CART): Theory and Applications, CASE - Center of Applied Statistics and Economics, Humboldt University, Berlin, Dec 20, 2004.

[7] Mohammad M Mazid, A B M Shawkat Ali, Kevin Tickle, Improved C4.5 Algorithm for Rule Based Classification, School of Computing Science, Central Queensland University, Australia.

[8] http://www.wikipedia.com/


Authors Profile

Sonia Singh received the M.Sc. degree in Computer Science from Delhi University in 2012 and the bachelor's degree, B.Sc. (H) Computer Science, from Delhi University in 2010. Currently working as Assistant Professor in Delhi University.

Priyanka Gupta received the M.Sc. degree in Computer Science from Delhi University in 2012 and the bachelor's degree, B.Sc. (H) Computer Science, from Delhi University in 2009. Currently working as Assistant Professor in Delhi University.


- SCIENCE MELCsDocumento18 páginasSCIENCE MELCsAntoneaAinda não há avaliações

- CPM Precalculus Homework AnswersDocumento7 páginasCPM Precalculus Homework Answerscqxxvzhlf100% (1)

- Influence of Soil Structure Interaction On Seismic Demands - 2019 - EngineeringDocumento15 páginasInfluence of Soil Structure Interaction On Seismic Demands - 2019 - Engineeringabubaker ahmedAinda não há avaliações

- MOSFET - Working, Types, Operation, Advantages & ApplicationsDocumento28 páginasMOSFET - Working, Types, Operation, Advantages & ApplicationsgezahegnAinda não há avaliações

- Mayan CivilizationDocumento16 páginasMayan CivilizationOwen Wisdom100% (1)

- Acr-Rpms TrainingDocumento4 páginasAcr-Rpms TrainingHardy MisagalAinda não há avaliações

- Amity International School, Pushp Vihar English Worksheet - Class 12Documento3 páginasAmity International School, Pushp Vihar English Worksheet - Class 12ViplavAinda não há avaliações

- The Complete Strategy LandscapeDocumento1 páginaThe Complete Strategy LandscapeHmoud AMAinda não há avaliações

- Determinant and OtherDocumento9 páginasDeterminant and Otherpema karmoAinda não há avaliações
