
16.

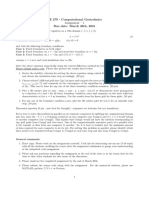

323 Lecture 3

Dynamic Programming

Principle of Optimality

Dynamic Programming

Discrete LQR

Figure by MIT OpenCourseWare.

Spr 2008

Dynamic Programming


DP is a central idea of control theory that is based on the Principle of Optimality: suppose the optimal solution for a problem passes through some intermediate point $(x_1, t_1)$; then the optimal solution to the same problem starting at $(x_1, t_1)$ must be the continuation of the same path.

Proof? What would the implications be if it were false?

This principle leads to:

- A numerical solution procedure called Dynamic Programming for solving multi-stage decision making problems.
- Theoretical results on the structure of the resulting control law.

Texts:

- Dynamic Programming (Paperback) by Richard Bellman (Dover)
- Dynamic Programming and Optimal Control (Vol 1 and 2) by D. P. Bertsekas

June 18, 2008


Classical Examples

Shortest Path Problems (Bryson figure 4.2.1): classic robot navigation and/or aircraft flight path problems.

Figure by MIT OpenCourseWare.

Goal is to travel from A to B in the shortest time possible.

Travel times for each leg are shown in the figure.

There are 20 options to get from A to B. Could evaluate each and compute its travel time, but that would be pretty tedious.

Alternative approach: start at B and work backwards, invoking the principle of optimality along the way.

First step backward can be either up (10) or down (11).

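Figure (labels only): the final legs into B, with leg times 6 and 10 on the upper route from x, and 7 and 11 on the lower route. Figure by MIT OpenCourseWare.

Consider the travel time from point x:

Can go up and then down: 6 + 10 = 16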


Or can go down and then up: 7 + 11 = 18

Clearly the best option from x is to go up, then down, with a time of 16.

From the principle of optimality, this is the best way to get to B for any path that passes through x.

Repeat the process for all other points, until finally get to the initial point. The shortest path is then traced by moving in the directions of the arrows.

Figure by MIT OpenCourseWare.

Key advantage is that only had to find 15 numbers to solve this problem this way, rather than evaluate the travel time for 20 paths.

Modest difference here, but scales up for larger problems.

If n = number of segments on a side (3 here) then:

- Number of routes scales as $(2n)!/(n!)^2$
- Number of DP computations scales as $(n + 1)^2 - 1$
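To get a feel for how quickly the gap opens up, the two counts above can be tabulated for a few grid sizes. This is only an illustrative sketch; the function names are made up for this example.

```python
from math import comb

def route_count(n: int) -> int:
    """Number of distinct routes across a grid with n segments per side: (2n)!/(n!)^2."""
    return comb(2 * n, n)

def dp_computations(n: int) -> int:
    """Number of cost-to-go values DP fills in: (n + 1)^2 - 1."""
    return (n + 1) ** 2 - 1

# Tabulate both counts; n = 3 is the case discussed in the notes.
for n in (3, 5, 10, 20):
    print(n, route_count(n), dp_computations(n))
```

For n = 3 this gives the 20 routes and 15 numbers quoted above; the route count grows combinatorially while the DP count grows only quadratically.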

Example 2

Routing Problem [Kirk, page 56] through a street maze.

Figure by MIT OpenCourseWare.

Similar problem (minimize cost to travel from c to h) with a slightly more complex layout.

Once again, start at the end (h) and work backwards.

Can get to h from e and g directly, but there are 2 paths to h from e.

Basics: $J^\star_{gh} = 2$, and $J^\star_{fh} = J_{fg} + J^\star_{gh} = 5$

Optimal cost from e to h given by

$$J^\star_{eh} = \min\{J_{efgh}, J_{eh}\} = \min\{[J_{ef} + J^\star_{fh}], J_{eh}\} = \min\{2 + 5, 8\} = 7 \quad (e \to f \to g \to h)$$

Also $J^\star_{dh} = J_{de} + J^\star_{eh} = 10$

Principle of optimality tells that, since we already know the best way to h from e, do not need to reconsider the various options from e again when starting at d; just use the best.

Optimal cost from c to h given by

$$J^\star_{ch} = \min\{J_{cdh}, J_{cfh}\} = \min\{[J_{cd} + J^\star_{dh}], [J_{cf} + J^\star_{fh}]\} = \min\{[5 + 10], [3 + 5]\} = 8 \quad (c \to f \to g \to h)$$
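The backward sweep above can be sketched as a memoized cost-to-go computation. The leg costs below are inferred from the sums quoted in the text (for instance $J_{fg} = 5 - 2 = 3$ and $J_{de} = 10 - 7 = 3$), since the street-maze figure itself is not reproduced here; only the streets mentioned in the text are included, so treat the graph as an assumption.

```python
# Leg costs inferred from the arithmetic in the text (assumed, figure missing).
EDGES = {
    "c": {"d": 5, "f": 3},
    "d": {"e": 3},
    "e": {"f": 2, "h": 8},
    "f": {"g": 3},
    "g": {"h": 2},
    "h": {},
}

def cost_to_go(edges, goal):
    """Return (J, nxt): optimal cost to reach goal and best successor per node."""
    J = {goal: 0}
    nxt = {}

    def solve(node):
        if node not in J:
            # Principle of optimality: each successor contributes only its best cost.
            best = min(edges[node], key=lambda s: edges[node][s] + solve(s))
            J[node] = edges[node][best] + J[best]
            nxt[node] = best
        return J[node]

    for node in edges:
        solve(node)
    return J, nxt

def path(nxt, start, goal):
    """Follow the stored best successors from start to goal."""
    route = [start]
    while route[-1] != goal:
        route.append(nxt[route[-1]])
    return route

J, nxt = cost_to_go(EDGES, "h")
print(J["c"], path(nxt, "c", "h"))
```

Each node's cost is computed once and reused, which is exactly why the options from e are not reconsidered when starting at d.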


Examples show the basis of dynamic programming and use of the principle of optimality.

In general, if there are numerous options at location $\alpha$ that next lead to locations $x_1, \ldots, x_n$, choose the action that leads to

$$J^\star_{\alpha h} = \min_{x_i} \left\{ [J_{\alpha x_1} + J^\star_{x_1 h}], [J_{\alpha x_2} + J^\star_{x_2 h}], \ldots, [J_{\alpha x_n} + J^\star_{x_n h}] \right\}$$

Can apply the same process to more general control problems. Typically have to assume something about the system state (and possible control inputs), e.g., bounded, but also discretized.

Roadmap:

- Grid the time/state and quantized control inputs.
- Time/state grid, evaluate necessary control.
- Discrete time problem ⇒ discrete LQR.
- Continuous time problem ⇒ calculus of variations ⇒ cts LQR.

Figure 3.1: Classic picture of discrete time/quantized space grid with the linkages possible through the control commands. Again, it is hard to evaluate all options moving forward through the grid, but we can work backwards and use the principle of optimality to reduce this load.

Classic Control Problem

Consider the problem of minimizing:

$$\min J = h(x(t_f)) + \int_{t_0}^{t_f} g(x(t), u(t), t)\, dt$$

subject to

$$\dot{x} = a(x, u, t), \qquad x(t_0) = \text{fixed}, \qquad t_f = \text{fixed}$$

Other constraints on x(t) and u(t) can also be included.

Step 1 of solution approach is to develop a grid over space/time.

Then look at possible final states $x^i(t_f)$ and evaluate final costs.

For example, can discretize the state into 5 possible cases $x^1, \ldots, x^5$:

$$J_i^\star = h(x^i(t_f)), \quad \forall i$$

Step 2: back up 1 step in time and consider all possible ways of completing the problem.

To evaluate the cost of a control action, must approximate the integral in the cost.


Consider the scenario where you are at state $x^i_k$ at time $t_k$, and apply control $u^{ij}_k$ to move to state $x^j_{k+1}$ at time $t_{k+1} = t_k + \Delta t$.

Approximate cost is

$$\int_{t_k}^{t_{k+1}} g(x(t), u(t), t)\, dt \approx g(x^i_k, u^{ij}_k, t_k)\,\Delta t$$

Can solve for control inputs directly from system model:

$$x^j_{k+1} \approx x^i_k + a(x^i_k, u^{ij}_k, t_k)\,\Delta t \quad\Rightarrow\quad a(x^i_k, u^{ij}_k, t_k) = \frac{x^j_{k+1} - x^i_k}{\Delta t}$$

which can be solved to find $u^{ij}_k$.

Process is especially simple if the control inputs are affine:

$$\dot{x} = f(x, t) + q(x, t)\,u$$

which gives

$$u^{ij}_k = q(x^i_k, t_k)^{-1} \left[ \frac{x^j_{k+1} - x^i_k}{\Delta t} - f(x^i_k, t_k) \right]$$

So for any combination of $x^i_k$ and $x^j_{k+1}$ can evaluate the incremental cost $\Delta J(x^i_k, x^j_{k+1})$ of making this state transition.

Assuming already know the optimal path from each new terminal point ($x^j_{k+1}$), can establish optimal path to take from $x^i_k$ using

$$J^\star(x^i_k, t_k) = \min_{x^j_{k+1}} \left[ \Delta J(x^i_k, x^j_{k+1}) + J^\star(x^j_{k+1}) \right]$$

Then for each $x^i_k$, output is:

- Best $x^j_{k+1}$ to pick, because it gives lowest cost
- Control input required to achieve this best cost.

Then work backwards in time until you reach $x_{t_0}$, when only one value of x is allowed because of the given initial condition.

Other Considerations

With bounds on the control, certain state transitions might not be allowed from 1 time-step to the next.

With constraints on the state, certain values of x(t) might not be allowed at certain times t.

Extends to free end time problems, where

$$\min J = h(x(t_f), t_f) + \int_{t_0}^{t_f} g(x(t), u(t), t)\, dt$$

with some additional constraint on the final state $m(x(t_f), t_f) = 0$.

- Gives group of points that (approximately) satisfy the terminal constraint
- Can evaluate cost for each, and work backwards from there.


Process extends to higher dimensional problems where the state is a vector.

Just have to define a grid of points in x and t, which for two dimensions would look like:

Figure by MIT OpenCourseWare.

Figure 3.2: At any time $t_k$, have a two dimensional array of grid points.

Previous formulation picked x's and used those to determine the u's.

For more general problems, might be better off picking the u's and using those to determine the propagated x's

$$J^\star(x^i_k, t_k) = \min_{u^{ij}_k} \left[ \Delta J(x^i_k, u^{ij}_k) + J^\star(x^j_{k+1}, t_{k+1}) \right] = \min_{u^{ij}_k} \left[ g(x^i_k, u^{ij}_k, t_k)\,\Delta t + J^\star(x^j_{k+1}, t_{k+1}) \right]$$

To do this, must quantize the control inputs as well.

But then likely that terminal points from one time step to the next will not lie on the state discrete points ⇒ must interpolate the cost to go between them.


Option 1: find the control that moves the state from a point on one grid to a point on another.

Option 2: quantize the control inputs, and then evaluate the resulting state for all possible inputs

$$x^j_{k+1} = x^i_k + a(x^i_k, u^{ij}_k, t_k)\,\Delta t$$

Issue at that point is that $x^j_{k+1}$ probably will not agree with the $t_{k+1}$ grid points ⇒ must interpolate the available $J^\star$.

See, for example, R. E. Larson, "A survey of dynamic programming computational procedures", IEEE TAC, Dec 1967 (on web), or section 3.6 in Kirk.

Do this for all admissible $u^{ij}_k$ and resulting $x^j_{k+1}$, and then take

$$J^\star(x^i_k, t_k) = \min_{u^{ij}_k} J(x^i_k, u^{ij}_k, t_k)$$

Main problem with dynamic programming is how badly it scales.

Given $N_t$ points in time and $N_x$ quantized states of dimension n, the number of points to consider is $N = N_t N_x^n$

"Curse of Dimensionality": R. Bellman, Dynamic Programming (1957), now from Dover.
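A minimal sketch of Option 2 for a scalar state: propagate each quantized control forward, then linearly interpolate the stored cost-to-go at the (generally off-grid) landing point. The helper names, the integrator model $\dot{x} = u$, the running cost $2u^2$, and the step $\Delta t = 0.5$ are all assumptions chosen only so that the propagated states fall between grid points.

```python
def interp(xq, xs, ys):
    """Piecewise-linear interpolation of the samples (xs, ys) at xq."""
    for x0, x1, y0, y1 in zip(xs, xs[1:], ys, ys[1:]):
        if x0 <= xq <= x1:
            return y0 + (y1 - y0) * (xq - x0) / (x1 - x0)
    raise ValueError("query point lies outside the grid")

def backward_step(x_grid, u_grid, J_next, a, g, t, dt):
    """One backward DP step: best cost and control at every grid state."""
    J_now, u_best = [], []
    for x in x_grid:
        candidates = []
        for u in u_grid:
            x_next = x + a(x, u, t) * dt            # Euler propagation
            if x_grid[0] <= x_next <= x_grid[-1]:   # enforce the state bounds
                cost = g(x, u, t) * dt + interp(x_next, x_grid, J_next)
                candidates.append((cost, u))
        cost, u = min(candidates)
        J_now.append(cost)
        u_best.append(u)
    return J_now, u_best

x_grid = [0.0, 0.5, 1.0, 1.5]
u_grid = [-1.0, -0.5, 0.0, 0.5, 1.0]
J_next = [x ** 2 for x in x_grid]       # assumed terminal cost x^2
J_now, u_best = backward_step(
    x_grid, u_grid, J_next,
    a=lambda x, u, t: u,                # assumed model: xdot = u
    g=lambda x, u, t: 2.0 * u ** 2,     # assumed running cost
    t=0.0, dt=0.5,
)
print(J_now, u_best)
```

Linear interpolation is the simplest choice; the Larson survey cited above discusses alternatives.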

DP Example

See Kirk pg. 59:

$$J = x^2(T) + \lambda \int_0^T u^2(t)\, dt$$

with $\dot{x} = ax + u$, where $0 \le x \le 1.5$ and $-1 \le u \le 1$

Must quantize the state within the allowable values and time within the range $t \in [0, 2]$ using N = 2, $\Delta t = T/N = 1$.

Approximate the continuous system as:

$$\dot{x} \approx \frac{x(t + \Delta t) - x(t)}{\Delta t} = a x(t) + u(t)$$

which gives that

$$x_{k+1} = (1 + a\Delta t)\,x_k + (\Delta t)\,u_k$$

Very common discretization process (Euler integration approximation) that works well if $\Delta t$ is small.

Use approximate calculation from previous section; cost becomes

$$J = x^2(T) + \lambda \sum_{k=0}^{N-1} u_k^2\, \Delta t$$

Take $\lambda = 2$ and a = 0 to simplify things a bit.

With $0 \le x(k) \le 1.5$, take x quantized into four possible values $x_k \in \{0, 0.5, 1.0, 1.5\}$

With control bounded $|u(k)| \le 1$, assume it is quantized into five possible values: $u_k \in \{-1, -0.5, 0, 0.5, 1\}$


Start: evaluate cost associated with all possible terminal states

| $x_2^j$ | $J_2^\star = h(x_2^j) = (x_2^j)^2$ |
|---|---|
| 0 | 0 |
| 0.5 | 0.25 |
| 1 | 1 |
| 1.5 | 2.25 |

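Given $x_1$ and possible $x_2$, can evaluate the control effort u(1) required to make that transition (rows: $x_1^i$; columns: $x_2^j = x_1^i + u(1)$):

| $x_1^i$ | $x_2^j = 0$ | $x_2^j = 0.5$ | $x_2^j = 1$ | $x_2^j = 1.5$ |
|---|---|---|---|---|
| 0 | 0 | 0.5 | 1 | 1.5 |
| 0.5 | -0.5 | 0 | 0.5 | 1 |
| 1 | -1 | -0.5 | 0 | 0.5 |
| 1.5 | -1.5 | -1 | -0.5 | 0 |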

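which can be used to compute the cost increments $\Delta J_{12}^{ij}$ (XX marks a transition that violates the control bound):

| $x_1^i$ | $x_2^j = 0$ | $x_2^j = 0.5$ | $x_2^j = 1$ | $x_2^j = 1.5$ |
|---|---|---|---|---|
| 0 | 0 | 0.5 | 2 | XX |
| 0.5 | 0.5 | 0 | 0.5 | 2 |
| 1 | 2 | 0.5 | 0 | 0.5 |
| 1.5 | XX | 2 | 0.5 | 0 |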

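and costs at time t = 1 given by $J_1 = \Delta J_{12}^{ij} + J_2^\star(x_2^j)$:

| $x_1^i$ | $x_2^j = 0$ | $x_2^j = 0.5$ | $x_2^j = 1$ | $x_2^j = 1.5$ |
|---|---|---|---|---|
| 0 | 0 | 0.75 | 3 | XX |
| 0.5 | 0.5 | 0.25 | 1.5 | 4.25 |
| 1 | 2 | 0.75 | 1 | 2.75 |
| 1.5 | XX | 2.25 | 1.5 | 2.25 |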


Take min across each row to determine best action at each possible $x_1$ ⇒ $J_1^\star(x_1^j)$

| $x_1^i$ | best $x_2^j$ |
|---|---|
| 0 | 0 |
| 0.5 | 0.5 |
| 1 | 0.5 |
| 1.5 | 1 |

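Can repeat the process to find the costs at time t = 0, which are $J_0 = \Delta J_{01}^{ij} + J_1^\star(x_1^j)$:

| $x_0^i$ | $x_1^j = 0$ | $x_1^j = 0.5$ | $x_1^j = 1$ | $x_1^j = 1.5$ |
|---|---|---|---|---|
| 0 | 0 | 0.75 | 2.75 | XX |
| 0.5 | 0.5 | 0.25 | 1.25 | 3.5 |
| 1 | 2 | 0.75 | 0.75 | 2 |
| 1.5 | XX | 2.25 | 1.25 | 1.5 |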

and again, taking min across the rows gives the best actions:

| $x_0^i$ | best $x_1^j$ |
|---|---|
| 0 | 0 |
| 0.5 | 0.5 |
| 1 | 0.5 |
| 1.5 | 1 |

So now we have a complete strategy for how to get from any $x_0^i$ to the best $x_2$ to minimize the cost.

This process can be highly automated, and this clumsy presentation is typically not needed.
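As a rough illustration of that automation, the tables above can be regenerated in a few lines. This sketch hard-codes the example's values ($\lambda = 2$, $a = 0$, $\Delta t = 1$, N = 2) and uses the state-to-state formulation; the function and variable names are assumptions for illustration only.

```python
LAMBDA, DT, N = 2.0, 1.0, 2
X_GRID = [0.0, 0.5, 1.0, 1.5]
U_MAX = 1.0

def solve():
    """Return (costs, policy): costs[k][i] = J_k*(x^i), policy[k][i] = best next state."""
    costs = [[x ** 2 for x in X_GRID]]      # terminal cost J_2* = x^2
    policy = []
    for _ in range(N):
        J_next = costs[0]
        J_now, best_next = [], []
        for xi in X_GRID:
            options = []
            for j, xj in enumerate(X_GRID):
                u = (xj - xi) / DT          # with a = 0: x_{k+1} = x_k + u * dt
                if abs(u) <= U_MAX:         # skip the "XX" transitions
                    options.append((LAMBDA * u ** 2 * DT + J_next[j], j))
            cost, j = min(options)          # ties go to the smaller next state
            J_now.append(cost)
            best_next.append(X_GRID[j])
        costs.insert(0, J_now)
        policy.insert(0, best_next)
    return costs, policy

costs, policy = solve()
print("J0* =", costs[0], "best x1 =", policy[0])
print("J1* =", costs[1], "best x2 =", policy[1])
```

The row minima match the tables, including the tie at $x_0 = 1$, where moving to 0.5 or staying at 1 both cost 0.75.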

Discrete LQR

For most cases, dynamic programming must be solved numerically, which is often quite challenging.

A few cases can be solved analytically; discrete LQR (linear quadratic regulator) is one of them.

Goal: select control inputs to minimize

$$J = \frac{1}{2} x_N^T H x_N + \frac{1}{2} \sum_{k=0}^{N-1} \left[ x_k^T Q_k x_k + u_k^T R_k u_k \right]$$

so that

$$g_d(x_k, u_k) = \frac{1}{2} \left( x_k^T Q_k x_k + u_k^T R_k u_k \right)$$

subject to the dynamics

$$x_{k+1} = A_k x_k + B_k u_k$$

Assume that $H = H^T \ge 0$, $Q = Q^T \ge 0$, and $R = R^T > 0$.

Including any other constraints greatly complicates the problem.

Clearly $J_N^\star[x_N] = \frac{1}{2} x_N^T H x_N$; now need to find $J_{N-1}^\star[x_{N-1}]$

$$J_{N-1}^\star[x_{N-1}] = \min_{u_{N-1}} \left\{ g_d(x_{N-1}, u_{N-1}) + J_N^\star[x_N] \right\} = \min_{u_{N-1}} \frac{1}{2} \left[ x_{N-1}^T Q_{N-1} x_{N-1} + u_{N-1}^T R_{N-1} u_{N-1} + x_N^T H x_N \right]$$

Note that $x_N = A_{N-1} x_{N-1} + B_{N-1} u_{N-1}$, so that

$$J_{N-1}^\star[x_{N-1}] = \min_{u_{N-1}} \frac{1}{2} \left[ x_{N-1}^T Q_{N-1} x_{N-1} + u_{N-1}^T R_{N-1} u_{N-1} + \{A_{N-1} x_{N-1} + B_{N-1} u_{N-1}\}^T H \{A_{N-1} x_{N-1} + B_{N-1} u_{N-1}\} \right]$$


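Take derivative with respect to the control inputs

$$\frac{\partial J_{N-1}[x_{N-1}]}{\partial u_{N-1}} = u_{N-1}^T R_{N-1} + \{A_{N-1} x_{N-1} + B_{N-1} u_{N-1}\}^T H B_{N-1}$$

Take transpose and set equal to 0, yields

$$\left[ R_{N-1} + B_{N-1}^T H B_{N-1} \right] u_{N-1} + B_{N-1}^T H A_{N-1} x_{N-1} = 0$$

Which suggests a couple of key things:

The best control action at time N − 1 is a linear state feedback on the state at time N − 1:

$$u_{N-1}^\star = -\left[ R_{N-1} + B_{N-1}^T H B_{N-1} \right]^{-1} B_{N-1}^T H A_{N-1} x_{N-1} \equiv -F_{N-1} x_{N-1}$$

Furthermore, can show that

$$\frac{\partial^2 J_{N-1}[x_{N-1}]}{\partial u_{N-1}^2} = R_{N-1} + B_{N-1}^T H B_{N-1} > 0$$

so that the stationary point is a minimum.

With this control decision, take another look at

$$J_{N-1}^\star[x_{N-1}] = \frac{1}{2} x_{N-1}^T \left\{ Q_{N-1} + F_{N-1}^T R_{N-1} F_{N-1} + \{A_{N-1} - B_{N-1} F_{N-1}\}^T H \{A_{N-1} - B_{N-1} F_{N-1}\} \right\} x_{N-1} \equiv \frac{1}{2} x_{N-1}^T P_{N-1} x_{N-1}$$

Note that $P_N = H$, which suggests a convenient form for gain F:

$$F_{N-1} = \left[ R_{N-1} + B_{N-1}^T P_N B_{N-1} \right]^{-1} B_{N-1}^T P_N A_{N-1} \tag{3.20}$$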


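Now can continue using induction: assume that at time k the control will be of the form $u_k^\star = -F_k x_k$ where

$$F_k = \left[ R_k + B_k^T P_{k+1} B_k \right]^{-1} B_k^T P_{k+1} A_k$$

and $J_k^\star[x_k] = \frac{1}{2} x_k^T P_k x_k$ where

$$P_k = Q_k + F_k^T R_k F_k + \{A_k - B_k F_k\}^T P_{k+1} \{A_k - B_k F_k\}$$

Recall that both equations are solved backwards from k + 1 to k.

Now consider time k − 1, with

$$J_{k-1}^\star[x_{k-1}] = \min_{u_{k-1}} \left\{ \frac{1}{2} \left[ x_{k-1}^T Q_{k-1} x_{k-1} + u_{k-1}^T R_{k-1} u_{k-1} \right] + J_k^\star[x_k] \right\}$$

Taking derivative with respect to $u_{k-1}$ gives

$$\frac{\partial J_{k-1}[x_{k-1}]}{\partial u_{k-1}} = u_{k-1}^T R_{k-1} + \{A_{k-1} x_{k-1} + B_{k-1} u_{k-1}\}^T P_k B_{k-1}$$

so that the best control input is

$$u_{k-1}^\star = -\left[ R_{k-1} + B_{k-1}^T P_k B_{k-1} \right]^{-1} B_{k-1}^T P_k A_{k-1} x_{k-1} = -F_{k-1} x_{k-1}$$

Substitute this control into the expression for $J_{k-1}[x_{k-1}]$ to show that

$$J_{k-1}^\star[x_{k-1}] = \frac{1}{2} x_{k-1}^T P_{k-1} x_{k-1}$$

and

$$P_{k-1} = Q_{k-1} + F_{k-1}^T R_{k-1} F_{k-1} + \{A_{k-1} - B_{k-1} F_{k-1}\}^T P_k \{A_{k-1} - B_{k-1} F_{k-1}\}$$

Thus the same properties hold at time k − 1 and k, and N and N − 1 in particular, so they will always be true.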

Algorithm

Can summarize the above in the algorithm:

(i) $P_N = H$

(ii) $F_k = \left[ R_k + B_k^T P_{k+1} B_k \right]^{-1} B_k^T P_{k+1} A_k$

(iii) $P_k = Q_k + F_k^T R_k F_k + \{A_k - B_k F_k\}^T P_{k+1} \{A_k - B_k F_k\}$

cycle through steps (ii) and (iii) from N − 1 → 0.

Notes:

The result is a control schedule that is time varying, even if A, B, Q, and R are constant.

Clear that $P_k$ and $F_k$ are independent of the state and can be computed ahead of time, off-line.

Possible to eliminate the $F_k$ part of the cycle, and just cycle through $P_k$

$$P_k = Q_k + A_k^T \left\{ P_{k+1} - P_{k+1} B_k \left[ R_k + B_k^T P_{k+1} B_k \right]^{-1} B_k^T P_{k+1} \right\} A_k$$

Initial assumption $R_k > 0\ \forall k$ can be relaxed, but we must ensure that $R_{k+1} + B_k^T Q_{k+1} B_k > 0$. [2]

In the expression:

$$J^\star(x^i_k, t_k) = \min_{u^{ij}_k} \left[ g(x^i_k, u^{ij}_k, t_k)\,\Delta t + J^\star(x^j_{k+1}, t_{k+1}) \right]$$

the term $J^\star(x^j_{k+1}, t_{k+1})$ plays the role of a "cost-to-go", which is a key concept in DP and other control problems.

[2] Anderson and Moore, Optimal Control: Linear Quadratic Methods, pg. 30
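Steps (i) to (iii) can be sketched for the scalar, time-invariant case, where the matrix inverse reduces to a division. This is an illustrative pure-Python sketch rather than a general matrix implementation; the function names are hypothetical, and the numbers A = B = 1, Q = 1, R = 2, H = 0.25, N = 10 are borrowed from the integ.m listing later in these notes.

```python
def lqr_schedule(A, B, Q, R, H, N):
    """Backward recursion for scalar gains F[0..N-1] and cost weights P[0..N]."""
    P = [0.0] * (N + 1)
    F = [0.0] * N
    P[N] = H                                          # step (i)
    for k in range(N - 1, -1, -1):
        F[k] = B * P[k + 1] * A / (R + B * P[k + 1] * B)   # step (ii)
        Acl = A - B * F[k]                                 # closed-loop dynamics
        P[k] = Q + F[k] * R * F[k] + Acl * P[k + 1] * Acl  # step (iii)
    return P, F

def riccati_step(A, B, Q, R, P_next):
    """The F-free form of the recursion, for cross-checking."""
    return Q + A * (P_next - P_next * B * B * P_next / (R + B * P_next * B)) * A

P, F = lqr_schedule(A=1.0, B=1.0, Q=1.0, R=2.0, H=0.25, N=10)
for k in range(10):
    print(k, round(F[k], 6), round(P[k], 6))
```

Even with constant A, B, Q, R the gains differ near the final time, which is the time-varying schedule mentioned in the notes; both forms of the recursion give the same $P_k$ up to rounding.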

Suboptimal Control

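The optimal initial cost is $J_0^\star[x_0] = \frac{1}{2} x_0^T P_0 x_0$. One question: how would the cost of a different controller strategy compare?

$$u_k = -G_k x_k$$

Can substitute this controller into the cost function and compute

$$J = \frac{1}{2} x_N^T H x_N + \frac{1}{2} \sum_{k=0}^{N-1} \left[ x_k^T Q_k x_k + u_k^T R_k u_k \right] \quad\Rightarrow\quad J_G = \frac{1}{2} x_N^T H x_N + \frac{1}{2} \sum_{k=0}^{N-1} x_k^T \left[ Q_k + G_k^T R_k G_k \right] x_k$$

where

$$x_{k+1} = A_k x_k + B_k u_k = (A_k - B_k G_k)\, x_k$$

Note that:

$$\frac{1}{2} \sum_{k=0}^{N-1} \left[ x_{k+1}^T S_{k+1} x_{k+1} - x_k^T S_k x_k \right] = \frac{1}{2} \left[ x_N^T S_N x_N - x_0^T S_0 x_0 \right]$$

So can rearrange the cost function as

$$J_G = \frac{1}{2} x_N^T H x_N + \frac{1}{2} \sum_{k=0}^{N-1} \left[ x_k^T \left[ Q_k + G_k^T R_k G_k - S_k \right] x_k + x_{k+1}^T S_{k+1} x_{k+1} \right] - \frac{1}{2} \left[ x_N^T S_N x_N - x_0^T S_0 x_0 \right]$$

Now substitute for $x_{k+1} = (A_k - B_k G_k) x_k$, and define $S_k$ so that

$$S_N = H, \qquad S_k = Q_k + G_k^T R_k G_k + \{A_k - B_k G_k\}^T S_{k+1} \{A_k - B_k G_k\}$$

which is another recursion, that gives

$$J_G = \frac{1}{2} x_0^T S_0 x_0$$

So that for a given $x_0$, we can compare $P_0$ and $S_0$ to evaluate the extent to which the controller is suboptimal.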
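A quick numerical check of this result, again as a scalar sketch with assumed numbers (A = B = 1, Q = 1, R = 2, H = 0.25, N = 10, and a constant gain G = 0.25): the $S_k$ recursion should reproduce the cost obtained by simulating the closed loop and summing the stage costs directly, and $S_0$ should be no smaller than $P_0$. The function names are hypothetical.

```python
A, B, Q, R, H, N = 1.0, 1.0, 1.0, 2.0, 0.25, 10

def cost_weight_for_gain(G):
    """Run S_N = H, S_k = Q + G R G + (A - B G) S_{k+1} (A - B G) back to k = 0."""
    S = H
    for _ in range(N):
        S = Q + G * R * G + (A - B * G) * S * (A - B * G)
    return S

def optimal_cost_weight():
    """Run the optimal P recursion back to k = 0 for comparison."""
    P = H
    for _ in range(N):
        F = B * P * A / (R + B * P * B)
        P = Q + F * R * F + (A - B * F) * P * (A - B * F)
    return P

def simulated_cost(G, x0):
    """Sum the stage costs along the closed-loop trajectory with u = -G x."""
    x, J = x0, 0.0
    for _ in range(N):
        u = -G * x
        J += 0.5 * (Q * x * x + R * u * u)
        x = A * x + B * u
    return J + 0.5 * H * x * x

S0, P0 = cost_weight_for_gain(0.25), optimal_cost_weight()
print("S0 =", S0, "P0 =", P0, "simulated J_G =", simulated_cost(0.25, 1.0))
```

The gap $S_0 - P_0$ is the quantity annotated on the plots produced by the MATLAB listing.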

Steady State

Assume [3]:

- Time invariant problem (LTI), i.e., A, B, Q, R are constant
- System [A, B] stabilizable: uncontrollable modes are stable.

For any H, then as N → ∞, the recursion for P tends to a constant solution with $P_{ss} \ge 0$ that is bounded and satisfies (set $P_k \equiv P_{k+1}$)

$$P_{ss} = Q + A^T \left\{ P_{ss} - P_{ss} B \left[ R + B^T P_{ss} B \right]^{-1} B^T P_{ss} \right\} A \tag{3.21}$$

- Discrete form of the famous Algebraic Riccati Equation
- Typically hard to solve analytically, but easy to solve numerically.
- Can be many PSD solutions of (3.21); recursive solution will be one.

Let $Q = C^T C \ge 0$, which is equivalent to having cost measurements z = Cx and state penalty $z^T z = x^T C^T C x = x^T Q x$. If [A, C] detectable, then:

- Independent of H, recursion for P has a unique steady state solution $P_{ss} \ge 0$ that is the unique PSD solution of (3.21).
- The associated steady state gain is $F_{ss} = \left[ R + B^T P_{ss} B \right]^{-1} B^T P_{ss} A$ and using $F_{ss}$, the closed-loop system $x_{k+1} = (A - B F_{ss}) x_k$ is asymptotically stable, i.e., $|\lambda(A - B F_{ss})| < 1$
- Detectability required to ensure that all unstable modes penalized in state cost.

If, in addition, [A, C] observable [4], then there is a unique $P_{ss} > 0$.

[3] See Lewis and Syrmos, Optimal Control, Thm 2.4-2 and Kwakernaak and Sivan, Linear Optimal Control Systems, Thm 6.31

[4] Guaranteed if Q > 0
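One simple way to solve (3.21) numerically is to keep iterating the recursion until it stops changing. The scalar sketch below does that for assumed values A = B = 1, Q = 1, R = 2, for which (3.21) reduces to $P^2 - P - 2 = 0$ and so $P_{ss} = 2$, $F_{ss} = 0.5$; the function name, tolerance, and iteration cap are hypothetical choices.

```python
def steady_state(A, B, Q, R, H, tol=1e-12, max_iter=10_000):
    """Iterate the scalar Riccati recursion from P = H until it converges."""
    P = H
    for _ in range(max_iter):
        P_new = Q + A * (P - P * B * B * P / (R + B * P * B)) * A
        if abs(P_new - P) < tol:
            return P_new
        P = P_new
    raise RuntimeError("Riccati recursion did not converge")

A, B, Q, R = 1.0, 1.0, 1.0, 2.0
for H in (0.0, 0.25, 100.0):
    Pss = steady_state(A, B, Q, R, H)
    Fss = B * Pss * A / (R + B * Pss * B)
    # Closed-loop pole magnitude must be below 1 for asymptotic stability.
    print(H, Pss, Fss, abs(A - B * Fss))
```

All three terminal weights lead to the same $P_{ss}$, consistent with the statement that the steady state is independent of H.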

Discrete LQR Example

Integrator scalar system $\dot{x} = u$, which gives

$$x_{k+1} = x_k + u_k \Delta t$$

so that A = 1, B = Δt = 1 and

$$J = \frac{1}{4} x(N)^2 + \frac{1}{2} \sum_{k=0}^{N-1} \left[ x_k^2 + u_k^2 \right]$$

so that N = 10, Q = R = 1, H = 1/2 (numbers in code/figures might differ)

Note that this is a discrete system, and the rules for stability are different: need $|\lambda_i(A - BF)| < 1$.

Open loop system is marginally stable, and a gain 1 > F > 0 will stabilize the system.

Figure 3.3: discrete LQR comparison to constant gain, G = 0.25

Plot shows discrete LQR results: clear that the $P_k$ settles to a constant value very quickly.

Rate of reaching steady state depends on Q/R. For Q/R large, reaches steady state quickly.

(Very) Suboptimal F gives an obviously worse cost


But a reasonable choice of a constant F in this case gives nearly

optimal results.

Figure 3.4: discrete LQR comparison to constant gain, G = F (0)

Figure 3.5: State response comparison

State response consistent

Gain Insights

Note that from Eq. 3.20 we know that

$$F_{N-1} = \left[ R_{N-1} + B_{N-1}^T P_N B_{N-1} \right]^{-1} B_{N-1}^T P_N A_{N-1}$$

which for the scalar case reduces to

$$F_{N-1} = \frac{B_{N-1} P_N A_{N-1}}{R_{N-1} + B_{N-1}^2 P_N}$$

So if there is a high weighting on the terminal state, then H → ∞ and $P_N$ is large. Thus

$$F_{N-1} \to \frac{B_{N-1} P_N A_{N-1}}{B_{N-1}^2 P_N} \to \frac{A}{B}$$

and

$$x_N = (A - BF)\, x_{N-1} = \left( A - B \frac{A}{B} \right) x_{N-1} = 0$$

regardless of the value of $x_{N-1}$. This is a nilpotent controller.

If control penalty set very small, so that R → 0 (Q/R large), then

$$F_{N-1} \to \frac{B_{N-1} P_N A_{N-1}}{B_{N-1}^2 P_N} \to \frac{A}{B}$$

and $x_N = 0$ as well.

State penalized, but control isn't, so controller will exert as much effort as necessary to make x small.

In fact, this will typically make x(1) = 0 regardless of x(0) if there are no limits on the control effort.
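Both limits are easy to see numerically from the scalar gain formula. The values A = 2 and B = 0.5 below are arbitrary assumptions (so that A/B = 4), and `last_step_gain` is a hypothetical helper name.

```python
def last_step_gain(A, B, R, P_N):
    """Scalar form of Eq. 3.20: F_{N-1} = B P_N A / (R + B^2 P_N)."""
    return B * P_N * A / (R + B * B * P_N)

A, B = 2.0, 0.5   # assumed values, A / B = 4

# Large terminal weight H = P_N with R fixed at 1.
for H in (1.0, 1e3, 1e6, 1e9):
    F = last_step_gain(A, B, R=1.0, P_N=H)
    print("H =", H, "F =", F, "A - B*F =", A - B * F)

# Vanishing control penalty R with P_N fixed at 1.
for R in (1.0, 1e-3, 1e-6, 1e-9):
    F = last_step_gain(A, B, R=R, P_N=1.0)
    print("R =", R, "F =", F, "A - B*F =", A - B * F)
```

In both sweeps F approaches A/B and the closed-loop factor A − BF approaches zero, so the state is driven to the origin in one step.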

Discrete scalar LQR

% 16.323 Spring 2008
% Jonathan How
% integ.m: integrator system
clear all
close all
%A=1;B=1;Q=1;R=1;H=0.5;N=5;
A=1;B=1;Q=1;R=2;H=.25;N=10;

% backward recursion for the optimal gain F and cost weight P
P(N+1)=H; % shift indices to avoid index of 0
for j=N-1:-1:0
    i=j+1; % shift indices to avoid index of 0
    F(i)=inv(R+B*P(i+1)*B)*B*P(i+1)*A;
    P(i)=(A-B*F(i))*P(i+1)*(A-B*F(i))+F(i)*R*F(i)+Q;
end

% what if we used a fixed gain of F(0), which stabilizes
S(N+1)=H; % shift indices to avoid index of 0
for j=N-1:-1:0
    i=j+1; % shift indices to avoid index of 0
    G(i)=F(1);
    S(i)=(A-B*G(i))*S(i+1)*(A-B*G(i))+G(i)*R*G(i)+Q;
end

time=[0:1:N];
figure(1);clf
plot(time,P,'ks','MarkerSize',12,'MarkerFaceColor','k')
hold on
plot(time,S,'rd','MarkerSize',12,'MarkerFaceColor','r')
plot(time(1:N),F,'bo','MarkerSize',12,'MarkerFaceColor','b')
hold off
legend('Optimal P','Suboptimal S with G=F(0)','Optimal F','Location','SouthWest')
xlabel('Time')
ylabel('P/S/F')
text(2,1,['S(0)-P(0) = ',num2str(S(1)-P(1))])
axis([-.1 N -1 max(max(P),max(S))+.5] )
print -dpng -r300 integ.png

% what if we used a fixed gain of G=0.25, which stabilizes
S(N+1)=H; % shift indices to avoid index of 0
for j=N-1:-1:0
    i=j+1; % shift indices to avoid index of 0
    G(i)=.25;
    S(i)=(A-B*G(i))*S(i+1)*(A-B*G(i))+G(i)*R*G(i)+Q;
end

figure(2)
%plot(time,P,'ks',time,S,'rd',time(1:N),F,'bo','MarkerSize',12)
plot(time,P,'ks','MarkerSize',12,'MarkerFaceColor','k')
hold on
plot(time,S,'rd','MarkerSize',12,'MarkerFaceColor','r')
plot(time(1:N),F,'bo','MarkerSize',12,'MarkerFaceColor','b')
hold off
legend('Optimal P','Suboptimal S with G=0.25','Optimal F','Location','SouthWest')
text(2,1,['S(0)-P(0) = ',num2str(S(1)-P(1))])
axis([-.1 N -1 max(max(P),max(S))+.5] )
ylabel('P/S/F')
xlabel('Time')
print -dpng -r300 integ2

% state response: optimal gains, fixed gain F(0), and fixed gain G=0.25
x0=1;xo=x0;xs1=x0;xs2=x0;
for j=0:N-1;
    k=j+1;
    xo(k+1)=(A-B*F(k))*xo(k);
    xs1(k+1)=(A-B*F(1))*xs1(k);
    xs2(k+1)=(A-B*G(1))*xs2(k);
end
figure(3)
plot(time,xo,'bo','MarkerSize',12,'MarkerFaceColor','b')
hold on
plot(time,xs1,'ks','MarkerSize',9,'MarkerFaceColor','k')
plot(time,xs2,'rd','MarkerSize',12,'MarkerFaceColor','r')
hold off
legend('Optimal','Suboptimal with G=F(0)','Suboptimal with G=0.25','Location','North')
%axis([-.1 5 -1 3] )
ylabel('x(t)')
xlabel('Time')
print -dpng -r300 integ3.png;

Appendix

Def: LTI system is controllable if, for every $x^\star(t)$ and every finite T > 0, there exists an input function u(t), 0 < t ≤ T, such that the system state goes from x(0) = 0 to $x(T) = x^\star$.

Starting at 0 is not a special case: if we can get to any state in finite time from the origin, then we can get from any initial condition to that state in finite time as well. [5]

Thm: LTI system is controllable iff it has no uncontrollable states.

Necessary and sufficient condition for controllability is that

$$\operatorname{rank} M_c \triangleq \operatorname{rank} \begin{bmatrix} B & AB & A^2 B & \cdots & A^{n-1} B \end{bmatrix} = n$$

Def: LTI system is observable if the initial state x(0) can be uniquely deduced from the knowledge of the input u(t) and output y(t) for all t between 0 and any finite T > 0.

If x(0) can be deduced, then we can reconstruct x(t) exactly because we know u(t) ⇒ we can find x(t) ∀t.

Thm: LTI system is observable iff it has no unobservable states.

We normally just say that the pair (A, C) is observable.

Necessary and sufficient condition for observability is that

$$\operatorname{rank} M_o \triangleq \operatorname{rank} \begin{bmatrix} C \\ CA \\ CA^2 \\ \vdots \\ CA^{n-1} \end{bmatrix} = n$$

[5] This controllability from the origin is often called reachability.
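The two rank tests can be sketched without any numerical library by building $M_c$ and $M_o$ from nested lists and counting pivots with exact rational arithmetic. The helper names and the two example systems (a discrete double integrator and a decoupled system with an unactuated mode) are assumptions for illustration.

```python
from fractions import Fraction

def matmul(X, Y):
    """Product of two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]

def rank(M):
    """Rank via Gaussian elimination in exact rational arithmetic."""
    M = [[Fraction(v) for v in row] for row in M]
    r = 0
    for c in range(len(M[0])):
        pivot = next((i for i in range(r, len(M)) if M[i][c] != 0), None)
        if pivot is None:
            continue
        M[r], M[pivot] = M[pivot], M[r]
        for i in range(r + 1, len(M)):
            f = M[i][c] / M[r][c]
            M[i] = [a - f * b for a, b in zip(M[i], M[r])]
        r += 1
        if r == len(M):
            break
    return r

def controllability_matrix(A, B):
    """[B, AB, ..., A^(n-1) B], blocks stacked side by side."""
    n, blocks, blk = len(A), [], B
    for _ in range(n):
        blocks.append(blk)
        blk = matmul(A, blk)
    return [sum((b[i] for b in blocks), []) for i in range(n)]

def observability_matrix(A, C):
    """[C; CA; ...; C A^(n-1)], blocks stacked vertically."""
    n, rows, blk = len(A), [], C
    for _ in range(n):
        rows.extend(blk)
        blk = matmul(blk, A)
    return rows

A = [[1, 1], [0, 1]]          # assumed example: discrete double integrator
B = [[0], [1]]
C = [[1, 0]]
print(rank(controllability_matrix(A, B)), rank(observability_matrix(A, C)))

A2 = [[1, 0], [0, 2]]         # assumed example: second mode not actuated
B2 = [[1], [0]]
print(rank(controllability_matrix(A2, B2)))
```

The first system has full rank in both tests (n = 2); the second has a rank-deficient $M_c$, so it is not controllable.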


- Advanced TransportpropulsionsDocumento11 páginasAdvanced TransportpropulsionsGaddam SubramanyamAinda não há avaliações

- UNIT III TheoryDocumento6 páginasUNIT III TheoryRanchuAinda não há avaliações

- DHL Glo Freight Iso9001Documento31 páginasDHL Glo Freight Iso9001Passwortistsehrgeheim123Ainda não há avaliações

- Korelasi Antara Fixation Eye Tracking Metric Dengan: Performance Measurement Usability TestingDocumento10 páginasKorelasi Antara Fixation Eye Tracking Metric Dengan: Performance Measurement Usability TestingRizaldi IsmayadiAinda não há avaliações

- Topic: Second Language Acquisition Reading: Hummel Ch. 4Documento39 páginasTopic: Second Language Acquisition Reading: Hummel Ch. 4Thanh NguyenAinda não há avaliações

- Sqa IDocumento124 páginasSqa ITrần Thị Mỹ TiênAinda não há avaliações

- Jensen Carlo Ilagan - CPT 311 - PERT CHART ACTIVITYDocumento3 páginasJensen Carlo Ilagan - CPT 311 - PERT CHART ACTIVITYSean DyAinda não há avaliações

- Simulado 5Documento10 páginasSimulado 5Danillo AraujoAinda não há avaliações

- SAD Chapter OneDocumento8 páginasSAD Chapter OneabdallaAinda não há avaliações

- ML Project ProposalDocumento4 páginasML Project ProposalfjmjascqtwtukyxqcaAinda não há avaliações

- Computer Applications in Mining SyllabusDocumento2 páginasComputer Applications in Mining SyllabusMartin JanuaryAinda não há avaliações

- Main PPT2Documento31 páginasMain PPT2Shanthi KishoreAinda não há avaliações

- Zatona MLDocumento4 páginasZatona MLalcinialbob1234Ainda não há avaliações

- Inverted Pendulum NotesDocumento36 páginasInverted Pendulum Notesstephanie-tabe-1593Ainda não há avaliações

- Lecture 3 (CHP 3) ModelsDocumento45 páginasLecture 3 (CHP 3) ModelsRita RanveerAinda não há avaliações

- Dpb6013 HRM - Chapter 3 HRM Planning w1Documento24 páginasDpb6013 HRM - Chapter 3 HRM Planning w1Renese LeeAinda não há avaliações

- Zero Trust NetworkingDocumento10 páginasZero Trust NetworkingFaris AL HashmiAinda não há avaliações

- PID ControlDocumento22 páginasPID ControlJessica RossAinda não há avaliações

- Informatics in Logistics ManagementDocumento26 páginasInformatics in Logistics ManagementNazareth0% (1)

- 5 2021 09 13 Tipe Pemeliharaan Bambang SAPDocumento37 páginas5 2021 09 13 Tipe Pemeliharaan Bambang SAPPandu BryantAinda não há avaliações

- Experiment 1Documento8 páginasExperiment 1Vikram SainiAinda não há avaliações

- Nature and Elements of CommunicationDocumento5 páginasNature and Elements of CommunicationSharmaine Grace Emilio ColladoAinda não há avaliações

- IS 4420 Database Fundamentals Database Development Process Leon ChenDocumento28 páginasIS 4420 Database Fundamentals Database Development Process Leon Chensiddley2Ainda não há avaliações

- Sociolinguistics and Language VariationDocumento5 páginasSociolinguistics and Language VariationThe hope100% (1)