Vehicle detection and counting in traffic video on highway

CHAPTER-1

INTRODUCTION

As vehicle traffic has increased, many problems have appeared, for example traffic accidents, traffic congestion, and traffic-induced air pollution. Traffic congestion has been a particularly challenging problem. It is widely recognized that expanding basic transportation infrastructure, such as more pavement and wider roads, has not relieved city congestion. As a result, many investigators have turned their attention to intelligent transportation systems (ITS), for instance predicting traffic flow by monitoring activity at traffic intersections in order to detect congestion. Understanding traffic flow better relies increasingly on traffic surveillance, which in turn needs better wide-area vehicle detection. Automatically detecting vehicles in video surveillance data is a very challenging problem in computer vision with important practical applications, such as traffic analysis and security. Vehicle detection and counting is important in computing traffic congestion on highways.

The main goal of the vehicle detection and counting in traffic video project is to develop a methodology for automatic vehicle detection and counting on highways. A system has been developed to detect and count moving vehicles efficiently. Intelligent visual surveillance of road vehicles is a key component in developing autonomous intelligent transportation systems. The entropy mask method does not require any prior knowledge of road feature extraction on static images. Vehicles are detected and tracked in surveillance video by segmentation with initial background subtraction, using morphological operators to determine salient regions in a sequence of video frames. Edges are then counted, which shows how many regions are of a particular size; regions whose size matches a car are located and counted as vehicles in the domain of traffic monitoring over highways.

Automatically detecting and tracking vehicles in video surveillance data is a very challenging problem in computer vision with important practical applications, such as traffic analysis and security. Video cameras are a relatively inexpensive surveillance tool, but manually reviewing the large amount of data they generate is often impractical. Thus, algorithms for analyzing video that require little or no human input are a good solution. Video surveillance systems focus on background modeling, moving vehicle classification and tracking. The increasing availability of video sensors and high-performance video processing hardware opens up exciting possibilities for tackling many video understanding problems, among which vehicle tracking and target classification are very important. A vehicle tracking and classification system is one that can categorize moving vehicles and further classify them into various classes.

Dept. of CS&E Page 1

Traffic management and information systems depend mainly on sensors for estimating traffic parameters. In addition to vehicle counts, a much larger set of traffic parameters, such as vehicle classifications and lane changes, can be computed. Vehicle detection and counting uses a single camera, usually mounted on a pole or other tall structure, looking down on the traffic scene. The system requires only the camera calibration parameters and the direction of traffic for initialization. Two common themes associated with tracking traffic movement and recognizing accident information from real-time video sequences are:

• The video information must be segmented and turned into vehicles.
• The behavior of these vehicles is monitored (they are tracked) for immediate decision-making purposes.

1.1 Problem Definition

Current approaches to monitoring traffic include manual counting of vehicles and counting vehicles using magnetic loops in the road. The main drawback of these approaches, besides the fact that they are expensive, is that these systems only count.

Existing image processing methods each have limitations: temporal differencing fails to extract the complete shape of a vehicle, feature-based tracking yields a blurred extracted image, the active contour method is very difficult to implement, and region-based tracking is very time consuming. This project therefore uses an adaptive background subtraction method for detection, together with Otsu's method, to overcome these problems.

1.2 Scope of the Project

The main purpose of the vehicle detection and counting method is to track and count vehicles in high-quality video sequences and also to identify the color of each vehicle.

1.3 Objectives

Detection of multiple moving vehicles in a video sequence.

Tracking of the detected vehicles.

Color identification of vehicles.

Counting the total number of vehicles in videos.

1.4 Literature survey

1. Gupte S., Masoud O., Martin R. F. K. and Papanikolopoulos N. P. proposed “Detection and Classification of Vehicles” in March 2002.

The paper presents algorithms for vision-based detection and classification of vehicles in monocular image sequences of traffic scenes recorded by a stationary camera. Processing is done at three levels: raw images, region level, and vehicle level. Vehicles are modeled as rectangular patches with certain dynamic behavior. The proposed method is based on establishing correspondences between regions and vehicles as the vehicles move through the image sequence. Experimental results from highway scenes are provided which demonstrate the effectiveness of the method. The authors also briefly describe an interactive camera calibration tool developed for recovering the camera parameters using features in the image selected by the user.

2. Toufiq P., Ahmed Elgammal and Anurag Mittal proposed “A Framework for Feature Selection for Background Subtraction” in 2006.

Background subtraction is a widely used paradigm to detect moving vehicles in video taken from a static camera and is used for various important applications such as video surveillance and human motion analysis. Various statistical approaches have been proposed for modeling a given scene background. However, there is no theoretical framework for choosing which features to use to model different regions of the scene background. The authors introduce a novel framework for feature selection for background modeling and subtraction. A boosting algorithm, namely RealBoost, is used to choose the best combination of features at each pixel. Given the probability estimates from a pool of features calculated by Kernel Density Estimation (KDE) over a certain time period, the algorithm selects the most useful ones to discriminate foreground vehicles from the scene background. The results show that the proposed framework successfully selects appropriate features for different parts of the image.

CHAPTER-2

SOFTWARE AND HARDWARE REQUIREMENT

SPECIFICATION

The software and hardware requirements for the vehicle detection and counting method are as follows:

2.1 Hardware requirements

Processor : Pentium IV onwards

RAM : 2 GB or higher

Hard Disk Space : 20 GB or higher

Input : Video Sequence

2.2 Software requirements

Operating System : Windows XP or higher

Software : Matlab 7.10 (R2013a)

MATLAB (matrix laboratory) is a numerical computing environment and fourth-generation programming language. MATLAB allows matrix manipulations, plotting of functions and data, implementation of algorithms, creation of user interfaces, and interfacing with programs written in other languages, including C, C++, Java, and Fortran. Image Processing Toolbox provides a comprehensive set of reference-standard algorithms, functions, and apps for image processing, analysis, visualization, and algorithm development. Users can perform image enhancement, image deblurring, feature detection, noise reduction, image segmentation, geometric transformations, and image registration. Many toolbox functions are multithreaded to take advantage of multicore and multiprocessor computers.

Image Processing Toolbox supports a diverse set of image types, including high dynamic range, gigapixel resolution, embedded profile, and tomographic. Visualization functions let users explore an image, examine a region of pixels, adjust the contrast, create contours or histograms, and manipulate regions of interest (ROIs). With toolbox algorithms users can restore degraded images, detect and measure features, analyze shapes and textures, and adjust color balance.

CHAPTER-3

HIGH LEVEL DESIGN

3.1 Design consideration

The high-level design of the project comprises four modules. The architecture is as follows.

3.2 Architecture Design

Figure 3.1 gives an overview of moving vehicle detection in a video sequence. The system makes use of an existing video sequence. The first frame is taken as the reference frame, and the subsequent frames are taken as the input frames. Each input frame is compared with the reference frame and the background is eliminated. If a vehicle is present in the input frame, it is retained. The detected vehicle is then tracked using the adaptive background method and the blob analysis method.

Input frames → Segmentation → Detection → Tracking → Counting

Figure 3.1 Overview of vehicle detection and counting system.

In the adaptive background subtraction algorithm, the first frame is assumed to be the background for the video clips considered. The architecture of the proposed algorithm is shown in Figure 3.1, and the flow of the algorithm for background elimination is shown in Figure 3.2. The video clip is read and converted to frames. In the first stage, the differences between frames FR1 and FR1+j are computed. In the next stage these differences are compared, and in the third stage pixels having the same values in the frame difference are eliminated. The fourth stage is post-processing, executed on the image obtained in the third stage. The fifth stage is vehicle detection and vehicle tuning, and the final stage is vehicle counting.

Input video clip → FR1, FR1+j → Background registration → Image subtraction → Foreground detection → Image segmentation → Vehicle detection, Vehicle tuning → Vehicle counting

Figure 3.2 Architecture of vehicle detection and counting.

3.2.1 Background Registration

A general detection approach is to extract salient regions from the given video clip using a learned background modeling technique. This involves subtracting every image from the background scene: the first frame is assumed to be the initial background, and the resulting difference image is thresholded to determine the foreground image. A vehicle is a group of pixels that move in a coherent manner, either as a lighter region over a darker background or vice versa. Often the vehicle may be of the same color as the background, or some portion of it may be merged with the background, which makes detecting the vehicle difficult. This leads to an erroneous vehicle count.

3.2.2 Foreground Detection

Detection information can be used to refine the vehicle type and also to correct errors caused by occlusions. After the static objects are registered as background, the background image is subtracted from the video frames to obtain the moving foreground vehicles. Post-processing is then performed on the foreground vehicles to reduce noise interference.

3.2.3 Image Segmentation

Image segmentation proceeds in the following steps:

• The first step is the segmentation of vehicle regions of interest. In this step, regions which may contain unknown objects have to be detected.
• The next step focuses on the extraction of suitable features and then the extraction of vehicles. The main purpose of feature extraction is to reduce data by measuring certain features that distinguish the input patterns.
• The final step is classification. It assigns a label to a vehicle based on the information provided by its descriptors. Mathematical morphology operators are investigated for segmentation of a gray-scale image.

3.2.4 Vehicle Tuning

Because of irregular vehicle motion, some noise regions always exist in both the vehicle and background regions. Moreover, the vehicle boundaries are not very smooth, so a post-processing technique is applied to the foreground image. Median filters are used, whose response is based on ordering (ranking) the pixels contained in the image area encompassed by the filter. The final output of the vehicle tuning phase is a binary image of the detected vehicles, termed mask1.
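The ranking-based filter described above can be illustrated with a minimal sketch in plain Python. The 3x3 window, the list-of-rows image representation and the function name median_filter_3x3 are assumptions made for this example; the report does not state the window size it uses.

```python
from statistics import median

def median_filter_3x3(img):
    """Replace each interior pixel by the median of its 3x3 neighbourhood.

    img is a 2-D grayscale image stored as a list of rows (an assumed
    representation). Border pixels are copied unchanged.
    """
    rows, cols = len(img), len(img[0])
    out = [row[:] for row in img]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            # Collect the nine pixels covered by the filter window.
            window = [img[r + dr][c + dc]
                      for dr in (-1, 0, 1) for dc in (-1, 0, 1)]
            # The response is the middle value after ranking the window.
            out[r][c] = median(window)
    return out
```

An isolated bright pixel surrounded by dark pixels is ranked out of the median and disappears, which is why this kind of filter suits the speckle noise left after subtraction.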

3.2.5 Vehicle Counting

The tracked binary image mask1 forms the input image for counting. This image is scanned from top to bottom to detect the presence of vehicles. Two variables are maintained: count, which keeps track of the number of vehicles, and the count register countreg, which contains the information of the registered vehicles. When a vehicle is encountered, it is first checked to see whether it is already registered in the buffer. If the vehicle is not registered, it is assumed to be a new vehicle and count is incremented; otherwise it is treated as part of an already existing vehicle and is ignored. This procedure is applied to the entire image, and the final vehicle count is held in the variable count. A fairly good counting accuracy is achieved, although occlusions sometimes cause two vehicles to be merged and treated as a single entity.

3.3 Modules Specification

The vehicle detection and counting method consists mainly of four modules.

3.3.1 Vehicles Detection

Moving vehicle detection is a basic step in video analysis. It can be used in many areas such as video surveillance, traffic monitoring and people tracking. There are three common motion segmentation techniques: frame difference, entropy mask and the optical flow method. The frame difference method has low computational complexity and is easy to implement, but generally does a poor job of extracting the complete shapes of certain types of moving vehicles.

Adaptive background subtraction uses the current frame and the reference image. Pixels where the difference between the current frame and the reference frame is above the threshold are considered part of a moving vehicle. The optical flow method can detect the moving vehicle even when the camera moves, but it needs more time because of its computational complexity, and it is very sensitive to noise. The motion area usually appears quite noisy in real images, and optical flow estimation involves only local computation, so the optical flow method cannot detect the exact contour of the moving vehicle. From the above, it is clear that the traditional moving vehicle detection methods have some shortcomings:

• Frame difference cannot detect the exact contour of the moving vehicle.
• Optical flow method is sensitive to the noise.
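The contrast between frame difference and background subtraction can be seen in a small sketch. It is a toy illustration only: the helper name motion_mask, the threshold of 30 and the one-row "frames" are assumptions chosen for the example, not values from the project.

```python
def motion_mask(frame, reference, threshold):
    """Mark pixels whose absolute difference from the reference exceeds threshold."""
    return [[1 if abs(p - q) > threshold else 0 for p, q in zip(f_row, r_row)]
            for f_row, r_row in zip(frame, reference)]

# One-row toy scene: background intensity 10, a uniform vehicle of intensity 200
# that is four pixels wide and moves one pixel to the right between frames.
background = [[10, 10, 10, 10, 10, 10, 10, 10]]
previous   = [[10, 10, 200, 200, 200, 200, 10, 10]]
current    = [[10, 10, 10, 200, 200, 200, 200, 10]]

# Frame difference: only the trailing and leading edges change.
frame_diff = motion_mask(current, previous, 30)    # [[0, 0, 1, 0, 0, 0, 1, 0]]

# Background subtraction: the whole vehicle differs from the reference image.
bg_sub = motion_mask(current, background, 30)      # [[0, 0, 0, 1, 1, 1, 1, 0]]
```

Because the interior of a uniformly colored vehicle does not change between consecutive frames, frame difference recovers only its edges, whereas subtraction against a reference background recovers the full extent.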

3.3.2 Vehicle Tracking

Vehicle tracking involves continuously identifying the detected vehicle in the video sequence and is done by marking the boundary around the detected vehicle. Vehicle tracking is a challenging problem. Difficulties can arise from abrupt vehicle motion, changing appearance patterns of the vehicle and the scene, non-rigid vehicle structures, vehicle-to-vehicle and vehicle-to-scene occlusions, and camera motion.

Tracking is usually performed in the context of higher-level applications that require the location and/or shape of the vehicle in every frame. Typically, assumptions are made to constrain the tracking problem in the context of a particular application. There are three key steps in video analysis: detection of interesting moving vehicles, tracking of such vehicles from frame to frame, and analysis of vehicle tracks to recognize their behavior. Therefore, vehicle tracking is pertinent in the tasks of:

• Motion-based recognition, that is, human identification based on gait, automatic vehicle detection.
• Automated surveillance, that is, monitoring a scene to detect suspicious activities or unlikely events.
• Video indexing, that is, automatic annotation and retrieval of the videos in multimedia databases.
• Human-computer interaction, that is, gesture recognition, eye gaze tracking for data input to computers, etc.
• Traffic monitoring, that is, real-time gathering of traffic statistics to direct traffic flow.
• Vehicle navigation, that is, video-based path planning and obstacle avoidance capabilities.

Tracking vehicles can be complex due to:

• Noise in the images.
• Complex vehicle motion.
• The non-rigid or articulated nature of vehicles.
• Partial and full vehicle occlusions.
• Complex vehicle shapes, because different vehicles have different shapes.
• Real-time processing requirements.

Several researchers have proposed features and algorithms for tracking; some of them are:

Edge detection is used to identify the strong changes in image intensity that vehicle boundaries usually generate. An important property of edges is that they are less sensitive to illumination changes than color features. Algorithms that track the boundary of vehicles usually use edges as the representative feature (Bowyer et al., 2001).

Optical flow is a dense field of displacement vectors which defines the translation of each pixel in a region. It is computed using the brightness constraint, which assumes brightness constancy of corresponding pixels in consecutive frames (Horn and Schunck, 1981).

Texture is a measure of the intensity variation of a surface which quantifies properties such as smoothness and regularity. Compared to color, texture requires a processing step to generate the descriptors.

Based on these points and the available data, this project falls in the automated surveillance category, because it works fully automatically and can be applied to automated surveillance situations such as traffic and border monitoring. A new tracking method, called the matrix scan method, is proposed. It has two steps. First, the output image matrix is scanned horizontally: starting at the first x-coordinate value, the pixel values in the corresponding column are summed. The x-coordinate value is then incremented by one and the total pixel value of the next column is calculated. This process is repeated until the last x-coordinate value is reached, so that the total pixel value of each column is known. Each total is compared to a threshold value to determine the x-coordinates where a vehicle starts or ends within the image.

Second, the scan is repeated vertically, calculating the total pixel value of each row and then applying thresholding to determine the y-coordinates where a vehicle starts or ends within the image. The background of the image that contains the vehicle is uniform, as it has already been set to white or black at the end of the first phase.
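The two scans can be sketched as follows. This is a simplified illustration that assumes a binary mask (vehicle pixels 1, background 0) containing a single vehicle; the function names scan_extent and matrix_scan and the default threshold of 0 are hypothetical, not taken from the project code.

```python
def scan_extent(sums, threshold):
    """Return (start, end) indices of the first and last totals above threshold."""
    hits = [i for i, total in enumerate(sums) if total > threshold]
    return (hits[0], hits[-1]) if hits else None

def matrix_scan(mask, threshold=0):
    """Locate one vehicle in a binary mask by thresholding column and row totals.

    Returns (x_start, y_start, x_end, y_end), or None if nothing exceeds
    the threshold. Several vehicles in one image would need the runs of
    above-threshold totals to be split, which this sketch does not do.
    """
    col_sums = [sum(col) for col in zip(*mask)]   # horizontal scan: one total per x
    row_sums = [sum(row) for row in mask]         # vertical scan: one total per y
    x_extent = scan_extent(col_sums, threshold)
    y_extent = scan_extent(row_sums, threshold)
    if x_extent is None or y_extent is None:
        return None
    return x_extent[0], y_extent[0], x_extent[1], y_extent[1]
```

The returned coordinates are the corners of the box that would be drawn around the tracked vehicle.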

3.3.3 Vehicle counting

Steps to count vehicles:

1. Traverse the mask1 image to detect a vehicle.
2. If a vehicle is encountered, check for its registration in countreg.
3. If the vehicle is not registered, increment count and register the vehicle in countreg, labeled with the new count.
4. Repeat steps 1-3 until the traversal is complete.
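The counting steps above can be sketched in plain Python. The report does not say how a vehicle is registered in countreg; this sketch assumes that registration means labeling every 4-connected foreground pixel of the region with the new count (a flood fill), and the function name count_vehicles is hypothetical.

```python
def count_vehicles(mask1):
    """Count connected foreground regions in a binary mask.

    Returns (count, countreg), where countreg holds, for every pixel, the
    number of the vehicle it was registered to (0 for background).
    """
    rows, cols = len(mask1), len(mask1[0])
    countreg = [[0] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):                 # traverse top to bottom
        for c in range(cols):
            if mask1[r][c] and countreg[r][c] == 0:
                count += 1                # unregistered pixel: a new vehicle
                countreg[r][c] = count
                stack = [(r, c)]
                while stack:              # register the rest of the region
                    y, x = stack.pop()
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                        if (0 <= ny < rows and 0 <= nx < cols
                                and mask1[ny][nx] and countreg[ny][nx] == 0):
                            countreg[ny][nx] = count
                            stack.append((ny, nx))
    return count, countreg
```

Under this assumption two vehicles whose masks touch are registered as one region, which reproduces the occlusion error mentioned in the report.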

3.3.4 Color identification

Vehicle color is identified using a standard color model, in which color is identified from the intensity of the thresholded images. Some color spaces can separate chromatic and illumination components, but maintaining a chromatic model regardless of brightness can lead to an unstable model, especially for very bright or dark vehicles, and the conversion also requires computational resources, particularly for large images. The idea of preserving intensity components and saving computational costs leads back to the RGB space. Because moving shadows must be identified, the color model needs to separate chromatic and brightness components.

3.3.5 Data flow diagram for detection modules

Read the input video → Convert video into background frames and foreground frames → Subtraction → Detect the vehicle

Fig 3.1 Data flow diagram for detection of vehicle

As the figure above shows, the video clip is read as input and converted into frames. The difference between the foreground frame and the background frame is then computed. Image subtraction is executed on the frames so that the background is eliminated, leaving only the foreground vehicles, and the detected vehicles are counted. Moving vehicle detection in video analysis can be used in many areas, such as video surveillance, traffic monitoring and vehicle tracking. The common motion segmentation techniques are frame difference and the adaptive background subtraction method.

3.3.6 Data flow diagram for tracking modules

Detected vehicle → Convert into grayscale image → Convert grayscale image into binary image → Image segmentation → Tracking vehicle

Figure 3.2 Data flow diagram for tracking vehicles

The detected vehicle image is converted into a grayscale image, and the grayscale image is converted into a binary image. The binary image is segmented into vehicle regions of interest; in this step, regions that may contain unknown objects have to be detected. Suitable features are then extracted, followed by extraction of the vehicles for tracking. Difficulties in tracking vehicles can arise from abrupt vehicle motion and from changing appearance patterns of both the vehicle and the scene. Tracking is usually performed in the context of higher-level applications that require the location and/or shape of the vehicle in every frame. Once a vehicle is identified, the unidentified pixels on every side of the object are selected and their color is changed, which marks the vehicle boundary. In this way every moving vehicle present in the frame is tracked.

3.3.7 Data flow diagram for counting modules

Tracked vehicle → Traverse the image → Check for vehicle → Vehicle counting

Figure 3.3 Vehicle counting

The tracked binary image mask1 forms the input image for counting. The image is scanned from top to bottom to detect the presence of vehicles. Two variables are maintained: count, which keeps track of the number of vehicles, and the count register variable countreg, which contains the information of the registered vehicles. When a vehicle is encountered, it is checked to see whether it is already registered in the buffer. If the vehicle is not registered, it is assumed to be a new vehicle and count is incremented; otherwise it is treated as part of an already existing vehicle and is ignored. This procedure is applied to the entire image, and the final vehicle count is held in the variable count. A fairly good counting accuracy is achieved, although occlusions sometimes cause two vehicles to be merged and treated as a single entity.

CHAPTER-4

DETAILED DESIGN

This chapter presents the algorithm of each module used in this project, together with a detailed description of each module.

4.1 Algorithm for modules

Four major functions are involved in the proposed technique. The first reads a video clip and divides it into a number of frames. The second implements the major procedures, such as finding frame differences and identifying the registered background image. Next, post-processing is performed and the background is eliminated, leaving only the foreground vehicles. The last function counts the detected vehicles. The aim of the algorithm is to design an efficient counting system for highways. Given a video clip, the initial problem is to separate it into frames. Each frame is then treated as an independent image, which is in RGB format and is converted into a grayscale image. Next, the difference between frames at a certain interval is computed. This interval can be chosen based on the motion of the moving vehicles in the video sequence.

If the vehicle is moving quite fast, the difference between every pair of successive frames is considered. The morphological operators imdilate and imerode are used to segment the vehicle edges, and regions are counted with bwfill, which shows how many regions are large in size. For regions whose size matches a car, the points are located and the counter is incremented. These steps are repeated until the end of the video sequence.

Mathematical morphology is used for analyzing vehicle shape characteristics such as size and connectivity, which are not easily accessed by linear approaches. Morphological operations are used for image segmentation. The advantages of morphological approaches over linear approaches are direct geometric interpretation, simplicity, and efficiency in hardware implementation. The basic operation of a morphology-based approach is the translation of a structuring element over the image and the erosion and/or dilation of the image content based on the shape of the structuring element. A morphological operation analyzes and manipulates the structure of an image by marking the locations where the structuring element fits.

In mathematical morphology, neighborhoods are therefore defined by the structuring element; that is, the shape of the structuring element determines the shape of the neighborhood in the image. The fundamental mathematical morphology operations, dilation and erosion, based on Minkowski algebra, are used (Equation 1).

Dilation

$$D(A,B) = A \oplus B = \bigcup_{\beta \in B} (A + \beta) \qquad (1)$$

In equation (1), either set A or B can be thought of as an “image”; A is usually considered the image and B is called a structuring element. The new intensity value of the center pixel is determined according to the following rules.

• For dilation, if any pixel of the rectangle fits at or under the image intensity profile, the center pixel of the rectangle is given the maximum intensity of the pixel and its two neighbors in the original image; otherwise the pixel is set to zero intensity.
• For erosion, if the whole rectangle fits at or under the image intensity profile, the center pixel is given the minimum intensity of the pixel and its two neighbors in the original image; otherwise the pixel is set to zero intensity.

In short, dilation causes vehicles to grow in size and erosion causes them to shrink. The amount and the way that they grow or shrink depend upon the choice of the structuring element.
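Equation (1) can be tried out on a binary image with a short sketch. Here the image A is represented as a set of (row, column) foreground coordinates and the structuring element B as a set of offsets; both representations and the function names are assumptions for illustration. The erosion shown is the standard set definition, which the report does not write out, and the sketch assumes B contains the origin (0, 0).

```python
def dilate(A, B):
    """Binary dilation: the union of A translated by every offset in B."""
    return {(r + dr, c + dc) for (r, c) in A for (dr, dc) in B}

def erode(A, B):
    """Binary erosion: points of A at which every offset of B stays inside A.

    Assumes B contains the origin, so every result point is itself in A.
    """
    return {(r, c) for (r, c) in A
            if all((r + dr, c + dc) in A for (dr, dc) in B)}

# A plus-shaped structuring element centred on the origin.
CROSS = {(0, 0), (0, 1), (0, -1), (1, 0), (-1, 0)}

grown = dilate({(0, 0)}, CROSS)   # the single pixel grows into a plus shape
shrunk = erode(grown, CROSS)      # eroding the plus leaves only its centre
```

Dilating and then eroding with the same element is the usual way small holes inside a vehicle mask are closed before counting.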

4.1.1 Algorithm to Read Video

Step 1: Initialize an array M_Array[ ] to zeros.
Step 2: Declare two global variables m and n, which store the row and column counts of the video frames respectively.
Step 3: for i = 1 to No_of_Frames, at an interval of 4 frames
• Read each frame of the video clip.
• Store the video frame in M_Array[ ].
• Increment variable k, which stores the total number of frames in M_Array.
end for
Step 4: for i = 1 to k
Convert the images obtained in step 3 from RGB to gray format and store them in a three-dimensional array T[m, n, l].
end for

An array variable is initialized to hold the video, and the row and column dimensions of the video frame are stored. Each frame is read from the video clip and stored in the array, and the array position is incremented to store the next frame; this continues until the final frame has been read and stored. Each image is then converted from RGB to grayscale and stored in a three-dimensional array, where m and n are the row and column indices and the third index is the frame number.
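A minimal sketch of the sampling and conversion steps is given below. It does not decode a video file; it assumes the frames are already in memory as nested lists of (R, G, B) tuples. The luminance weights 0.299, 0.587 and 0.114 are the common ITU-R BT.601 values, used here as an assumption since the report does not state which conversion it applies, and the function names are hypothetical.

```python
def rgb_to_gray(frame):
    """Convert one RGB frame (rows of (R, G, B) tuples) to grayscale integers."""
    return [[round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
            for row in frame]

def sample_frames(frames, interval=4):
    """Keep every interval-th frame, starting with the first, as grayscale.

    The returned list plays the role of the three-dimensional array T:
    T[k][i][j] is the gray level of pixel (i, j) in the k-th kept frame.
    """
    return [rgb_to_gray(frame) for frame in frames[::interval]]
```

With ten input frames and the default interval, the frames at positions 0, 4 and 8 are kept.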

4.1.2 Algorithm for Background Registration

ALGORITHM BGRegist( )
//Input: M_Array
//Output: An image with registered background in bg array
//Initialize array b to zeros
Step 1: for i = 1 to m
    for j = 1 to n
        for k = 1 to l-1
            if abs(double(T(i,j,l-k)) - double(T(i,j,k))) < 10
                b(i,j) = T(i,j,k)
            end if
        end for
    end for
end for
Step 2: Convert the b array values to unsigned integers and store them in an array called background.
Step 3: Fill the hole regions in the image background and store the result in the bg array.
Step 4: Show the output image background.

The background subtraction method obtains the difference image by subtracting two different frames. For this operation the first frame is initially set as the background image; the hole regions of the background image are then filled using the imfill function and the result is stored in the background array. Finally, the background image is shown. This involves subtracting every image from the background scene: the first frame is assumed to be the initial background, and the resulting difference image is thresholded to determine the foreground image. A vehicle is a group of pixels that move in a coherent manner, either as a lighter region over a darker background or vice versa. Often the vehicle may be of the same color as the background, or some portion of it may be merged with the background, which makes detecting the vehicle difficult. This leads to an erroneous vehicle count.

4.1.3 Algorithm for Background Elimination

ALGORITHM BGElimin( )

//Input: d is a specific video frame
//Output: An image with the foreground vehicles, stored in c
//Initialize array c to zeros

Step 1: for i = 2 to m-1
            for j = 2 to n-1
                if the difference between the pixel value of the d array and that of the bg (or b) array is less than 20
                    store the value 0 for that pixel in array c
                else
                    store the pixel value
                end if
            end for
        end for

Step 2: Convert the values in the c array and apply a median filter.

Step 3: Show the output images c and d.

The foreground vehicle image is stored in the array c, which is initialized to zero. Let d be the specific video frame. If the difference between a pixel value in d and the corresponding pixel of the background image b is less than 20, the value zero is stored for that pixel in c; otherwise the pixel value is stored. The values in c are then converted and a median filter is applied to remove noise.
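A minimal Python sketch of background elimination followed by median filtering is given below. The function names and the 5x5 sample data are hypothetical; the 3x3 window size and the choice to copy border pixels unchanged are assumptions, since the report does not specify the filter settings.

```python
from statistics import median


def eliminate_background(frame, background, thresh=20):
    """Zero out pixels that differ from the background by less than thresh."""
    rows, cols = len(frame), len(frame[0])
    c = [[0] * cols for _ in range(rows)]
    for i in range(1, rows - 1):          # interior pixels only, as in Step 1
        for j in range(1, cols - 1):
            if abs(frame[i][j] - background[i][j]) >= thresh:
                c[i][j] = frame[i][j]
    return c


def median_filter_3x3(img):
    """3x3 median filter; border pixels are copied unchanged (an assumption)."""
    rows, cols = len(img), len(img[0])
    out = [row[:] for row in img]
    for i in range(1, rows - 1):
        for j in range(1, cols - 1):
            window = [img[i + di][j + dj] for di in (-1, 0, 1) for dj in (-1, 0, 1)]
            out[i][j] = median(window)
    return out


background = [[100] * 5 for _ in range(5)]
d = [row[:] for row in background]
d[2][2] = 200    # isolated bright pixel: passes the threshold, then removed as noise
d[1][1] = 110    # differs by 10 (< 20): treated as background
c = eliminate_background(d, background)
filtered = median_filter_3x3(c)
```

The isolated pixel survives the threshold test but is removed by the median filter, which is the noise-removal effect described above.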

4.1.4 Algorithm for Counting

ALGORITHM Count( )

//Initialise count = 0 and the count register buffer countreg = 0

Step 1: Traverse the vehicle image from top to bottom to detect a vehicle.

Step 2: If a vehicle is encountered, check whether it is registered in countreg.

Step 3: If the vehicle is not registered, increment count and register the vehicle in countreg, labelled with the new count.

Step 4: Repeat steps 2 and 3 until the traversal is complete.

The tracked binary image mask1 forms the input image for counting. This image is scanned from top to bottom to detect the presence of a vehicle. Two variables are maintained: count, which keeps track of the number of vehicles, and the count register countreg, which holds the information about registered vehicles. When a vehicle is encountered, it is checked to see whether it is already registered in the buffer. If it is not registered, it is assumed to be a new vehicle and count is incremented; otherwise it is treated as part of an already existing vehicle and is ignored. The procedure is applied to the entire image, and the final vehicle count is held in the variable count. A fairly good counting accuracy is achieved. Sometimes, due to occlusions, two vehicles are merged together and treated as a single entity.
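One way to read the counting procedure is as connected-component labelling, where countreg holds the label of each registered pixel. The Python sketch below follows that reading; it is an interpretation, not the report's implementation, and the 8-connected flood fill used to register a whole vehicle is an assumption.

```python
def count_vehicles(mask):
    """Count connected foreground blobs in a binary mask (1 = vehicle pixel)."""
    rows, cols = len(mask), len(mask[0])
    countreg = [[0] * cols for _ in range(rows)]   # 0 means not registered
    count = 0
    for i in range(rows):                          # scan top to bottom
        for j in range(cols):
            if mask[i][j] and countreg[i][j] == 0:
                count += 1                         # unregistered pixel: new vehicle
                countreg[i][j] = count
                stack = [(i, j)]
                while stack:                       # register the rest of the blob
                    y, x = stack.pop()
                    for dy in (-1, 0, 1):
                        for dx in (-1, 0, 1):
                            ny, nx = y + dy, x + dx
                            if (0 <= ny < rows and 0 <= nx < cols
                                    and mask[ny][nx] and countreg[ny][nx] == 0):
                                countreg[ny][nx] = count
                                stack.append((ny, nx))
    return count, countreg


mask = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 0, 1],
    [0, 0, 0, 0, 1],
]
count, countreg = count_vehicles(mask)
print(count)
```

Two vehicles that touch in the mask form one blob and are counted once, which matches the occlusion limitation noted above.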

CHAPTER-5

IMPLEMENTATION REQUIREMENTS

The implementation of vehicle detection and counting in traffic video, using image processing with simulation software, is described below.

5.1 Simulation Software

Simulation is performed using MATLAB. MATLAB is an interactive system whose basic data element is an array that does not require dimensioning. It is used to formulate solutions to many technical computing problems, especially those involving matrix representations, and it provides a comprehensive prototyping environment for digital image processing. Vision is the most advanced of our senses, so images play an important role in human perception, and MATLAB is a very efficient tool for image processing.

5.2 Main Pseudo code

function varargout = MAINCODE(varargin)
% MAINCODE MATLAB code for MAINCODE.fig
%   MAINCODE, by itself, creates a new MAINCODE or raises the existing
%   H = MAINCODE returns the handle to a new MAINCODE or the handle to

% GUIDE initialization code.
gui_Singleton = 1;
gui_State = struct('gui_Name',       mfilename, ...
                   'gui_Singleton',  gui_Singleton, ...
                   'gui_OpeningFcn', @MAINCODE_OpeningFcn, ...
                   'gui_OutputFcn',  @MAINCODE_OutputFcn, ...
                   'gui_LayoutFcn',  [], ...
                   'gui_Callback',   []);
if nargin && ischar(varargin{1})
    gui_State.gui_Callback = str2func(varargin{1});
end

if nargout
    [varargout{1:nargout}] = gui_mainfcn(gui_State, varargin{:});
else
    gui_mainfcn(gui_State, varargin{:});
end

function MAINCODE_OpeningFcn(hObject, eventdata, handles, varargin)
% Store the figure handle and show a blank (all-ones) image in the three axes.
handles.output = hObject;
a = ones(256,256);
axes(handles.axes1);
imshow(a);
axes(handles.axes2);
imshow(a);
axes(handles.axes3);
imshow(a);
guidata(hObject, handles);

function varargout = MAINCODE_OutputFcn(hObject, eventdata, handles)
varargout{1} = handles.output;

% --- Executes on button press in pushbutton3.
function pushbutton3_Callback(hObject, eventdata, handles)
% hObject    handle to pushbutton3 (see GCBO)
% eventdata  reserved - to be defined in a future version of MATLAB
% handles    structure with handles and user data (see GUIDATA)
close all;

% --- Executes on button press in pushbutton4.
function pushbutton4_Callback(hObject, eventdata, handles)
% hObject    handle to pushbutton4 (see GCBO)
% eventdata  reserved - to be defined in a future version of MATLAB
% handles    structure with handles and user data (see GUIDATA)
% Reset the three axes to a blank image and clear the text fields.
a = ones(256,256);
axes(handles.axes1);
imshow(a);
axes(handles.axes2);
imshow(a);
axes(handles.axes3), imshow(a);
set(handles.text12, 'string', '');
set(handles.text13, 'string', '');
set(handles.text14, 'string', '');
set(handles.edit1, 'string', '');

Graphical objects in MATLAB are arranged in a structure called the Graphics Object Hierarchy, which can be viewed as a rooted tree whose nodes represent the objects and whose root vertex corresponds to the root object. The form of such a tree depends on the particular set of objects initialised, and the precedence relationship between its nodes is reflected in the objects' Parent and Children properties. If, for example, the handle h of object A is the value of the Parent property of object B, then A is the parent of B and, accordingly, B is a child of A. Which forms of hierarchy are possible depends on the admissible values of the objects' Parent and Children properties. Some specific rules also apply to the use of particular objects. For example, it is recommended not to parent any objects to the annotation axes and not to change the annotation axes' properties explicitly. Similarly, parenting annotation objects to standard axes may cause errors in the code.
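The Parent and Children relationship can be illustrated with a small tree in Python. This is a generic sketch of a rooted tree, not MATLAB's graphics API; the class GraphicsObject and the object names are hypothetical.

```python
class GraphicsObject:
    """Node of a rooted tree with parent and children links."""

    def __init__(self, name, parent=None):
        self.name = name
        self.parent = parent
        self.children = []
        if parent is not None:
            parent.children.append(self)   # keep both links consistent

    def path(self):
        """Names from the root down to this object."""
        node, names = self, []
        while node is not None:
            names.append(node.name)
            node = node.parent
        return "/".join(reversed(names))


root = GraphicsObject("root")
figure = GraphicsObject("figure", parent=root)
axes1 = GraphicsObject("axes1", parent=figure)
print(axes1.path())
```

Setting the parent of B to A automatically makes B a child of A, which is the two-way relationship described above.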

5.2.2 Pseudo code for background subtraction

function pushbutton2_Callback(hObject, eventdata, handles)
diry2 = handles.diry;
videoReader = vision.VideoFileReader(diry2);   % e.g. visiontraffic.avi
blobAnalysis = vision.BlobAnalysis('BoundingBoxOutputPort', true, ...
    'AreaOutputPort', false, 'CentroidOutputPort', false, ...
    'MinimumBlobArea', 150);
fontSize = 14;
i = 1;
alpha = 0.5;
count = 0;
while ~isDone(videoReader)
    thisFrame = step(videoReader);
    if i == 1
        Background = thisFrame;
    else
        % Change the background slightly at each frame:
        % Background(t+1) = (1-alpha)*I + alpha*Background
        Background = (1-alpha) * thisFrame + alpha * Background;
    end
    % Display the changing/adapting background.
    subplot(1, 3, 1);
    axes(handles.axes1), imshow(Background);
    title('Adaptive Background');
    % Calculate the difference between this frame and the background.
    differenceImage = thisFrame - Background;
    % Threshold with Otsu's method.
    grayImage = rgb2gray(differenceImage);            % convert to gray level
    thresholdLevel = graythresh(grayImage);           % get the threshold
    binaryImage = im2bw(grayImage, thresholdLevel);   % do the binarization
    % Morphological clean-up: dilate twice, open, close, then fill holes.
    % (The source repeats the hole filling three times; once is equivalent.)
    se = strel('square', 5);
    binaryImage = imdilate(binaryImage, se);
    binaryImage = imdilate(binaryImage, se);
    binaryImage = imopen(binaryImage, se);
    binaryImage = imclose(binaryImage, se);
    binaryImage = imfill(binaryImage, 'holes');
    % Bounding boxes of the remaining blobs (blobAnalysis is created once above).
    bbox = step(blobAnalysis, binaryImage);
    result = insertShape(thisFrame, 'Rectangle', bbox, 'Color', 'green');
    labeledImage = bwlabel(binaryImage, 8);
    % Update the running vehicle count.
    if count == 0
        count = contv(binaryImage, grayImage);
        cres = count;
    else
        [cres, count] = cont_up(count, cres, binaryImage, grayImage);
    end
    numCars = size(bbox, 1);
    if numCars >= 1
        [colour, loc] = colour_iden(thisFrame, binaryImage);
        colour = cell2mat(colour);
        % Assumed fix: the source passes the undefined name "text" here.
        set(handles.text12, 'string', colour);
        % Assumed loop: the source uses h without a surviving loop header.
        for h = 1:size(loc, 2)
            result = insertText(result, [loc(2,h) loc(1,h)], num2str(h), 'BoxOpacity', 1, 'FontSize', 8);
        end
        set(handles.text13, 'string', 'VEHICLE COUNT');
        set(handles.text14, 'string', num2str(count));
        result = insertText(result, [1 1], count, 'BoxOpacity', 1, 'FontSize', 8);
    end
    % Plot the binary image.
    subplot(1, 3, 2);
    axes(handles.axes2), imshow(binaryImage);
    title('Binarized Difference Image');
    subplot(1, 3, 3);
    axes(handles.axes3), imshow(result);
    title('Tracking of vehicle');
    i = i + 1;
    % The upper bound of the following loop is truncated in the source.
    for g=1:
        set(handles.edit2, 'string', '1.red 2.blue 3.rdfdd ');
        pause(0.1);
    end
end

Background subtraction consists of four steps: preprocessing, background modeling, foreground detection, and data validation. Preprocessing consists of a collection of simple image processing tasks that change the raw input video into a format that can be processed by subsequent steps. Background modeling uses each new video frame to calculate and update a background model, which provides a statistical description of the entire background scene. Foreground detection then identifies pixels in the video frame that cannot be adequately explained by the background model and outputs them as a binary candidate foreground mask. Finally, data validation examines the candidate mask, eliminates those pixels that do not correspond to actual moving vehicles, and outputs the foreground mask. Domain knowledge and computationally intensive vision algorithms are often used in data validation. Real-time processing is still feasible because these sophisticated algorithms are applied only to the small number of candidate foreground pixels.
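The background modeling step used in the listing, Background = (1-alpha)*thisFrame + alpha*Background, is a running average. A short Python sketch of that update is shown below; the function name update_background and the sample frames are hypothetical, while alpha = 0.5 is the value used in the listing.

```python
def update_background(background, frame, alpha=0.5):
    """Blend the new frame into the background: (1 - alpha) * frame + alpha * background."""
    return [
        [(1 - alpha) * f + alpha * b for f, b in zip(frame_row, bg_row)]
        for frame_row, bg_row in zip(frame, background)
    ]


# Three 1x2 frames with intensities in [0, 1]; the first frame seeds the background.
frames = [[[0.0, 1.0]], [[1.0, 1.0]], [[1.0, 0.0]]]
background = frames[0]
for frame in frames[1:]:
    background = update_background(background, frame)
print(background)
```

With alpha = 0.5 the background forgets old frames quickly; a value of alpha closer to 1 would make it adapt more slowly, so that slow-moving vehicles are less likely to be absorbed into the background.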

5.3 Pseudo code for detection and tracking

videoReader = vision.VideoFileReader(diry2);   % e.g. visiontraffic.avi
blobAnalysis = vision.BlobAnalysis('BoundingBoxOutputPort', true, ...
    'AreaOutputPort', false, 'CentroidOutputPort', false, ...
    'MinimumBlobArea', 150);
fontSize = 14;
i = 1;
alpha = 0.5;
count = 0;
while ~isDone(videoReader)
    thisFrame = step(videoReader);
    if i == 1
        Background = thisFrame;
    else
        % Change the background slightly at each frame:
        % Background(t+1) = (1-alpha)*I + alpha*Background
        Background = (1-alpha) * thisFrame + alpha * Background;
    end
    % Display the changing/adapting background.
    subplot(1, 3, 1);
    axes(handles.axes1), imshow(Background);
    title('Adaptive Background');
    % Calculate the difference between this frame and the background.
    differenceImage = thisFrame - Background;
    % Threshold with Otsu's method.
    grayImage = rgb2gray(differenceImage);            % convert to gray level
    thresholdLevel = graythresh(grayImage);           % get the threshold
    binaryImage = im2bw(grayImage, thresholdLevel);   % do the binarization
    % Morphological clean-up: dilate twice, open, close, then fill holes.
    se = strel('square', 5);
    binaryImage = imdilate(binaryImage, se);
    binaryImage = imdilate(binaryImage, se);
    binaryImage = imopen(binaryImage, se);
    binaryImage = imclose(binaryImage, se);
    binaryImage = imfill(binaryImage, 'holes');
    % Detect the blobs and draw their bounding boxes on the frame.
    bbox = step(blobAnalysis, binaryImage);
    result = insertShape(thisFrame, 'Rectangle', bbox, 'Color', 'green');
end

Detecting changes in image sequences of the same scene, captured at different times, is of significant interest because of its many applications in several disciplines. Video surveillance is among the important applications that require reliable detection of changes in the scene. There are several approaches to this detection problem, which can be separated into two conventional classes: temporal differencing, and background modeling and subtraction. The former is possibly the simplest approach and can adapt to changes in the scene with a lower computational load. However, the detection performance of temporal differencing is usually quite poor in real-life surveillance applications. Background modeling and subtraction, on the other hand, has been used successfully in several algorithms.

Background subtraction itself is easy. To subtract a constant value, or a background with the same size as the image, one simply writes img = img - background. The imsubtract function simply makes sure that the output is zero wherever the background is larger than the image. Background estimation is hard: it requires knowing what kind of image is being analysed, otherwise the estimation will fail.
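The two operations mentioned above can be contrasted in a small Python sketch: a subtraction that clamps negative results to zero, and a temporal difference between consecutive frames. Both function names and the sample values are hypothetical.

```python
def subtract_saturating(image, background):
    """Pixel-wise image - background, clamped at zero where the background is larger."""
    return [
        [max(p - b, 0) for p, b in zip(img_row, bg_row)]
        for img_row, bg_row in zip(image, background)
    ]


def temporal_difference(current, previous):
    """Absolute pixel-wise difference between two consecutive frames."""
    return [
        [abs(c - p) for c, p in zip(cur_row, prev_row)]
        for cur_row, prev_row in zip(current, previous)
    ]


image = [[5, 200]]
background = [[10, 50]]
print(subtract_saturating(image, background))
print(temporal_difference(image, background))
```

The clamped subtraction only keeps pixels brighter than the background, so a dark vehicle on a light road would be lost; the absolute difference keeps both cases.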

Tracking moving vehicles in video streams has been an active area of research in computer vision. A real-time system for measuring traffic parameters has been described that uses a feature-based method along with occlusion reasoning to track vehicles in congested traffic scenes. To handle occlusions, vehicle sub-features are tracked instead of entire vehicles. Tracking is usually performed in the context of higher-level applications that require the location and/or shape of the vehicle in every frame. Typically, assumptions are made to constrain the tracking problem in the context of a particular application. There are three key steps in video analysis: detection of interesting moving vehicles, tracking of vehicles from frame to frame, and analysis of vehicle tracks to recognize their behavior. Otsu's method is an image processing technique that converts a greyscale image into a purely binary image by calculating a threshold that splits the pixels into two classes, foreground and background.
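Otsu's method picks the threshold that maximizes the between-class variance of the two pixel classes. The Python sketch below works on a flat list of integer grey levels; the function name otsu_threshold and the sample data are hypothetical, and the sketch is not a reproduction of MATLAB's graythresh.

```python
def otsu_threshold(pixels, levels=256):
    """Return t such that pixels <= t and pixels > t form the best two classes."""
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    total = len(pixels)
    sum_all = sum(level * n for level, n in enumerate(hist))
    best_t, best_var = 0, -1.0
    w0 = 0       # number of pixels at or below t
    sum0 = 0     # intensity sum of those pixels
    for t in range(levels):
        w0 += hist[t]
        sum0 += t * hist[t]
        w1 = total - w0
        if w0 == 0 or w1 == 0:
            continue                       # one class is empty: skip
        mean0 = sum0 / w0
        mean1 = (sum_all - sum0) / w1
        between = w0 * w1 * (mean0 - mean1) ** 2   # proportional to between-class variance
        if between > best_var:
            best_var, best_t = between, t
    return best_t


# Dark background pixels around 10 and bright vehicle pixels at 200.
pixels = [10] * 50 + [12] * 10 + [200] * 40
t = otsu_threshold(pixels)
binary = [1 if p > t else 0 for p in pixels]
print(t, sum(binary))
```

Every pixel above the threshold is marked as foreground, which yields the binary image used by the later morphological and blob-analysis steps.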

5.4 Pseudo code for counting

function [cres,count] = cont_up(count, cres, binaryImage, grayImage)
% Update the running vehicle count from the blobs in the current frame.
labeledImage = bwlabel(binaryImage, 8);
stats = regionprops(labeledImage, 'BoundingBox', 'Area');
area = cat(1, stats.Area);
if length(area) > 1
    % Save a 50x50 template of the largest blob as database/1.jpg.
    [~, maxAreaIdx] = max(area);
    bb = round(stats(maxAreaIdx).BoundingBox);
    % Clamp the box to the image. The source clamps both limits to the row
    % count; the column count is used for b here.
    a = min(bb(2)+bb(4), size(labeledImage,1));
    b = min(bb(1)+bb(3), size(labeledImage,2));
    croppedImage = grayImage(bb(2):a, bb(1):b, :);
    croppedImage = imresize(croppedImage, [50,50]);
    imwrite(croppedImage, fullfile('database', '1.jpg'));
end
% Keep only blobs larger than 150 pixels.
area = area(area > 150);
if length(area) < cres
    % Fewer blobs than in the previous frame: vehicles left, count unchanged.
    cres = length(area);
elseif length(area) > cres
    % More blobs than in the previous frame: add the new ones to the count.
    matched = countnew(area, grayImage, stats);   % computed but not used in the source
    count = count + (length(area) - cres);
    cres = length(area);
end
end

function out = countnew(area, grayImage, stats)
% Count the blobs whose 50x50 crop correlates with the stored template.
% Note: area has been filtered by the caller, so stats(i) may not match area(i).
out = 0;
b1 = imread(fullfile('database', '1.jpg'));
for i = 1:length(area)
    % regionprops returns BoundingBox as [x y width height], so x indexes columns.
    bb = round(stats(i).BoundingBox);
    a = min(bb(2)+bb(4), size(grayImage,1));
    b = min(bb(1)+bb(3), size(grayImage,2));
    % The source also crops binaryImage here, which is not passed to this function.
    croppedImage = grayImage(bb(2):a, bb(1):b, :);
    croppedImage = imresize(croppedImage, [50,50]);
    a1 = corr2(croppedImage, b1);
    if a1 >= 0.45
        out = out + 1;
    end
end
end

The tracked binary image mask1 forms the input image for counting. The binary image is scanned from top to bottom to detect the presence of a vehicle. Two variables are maintained: count, which keeps track of the number of vehicles, and the count register countreg, which holds the information about registered vehicles. When a vehicle is encountered, it is first checked to see whether it is already registered in the buffer. If it is not registered, it is assumed to be a new vehicle and count is incremented; otherwise it is treated as part of an already existing vehicle and is ignored. The Blob Analysis block is used to calculate statistics for labeled regions in a binary image. The block returns quantities such as the centroid, bounding box, label matrix, and blob count, and its output supports the vehicle counting step.
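The update rule in cont_up can be summarised as follows: the count grows only when the current frame contains more blobs than the previous one. The Python sketch below isolates that rule; the function name update_count and the blob sequence are hypothetical, and the initial values assume that the first frame contained one vehicle (the helper contv that sets them is not shown in the report).

```python
def update_count(count, cres, n_blobs):
    """Return the new (cres, count) given the number of blobs in the current frame."""
    if n_blobs > cres:
        count += n_blobs - cres    # newly appeared blobs are counted as new vehicles
    return n_blobs, count          # cres always tracks the current blob number


# Blobs larger than 150 pixels seen in successive frames (assumed sample data).
blobs_per_frame = [1, 2, 1, 3]
count, cres = 1, 1                 # assumed state after the first frame
for n_blobs in blobs_per_frame:
    cres, count = update_count(count, cres, n_blobs)
print(count)
```

Because only the number of blobs is compared, a vehicle that leaves in the same frame in which another one enters is not counted, which is one source of counting error.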

5.5 Pseudo code for color identification

function [c, loc] = colour_iden(originalImage, binaryImage)
% Name the mean colour of every blob larger than 150 pixels.
labeledImage = bwlabel(binaryImage, 8);
stats = regionprops(labeledImage, 'BoundingBox', 'Area');
area = cat(1, stats.Area);
s  = size(labeledImage, 1);   % number of rows
s1 = size(labeledImage, 2);   % number of columns
i1 = 1;
for i = 1:length(area)
    if area(i) > 150
        bb = round(stats(i).BoundingBox);
        % Clamp the box to the image. The source clamps the column limit to
        % the row count s; s1 is used here.
        a = min(bb(2)+bb(4), s);
        b = min(bb(1)+bb(3), s1);
        croppedImage = originalImage(bb(2):a, bb(1):b, 1:3);
        loc(1,i) = bb(2);
        loc(2,i) = bb(1);
        croppedImage = croppedImage * 255;   % scale frame values to 0..255
        r = mean2(croppedImage(:,:,1));
        g = mean2(croppedImage(:,:,2));
        b = mean2(croppedImage(:,:,3));
        c(i1) = colur1(r, g, b);
        i1 = i1 + 1;
    end
end
end

function c = colur1(r, g, b)
% Return the name of the reference chip closest to (r,g,b) in L1 distance.
% The name order is kept as in the source. Rows 15 and 19 of the value table
% are a red and a white chip, so the 'White' and 'Red' labels look swapped.
chipNames = {'DarkSkin';'LightSkin';'BlueSky';'Foliage';'BlueFlower';'Bluish Green'; ...
    'Orange';'PurpleRed';'ModerateRed';'Purple';'YellowGreen';'OrangeYellow'; ...
    'Blue';'Green';'White';'Yellow';'Magenta';'Cyan';'Red'; ...
    'Neutral 8';'Neutral 65';'Neutral 5';'Neutral 35';'Black'};
% The leading 231 of row 16 is missing in the source; it is restored from the
% standard colour checker values.
sRGB_Values = [ ...
    115  82  68; 194 150 130;  98 122 157;  87 108  67; ...
    133 128 177; 103 189 170; 214 126  44;  80  91 166; ...
    193  90  99;  94  60 108; 157 188  64; 224 163  46; ...
     56  61 150;  70 148  73; 175  54  60; 231 199  31; ...
    187  86 149;   8 133 161; 243 243 242; 200 200 200; ...
    160 160 160; 122 122 121;  85  85  85;  52  52  52];
r1 = abs(sRGB_Values(:,1) - r);
g1 = abs(sRGB_Values(:,2) - g);   % the source indexes column 1 for all three channels
b1 = abs(sRGB_Values(:,3) - b);
a = [r1, g1, b1]';
[~, f] = min(sum(a));
c = chipNames(f);
end

Many color spaces can separate chromatic and illumination components, but maintaining a chromatic model regardless of the brightness can lead to an unstable model, especially for very bright or dark vehicles. The conversion also requires computational resources, particularly for large images. The idea of preserving intensity components and saving computational cost leads us back to the RGB space. Because moving shadows must be identified, we need to consider a color model that can separate chromatic and brightness components. It should be compatible with, and make use of, our mixture model.
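The colour naming step in colur1 is a nearest-neighbour search in RGB space using the sum of absolute channel differences. A compact Python sketch of that idea follows; the function name nearest_chip is hypothetical, and only five of the reference values from the listing are used, labelled here by the colour they actually represent.

```python
# A subset of the reference chips from the listing (sRGB, 0..255).
CHIPS = {
    "Red": (175, 54, 60),
    "Green": (70, 148, 73),
    "Blue": (56, 61, 150),
    "White": (243, 243, 242),
    "Black": (52, 52, 52),
}


def nearest_chip(r, g, b):
    """Name of the chip with the smallest L1 distance to the mean colour (r, g, b)."""
    def distance(name):
        cr, cg, cb = CHIPS[name]
        return abs(cr - r) + abs(cg - g) + abs(cb - b)
    return min(CHIPS, key=distance)


print(nearest_chip(180, 60, 50))
print(nearest_chip(250, 250, 250))
```

Because the comparison is done directly in RGB, a vehicle in shadow is matched against darker chips than the same vehicle in sunlight, which is the brightness sensitivity discussed above.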

CHAPTER-6

TESTING

The actual purpose of testing is to discover errors. Testing is the process of trying to

discover every conceivable fault or weakness in a work product. It is the process of exercising

software with the intent of ensuring that the Software system meets its requirements and user

expectations and does not fail in an unacceptable manner.

6.1 Types of Testing

Many testing methods are available; the ones mainly used are as follows.

6.1.1 Unit Testing

Unit testing involves the design of test cases that validate that the internal program logic is functioning properly and that the program produces valid outputs. All decision branches and internal code flow should be validated. It is the testing of individual software units of the application, and it is done after each unit is completed and before integration.

6.1.2 Integration Testing

Software integration testing is the incremental testing of two or more integrated software components on a single platform in order to expose failures caused by interface defects. The task of the integration test is to check that components or software applications (for example, components in a software system or, one step up, software applications at the company level) interact without error.

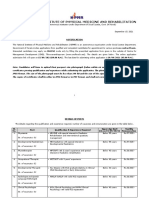

6.2 Detection Phase Of Moving cars

Test Case   Input Frame     Expected Result                    Output Frame    Remark
1           (frame image)   Moving vehicle in Clausius image   (frame image)   Test passed
2           (frame image)   Moving vehicle in Clausius image   (frame image)   Test passed
3           (frame image)   Moving vehicle in Clausius image   (frame image)   Test passed

Table 6.2: Testing for vehicle detection in the moving cars video.

Table 6.2 gives the test cases run on the video at different frame locations to detect vehicles. In the moving cars video, the frames used are 70, 168 and 232 respectively.

6.3 Tracking Phase of Moving cars

Test Case   Input Frame     Expected Result            Output Frame    Remark
1           (frame image)   Moving vehicle tracking.   (frame image)   Test passed
2           (frame image)   Moving vehicle tracking.   (frame image)   Test passed
3           (frame image)   Moving vehicle tracking.   (frame image)   Test passed

Table 6.3: Testing for vehicle tracking by matrix scan in the moving cars video.

Table 6.3 gives the test cases run on the video at different frames for tracking multiple moving vehicles. In the moving cars video, the frames used are 70, 168 and 232 respectively.

6.4 Counting Phase of Moving cars

Test Case   Input Frame     Expected Result            Output Frame    Remark
1           (frame image)   Moving vehicle tracking.   (frame image)   Test passed
2           (frame image)   Moving vehicle tracking.   (frame image)   Test passed
3           (frame image)   Moving vehicle tracking.   (frame image)   Test passed

Table 6.4: Testing for vehicle counting in the moving cars video.

Table 6.4 shows the test cases for vehicle counting. In test case 1, the input frame contains one vehicle and the output frame reports a count of 1, so the test case passes. The second and third test cases also pass. The procedure is applied to the entire image, and the final vehicle count is held in the variable count. A fairly good counting accuracy is achieved. Sometimes, due to occlusions, two vehicles are merged together and treated as a single entity.

CHAPTER -7

RESULT

Figure 7.1 Graphical user interfaces

The graphical user interface is used to browse the directory, select the required video, and play it. It is also used to clear and close all videos. The following objects are grouped under the category of MATLAB User Interface Objects: UI controls (check boxes, editable text fields, list boxes, pop-up menus, push buttons, radio buttons, sliders, static text boxes, and toggle buttons); the UI toolbar (uitoolbar), which can parent objects of type uipushtool and uitoggletool; UI context menus and UI menus (objects of type uicontextmenu and uimenu); and container objects (uipanel and uibuttongroup).

Figure 7.1 snapshot of detection and tracking of vehicles

Using the detection and counting methods, various results are obtained. Video sequences containing moving cars and a walking person were taken and processed to obtain the detected and extracted objects. The following snapshots show the results obtained at each step of the process. The output of the segmentation is a binary vehicle mask, on which region extraction is performed. In region tracking, regions in frame i+1 are associated with the regions in frame i. This allows the velocity of a region to be computed as it moves across the image and also helps in the vehicle tracking stage. Certain problems need to be handled for reliable and robust region tracking.

Figure 7.2 Snapshot of vehicles counting.

Figure 7.2 shows the total count of tracked vehicles that have passed up to the given frame. Initially the count register is set to zero; whenever a moving vehicle is tracked, the count register is incremented. When a vehicle is encountered, it is checked to see whether it is already registered in the buffer. If it is not registered, it is assumed to be a new vehicle and count is incremented; otherwise it is treated as part of an already existing vehicle and is ignored.

Figure 7.3 moving vehicles counting and color identification.

The color of each moving vehicle is identified and displayed as shown in Figure 7.3. The color of the vehicles in the given video is determined using a standard color model. Color spaces can separate chromatic and illumination components, but maintaining a chromatic model regardless of the brightness can lead to an unstable model, especially for very bright or dark vehicles. The conversion also requires computational resources, particularly for large images. The idea of preserving intensity components and saving computational cost leads us back to the RGB space.

CHAPTER-8

CONCLUSION

A system has been developed to detect and count dynamic vehicles on highways efficiently. The system effectively combines simple domain knowledge about vehicle classes with time-domain statistical measures to identify target vehicles in the presence of partial occlusions and ambiguous poses, and the background clutter is effectively rejected. The experimental results show that the counting accuracy was 96% although vehicle detection was 100%; the difference is attributed to partial occlusions.

The computational complexity of the algorithm is linear in the size of a video frame and in the number of vehicles detected. Because only highway traffic is considered, shadows cast by objects such as trees are not a concern, but sometimes, due to occlusions, two vehicles are merged together and treated as a single entity.

Future Scope

Several future enhancements can be made to the system. The detection, tracking and counting of moving vehicles can be extended to real-time live video feeds. Apart from detection and extraction, recognition can also be performed. Using recognition techniques, the vehicle in question can be classified; such techniques would require an additional database against which to match the given vehicle. The system is designed for the detection, tracking and counting of multiple moving vehicles. It can be further developed into an alarm system.

APPENDICES

Functions

Functions differ from scripts: they take explicit input and output arguments. Like all other MATLAB commands, a function can be called within a script or from the command line.

Functions used

Many functions are available; those used in this project are as follows.

1. mmreader class

The syntax of the mmreader function is as follows.

obj = mmreader(filename)

obj = mmreader(filename, 'PropertyName', PropertyValue)

Description

The mmreader function creates a multimedia reader object for reading video files. Use mmreader with the read method to read video data from a multimedia file into the MATLAB workspace.

2. read

The syntax of read is as follows.

video = read(obj)

video = read(obj, index)

Description

video = read(obj) reads in all video frames from the file associated with obj.

video = read(obj, index) reads only the specified frames. index can be a single number or a two-element array representing an index range of the video stream.

3. size

The syntax of the size function is as follows.

d = size(X)

[m,n] = size(X)

m = size(X,dim)

[d1,d2,d3,...,dn] = size(X)

Description

The size function returns array dimensions. d = size(X) returns the sizes of each dimension of array X in a vector d with ndims(X) elements. If X is a scalar, which MATLAB regards as a 1-by-1 array, size(X) returns the vector [1 1].

[m,n] = size(X) returns the size of matrix X in separate variables m and n.

m = size(X,dim) returns the size of the dimension of X specified by the scalar dim.

[d1,d2,d3,...,dn] = size(X), for n > 1, returns the sizes of the dimensions of the array X in the variables d1,d2,d3,...,dn, provided the number of output arguments n equals ndims(X). If n does not equal ndims(X), the following exceptions hold:

4. implay

Syntax of implays function as

mplay

implay(

filename)

implay(I)

implay(…, FPS)

Dept. of CS&E Page 46

Vehicle detection and counting in traffic video on highway

Description

Implay function is used Play movies, videos, or image sequences.

mplay opens a Movie Player for showing MATLAB movies, videos, or image sequences (also

called image stacks). Use the implay File menu to select the movie or image sequence that you

want to play. Use implay toolbar buttons or menu options to play the movie, jump to a specific

frame in the sequence, change the frame rate of the display, or perform other exploration

activities. One can open multiple implay movie players to view different movies simultaneously.

implay(filename) opens the implay movie player, displaying the content of the file

specified by filename. The file can be an Audio Video Interleaved (AVI) file. implay reads one

frame at a time, conserving memory during playback. implay does not play audio tracks.

implay(I) opens the implay movie player, displaying the first frame in the multiframe

image array specified by I. I can be a MATLAB movie structure, or a sequence of binary,

grayscale, or truecolor images. A binary or grayscale image sequence can be an M-by-N-by-1-by-K array or an M-by-N-by-K array. A truecolor image sequence must be an M-by-N-by-3-by-K array.

implay(..., FPS) specifies the rate at which you want to view the movie or image

sequence. The frame rate is specified as frames-per-second. If omitted, implay uses the frame

rate specified in the file or the default value 20.
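
As a rough sketch of the implay(I, FPS) form, the snippet below builds a small grayscale image stack and plays it at 10 frames per second. The stack size, the moving square, and the frame rate are assumptions chosen for the example; implay needs the Image Processing Toolbox and a graphical display.

```matlab
% Illustrative sketch: a 60x80 grayscale stack of 30 frames in which
% a bright 10x10 square moves to the right by 2 pixels per frame.
numFrames = 30;
stack = zeros(60, 80, numFrames, 'uint8');   % M-by-N-by-K image sequence
for k = 1:numFrames
    cols = (2 * k):(2 * k + 9);              % columns covered in frame k
    stack(25:34, cols, k) = 255;
end

implay(stack, 10);                           % play at 10 frames per second
```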

5. regionprops

The syntax of the regionprops function is as follows:

STATS = regionprops(BW, properties)

STATS = regionprops(CC, properties)

STATS = regionprops(L, properties)

STATS = regionprops(..., I, properties)

Description

The regionprops function measures properties of image regions.

STATS = regionprops(BW, properties) measures a set of properties for each connected

component (vehicle) in the binary image, BW. The image BW is a logical array; it can have any

dimension.

STATS = regionprops(CC, properties) measures a set of properties for each connected

component (vehicle) in CC, which is a structure returned by bwconncomp.

STATS = regionprops(L, properties) measures a set of properties for each labeled region in the

label matrix L. Positive integer elements of L correspond to different regions. For example, the

set of elements of L equal to 1 corresponds to region 1; the set of elements of L equal to 2

corresponds to region 2; and so on.

STATS = regionprops(..., I, properties) measures a set of properties for each labeled region in the

image I. The first input to regionprops—either BW, CC, or L—identifies the regions in I. The

sizes must match: size(I) must equal size(BW), CC.ImageSize, or size(L).

STATS is a structure array with length equal to the number of objects (vehicles) in BW, CC.NumObjects, or max(L(:)). The fields of the structure array denote different properties for each region, as specified by properties.

Properties

Properties can be a comma-separated list of strings, a cell array containing strings, the single string 'all', or the string 'basic'. If properties is the string 'all', regionprops computes all the shape measurements, listed in Shape Measurements. If called with a grayscale image, regionprops also returns the pixel value measurements, listed in Pixel Value Measurements. If properties is not specified or if it is the string 'basic', regionprops computes only the 'Area', 'Centroid', and 'BoundingBox' measurements. One can calculate the following properties on N-D inputs: 'Area', 'BoundingBox', 'Centroid', 'FilledArea', 'FilledImage', 'Image', 'PixelIdxList', 'PixelList', and 'SubarrayIdx'.
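
The way regionprops can support vehicle counting is sketched below on a synthetic binary mask. This is an assumed illustration rather than the project's implementation: the mask, the two rectangular blobs standing in for vehicles, and the minArea threshold are all made up, and the Image Processing Toolbox is required.

```matlab
% Illustrative sketch: two rectangular blobs stand in for detected vehicles.
BW = false(100, 120);
BW(20:39, 10:49) = true;        % blob 1: 20 rows x 40 columns = 800 pixels
BW(60:89, 70:99) = true;        % blob 2: 30 rows x 30 columns = 900 pixels

stats = regionprops(BW, 'Area', 'Centroid', 'BoundingBox');
numRegions = numel(stats);      % one struct element per connected component
areas = [stats.Area];           % collect the Area field into a vector

% Hypothetical noise filter: ignore regions smaller than minArea pixels.
minArea = 850;
numLarge = sum(areas >= minArea);

% The same count is available from the connected-component structure.
CC = bwconncomp(BW);
numObjects = CC.NumObjects;
```

Filtering on 'Area' before counting is a common way to discard small foreground specks left over from background subtraction; the threshold would have to be tuned for the camera and scene.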

6. floor

The syntax of the floor function is as follows:

B = floor(A)

Description

The floor function rounds toward negative infinity. B = floor(A) rounds the elements of A to the nearest integers less than or equal to A. For complex A, the imaginary and real parts are rounded independently.
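
A brief sketch of this rounding behavior, with input values chosen only for illustration:

```matlab
% Illustrative sketch: floor always rounds toward negative infinity,
% so negative values move away from zero.
A = [-2.5 -0.2 0.7 3.9];
B = floor(A);               % [-3 -1 0 3]

% For complex input, real and imaginary parts are rounded independently.
z = floor(2.7 - 1.2i);      % 2 - 2i

% Example use: turn a fractional centroid coordinate into a pixel index.
x = 29.5;
col = floor(x);             % 29
```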
