
How it Works

3 Formation of the Coefficients of Linearized Flow Equations and the Equation Solvers

3.33 Introduction

Once the system of equations has been developed and the initial and boundary

conditions are identified, the remaining task is the solution of the equations to

determine the future distribution of pressure and saturation in the reservoir.

This chapter demonstrates how the coefficients of the flow equations are constructed to give a linear set of algebraic equations, how the coefficient matrices are formed from these equations, and what characteristics those matrices have. It then covers the solution techniques and some special topics.

3.34 Generation of Coefficient Matrices

In the following section, the coefficient matrices corresponding to IMPES and

Fully Implicit Method will be discussed.

3.34.1 IMPES Coefficient Matrix

Let us write the IMPES Finite Difference Pressure Equation in its

simplest form:

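\[
\begin{aligned}
&\left(T^{n}_{o,i-\frac12} + T^{n}_{w,i-\frac12}\right)\left(P^{n+1}_{o,i-1} - P^{n+1}_{o,i}\right)
 + \left(T^{n}_{o,i+\frac12} + T^{n}_{w,i+\frac12}\right)\left(P^{n+1}_{o,i+1} - P^{n+1}_{o,i}\right) \\
&+ \left(T^{n}_{o,j-\frac12} + T^{n}_{w,j-\frac12}\right)\left(P^{n+1}_{o,j-1} - P^{n+1}_{o,j}\right)
 + \left(T^{n}_{o,j+\frac12} + T^{n}_{w,j+\frac12}\right)\left(P^{n+1}_{o,j+1} - P^{n+1}_{o,j}\right) \\
&+ \left(T^{n}_{o,k-\frac12} + T^{n}_{w,k-\frac12}\right)\left(P^{n+1}_{o,k-1} - P^{n+1}_{o,k}\right)
 + \left(T^{n}_{o,k+\frac12} + T^{n}_{w,k+\frac12}\right)\left(P^{n+1}_{o,k+1} - P^{n+1}_{o,k}\right) \\
&= Q_o + Q_w
\end{aligned}
\tag{1}
\]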

When constructing the coefficients, the following terminology is used:

East: i+1 Direction

West: i-1 Direction

3 - 118 Applied Reservoir Simulation by Dr. Tayyar Sezgin DALTABAN


North: j+1 Direction

South: j-1 Direction

North-East: k+1 Direction

South-West: k-1 Direction

These directions are shown in Figure 3-48. Then, the above equation

can be written in the following form:

Figure 3-48: Directional Definition of the Coefficients (central block ijk with its neighbours i-1, i+1, j-1, j+1, k-1 and k+1)

East Coefficient:

\[ E = T^{n}_{o,i+\frac12} + T^{n}_{w,i+\frac12} \tag{2} \]

West Coefficient:

\[ W = T^{n}_{o,i-\frac12} + T^{n}_{w,i-\frac12} \tag{3} \]

North Coefficient:

\[ N = T^{n}_{o,j+\frac12} + T^{n}_{w,j+\frac12} \tag{4} \]

South Coefficient:

\[ S = T^{n}_{o,j-\frac12} + T^{n}_{w,j-\frac12} \tag{5} \]

South West Coefficient:

\[ SW = T^{n}_{o,k-\frac12} + T^{n}_{w,k-\frac12} \tag{6} \]

North East Coefficient:

\[ NE = T^{n}_{o,k+\frac12} + T^{n}_{w,k+\frac12} \tag{7} \]

The resulting equation takes the following form in three dimensions:

\[
(SW)P_{o,k-1} + (S)P_{o,j-1} + (W)P_{o,i-1} + (C)P_{o,i,j,k} + (E)P_{o,i+1} + (N)P_{o,j+1} + (NE)P_{o,k+1} = RHS
\tag{8}
\]

where RHS = Q_o + Q_w and C = -(SW + S + W + E + N + NE).

For the 2-D case:

\[
(S)P_{o,j-1} + (W)P_{o,i-1} + (C)P_{o,i,j,k} + (E)P_{o,i+1} + (N)P_{o,j+1} = RHS
\tag{9}
\]

where C = -(S + W + E + N).

For the 1-D case:

\[
(W)P_{o,i-1} + (C)P_{o,i,j,k} + (E)P_{o,i+1} = RHS
\tag{10}
\]

where C = -(W + E).

Equations (8), (9) and (10) can be written in the following matrix equation form:

Ax = b (11)

where A is the coefficient matrix, b is the right hand side vector, and x is the vector of unknowns.
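As an illustration of how the 1-D form of the equation maps onto Ax = b, the following is a minimal sketch in Python with NumPy. The function name `assemble_1d` and the array `T` (one total oil plus water transmissibility per block interface) are assumptions made for this example, not names from the text. It places W, C = -(W + E) and E on each row.

```python
import numpy as np

def assemble_1d(T, rhs):
    """Assemble the 1-D coefficient matrix row by row.

    T[i] is the assumed total (oil + water) transmissibility of the
    interface between block i and block i+1, so len(T) == len(rhs) - 1.
    """
    n = len(rhs)
    A = np.zeros((n, n))
    for i in range(n):
        W = T[i - 1] if i > 0 else 0.0      # no West neighbour for the first block
        E = T[i] if i < n - 1 else 0.0      # no East neighbour for the last block
        if i > 0:
            A[i, i - 1] = W
        if i < n - 1:
            A[i, i + 1] = E
        A[i, i] = -(W + E)                  # central coefficient C
    return A, np.asarray(rhs, dtype=float)

# Hypothetical data for a 4-block system
A, b = assemble_1d(T=[2.0, 3.0, 1.5], rhs=[1.0, 0.0, 0.0, -1.0])
```

Because every row sums to zero, this simplest form is singular on its own; it becomes solvable once the terms omitted from the simplest form (for example accumulation or pressure-specified boundaries) are added to the diagonal.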

3.34.2 Simultaneous Solution and Fully Implicit Case

Recalling the simultaneous solution for pressure and saturation, the

following forms the coefficient matrix for an oil water system:

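Oil Equation:

\[
\begin{aligned}
&(PSWO)\,\delta P_{o,k-1} + (PSO)\,\delta P_{o,j-1} + (PWO)\,\delta P_{o,i-1} + (PCO)\,\delta P_{o,i,j,k} \\
&+ (PEO)\,\delta P_{o,i+1} + (PNO)\,\delta P_{o,j+1} + (PNEO)\,\delta P_{o,k+1} + (SSWO)\,\delta S_{w,k-1} \\
&+ (SSO)\,\delta S_{w,j-1} + (SWO)\,\delta S_{w,i-1} + (SCO)\,\delta S_{w,i,j,k} + (SEO)\,\delta S_{w,i+1} \\
&+ (SNO)\,\delta S_{w,j+1} + (SNEO)\,\delta S_{w,k+1} = RHS_o
\end{aligned}
\tag{12}
\]

Water Equation:

\[
\begin{aligned}
&(PSWW)\,\delta P_{o,k-1} + (PSW)\,\delta P_{o,j-1} + (PWW)\,\delta P_{o,i-1} + (PCW)\,\delta P_{o,i,j,k} \\
&+ (PEW)\,\delta P_{o,i+1} + (PNW)\,\delta P_{o,j+1} + (PNEW)\,\delta P_{o,k+1} + (SSWW)\,\delta S_{w,k-1} \\
&+ (SSW)\,\delta S_{w,j-1} + (SWW)\,\delta S_{w,i-1} + (SCW)\,\delta S_{w,i,j,k} + (SEW)\,\delta S_{w,i+1} \\
&+ (SNW)\,\delta S_{w,j+1} + (SNEW)\,\delta S_{w,k+1} = RHS_w
\end{aligned}
\tag{13}
\]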

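Then, with the first row of each block taken from the oil equation and the second row from the water equation, the matrix equation will be:

\[
\begin{aligned}
&\begin{bmatrix} PSWO & SSWO \\ PSWW & SSWW \end{bmatrix}
\begin{bmatrix} \delta P_o \\ \delta S_w \end{bmatrix}_{k-1}
+ \begin{bmatrix} PSO & SSO \\ PSW & SSW \end{bmatrix}
\begin{bmatrix} \delta P_o \\ \delta S_w \end{bmatrix}_{j-1}
+ \begin{bmatrix} PWO & SWO \\ PWW & SWW \end{bmatrix}
\begin{bmatrix} \delta P_o \\ \delta S_w \end{bmatrix}_{i-1} \\
&+ \begin{bmatrix} PCO & SCO \\ PCW & SCW \end{bmatrix}
\begin{bmatrix} \delta P_o \\ \delta S_w \end{bmatrix}_{i,j,k}
+ \begin{bmatrix} PEO & SEO \\ PEW & SEW \end{bmatrix}
\begin{bmatrix} \delta P_o \\ \delta S_w \end{bmatrix}_{i+1}
+ \begin{bmatrix} PNO & SNO \\ PNW & SNW \end{bmatrix}
\begin{bmatrix} \delta P_o \\ \delta S_w \end{bmatrix}_{j+1} \\
&+ \begin{bmatrix} PNEO & SNEO \\ PNEW & SNEW \end{bmatrix}
\begin{bmatrix} \delta P_o \\ \delta S_w \end{bmatrix}_{k+1}
= \begin{bmatrix} RHS_o \\ RHS_w \end{bmatrix}
\end{aligned}
\tag{14}
\]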

It is clear from the above equation that two equations are solved for each grid block. This is what makes the approach more expensive than IMPES in terms of linear solver cost.

The above equation can be represented in the following form:

\[
(\overline{SW})\,\delta x_{k-1} + (\overline{S})\,\delta x_{j-1} + (\overline{W})\,\delta x_{i-1} + (\overline{C})\,\delta x_{i,j,k}
+ (\overline{E})\,\delta x_{i+1} + (\overline{N})\,\delta x_{j+1} + (\overline{NE})\,\delta x_{k+1} = RHS
\tag{15}
\]

where an overbar refers to a matrix (in this case a 2 x 2 sub-matrix) and x is a vector of pressure and saturation.

3.34.3 Coefficient Matrix Construction

1. 1-Dimensional Case: Assume that we have a linear 10 grid block problem. From Figure 3-49 one can construct the connections of each grid block as follows:

Diagonal Address Link

1 2

2 1 3

3 2 4

4 3 5

5 4 6

6 5 7

7 6 8

8 7 9

9 8 10

10 9

This address link and the resulting tridiagonal matrix form can be related directly to the linearized 1-D flow equation above, which is of the form:

\[ (W)P_{o,i-1} + (C)P_{o,i,j,k} + (E)P_{o,i+1} = RHS \]

Figure 3-49: 1-Dimensional 10 Grid Block System

1 2 3 4 5 6 7 8 9 10

Diagonal Linked Nodes Direction

1 2 East

2 1 3 West East

3 2 4 West East

4 3 5 West East

5 4 6 West East

6 5 7 West East

7 6 8 West East

8 7 9 West East

9 8 10 West East

10 9 West

I Coefficient

1 CE (W does not exist since i - 1 = 0)

2 WCE

3 WCE

4 WCE

5 WCE

6 WCE

7 WCE

8 WCE

9 WCE

10 WC (E does not exist since i + 1 = n + 1)

If we compare the coefficients with the address links, it is clear that the number of entries in the address link plus one (for the diagonal term) equals the number of entries in the coefficient list. The positions of the coefficients are defined by the address links, which are also the column numbers for the row identified by the diagonal number. The matrix form is given in Figure 3-50.

Figure 3-50: Pattern of the Coefficient Matrix for 1 Dimensional 10

Grid Block System

2. 2-Dimensional Case: The shape of the domain, the numbering of the grid blocks and the corresponding matrix form are given in Figure 3-51. There are 9 grid blocks in that areal domain. For the 20 grid block case (a 4x5 system), see Figure 3-52.

Figure 3-51: 2-Dimensional 3x3 Grid Block System Coefficients

Grid block numbering:

1 2 3
4 5 6
7 8 9

Diagonal    Linked Nodes
            S    W    E    N
1           -    -    2    4
2           -    1    3    5
3           -    2    -    6
4           1    -    5    7
5           2    4    6    8
6           3    5    -    9
7           4    -    8    -
8           5    7    9    -
9           6    8    -    -

Figure 3-52: Areal Grid Realization

1 2 3 4

5 6 7 8

9 10 11 12

13 14 15 16

17 18 19 20

Diagonal Address Link

1 2 5

2 1 3 6

3 2 4 7

4 3 8

5 1 6 9

6 2 5 7 10

7 3 6 8 11

8 4 7 12

9 5 10 13

10 6 9 11 14

11 7 10 12 15

12 8 11 16

13 9 14 17

14 10 13 15 18

15 11 14 16 19

16 12 15 20

17 13 18

18 14 17 19

19 15 18 20

20 16 19

The corresponding matrix pattern is shown in Figure 3-53.

Figure 3-53: Pattern of Coefficient Matrix for a 4x5 Grid System

This address link and the resulting pentadiagonal (one main and 4 co-diagonals) matrix form can be related directly to the linearized 2-D flow equation above, which is of the form:

\[ (S)P_{o,j-1} + (W)P_{o,i-1} + (C)P_{o,i,j,k} + (E)P_{o,i+1} + (N)P_{o,j+1} = RHS \]

In the following table a zero index means that the neighbour does not exist, and the last five columns list the coefficients present in each row (in the order i-1, j-1, i,j, j+1, i+1).

Blk No   i-1   j-1   i,j   j+1   i+1     Coefficients
 1       0     0     1,1   2     2       -  -  C  N  E
 2       0     1     1,2   3     2       -  S  C  N  E
 3       0     2     1,3   4     2       -  S  C  N  E
 4       0     3     1,4   0     2       -  S  C  -  E
 5       1     0     2,1   2     3       W  -  C  N  E
 6       1     1     2,2   3     3       W  S  C  N  E
 7       1     2     2,3   4     3       W  S  C  N  E
 8       1     3     2,4   0     3       W  S  C  -  E
 9       2     0     3,1   2     4       W  -  C  N  E
10       2     1     3,2   3     4       W  S  C  N  E
11       2     2     3,3   4     4       W  S  C  N  E
12       2     3     3,4   0     4       W  S  C  -  E
13       3     0     4,1   2     5       W  -  C  N  E
14       3     1     4,2   3     5       W  S  C  N  E
15       3     2     4,3   4     5       W  S  C  N  E
16       3     3     4,4   0     5       W  S  C  -  E
17       4     0     5,1   2     0       W  -  C  N  -
18       4     1     5,2   3     0       W  S  C  N  -
19       4     2     5,3   4     0       W  S  C  N  -
20       4     3     5,4   0     0       W  S  C  -  -

The address links and the positions of the coefficients are in full agreement. This aspect of the coefficients makes them easy to program.
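To illustrate how easily the address links can be programmed, the following is a minimal Python sketch for a 2-D grid numbered row by row, as in Figure 3-52. The function name `address_links` and its arguments are assumptions made for this example.

```python
def address_links(nx, ny):
    """Return {block number: sorted list of linked block numbers}.

    Blocks are numbered from 1, row by row, with nx blocks per row
    (the standard ordering of Figure 3-52 has nx = 4 and ny = 5).
    """
    links = {}
    for row in range(ny):
        for col in range(nx):
            n = row * nx + col + 1
            nb = []
            if row > 0:
                nb.append(n - nx)      # neighbour in the previous row
            if col > 0:
                nb.append(n - 1)       # neighbour to the left
            if col < nx - 1:
                nb.append(n + 1)       # neighbour to the right
            if row < ny - 1:
                nb.append(n + nx)      # neighbour in the next row
            links[n] = nb
    return links

links = address_links(4, 5)
for block in (1, 6, 20):
    print(block, links[block])
```

Each list gives the column numbers of the off-diagonal entries in the row of the matrix identified by the block number, so the pattern of Figure 3-53 follows directly from this dictionary.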

3.35 Solution of Coefficient Matrices

Before introducing the solution techniques, it is necessary to list some of the

characteristics of the coefficient matrices:

• They are diagonally dominant, i.e. the magnitude of the diagonal term is greater than or equal to the sum of the magnitudes of the off-diagonal terms.

• Their incidence matrices are symmetrical with respect to the main diagonal. Depending on the weighting schemes, the entries can be symmetrical too.

• Since the number of equations is always equal to the number of unknowns, the matrix is square.

There are two main types of solution techniques for the coefficient matrices of

the linearized flow equations, namely:

• Direct Methods

• Iterative Methods

3.35.1 Direct Methods

Direct methods are the techniques by which the unknowns of the

equations are eliminated one by one until all of the equations are

solved. The solution is theoretically exact but with computer

solutions, due to rounding off errors creeping in, they may not be as

exact in practice.

A crucial ingredient of direct methods is Gaussian Elimination. The

objective is to eliminate the first unknown from all the equations

except the first equation. This leaves the systems with one less

equation and one less unknown. This process is repeated for the

remaining unknowns resulting in smaller and smaller number of

equations and finally the system is reduced to a scalar form which can

be solved by division operation. An example for Gaussian

Elimination can be given as follows:

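Original Matrix Equation:

\[
\begin{bmatrix} a_{11} & a_{12} & a_{13} \\ a_{21} & a_{22} & a_{23} \\ a_{31} & a_{32} & a_{33} \end{bmatrix}
\begin{bmatrix} x_1 \\ x_2 \\ x_3 \end{bmatrix}
=
\begin{bmatrix} b_1 \\ b_2 \\ b_3 \end{bmatrix}
\]

Elimination Process (coefficient matrix and right hand side at each step):

\[
\underbrace{\left[\begin{array}{ccc|c} a_{11} & a_{12} & a_{13} & b_1 \\ a_{21} & a_{22} & a_{23} & b_2 \\ a_{31} & a_{32} & a_{33} & b_3 \end{array}\right]}_{\text{Original}}
\;\rightarrow\;
\underbrace{\left[\begin{array}{ccc|c} a_{11} & a_{12} & a_{13} & b_1 \\ 0 & a'_{22} & a'_{23} & b'_2 \\ 0 & a'_{32} & a'_{33} & b'_3 \end{array}\right]}_{\text{Step 1}}
\;\rightarrow\;
\underbrace{\left[\begin{array}{ccc|c} a_{11} & a_{12} & a_{13} & b_1 \\ 0 & a'_{22} & a'_{23} & b'_2 \\ 0 & 0 & a''_{33} & b''_3 \end{array}\right]}_{\text{Step 2}}
\]

where

\[
a'_{22} = a_{22} - \frac{a_{12}a_{21}}{a_{11}}, \qquad a'_{23} = a_{23} - \frac{a_{13}a_{21}}{a_{11}}
\]

\[
a'_{32} = a_{32} - \frac{a_{12}a_{31}}{a_{11}}, \qquad a'_{33} = a_{33} - \frac{a_{13}a_{31}}{a_{11}}
\]

\[
b'_{2} = b_{2} - \frac{b_{1}a_{21}}{a_{11}}, \qquad b'_{3} = b_{3} - \frac{b_{1}a_{31}}{a_{11}}
\]

The double-primed terms of Step 2 are obtained in the same way, with a'_{22} as the pivot element. The important feature of Gaussian Elimination here is that at each stage of the calculations, the pivot row is multiplied by the ratio of the entry to be eliminated to the pivot element and subtracted from each of the following rows. This subtraction process is of particular importance and is the main cause of extra storage requirements, as we shall see in the next section.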
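The elimination and the final back substitution can be sketched in a few lines of Python with NumPy. This is a minimal illustration without pivoting, which assumes that no zero pivot is encountered (reasonable for a diagonally dominant matrix). The function name `gauss_solve` and the test matrix are assumptions made for this example.

```python
import numpy as np

def gauss_solve(A, b):
    """Solve Ax = b by Gaussian elimination without pivoting."""
    A = np.array(A, dtype=float)
    b = np.array(b, dtype=float)
    n = len(b)
    # Forward elimination: remove unknown p from all rows below row p
    for p in range(n - 1):
        for r in range(p + 1, n):
            m = A[r, p] / A[p, p]          # multiplier built from the pivot element
            A[r, p:] -= m * A[p, p:]
            b[r] -= m * b[p]
    # Back substitution on the upper triangular system
    x = np.zeros(n)
    for r in range(n - 1, -1, -1):
        x[r] = (b[r] - A[r, r + 1:] @ x[r + 1:]) / A[r, r]
    return x

x = gauss_solve([[4.0, -1.0, 0.0],
                 [-1.0, 4.0, -1.0],
                 [0.0, -1.0, 4.0]],
                [3.0, 2.0, 3.0])
```

For this small diagonally dominant system the exact solution is x1 = x2 = x3 = 1, which can be checked by substitution into the three equations.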

Ordering Techniques in Direct Methods

Coefficient matrices of reservoir simulators are extremely sparse. By this we mean that the number of non-zero entries in the coefficient matrices is very low compared with the total number of matrix entries.

An inevitable consequence of Gaussian Elimination is the continuous generation of new non-zero elements as the terms in the equations are manipulated and eliminated. These new non-zero elements are called fill-in and are defined as follows:

Let A be the sparse coefficient matrix. The original non-zero structure of this matrix is defined by Nonz(A) = {(i,j) | aij ≠ 0 and i ≠ j}. Let F be the matrix containing both the original and the created non-zeros; then Fill(A) = Nonz(F) - Nonz(A).

Therefore, any method of reducing Fill (A) is of great interest and

that forms the main principle of ordering. The following is an

example:

x x x x

x x 0 0

x 0 x 0

x 0 0 x

Application of Gaussian Elimination to this matrix results in a full matrix, that is, Fill(A) = 16 - 10 = 6. If, however, we reorder the matrix into the following form:

x 0 0 x

0 x 0 x

0 0 x x

x x x x

there is no fill in and Fill (A) = 10 - 10 = 0.
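The two fill-in counts can be checked with a short symbolic elimination, which tracks only the positions of the non-zeros and not their values. The following Python sketch is illustrative; the function name `count_fill` and the pattern literals are assumptions made for this example.

```python
def count_fill(pattern):
    """Count the new non-zeros created by Gaussian elimination.

    pattern is a square list of lists of 0/1 flags marking the
    non-zero positions; rows are eliminated in the given order.
    """
    n = len(pattern)
    P = [[bool(v) for v in row] for row in pattern]
    fill = 0
    for p in range(n):                      # pivot row
        for r in range(p + 1, n):           # rows below the pivot
            if P[r][p]:
                for c in range(p + 1, n):
                    if P[p][c] and not P[r][c]:
                        P[r][c] = True      # a zero becomes non-zero
                        fill += 1
    return fill

first_ordering = [[1, 1, 1, 1],
                  [1, 1, 0, 0],
                  [1, 0, 1, 0],
                  [1, 0, 0, 1]]
second_ordering = [[1, 0, 0, 1],
                   [0, 1, 0, 1],
                   [0, 0, 1, 1],
                   [1, 1, 1, 1]]
print(count_fill(first_ordering), count_fill(second_ordering))  # prints: 6 0
```

The only difference between the two patterns is the position of the fully coupled row and column, which is exactly what an ordering scheme controls.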

Ordering matrices can be performed in the following form:

• Ordering into a desirable form

• Near Optimal Ordering

Ordering into a desirable form requires a preset pattern of the

coefficient matrix. Some of the patterns available in the Petroleum

Literature are Standard Gaussian Ordering, D2 Ordering, Cyclic 2

Ordering, Zebra Ordering and D4 Ordering. Figures 3-52 and 3-53 are the results of standard ordering. Figures 3-54 and 3-55 show the D4 ordering.

Figure 3-54: 4x5 Areal Grid Realization - D4 Ordering

1 11 2 12

13 3 14 4

5 15 6 16

17 7 18 8

9 19 10 20

Figure 3-55: Matrix Pattern for D-4 Ordering, Before Elimination.

Diagonal Address Link

1 11 13

2 11 12 14

3 11 13 14 15

4 12 14 16

5 13 15 17

6 14 15 16 18

7 15 17 18 19

8 16 18 20

9 17 19

10 18 19 20

11 1 2 3

12 2 4

13 1 3 5

14 2 3 4 6

15 3 5 6 7

16 4 6 8

17 5 7 9

18 6 7 8 10

19 7 9 10

20 8 10

From Figure 3-55, it is clear that, in applying Gaussian elimination, only the lower half of the matrix needs to be triangularized. Hence, compared with standard ordering, the number of arithmetic operations and the storage requirements are cut at least by half.
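The numbering of Figure 3-54 and the address links listed above can be reproduced with a short Python sketch. It assumes the rule read off the figure: alternate blocks are numbered first, row by row, and the remaining blocks afterwards. The function names `d4_numbering` and `neighbour_links` are assumptions made for this example.

```python
def d4_numbering(nx, ny):
    """Number alternate blocks first (row by row), then the rest."""
    cells = [(r, c) for r in range(ny) for c in range(nx)]
    first = [rc for rc in cells if (rc[0] + rc[1]) % 2 == 0]
    second = [rc for rc in cells if (rc[0] + rc[1]) % 2 == 1]
    return {rc: k + 1 for k, rc in enumerate(first + second)}

def neighbour_links(numbering):
    """Return {block number: sorted numbers of the areal neighbours}."""
    links = {}
    for (r, c), n in numbering.items():
        nb = [numbering[(r + dr, c + dc)]
              for dr, dc in ((-1, 0), (0, -1), (0, 1), (1, 0))
              if (r + dr, c + dc) in numbering]
        links[n] = sorted(nb)
    return links

numbering = d4_numbering(nx=4, ny=5)
links = neighbour_links(numbering)
print(links[1], links[3], links[12])
```

In this numbering every block from 1 to 10 is linked only to blocks numbered 11 to 20, so the upper-left quarter of the matrix is purely diagonal and elimination only has to work on the lower half.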

Figure 3-56 shows the pattern of the matrix after the triangularization process, where N represents the new non-zeros; they accumulate around the diagonal.

Figure 3-56: D-4 With Non-Zeroes Created After Elimination.

Figure 3-57 shows the relative performance of these two ordering schemes, where W3 is the number of arithmetic operations required to invert the D4-ordered matrix and W1 is the corresponding number for standard ordering. As the number of grid blocks increases, there is a rapid initial decline in the ratio of W3 to W1. Beyond a certain grid size, the ratio remains almost constant. Another interesting observation is that D4 becomes more efficient as the ratio between the number of grid blocks in the x-direction and the y-direction decreases.

Figure 3-57: Computational Cost Comparisons for Different

Ordering Schemes

For near optimal ordering, there are three main strategies:

1. Scheme-I (Figure 3-58, Panel a): Number the rows according to the number of non-zero off-diagonal terms before the actual elimination process takes place. In this scheme, the rows with only one off-diagonal term are numbered first, those with two terms second, and so on, with those with the most terms being last.

2. Scheme-II (Figure 3-58, Panel b): Number the rows so that at each step of the elimination process the next row to be operated upon is the one with the fewest non-zero terms. If more than one row meets this criterion, any one of them is selected. This scheme requires keeping track of the non-zero terms that accumulate during the elimination process.

3. Scheme-III (Figure 3-58, Panel c): Number the rows so that at each step of the process, the next row to be operated upon is the one that will introduce the fewest new non-zero terms. If more than one row meets this criterion, select any one of them. This process involves a trial of every feasible alternative at each step of the elimination process.

Figure 3-58: Near Optimal Ordering Techniques


3.35.2 Iterative Techniques

Iterative techniques start from an approximate solution and refine it systematically until it converges to the exact answer (see Figure 3-59). The incentive to develop iterative techniques is their low cost and storage requirements. In direct methods, we have seen that Gaussian Elimination may create new fill-ins. With iterative techniques, either no fill-ins appear, or the fill-ins introduced to accelerate the solution are usually negligible compared with those of direct methods, especially when inverting large sparse matrices. Hence iterative techniques reduce both the memory required to store the matrix and the amount of arithmetic required to arrive at the solution.

Figure 3-59: Iterative Techniques


The oil industry has used many types of iterative solvers. We will only give the names of these techniques, with a brief explanation where necessary. Conventional iterative solvers have difficulty coping with the increasing complexity of the reservoir engineering problems modeled by the simulators.

• Point Jacobi and Gauss-Seidel Methods

• Point Successive Overrelaxation: This technique uses an acceleration parameter (or amplification parameter), denoted by w. The optimal value of this parameter can be determined by analyzing the iteration matrix evaluated at w=1. On the other hand, the most practical way to determine the best value of w is simply to observe the computer time required for solving the pressure equation for different values of w. This can be done by varying w on successive time steps or on successive runs. Figure 3-60 shows a typical plot of the computer time required for convergence, determined experimentally, as a function of w. Note that the best value of w is below the theoretically optimal value of w.

• Alternating Direction Method (ADIP)

• Stone's Implicit Method (SIP): This was designed to achieve significantly faster convergence than the other techniques mentioned above. It is also not particularly sensitive to the degree of nonlinearity in the problem, which is a particular limitation of the ADIP methods.
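The effect of the parameter w in point successive overrelaxation can be observed with a small experiment. The Python sketch below is illustrative only: the function name `sor`, the tridiagonal test matrix and the tolerance are assumptions made for this example. Setting w = 1 reduces the sweep to the Gauss-Seidel method.

```python
import numpy as np

def sor(A, b, w, tol=1e-8, max_iter=10_000):
    """Point SOR; returns the solution and the number of sweeps used."""
    n = len(b)
    x = np.zeros(n)
    for sweep in range(1, max_iter + 1):
        for i in range(n):
            # Sum of the off-diagonal terms using the latest available values
            sigma = A[i] @ x - A[i, i] * x[i]
            gauss_seidel_value = (b[i] - sigma) / A[i, i]
            x[i] += w * (gauss_seidel_value - x[i])
        if np.linalg.norm(b - A @ x) <= tol * np.linalg.norm(b):
            return x, sweep
    return x, max_iter

# Hypothetical 1-D test problem: tridiagonal matrix with 2 on the diagonal
n = 30
A = 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)
b = np.ones(n)

x_gs, sweeps_gs = sor(A, b, w=1.0)     # Gauss-Seidel
x_sor, sweeps_sor = sor(A, b, w=1.8)   # overrelaxed
print(sweeps_gs, sweeps_sor)
```

Repeating the call for a range of w values and recording the sweep counts gives the kind of experimental curve described for Figure 3-60.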

Although the low computational cost makes iterative techniques attractive compared with direct methods, this conclusion may not always be valid, as we shall discuss in the following section.

Figure 3-60: Selection of Optimum Relaxation Parameter

3.35.3 Direct Versus Conventional Iterative Techniques

The overall efficiency of a solution method often depends on the characteristics of the particular problem at hand. It is therefore difficult to generalize; however, the following points are important in assessing the possible performance of any solution technique.

• Order of Matrix and Available Computer Storage: Iterative methods are generally considered to be very efficient in terms of storage since they need only store the nonzero entries of the matrix. For example, for a 20 x 20 x 20 grid (i.e. 8000 active blocks), the storage required for an iterative method is 56 x 10^3 matrix entries (for a 7 point finite difference approximation). On the other hand, for a direct band solver (Standard Gaussian Elimination) this storage would be 6.4 x 10^6. It is this property of iterative techniques which has made them preferable even if the cost of the arithmetic operations involved becomes higher than that of direct methods.

• The advent of new generation computers (e.g. single and multi-processing machines) has somewhat reduced the disadvantages of the direct methods.

• The Form of the Equations: The coefficient matrices of reservoir simulation exhibit diagonal dominance, that is, the magnitude of the main diagonal term is equal to or greater than the sum of the magnitudes of the off-diagonal terms. It is greater when terms come from the right hand side of the equations. As the time steps get bigger, the diagonal dominance becomes weaker. This condition reduces the efficiency of the iterative solvers. Also, the irregularity of the reservoir domains may cause a marginal increase in the cost of the iterative methods due to missing blocks. These problems do not exist for direct methods.

• The Number of Times a Similar Problem is Likely to Occur: In cases where the same coefficient matrix B is to be solved with a changing right hand side (namely, in compressible single phase fluid flow simulation), direct methods are the most efficient techniques, because the transformation of the matrix into an upper triangular one is carried out only once. Storage availability is still a limiting factor. Iterative techniques, however, require the solution to be refined at each time step of the simulation procedure by performing matrix vector calculations.

• Ill Conditioning: Weak diagonal dominance and/or negative compressibility/transmissibility ratios may result in ill conditioning. Negative ratios are likely to arise in treating well bore terms in fully implicit simulation formulations and in the simulation of stream cycling and gas condensate problems. Under these conditions, the coefficient matrix possesses one or more eigenvalues with negative real parts, a characteristic that inhibits the application of well known iterative techniques like SIP, LSOR, etc.

• Miscellaneous Factors: Most of the iterative techniques require a selection of optimal convergence parameters to achieve a feasible convergence. These parameters are determined empirically and their optimization is often troublesome.

The performance of the iterative techniques depends on the initial guess of the solution, whereas direct methods require neither optimal parameter selection nor an initial approximation to the solution, as they provide an exact answer themselves.
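The storage figures quoted above for the 20 x 20 x 20 grid can be reproduced with a few lines of arithmetic. The Python sketch below assumes that the band solver stores a band of about 2 x nx x ny entries per row, which is the width that matches the quoted 6.4 x 10^6; this width is an assumption of the example, not a statement from the text.

```python
nx = ny = nz = 20
n_blocks = nx * ny * nz                  # 8000 active blocks

# Iterative method: one main diagonal plus six co-diagonals per block
iterative_storage = 7 * n_blocks

# Direct band solver: assumed band of about 2 * nx * ny entries per row
band_width = 2 * nx * ny
band_storage = n_blocks * band_width

print(iterative_storage, band_storage)   # prints: 56000 6400000
```

Under this assumption the band solver needs roughly 114 times as many stored entries as the iterative method for this grid.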


3.35.4 Semi Direct/Semi Iterative: Conjugate Gradient Algorithm,

Preconditioned by Nested Factorization

Due to the difficulties involved in implementing iterative techniques and the resource limitations of direct methods, much research has been carried out to find a technique that combines the relative merits of both direct and iterative techniques. To this end, the method of Conjugate Gradients is used as a compromise. The Conjugate Gradient Method (CGM) was first introduced into the literature as an equation solver by Hestenes and Stiefel in 1952. In exact arithmetic, the method is guaranteed to converge in at most N iterations, where N is the order of the matrix. In this respect, its cost is equivalent to that of direct methods. Reid in 1971 evaluated the method and concluded that satisfactory convergence might occur substantially earlier than N iterations. His comparative study based on sparse matrices showed a clear superiority of Conjugate Gradient Methods over the Successive Relaxation iterative technique. One of the major disadvantages of CGM as presented by its inventors was that the technique was limited to symmetric matrices. This shortcoming was overcome later by Concus and Golub of Stanford University (Report STAN-CS 76-535), who prepared a generalized conjugate gradient method which is valid for asymmetric as well as indefinite systems of equations. After this, many authors started to accelerate the method by preconditioning, a technique that replaces the original coefficient matrix by an approximation that can easily be inverted. Since then, the method has become popular and almost every commercial simulator has used a variation of it. Some of the advantages of the method may be summarized as follows:

• No convergence acceleration parameter is required,

• It imposes fewer restrictions on the coefficient matrix for optimal behavior than most of the iterative techniques, and

• The 2-norm of the error vector decreases monotonically at each iteration.

In the last two decades, a procedure invented by Vinsome (SPE 5729) has been used extensively. This method is called ORTHOMIN. The state of the art simulator ECLIPSE uses a variant of the ORTHOMIN method based on a preconditioning technique invented by I. Cheshire (GeoQuest) and called Nested Factorization. In the following sections, we will briefly look at the CGM method and nested factorization.

1. Nested Factorization as a Preconditioner

For every iterative equation solver, one of the essential components is a procedure which computes an approximation to the true solution. At each step of iteration, this procedure is applied to correct the errors carried over from the previous iteration steps. The approximation is defined by a preconditioning matrix, say B. Then, the original matrix equation

\[ AX = RHS \tag{16} \]

is multiplied by B^{-1}, an approximate inverse of A:

\[ B^{-1}AX = B^{-1}RHS \tag{17} \]

In order to understand the characteristics of the nested factorization procedure, we will focus on a single-phase matrix. To facilitate visualization, we will use a 5x4x2 system.

In the matrix given by Figure 3-61, the connections in terms of directions can be expressed as follows:

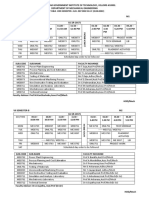

Figure 3-61: Standard Coefficient Matrix

Table 3-2: Column and Row Addresses for the Diagonal and Co-Diagonal Entries (a dash means that the connection does not exist).

D    W    E    S    N    SW   NE    |    D    W    E    S    N    SW   NE
1    -    2    -    6    -    21    |    21   -    22   -    26   1    -
2    1    3    -    7    -    22    |    22   21   23   -    27   2    -
3    2    4    -    8    -    23    |    23   22   24   -    28   3    -
4    3    5    -    9    -    24    |    24   23   25   -    29   4    -
5    4    -    -    10   -    25    |    25   24   -    -    30   5    -
6    -    7    1    11   -    26    |    26   -    27   21   31   6    -
7    6    8    2    12   -    27    |    27   26   28   22   32   7    -
8    7    9    3    13   -    28    |    28   27   29   23   33   8    -
9    8    10   4    14   -    29    |    29   28   30   24   34   9    -
10   9    -    5    15   -    30    |    30   29   -    25   35   10   -
11   -    12   6    16   -    31    |    31   -    32   26   36   11   -
12   11   13   7    17   -    32    |    32   31   33   27   37   12   -
13   12   14   8    18   -    33    |    33   32   34   28   38   13   -
14   13   15   9    19   -    34    |    34   33   35   29   39   14   -
15   14   -    10   20   -    35    |    35   34   -    30   40   15   -
16   -    17   11   -    -    36    |    36   -    37   31   -    16   -
17   16   18   12   -    -    37    |    37   36   38   32   -    17   -
18   17   19   13   -    -    38    |    38   37   39   33   -    18   -
19   18   20   14   -    -    39    |    39   38   40   34   -    19   -
20   19   -    15   -    -    40    |    40   39   -    35   -    20   -

The above table includes the diagonal and the 6 co-diagonals. In the factorization that follows, the diagonals are denoted as follows:

D    Main diagonal
W    Upper co-diagonal connected to the main diagonal in positive x-direction
E    Lower co-diagonal connected to the main diagonal in negative x-direction
N    Upper co-diagonal connected to the main diagonal in positive y-direction
S    Lower co-diagonal connected to the main diagonal in negative y-direction
NW   Upper co-diagonal connected to the main diagonal in positive z-direction
SE   Lower co-diagonal connected to the main diagonal in negative z-direction

In order to solve AX = RHS, we need a matrix B that approximates A and for which B^{-1}y is easy to compute. The speed of convergence of the iterative method then depends on how well B approximates A.

We may represent the coefficient matrix A by the following:

\[ A = D + E + W + S + N + SE + NW \tag{18} \]

The nested factorization that replaces (18) may be summarized as follows:

\[ B = (\Omega + SE)\,\Omega^{-1}\,(\Omega + NW) \tag{19} \]

\[ \Omega = (\theta + S)\,\theta^{-1}\,(\theta + N) \tag{20} \]

\[ \theta = (\Gamma + E)\,\Gamma^{-1}\,(\Gamma + W) \tag{21} \]

\[ \Gamma = D - E\,\Gamma^{-1}W - \sum \left( S\,\theta^{-1}N + SE\,\Omega^{-1}NW \right) \tag{22} \]

where

Γ    Diagonal matrix
θ    Block diagonal matrix (blocked by line)
Ω    Block diagonal matrix (blocked by plane)

By combining Equations (19) to (22), a new expression for B may be obtained:

\[ B = A + \left( S\,\theta^{-1}N + SE\,\Omega^{-1}NW \right) - \sum \left( S\,\theta^{-1}N + SE\,\Omega^{-1}NW \right) \tag{23} \]

In two dimensions, SE = NW = 0, leading to

\[ B = A + \left( S\,\theta^{-1}N \right) - \sum \left( S\,\theta^{-1}N \right) \tag{24} \]

In one dimension

\[ B = (\Gamma + E)\,\Gamma^{-1}\,(\Gamma + W) \tag{25} \]

Equation (25) is an exact Cholesky-type decomposition of A.
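The one-dimensional case can be verified numerically. In one dimension S, N, SE and NW vanish, so Equation (22) reduces to Γ = D - E Γ^{-1} W, which can be evaluated cell by cell. The Python sketch below is illustrative; the matrix values and variable names are assumptions made for this example.

```python
import numpy as np

n = 6
diag = 4.0 * np.ones(n)                       # main diagonal D
lower = -np.linspace(0.5, 1.5, n - 1)         # lower co-diagonal (E in the notation above)
upper = -np.linspace(1.0, 2.0, n - 1)         # upper co-diagonal (W in the notation above)

D = np.diag(diag)
E = np.diag(lower, k=-1)
W = np.diag(upper, k=1)
A = D + E + W

# Gamma = D - E Gamma^-1 W, evaluated one cell at a time:
# each entry only needs the Gamma entry of the previous cell.
gamma = np.empty(n)
gamma[0] = diag[0]
for i in range(1, n):
    gamma[i] = diag[i] - lower[i - 1] * upper[i - 1] / gamma[i - 1]

G = np.diag(gamma)
B = (G + E) @ np.diag(1.0 / gamma) @ (G + W)  # Equation (25)

print(np.max(np.abs(B - A)))                  # difference at round-off level
```

Expanding the product gives B = Γ + E + W + E Γ^{-1} W, and substituting the definition of Γ recovers D + E + W, which is why the factorization is exact in one dimension.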

The best choice for the preconditioning matrix is one that reflects the structure of A. B in Equation (23) shows a similar structure to A. The solution of a system with B can be performed as follows:

a. At the outermost level, the block triangular matrix equation given below is solved:

\[ (\Omega + SE)\,\alpha = y \tag{26} \]

• Since Ω is block diagonal, these equations can be solved a plane at a time using

\[ \alpha = \Omega^{-1}\,(y - SE\,\alpha) \tag{27} \]

• Although α appears on both sides of Equation (27), the term SE α only requires the solution from the previous plane, which is already known. Within each plane, the equations are solved a line at a time using

\[ \beta = \theta^{-1}\,(y - S\,\beta) \tag{28} \]

It is clear from Equation (28) again that the right hand side contains only known values. For example, Sβ is linked with the solution from the previous line.

b. Evaluation of Γ (must be done before the iteration starts): Similar considerations to step a apply here as well.

• Calculations proceed a plane at a time.

• Within each plane, calculations proceed a line at a time.

• At each stage of the calculations, the following are used:

- elements of Ω^{-1} from the previous plane

- elements of θ^{-1} from the previous line

- elements of Γ^{-1} from the previous cell.

2. Conjugate Gradient Method Embedding Nested Factorization as a Preconditioner.

Once the preconditioning matrix is found, the approach proceeds as follows:

Step 1: Set the iteration counter k to zero and compute the initial guess X^0 to the solution vector with the help of the preconditioning matrix B, as follows:

\[ X^{0} = B^{-1}\,RHS \tag{29} \]

Step 2: Determine the residual vector R^0.

\[ R^{0} = RHS - A\,X^{0} \tag{30} \]

One can use the norm of the residual vector to assess the quality of the estimated solution. The procedure terminates if the residual norm is less than or equal to the preset tolerance.

Step 3: Estimate the search direction vector D^k using

\[ D^{k} = B^{-1}R^{k} \tag{31} \]

Step 4: Estimate the corresponding movement direction, D_r^k, in residual space

\[ D_r^{k} = A\,D^{k} \]

If A and B were the same, then the exact solution could be found by progressing the iteration along the direction vector:

\[ X = X^{k} + D^{k} \tag{32} \]

\[ R = RHS - A\,(X^{k} + D^{k}) = R^{k} - D_r^{k} = 0 \quad (\text{if } B - A = 0) \tag{33} \]

Step 5: Determine a vector Q^k in the space defined by D^k and the previous n search directions D^{k-1}, D^{k-2}, ..., D^{k-n} so that the residual corresponding to

\[ X^{k+1} = X^{k} + \alpha^{k} Q^{k} \tag{34} \]

is minimized for some constant α^k. The corresponding vector in residual space will be:

\[ Z^{k} = A\,Q^{k} \tag{35} \]

The optimum Q^k is given by

\[ Q^{k} = D^{k} + \sum_{i=1}^{n} \beta_i^{k}\, Q^{k-i} \tag{36} \]

\[ Z^{k} = A\,Q^{k} = D_r^{k} + \sum_{i=1}^{n} \beta_i^{k}\, Z^{k-i} \tag{37} \]

\[ \beta_i^{k} = -\frac{D_r^{k} \cdot Z^{k-i}}{\left\| Z^{k-i} \right\|^{2}} \tag{38} \]

Here, Q^k, Q^{k-1}, ..., Q^{k-n} are conjugate directions, and Z^k, Z^{k-1}, ..., Z^{k-n} are mutually orthogonal residual space vectors.

Step 6: Determine α^k, which minimizes the norm of R^{k+1}:

\[ R^{k+1} = R^{k} - \alpha^{k} Z^{k} \tag{39} \]

\[ \left\| R^{k+1} \right\|^{2} = \left\| R^{k} \right\|^{2} - 2\alpha^{k}\, R^{k} \cdot Z^{k} + (\alpha^{k})^{2} \left\| Z^{k} \right\|^{2} \tag{40} \]

which is minimized for

\[ \alpha^{k} = \frac{R^{k} \cdot Z^{k}}{\left\| Z^{k} \right\|^{2}} \tag{41} \]

Step 7: Update the solution and residual vectors; the residual follows from Equation (39) and the solution from

\[ X^{k+1} = X^{k} + \alpha^{k} Q^{k} \tag{42} \]

Step 8: If (n+1) is equal to or greater than specified number of search

directions (Nstack), then

k

• Find the smallest β i

• Reject Zk-1 and Qk-i

• Set Zk-i-(j+1) = Zk-i-j, and

• Set Qk-i-(j+1) = Qk-i-j

These will ensure that the next iteration will be done using the current

most significant Nstack -1 residuals.

Step 9: Check if the residuals are small enough. If they are not small

enough, go back to step 3.
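The nine steps above can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the implementation of any particular simulator: the names `orthomin`, `dot`, `matvec`, `apply_b_inv` and `jacobi` are hypothetical, the preconditioner is a simple diagonal (Jacobi) one chosen only to keep the example short, the convergence test uses a relative tolerance on the residual norm, and the small tridiagonal test matrix is made up for demonstration.

```python
from math import sqrt


def dot(u, v):
    """Inner product of two vectors stored as lists."""
    return sum(a * b for a, b in zip(u, v))


def matvec(A, v):
    """Dense matrix-vector product; A is a list of rows."""
    return [dot(row, v) for row in A]


def orthomin(A, rhs, apply_b_inv, nstack=4, tol=1e-10, max_iter=500):
    """Truncated orthogonal-minimization sketch; returns (x, iterations)."""
    x = apply_b_inv(rhs)                                  # Step 1, Eq. 29
    r = [b - ax for b, ax in zip(rhs, matvec(A, x))]      # Step 2, Eq. 30
    Q, Z = [], []                                         # stored Q and Z = A Q
    target = tol * sqrt(dot(rhs, rhs))                    # assumed stopping rule
    for k in range(max_iter):
        if sqrt(dot(r, r)) <= target:                     # Step 9
            return x, k
        d = apply_b_inv(r)                                # Step 3, Eq. 31
        dr = matvec(A, d)                                 # Step 4
        # Step 5: orthogonalize against the stored directions (Eqs. 36-38)
        betas = [-dot(dr, z_old) / dot(z_old, z_old) for z_old in Z]
        q, z = list(d), list(dr)
        for beta, q_old, z_old in zip(betas, Q, Z):
            q = [qi + beta * qo for qi, qo in zip(q, q_old)]
            z = [zi + beta * zo for zi, zo in zip(z, z_old)]
        alpha = dot(r, z) / dot(z, z)                     # Step 6, Eq. 41
        x = [xi + alpha * qi for xi, qi in zip(x, q)]     # Step 7, Eq. 42
        r = [ri - alpha * zi for ri, zi in zip(r, z)]     # Eq. 39
        Q.append(q)
        Z.append(z)
        # Step 8: keep at most nstack - 1 previous directions, rejecting
        # the stored one that had the smallest |beta| in this iteration
        if len(Q) > nstack - 1:
            if betas:
                drop = min(range(len(betas)), key=lambda i: abs(betas[i]))
            else:
                drop = 0
            del Q[drop], Z[drop]
    return x, max_iter


# Hypothetical 1-D test system: tridiagonal, diagonally dominant
n = 8
A = [[2.1 if i == j else -1.0 if abs(i - j) == 1 else 0.0
      for j in range(n)] for i in range(n)]
rhs = [1.0] * n


def jacobi(v):
    """Apply B^-1 for B = diag(A); an assumed, very simple preconditioner."""
    return [vi / A[i][i] for i, vi in enumerate(v)]


x, iters = orthomin(A, rhs, jacobi, nstack=4)
residual = max(abs(b - ax) for b, ax in zip(rhs, matvec(A, x)))
print(iters, residual)
```

Because each new Z is orthogonalized against every stored Z, the stored set stays mutually orthogonal even after a vector is rejected in Step 8, which is why the coefficients can be computed independently from Eq. 38.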

It is clear from the CGM procedure outlined above that the residual at each step of the iteration is minimized over an (n+1)-dimensional space, whose dimension is less than or equal to the stack length Nstack. As Nstack increases, the method becomes more robust. If Nstack equals the order of the matrix, the exact solution can theoretically be obtained. However, the greater the stack length, the greater the storage requirements and, in general, the computational cost. Fortunately, in practice a stack length of 3 to 4 is sufficient for many problems. For difficult problems, such as coning problems or complex heterogeneous reservoirs with sharp changes in saturation and pressure gradients, the stack length may need to be a multiple of the minimum value stated above. Stack length is an input in many commercial simulators, and with insight into how the procedure works, one can identify a suitable Nstack value without much difficulty.

The reader should remember that the coefficient matrix A used in these discussions is a diagonal one, that is, its nonzero entries lie on a small number of regular diagonals. When this diagonal structure is destroyed by faults, aquifers, local grid refinements, radial coordinates that complete a full circle, rate-specified wells, pressure maintenance options, crossflow wells, etc., special treatment is necessary in order to apply the technique.

3.36 Solution of Tridiagonal Matrix Equations

As stated earlier, tridiagonal matrices arise from one-dimensional modeling studies. The most convenient and efficient technique for solving the equations of 1-D problems is the Thomas Algorithm. Consider the following equation:

$$A P_{i-1} + B P_{i} + C P_{i+1} = D_{i} \tag{44}$$

If we can represent the above equation in the following form:

$$P_{i} = E_{i} P_{i+1} + F_{i} \tag{45}$$

then $P_{i}$ can be calculated from $P_{i+1}$ after calculating $E_{i}$ and $F_{i}$.

Let $P_{i-1} = E_{i-1} P_{i} + F_{i-1}$. Substitute this into the flow equation given above to end up with:

$$P_{i} = \frac{D_{i} - A F_{i-1}}{A E_{i-1} + B} - \frac{C}{A E_{i-1} + B}\, P_{i+1} \tag{46}$$

Comparing Eq. 46 with Eq. 45 then gives

$$E_{i} = \frac{-C}{A E_{i-1} + B} \quad \text{and} \quad F_{i} = \frac{D_{i} - A F_{i-1}}{A E_{i-1} + B} \tag{47}$$
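The recurrence in Eqs. 45 to 47 can be sketched in Python as a forward sweep that builds $E_{i}$ and $F_{i}$, followed by a back substitution for $P_{i}$. This is a minimal sketch, not a production solver: the function name `thomas` and the list arguments `a`, `b`, `c`, `d` (the coefficients A, B, C and the right-hand side D, allowed here to vary from row to row) are assumptions made for the example, no pivoting or zero-denominator check is performed, and the 4-block test system is made up.

```python
def thomas(a, b, c, d):
    """Solve a[i]*P[i-1] + b[i]*P[i] + c[i]*P[i+1] = d[i] for P.

    a[0] and c[-1] refer to unknowns outside the grid and have no effect.
    """
    n = len(d)
    E = [0.0] * n
    F = [0.0] * n
    e_prev = f_prev = 0.0                      # no block to the left of i = 0
    for i in range(n):                         # forward sweep, Eq. 47
        denom = a[i] * e_prev + b[i]
        E[i] = -c[i] / denom
        F[i] = (d[i] - a[i] * f_prev) / denom
        e_prev, f_prev = E[i], F[i]
    P = [0.0] * n
    p_next = 0.0                               # no block to the right of i = n-1
    for i in range(n - 1, -1, -1):             # back substitution, Eq. 45
        P[i] = E[i] * p_next + F[i]
        p_next = P[i]
    return P


# Hypothetical 4-block system whose exact solution is P = [1, 1, 1, 1]
a = [-1.0, -1.0, -1.0, -1.0]
b = [2.0, 2.0, 2.0, 2.0]
c = [-1.0, -1.0, -1.0, -1.0]
d = [1.0, 0.0, 0.0, 1.0]
P = thomas(a, b, c, d)
print(P)  # approximately [1.0, 1.0, 1.0, 1.0]
```

Starting the sweep with $E_{-1} = F_{-1} = 0$ handles the first row without a special case, and the last row needs none either because its $E$ multiplies a zero in the back substitution.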


3.37 Selection of Equation Solvers

Apart from the general rules laid down in the section on direct versus iterative techniques, there are some rules of thumb that one should be aware of in selecting the solver type. These are summarized as follows:

1. Areal Models: If the order of the matrix is small, use a band solver. If the grid is of intermediate size, on the order of 800-1200 grid blocks, use direct methods such as D4 rather than band solvers. If it is on the order of 2000, one can use Scheme III of the sparse matrix techniques. For anything more than 2000, one should definitely be using iterative techniques with the current state of computational resources.

2. Cross-sectional Models: If the number of blocks is small, again, one can use direct methods. For a large number of grid blocks, however, Line Successive Over-relaxation (LSOR) or the Nested Factorization version of the Conjugate Gradient Method can be used. If vertical communication is good, LSOR can be used efficiently for the large matrices corresponding to large numbers of grid blocks.

3. Three-Dimensional Models: Direct methods are usually inefficient in three-dimensional problems, except perhaps sparsity-conserving schemes and D4 for small-domain studies. If vertical communication is good, use LSOR; otherwise use a Nested Factorization version of CGM or SIP.


