USING RAID 6 FOR INCREASED RELIABILITY AND PERFORMANCE:

Sun Storage 2500 Series, 6140, 6580, and 6780 Arrays

Said A. Syed, NPI and OEM Array Engineering Group

Sun BluePrints Online

Part No 820-7395-10 Revision 1.0, 2/9/09

Sun Microsystems, Inc.

Table of Contents

- Sun's RAID 6 Solution: Reliability, Performance, Economy
- Disk Failure Rates, Reliability Needs Promote RAID 6 Usage
- RAID 6 Implementation
- Best Practices for Implementing RAID 6
- Conclusions
- About the Author
- Acknowledgements
- References
- Ordering Sun Documents
- Accessing Sun Documentation Online

Using RAID 6 for Increased Reliability and Performance:

Sun Storage 2500 Series, 6140, 6580, and 6780 Arrays

Many business applications requiring high availability, reliability, and performance have historically chosen either RAID 5 or RAID 1+0¹ technology for their data storage needs. While both of these RAID algorithms provide increased data availability, they are not without disadvantages. Disk groups using RAID 5 can sustain only a single drive failure, and disk groups using RAID 1+0 must use 50 percent of the available storage capacity for mirroring. These disadvantages are exacerbated as disk drive capacity increases. With larger disks, a RAID 1+0 implementation becomes increasingly costly. And as the number and size of disks increase, the risk of a disk error also increases, making RAID 5 disk groups inherently less reliable.

RAID 6 is cheaper to implement than RAID 1+0 and is more reliable than RAID 5.

RAID 6, a RAID algorithm that has recently been gaining popularity, helps solve these problems. By using dual parity calculations, a RAID 6 disk group can tolerate two concurrent disk failures. Sun Storage 2500 series, 6140, 6580, and 6780 arrays using RAID 6 protection are an ideal solution to meet the needs of applications requiring highly available, high-performance data storage.

This article addresses the following topics:

- "Sun's RAID 6 Solution: Reliability, Performance, Economy" describes the Sun RAID 6 solution using the Sun Storage 2500 series, 6140, 6580, and 6780 arrays.
- "RAID 6 Implementation" provides information on how RAID 6 is implemented and describes how it increases data reliability by protecting against multiple failures.
- "Best Practices for Implementing RAID 6" discusses best practices when implementing RAID 6 on the Sun Storage 2500 series, 6140, 6580, and 6780 arrays.

Sun's RAID 6 Solution: Reliability, Performance, Economy

Sun Storage arrays with RAID 6 technology feature enterprise class data protection for the cost conscious. This solution can provide enhanced reliability for applications with large data sets requiring both high performance and high availability.

1. RAID 1+0, mirroring and striping, is also referred to as RAID 10 in some documentation.

Sun Storage 2500 Series Arrays

The Sun Storage 2510, 2530, and 2540 Arrays, along with the Sun Storage 2501 Expansion Module, make up the 2500 series of workgroup disk solutions. These Sun Storage 2500 arrays contain disk drives for storing data and controllers that provide the interface between a data host and the disk drives. The Sun Storage 2540 Array provides Fibre Channel connectivity, the Sun Storage 2530 Array provides Serial Attached SCSI (SAS) connectivity, and the Sun Storage 2510 Array supports iSCSI over Ethernet networks. The Sun Storage 2501 Expansion Module provides additional storage and can be attached to any 2500 series array.

Key features of the Sun Storage 2500 series arrays include:

- Maximum of 48 disk drives (one controller tray and three expansion trays)
- Dual redundant controllers
- 512 MB cache per controller, with the option for a 1 GB cache per controller
- Support for SAS and SATA disk drives

Sun Storage 6140 Array

Intelligent RAID controllers and a dedicated processor for performing RAID calculations provide enhanced performance in the StorageTek 6140 array.

The Sun Storage 6140 Array provides performance, high availability, and reliability in an economical package. The array includes dual intelligent RAID controllers, with an embedded processor to perform all RAID calculations for enhanced performance. The redundant design and non-disruptive upgrade procedures provide exceptional data protection for business-critical applications. All components in the array's data path are redundant; if one component fails, the Sun Storage 6140 array automatically fails over to the alternate component, providing continuous uptime and uninterrupted data availability. Hot-swappable components enable maintenance to occur without system disruption. Support for up to 112 SATA and Fibre Channel (FC) drives helps meet growing data storage needs.

Figure 1. The Sun Storage 6140 Array.

Key features of the Sun Storage 6140 Array include:

- Dual Fibre Channel RAID controllers support RAID levels 0, 1, 3, 5, 1+0, and 6
- Scales to 112 disk drives and 112 TB of data in a small footprint
- Supports high-performance FC drives and high-performance SATA drives
- Redundant, hot-swappable components protect against any single point of failure

Sun Storage 6580 and 6780 Arrays

The Sun Storage 6580 and 6780 Arrays provide the performance, high availability, and expansion capabilities required by high-end database applications and other demanding workloads. The design includes an independent controller tray with completely redundant dual controllers. These features provide increased reliability and availability, and offer excellent data protection for business-critical applications. A maximum of 16 expansion trays are supported, with 16 disks per tray, for a total of 256 drives and 256 TB of raw storage capacity.

Key features of the Sun Storage 6580 and 6780 Arrays include:

- Eight or 16 FC host interfaces
- 4-Gbit/sec, 2-Gbit/sec, and 1-Gbit/sec host interface speed
- Dual redundant controllers
- FC and SATA-2 disk drive support
- Support for up to 16 expansion trays with one controller tray
- A maximum of 256 drives (16 trays with up to 16 drives each)

The Sun Storage 6580 and 6780 Arrays are compared in Table 1. The primary difference between the two models is support for a larger total cache size and a larger number of host ports on the Sun Storage 6780 Array.

Table 1. Comparison of the Sun Storage 6580 and 6780 Arrays.

| Feature | 6580 | 6780 |
|---|---|---|
| Total cache size per array | 8 GB | 8 or 16 GB |
| Number of host ports | 8 (4-Gbit/sec) | 8 or 16 (4-Gbit/sec) |
| Maximum number of drives supported | 256 | 256 |
| Maximum array configuration | 1x17 | 1x17 |
| Maximum raw capacity | 256 TB | 256 TB |
| Optional number of additional storage domains supported | 4/8/16/64/128/512 | 4/8/16/64/128/512 |

RAID 6 Overview

RAID 6 was developed to fulfill a specific need: provide data availability for applications requiring extremely high fault tolerance at a reasonable cost. RAID 6 is highly fault tolerant, having the ability to sustain two concurrent drive failures within the same RAID group. Furthermore, RAID 6 provides this increased data availability at a fraction of the cost of a RAID 1+0 disk group, which uses 50 percent of the storage subsystem's capacity for mirroring.

Potential uses for RAID 6 include applications requiring extremely high fault-tolerant storage, including:

- Email servers
- Web servers
- File servers
- Database servers
- Financial applications

Some potential uses for RAID 6 include the following:

Email Servers

Email usage has grown exponentially: there were an estimated 1.4 billion email accounts in 2007, and the number is expected to double in the next few years. Email servers such as Sun Java Communications Suite and Microsoft Exchange typically require high storage availability. With an estimated 516 million corporate email users depending on them for reliable communications, email servers are ideal candidates for RAID 6 implementation.

Web Servers

The Internet is a crucial source of information flow, and users depend on it for diverse needs including distributed business applications, instant messaging, online auctions, gaming, news, and video on demand. In many environments, Web servers and the data they share need to be available to users at all times. The requirement for highly available data mandates highly fault-tolerant storage. RAID 6, with its built-in availability and redundancy and low cost, is a good solution for Web server implementations.

File Servers

File servers are an essential and integral part of virtually all organizations. For many businesses, user home directories need to be available at all times for all users. The need for highly available, always-online data and storage at a reasonable cost makes file servers a perfect candidate for RAID 6 implementation.

Other Uses

Other candidates for RAID 6 include databases, such as MySQL and Oracle database servers; financial applications such as SAP; and many other similar applications that require high availability of data without sacrificing performance, while keeping cost to a minimum.

Disk Failure Rates, Reliability Needs Promote RAID 6 Usage

The need for RAID 6 comes as:

- Drive capacity continues to increase, with 2 TB drives planned for 2009.
- The Annual Replacement Rate for disk drives can be 2 to 4% on average, and as high as 13% in certain circumstances.
- Unrecoverable Read Error (URE) rates for all drive types, and especially SATA drives, are in the range of one expected URE for every 12 TB of data read.
- The likelihood of a second URE occurring during single drive reconstruction is all but guaranteed for RAID groups using very large capacity disk drives.

1. Schroeder, Bianca and Gibson, Garth A. "Disk failures in the real world: What does an MTTF of 1,000,000 hours mean to you?" FAST '07: 5th USENIX Conference on File and Storage Technologies, USENIX Association. http://www.usenix.org/events/fast07/tech/schroeder/schroeder.pdf

While disks are generally considered to be reliable, they do fail and can fail more often than might be anticipated. A scientific study performed by the Computer Science Department¹ at Carnegie Mellon University found that the Annual Replacement Rate (ARR) of 100,000 disk drives was significantly higher than the failure rate implied by the Mean Time to Failure (MTTF) for the same disk drives. The MTTF for these drives as listed in the vendor datasheets ranged from 1,000,000 to 1,500,000 hours, which suggested an annual failure rate of at most 0.88%. However, the study found that the ARR typically exceeded 1 percent, with 2 to 4 percent common, and as much as 13 percent in certain instances within the first three years of the disk drives' useful life. The study also found that the disk replacement rate increased constantly as the drives aged. An additional key finding of the study was that there was no significant difference in replacement rates between SCSI, Fibre Channel, and SATA drives.

In addition to disk drive failure, drives can experience Unrecoverable Read Errors (UREs) when reading a disk block. The URE rate is expressed as one unrecoverable read error per a given number of bits read. SATA drives in particular have a higher URE rate (fewer bits read per error) than Fibre Channel and SAS drives, as illustrated in Table 2, and therefore are more likely to experience unrecoverable read errors during operation.
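As a rough check on the datasheet figure quoted above, the following Python sketch converts an MTTF into a nominal annual failure rate. It assumes drives run continuously (8,760 hours per year) and fail at a constant rate; the function name `nominal_afr` is illustrative and does not come from the study.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 power-on hours, assuming continuous operation


def nominal_afr(mttf_hours: float) -> float:
    """Nominal annual failure rate (as a fraction) implied by a datasheet MTTF."""
    return HOURS_PER_YEAR / mttf_hours


for mttf in (1_000_000, 1_500_000):
    print(f"MTTF {mttf:,} hours -> nominal AFR {nominal_afr(mttf):.2%}")
```

The 1,000,000-hour MTTF gives the upper bound of roughly 0.88 percent; the observed replacement rates reported by the study are several times higher.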

Table 2. URE per bit read for Fibre Channel, SAS, and SATA drives.

| Drive Speed and Type | URE per Bit Read |
|---|---|
| 2 and 4 Gb/sec Fibre Channel | 1 in 10^15 to 10^16 |
| 3 Gb/sec SAS | 1 in 10^15 to 10^16 |
| 3 Gb/sec SATA | 1 in 10^14 to 10^15 |
| 1.5 and 3 Gb/sec SATA | 1 in 10^14 |

A URE rate of 1 in 10^14 means a SATA drive will experience an unrecoverable bit error on average once every one hundred trillion bits read, which is approximately every 12 terabytes. Consider a RAID 5 disk group with eight 2 TB SATA drives (7 data drives and 1 parity drive) and usable space of approximately 13 TB. If the RAID group is regenerating a failed drive, every surviving drive must be read in full, so the likelihood of encountering a URE is extremely high, if not almost guaranteed. This phenomenon, called Media Errors During Reconstruction (MEDR), can result in an unrecoverable RAID group. The risk of MEDRs increases with the size of the RAID group; increasing disk capacities and large RAID groups increase the risk of encountering an unrecoverable disk block during reconstruction.
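The size of this risk can be estimated with a short calculation. The Python sketch below assumes that bit errors are independent and occur at exactly the rates listed in Table 2, which is a simplification; the function name `p_ure_during_rebuild` and the use of decimal terabytes are assumptions made for illustration.

```python
import math

TB = 10**12  # decimal terabyte, in bytes


def p_ure_during_rebuild(surviving_drives: int, drive_bytes: int, bits_per_ure: float) -> float:
    """Probability of at least one URE while reading every surviving drive in full."""
    bits_read = surviving_drives * drive_bytes * 8
    # 1 - (1 - 1/bits_per_ure) ** bits_read, computed in a numerically stable way
    return -math.expm1(bits_read * math.log1p(-1.0 / bits_per_ure))


# Eight-drive RAID 5 group of 2 TB drives with one drive failed: 7 drives are read.
for exponent in (14, 15, 16):
    p = p_ure_during_rebuild(7, 2 * TB, 10.0**exponent)
    print(f"1 URE per 10^{exponent} bits: P(URE during rebuild) = {p:.1%}")
```

Under these assumptions the eight-drive example has roughly a two-in-three chance of hitting a URE during the rebuild at a rate of 1 in 10^14, and the probability grows with drive size and group width.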

RAID 6 is able to sustain a second URE by using two independent parity algorithms, P+Q.

RAID 6 is able to sustain a second URE or MEDR. Unlike RAID 5, a RAID 6 disk group will continue to service read/write requests during regeneration with not one but two consecutive drive failures. This ability gives RAID 6 a significant advantage over RAID 5, particularly with higher capacity drives.

RAID 6 Implementation

RAID 6 P+Q uses RAID 5's existing P parity algorithm and a second independent Reed-Solomon Q parity algorithm.

RAID 6 builds on the commonly used RAID 5 single parity calculation. RAID 5 uses a striped data set with distributed parity, as shown in Figure 2. RAID 6 utilizes two independent parity schemes striped across multiple spindles for increased fault tolerance. This approach protects against data loss caused by two concurrent disk drive failures, and thereby provides a higher level of data protection than RAID 5.

Sun Storage arrays make use of the P+Q algorithm to compute the two independent parity calculations. The RAID 6 P+Q algorithm uses the existing RAID 5 single parity generation algorithm, P parity, which is striped across all the disks within the RAID group. A second completely independent parity, Q parity, is computed based on the Reed-Solomon Error Correcting Code. The Q parity is also striped across all the disks within the RAID group. Figure 2 illustrates the striping of data blocks and both independent RAID 6 P and Q parity blocks within a RAID group.
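To make the two parity calculations concrete, here is a minimal Python sketch of P and Q for one stripe. It follows the construction described in H. Peter Anvin's paper "The Mathematics of RAID-6" (field polynomial 0x11D, generator 2); whether Sun's array firmware uses these exact constants is an assumption, and the function names are illustrative.

```python
def gf_mul(a: int, b: int) -> int:
    """Multiply two bytes in GF(2^8), reducing by x^8 + x^4 + x^3 + x^2 + 1 (0x11D)."""
    result = 0
    while b:
        if b & 1:
            result ^= a      # addition in GF(2^8) is XOR
        a <<= 1
        if a & 0x100:
            a ^= 0x11D       # reduce back into 8 bits
        b >>= 1
    return result


def pq_parity(data_blocks: list[bytes]) -> tuple[bytes, bytes]:
    """Return (P, Q) for equally sized data blocks D_0..D_{n-1}.

    P = D_0 + D_1 + ...               (plain XOR, as in RAID 5)
    Q = g^0*D_0 + g^1*D_1 + ...       (Reed-Solomon style, with g = 2)
    """
    size = len(data_blocks[0])
    p = bytearray(size)
    q = bytearray(size)
    coeff = 1  # g^0
    for block in data_blocks:
        for i, byte in enumerate(block):
            p[i] ^= byte
            q[i] ^= gf_mul(coeff, byte)
        coeff = gf_mul(coeff, 2)  # next power of the generator
    return bytes(p), bytes(q)


stripe = [b"\x01\x02", b"\x04\x08", b"\x10\x20"]
p, q = pq_parity(stripe)
print(p.hex(), q.hex())
```

Because each data block is weighted by a different power of the generator, Q is independent of P, which is what allows two missing blocks to be solved for.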

RAID 5

| Disk 0 | Disk 1 | Disk 2 |
|---|---|---|
| Block 1 | Block 2 | P1 Parity |
| Block 3 | P2 Parity | Block 4 |
| P3 Parity | Block 5 | Block 6 |
| Block 7 | Block 8 | P4 Parity |

RAID 6

| Disk 0 | Disk 1 | Disk 2 | Disk 3 |
|---|---|---|---|
| Block 1 | Block 2 | P1 Parity | Q1 Parity |
| Block 3 | P2 Parity | Q2 Parity | Block 4 |
| P3 Parity | Q3 Parity | Block 5 | Block 6 |
| Q4 Parity | Block 7 | Block 8 | P4 Parity |

Figure 2. A RAID 6 stripe set is identical to RAID 5, with the addition of Q parity computed using Reed-Solomon Error-Correcting Code.

Data Regeneration and Availability

A RAID 5 disk group will go into degraded status after suffering a bad block (URE) or failed disk. When a disk failure occurs, data can be recalculated using the distributed parity and the remaining drives. While slower, I/O can continue without interruption to the user while the data on the failed drive is recovered. However, the data is vulnerable until the recovery completes; if there is a second failure, data loss can occur. In contrast, RAID 6 provides a more reliable alternative to RAID 5, as it is able to sustain two complete disk failures.

RAID 6 is able to service I/O while in degraded mode and during reconstruction. Multiple block error protection is provided during optimal state, and single block error protection is provided during degraded mode.

When a disk failure in a RAID 6 P+Q RAID group occurs, blocks are regenerated using the ordinary XOR parity (P). At the same time, both P and Q parities are recomputed for each regenerated block. If a second disk failure occurs while the first failed disk is either being recovered or still in degraded status, blocks on the second failed disk are regenerated using the second independent Q parity. With RAID 6, data availability is not affected should two drives fail concurrently: the P+Q algorithm will simply recompute the data blocks upon a read request and continue to service reads and writes while data regeneration is in progress for both failed drives.

The potential for experiencing a URE or hitting a bad block increases with both the number and the size of disk drives. Also, as disk capacity increases, so does the time required to rebuild a failed drive, and it is during this rebuild time that a RAID 5 disk group is vulnerable to data loss if a second drive were to fail. Thus, the need for RAID 6 becomes more apparent when availability and large disk capacity are both important factors.
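The double-failure case described above can be illustrated with a small Python sketch that rebuilds two lost data blocks from the surviving data plus P and Q. It follows the algebra in Anvin's "The Mathematics of RAID-6" (polynomial 0x11D, generator 2) and is not taken from the array firmware; all function names are hypothetical.

```python
def gf_mul(a: int, b: int) -> int:
    """Multiply two bytes in GF(2^8) modulo 0x11D."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        if a & 0x100:
            a ^= 0x11D
        b >>= 1
    return result


def gf_pow(a: int, n: int) -> int:
    result = 1
    for _ in range(n):
        result = gf_mul(result, a)
    return result


def gf_inv(a: int) -> int:
    return gf_pow(a, 254)  # a^255 == 1 for nonzero a, so a^254 is the inverse


def pq_parity(blocks: list[bytes]) -> tuple[bytes, bytes]:
    size = len(blocks[0])
    p, q = bytearray(size), bytearray(size)
    for index, block in enumerate(blocks):
        coeff = gf_pow(2, index)
        for i, byte in enumerate(block):
            p[i] ^= byte
            q[i] ^= gf_mul(coeff, byte)
    return bytes(p), bytes(q)


def recover_two(blocks: list, x: int, y: int, p: bytes, q: bytes) -> tuple[bytes, bytes]:
    """Rebuild data blocks x and y (given as None in `blocks`) from P and Q."""
    size = len(p)
    known = [bytes(size) if b is None else b for b in blocks]
    p_known, q_known = pq_parity(known)  # parity with the lost blocks treated as zero
    gx, gy = gf_pow(2, x), gf_pow(2, y)
    inv = gf_inv(gx ^ gy)
    dx, dy = bytearray(size), bytearray(size)
    for i in range(size):
        pd = p[i] ^ p_known[i]  # equals D_x + D_y
        qd = q[i] ^ q_known[i]  # equals g^x*D_x + g^y*D_y
        dx[i] = gf_mul(gf_mul(gy, pd) ^ qd, inv)
        dy[i] = pd ^ dx[i]
    return bytes(dx), bytes(dy)


stripe = [b"RAID", b"six!", b"P+Q."]
p, q = pq_parity(stripe)
damaged = [None, stripe[1], None]  # disks 0 and 2 have failed
print(recover_two(damaged, 0, 2, p, q))
```

With only one block missing, the XOR line alone (`pd`) is enough, which mirrors the statement that a single failure is regenerated using P parity.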

Capacity

RAID 6 uses the capacity of two disk drives within the RAID group for the two independent parity algorithms.

Due to the second independent Q parity computation and striping across all disks in the RAID group, the RAID 6 P+Q algorithm requires capacity equal to two complete disks. Usable capacity of a RAID 6 disk group can be calculated using the formula:

$$(N - 2) \times S_{\min}$$

Where $N$ is the total number of disks available and $S_{\min}$ is the capacity of the smallest drive in the RAID group. A minimum of five disk drives are required to implement a RAID 6 disk group on Sun Storage 2500 series, 6140, 6580, and 6780 arrays. Given the formula above, a five-disk RAID 6 disk group provides space equivalent to three data and two parity disk drives. RAID 6 P+Q parity is implemented using arithmetic in the Galois field¹ GF(2^8). Therefore, a theoretical maximum of 257 drives can be part of any one RAID 6 disk group, 255 of which can be data drives.

Performance

RAID 6 can sustain write performance similar to that of RAID 5 when intelligent storage array controllers with dedicated processors for RAID calculations are used.

If implemented using an intelligent storage array controller with a dedicated processor for RAID computations, the RAID 6 P+Q algorithm may sustain write performance close to that of RAID 5. Sun Storage 2500 series and 6140 arrays include an embedded processor on each controller for RAID computations, for improved performance. The Sun Storage 6580 and 6780 arrays include a dedicated processor on each controller for RAID computations, providing enterprise class RAID performance in mid-range arrays.

Performance is affected during regeneration, especially if two drive failures have occurred.

However, there may be read and write performance degradation during drive regeneration. If the RAID 6 disk group is currently in the process of regenerating a failed drive and a second drive failure does occur, the controller will need to service any reads by regenerating requested data using both P and Q parity blocks on the surviving disks. This generates a substantial overhead, possibly as much as 20 percent, for the RAID 6 disk group as compared to RAID 5. This performance degradation during degraded mode should be expected and is not significant when compared to the alternative, which is complete data loss. A RAID 6 disk group also takes more time to rebuild after a drive failure as compared to RAID 5. The longer rebuild time is attributed to the regeneration of data using two parity algorithms instead of one.

1. Anvin, H. Peter. The Mathematics of RAID-6, online paper, July 2008.

http://kernel.org/pub/linux/kernel/people/hpa/raid6.pdf

RAID 6 Benefits and Trade-offs

Advantages of RAID 6:

- Protection against two drive failures
- Performance close to RAID 5, with hardware-assisted RAID computations
- Lower cost than RAID 1+0

The most substantial advantage of using RAID 6 is that it provides protection against multiple unrecoverable errors in the optimal state (normal operation with no disk failures) and against a single unrecoverable error in the degraded state. This is a significant advantage over RAID 5, which only protects against a single unrecoverable error during optimal state.

Other RAID technologies offer similar or slightly better performance and reliability, but at a very high cost. RAID 1+0 provides availability and redundancy similar to RAID 6 by mirroring the data across disk drives and then striping the mirrored data across a separate set of disk drives. However, RAID 1+0 uses half of the available storage for mirroring, making it a very expensive solution. The same highly available storage space for applications can be provided using RAID 6 at a fraction of the cost.

The most significant trade-off with using RAID 6 is the potential for slightly reduced write performance as compared to RAID 5, due to the two independent parity calculations. However, use of intelligent RAID controllers with dedicated processors for RAID computations, like those in the Sun Storage 6140 arrays, can significantly increase RAID 6 write performance to levels comparable to RAID 5. RAID 6 disk groups can also experience longer rebuild times after a drive failure, as two parity algorithms are required to regenerate the failed drive, as compared to the single RAID 5 parity calculation. RAID 6 also requires additional disk space as compared to RAID 5, as two drives are required for RAID 6 P+Q parity rather than the single drive for RAID 5 parity.

In summary, RAID 6 offers increased fault tolerance with its ability to continue operation after two failed disks. While RAID 6 can experience slightly reduced write performance and longer rebuild times after a failed disk, using dedicated RAID processors can mitigate the performance penalty. In comparison to other popular RAID algorithms, RAID 6 offers significantly improved fault tolerance when compared to RAID 5, and can provide similar availability to RAID 1+0 without requiring double the amount of storage capacity.
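The capacity side of this trade-off can be put in numbers with a short Python sketch. It applies the usable-capacity formula from the Capacity section for RAID 6, and assumes the conventional single-parity and half-capacity rules for RAID 5 and RAID 1+0; the function name and level labels are illustrative.

```python
def usable_capacity(level: str, drive_sizes_tb: list[float]) -> float:
    """Usable capacity in TB for a RAID group built from the given drives."""
    n = len(drive_sizes_tb)
    s_min = min(drive_sizes_tb)  # every member is limited to the smallest drive
    if level == "raid5":
        return (n - 1) * s_min   # one drive's worth of P parity
    if level == "raid6":
        if n < 5:
            # minimum stated in this article for the Sun Storage arrays
            raise ValueError("RAID 6 needs at least five drives on these arrays")
        return (n - 2) * s_min   # two drives' worth of P and Q parity
    if level == "raid10":
        return (n // 2) * s_min  # half of the drives hold mirror copies
    raise ValueError(f"unknown RAID level: {level}")


drives = [1.0] * 8  # eight 1 TB drives
for level in ("raid5", "raid6", "raid10"):
    print(f"{level}: {usable_capacity(level, drives):.0f} TB usable of {sum(drives):.0f} TB raw")
```

For eight equal drives, RAID 6 gives up one more drive than RAID 5 but retains two more drives of usable space than RAID 1+0.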

RAID 6 Trade-offs:

- Slightly reduced performance compared to RAID 5 during reconstruction
- Longer reconstruction time
- Slightly more expensive than RAID 5, due to additional use of capacity for two parity calculations

Best Practices for Implementing RAID 6

RAID 6 is enabled on the Sun Storage 2500 series arrays with firmware revision 07.35.x.x or higher. The firmware revision 7.10.x.x or higher enables RAID 6 on the Sun Storage 6140 array. All new Sun Storage 2500 series and 6140 arrays come pre-loaded with the latest firmware. Existing Sun Storage 2500 series and 6140 arrays can be upgraded to the new firmware level; local Sun Services representatives can provide further information on the upgrade process.

The Sun Storage 6580 and 6780 arrays provide RAID 6 support by default, using the same P+Q parity computation as the Sun Storage 6140 array. These arrays have a dedicated next-generation hardware-assist XOR engine with RAID 6 P+Q support and completely redundant array controllers, providing best-in-class data availability and performance.

Note: The 7.10.x.x (or higher) firmware release is also available for Sun Storage 6540 storage arrays. However, the RAID controllers in Sun Storage 6540 arrays cannot perform P+Q calculations and therefore cannot use the RAID 6 enhancement in the new firmware. All other enhancements are available on the Sun Storage 6540 arrays.

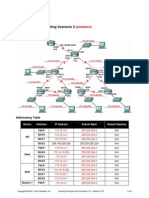

Striping Across Storage Trays

The following sections describe best practices for striping across storage trays in the different arrays:

- Sun Storage 2500 Series Arrays
- Sun Storage 6140 Array
- Sun Storage 6580 and 6780 Arrays

Sun Storage 2500 Series Arrays

Sun Storage 2500 series arrays can have a maximum of 48 hard drives, which translates into one controller tray with dual redundant controllers and three Sun Storage 2501 JBOD trays. For optimal performance and availability, it is a recommended best practice to span a virtual disk across multiple storage trays. In the case of a RAID 6 virtual disk for the Sun Storage 2500 series arrays, a minimum of five spindles is required.

Figure 3 illustrates an example of a five-spindle RAID 6 virtual disk spanning four storage trays for optimal performance and availability. Because RAID 6 can sustain two concurrent drive failures, the virtual disk can sustain the failure of two entire drive trays and continue to process I/O requests, provided that neither is the bottom-most drive tray. If the bottom-most drive tray suffers a complete failure, the virtual disk will continue to service I/O requests as long as no other disk within the virtual disk suffers a failure. Although an entire drive tray failure is rare, spanning the virtual disk as recommended can help avoid unnecessary downtime.

Figure 3. A 5-spindle RAID 6 virtual disk spanning four trays in a Sun Storage 2500 series array (one controller tray and three Sun Storage 2501 JBODs).

Sun Storage 6140 Array

With proper planning for expansion trays and disk drive considerations, a RAID 6 virtual disk in a Sun Storage 6140 array can sustain up to two complete expansion tray failures, in addition to being able to sustain two consecutive disk drive failures.

Creating RAID groups (or virtual disks) that span multiple storage trays in the Sun Storage 6140 array is a recommended best practice for enhanced availability of data. Although RAID 6 provides for higher availability than RAID 5 and can sustain two independent disk failures, the failure of a single storage array tray can cause unnecessary downtime and loss of access to data for some virtual disk configurations. This potential downtime caused by a tray failure can be avoided by creating RAID groups and virtual disks that span multiple trays. With proper planning and the right combination of Sun StorageTek expansion trays and disk considerations, a RAID 6 virtual disk can sustain two complete expansion tray failures. Figure 4 illustrates three example eight-spindle RAID 6 virtual disks in a Sun Storage 6140 storage array. Virtual Disk A is striped across two trays in a Sun Storage 6140 array; Virtual Disk B is striped across 4 trays; and Virtual Disk C is striped across all seven trays in the array. Because Virtual Disk A uses RAID 6, it provides protection against the failure of any two disk drives in the RAID group. However, Virtual Disk A will not be able to sustain a tray failure for either of the trays it resides on, as each tray contains four drives used in the virtual disk.

In contrast, Virtual Disk B will be able to continue servicing I/O during a complete tray failure. As with Virtual Disk A, Virtual Disk B provides protection against any two consecutive disk failures within the RAID group. However, by using only two disks from each tray, Virtual Disk B is also able to sustain any one complete tray failure for any of the four expansion trays it is striped across.

Figure 4. Three 8-spindle RAID 6 virtual disks (Virtual Disk A, Virtual Disk B, and Virtual Disk C) spanning multiple trays in a Sun Storage 6140 array.

Virtual Disk C, striped across all seven trays in the Sun Storage 6140 array, is the most resilient of the three example RAID groups. On top of protection against any two disk failures within the RAID group, Virtual Disk C is also able to sustain complete tray failure of any two trays in the Sun Storage 6140 array, except Tray 4 (which contains two drives of the virtual disk) and Tray 0 (the controller tray). For example, if Tray 2 and Tray 3 were to both fail, Virtual Disk C would lose two disk drives from the RAID group but data would remain available. However, given the disk configuration in this example, a complete failure of Tray 4 would result in two failed disks within Virtual Disk C. In this failure scenario, Virtual Disk C would continue to service I/O; however, it would not be able to sustain a second complete tray failure.
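The tray-failure reasoning for the three example virtual disks can be checked mechanically. The Python sketch below models each virtual disk as a mapping from tray to the number of member drives in that tray; the tray names for Virtual Disks A and B are hypothetical placeholders, and the model counts lost drives only (it does not model the loss of the controller tray itself).

```python
from itertools import combinations

RAID6_MAX_LOST_DRIVES = 2  # a RAID 6 group survives at most two lost members


def survives(layout: dict[str, int], failed_trays) -> bool:
    """True if the group still has data access after the given trays fail."""
    lost = sum(layout.get(tray, 0) for tray in failed_trays)
    return lost <= RAID6_MAX_LOST_DRIVES


def survives_any(layout: dict[str, int], tray_failures: int) -> bool:
    """True if the group survives every combination of that many failed trays."""
    return all(survives(layout, combo) for combo in combinations(layout, tray_failures))


# Drives per tray for the three 8-spindle examples (tray names for A and B are assumed).
virtual_disk_a = {"tray1": 4, "tray2": 4}
virtual_disk_b = {"tray1": 2, "tray2": 2, "tray3": 2, "tray4": 2}
virtual_disk_c = {"tray0": 1, "tray1": 1, "tray2": 1, "tray3": 1,
                  "tray4": 2, "tray5": 1, "tray6": 1}

print("A survives any one tray failure:", survives_any(virtual_disk_a, 1))
print("B survives any one tray failure:", survives_any(virtual_disk_b, 1))
print("C survives Tray 2 + Tray 3:", survives(virtual_disk_c, ("tray2", "tray3")))
print("C survives Tray 4 + Tray 2:", survives(virtual_disk_c, ("tray4", "tray2")))
```

The same check can be run against a planned layout before creating a virtual disk, to confirm that no tray holds more member drives than the group can afford to lose.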

Sun Storage 6580 and 6780 Arrays

Sun Storage 6580 and 6780 arrays feature an independent controller tray with completely redundant dual controllers. These features allow for even more reliability and data availability built into the hardware, along with the dual-parity protection of RAID 6. Both of these arrays support up to 16 drive trays with up to 16 drives in each tray at initial release. This translates into true data and failure protection. If a RAID 6 virtual disk with 5 spindles spanned 5 unique drive trays, two entire trays could become completely disabled and the virtual disk would continue to operate, and the volumes resident on that virtual disk, presented to hosts as LUNs, would continue to service I/O.

Figure 5 shows two 8-spindle RAID 6 virtual disks, each spanning 8 completely independent CSM200 trays, on a fully populated Sun Storage 6780 array with 16 CSM200 trays. This configuration provides no single point of failure and delivers optimal performance.

Figure 5. Two 8-spindle RAID 6 virtual disks spanning multiple trays in a Sun Storage 6780 array.

Striping Across Multiple Arrays

Striping across multiple Sun Storage 2500 series, 6140, 6580, and 6780 arrays may provide enhanced performance and reliability. When a RAID group is striped across more than one array, the multiple array controllers in the separate arrays provide multiple independent active paths to the data, which can enhance performance. As an example, assume a RAID 6 virtual disk with eight physical disks striped across eight independent Sun Storage arrays. In this case, the host will use eight independent paths across eight independent array controllers to write data; the data paths are not limited to the same controller tray in a single Sun Storage array.

In addition, striping across multiple arrays can help protect against loss of access to the RAID group if there is a complete controller tray failure. The loss of a controller tray affects access to all trays in that specific array. If a RAID group is striped across trays in a single array, a controller tray failure will disrupt access to the RAID group. However, if the RAID group is striped across multiple arrays with at most two drives in each array, the RAID group can sustain a failure of any one controller tray. And if the RAID group is striped across multiple arrays with at most one drive in each array, this configuration can sustain any two complete tray failures, even two complete controller tray failures.

Because complete array controller failure is rare, striping across multiple arrays is primarily used to enhance performance. However, this configuration also provides enhanced reliability in protecting against multiple controller tray failures.

Summary of Best Practices

RAID 6 best practices:
Use no more than 8 disk drives within one RAID 6 virtual disk.
Stripe across expansion trays for enhanced availability.
Limit the total number of LUNs per RAID 6 virtual disk to six.
Do not use very large, multi-terabyte LUNs due to long reconstruction times.

The following configuration details are recommended as best practices when implementing RAID 6 virtual disks on Sun Storage 2500 series, 6140, 6580, and 6780 arrays:

Use no more than eight disk drives per virtual disk

As with RAID 5, RAID 6 performance starts to degrade as additional disk drives are added to a virtual disk. A maximum of 30 disks can be part of a RAID 6 virtual disk in a Sun Storage 2500 series, 6140, 6580, or 6780 array. However, it is recommended to use no more than eight disk drives in total to achieve the best results for performance and number of LUNs per virtual disk, especially with very large capacity disk drives.

Stripe RAID 6 virtual disks across multiple trays

For best availability, stripe the RAID 6 virtual disks across multiple trays in Sun Storage 2500 series, 6140, 6580, and 6780 arrays. A virtual disk can sustain up to two complete expansion tray failures if its layout is planned carefully before the virtual disk is created.

Limit the number of LUNs for each RAID group to six

Under normal circumstances, it is best to limit the number of LUNs to a maximum of six for each RAID group. This configuration helps keep disk seek contention low and can provide better performance.

Avoid the use of very large multi-terabyte LUNs

Because data regeneration is relatively slow for RAID 6, reconstructing a multi-terabyte LUN can take a significant amount of time, especially when the LUN holds very small files and there is substantial I/O activity on those files. Data availability is not affected during regeneration, and a RAID 6 virtual disk can sustain a second drive failure without affecting data availability. However, a RAID 6 virtual disk cannot sustain a third drive failure. Avoiding very large multi-terabyte LUNs whenever possible reduces the regeneration time and thereby reduces the risk of additional errors occurring during regeneration.
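
The four guidelines above can be expressed as a simple planning check. This is a minimal sketch, not a tool shipped with the arrays: the `VirtualDiskPlan` type, the `review` function, and the 2 TB threshold for a "very large" LUN are assumptions made for illustration. The limits of 30, 8, and 6 come from the text.

```python
from dataclasses import dataclass

MAX_DRIVES_SUPPORTED = 30    # array limit quoted in the text
MAX_DRIVES_RECOMMENDED = 8   # best-practice limit from the text
MAX_LUNS_RECOMMENDED = 6     # best-practice limit from the text
LARGE_LUN_TB = 2.0           # ASSUMPTION: the text says only "multi-terabyte"


@dataclass
class VirtualDiskPlan:
    """Hypothetical description of a planned RAID 6 virtual disk."""
    drives: int
    trays_used: int
    lun_sizes_tb: list


def review(plan):
    """Return a list of warnings; an empty list means no guideline is violated."""
    warnings = []
    if plan.drives > MAX_DRIVES_SUPPORTED:
        warnings.append(f"{plan.drives} drives exceeds the limit of {MAX_DRIVES_SUPPORTED}")
    elif plan.drives > MAX_DRIVES_RECOMMENDED:
        warnings.append(f"{plan.drives} drives exceeds the recommended {MAX_DRIVES_RECOMMENDED}")
    if plan.trays_used < 2:
        warnings.append("virtual disk is not striped across multiple trays")
    if len(plan.lun_sizes_tb) > MAX_LUNS_RECOMMENDED:
        warnings.append(f"{len(plan.lun_sizes_tb)} LUNs exceeds the recommended {MAX_LUNS_RECOMMENDED}")
    if any(size >= LARGE_LUN_TB for size in plan.lun_sizes_tb):
        warnings.append("at least one LUN is multi-terabyte; expect long reconstruction")
    return warnings


good = VirtualDiskPlan(drives=8, trays_used=8, lun_sizes_tb=[0.5] * 6)
bad = VirtualDiskPlan(drives=12, trays_used=1, lun_sizes_tb=[3.0] * 7)

print(review(good))
for warning in review(bad):
    print("WARNING:", warning)
```

The first plan follows every guideline and produces no warnings; the second violates all four and produces one warning per guideline.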

Conclusions

RAID 6 provides protection against two concurrent drive failures by using two independent parity calculations. Sun Microsystems takes advantage of the better performing P+Q algorithm implementation of RAID 6. For improved performance, the intelligent RAID controllers in the Sun Storage 2500 series and 6140 arrays use embedded processors for RAID computations, and those in the Sun Storage 6580 and 6780 arrays use dedicated processors.

RAID 6 offers highly available storage for applications and implementations requiring extremely high fault tolerance while keeping cost down and performance at an acceptable level during normal circumstances. If best practices are followed, a RAID 6 disk group is capable of performing at rates very close to RAID 5 with a slightly higher cost. RAID 6 is also capable of providing reliability close to RAID 1+0 at a fraction of the cost.

Sun Storage 2500 series arrays running firmware version 7.35.x.x, Sun Storage 6140 arrays running firmware revision 7.10.x.x, and all Sun Storage 6580 and 6780 arrays by default have RAID 6 capabilities and dedicated RAID computation processors, which allow for enhanced RAID 6 performance. When the best practices configuration guidelines are followed, a RAID 6 virtual disk is able to sustain up to two complete expansion tray failures within a Sun Storage 2500 series, 6140, 6580, or 6780 array.
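
The cost comparison with RAID 5 and RAID 1+0 can be made concrete by looking at capacity overhead alone. The sketch below is an illustration under stated assumptions: drives are of equal size, "cost" is taken to mean only the share of raw capacity spent on redundancy, and the `usable_fraction` function is hypothetical.

```python
def usable_fraction(level, drives):
    """Fraction of raw capacity left for data, assuming equal-size drives."""
    if level == "RAID 5":
        return (drives - 1) / drives   # one drive's worth of parity
    if level == "RAID 6":
        return (drives - 2) / drives   # two drives' worth of parity (P and Q)
    if level == "RAID 1+0":
        return 0.5                     # every block is mirrored once
    raise ValueError(f"unknown RAID level: {level}")


# Eight drives is the recommended maximum for a RAID 6 virtual disk.
for level in ("RAID 5", "RAID 6", "RAID 1+0"):
    print(f"{level}: {usable_fraction(level, 8):.1%} usable")
```

For an eight-drive group this prints 87.5% for RAID 5, 75.0% for RAID 6, and 50.0% for RAID 1+0, which is the sense in which RAID 6 costs slightly more than RAID 5 and considerably less than RAID 1+0.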

About the Author

Said A. Syed has over 14 years of industry experience, including over 8 years with Sun. Said started with Sun as a System Support Engineer in Chicago supporting high-end and mid-range servers and Sun storage products. Said joined Sun's Storage Product Technical Support group in 2004 as the Sun Support Services global lead for Brocade SAN products. In this position, Said managed the Sun Support Services relationship with Brocade Support Services directly and supported Sun customers worldwide on high-visibility, high-severity escalations involving SAN infrastructure products and Sun's high-end and low-cost storage products, the Sun Storage 3000 and 9000 series arrays. In 2008, Said was promoted to a Staff Engineer role within Sun's NPI and OEM array engineering group and is currently chartered with gaining an in-depth understanding of how virtualization applications such as VMware ESX server, the Sun xVM platform, Microsoft Hyper-V virtualization, Sun Logical Domains (LDoms), Solaris Containers, Cloud Computing, and other similar applications interact with Sun's modular and high-end storage arrays, the Sun Storage 2500, 6000, and 9000 series arrays.

Acknowledgements

The author would like to thank Andrew Ness, a Staff Engineer within the NPI and OEM array engineering group at Sun, for reviewing the technical details outlined in this document for accuracy. Thanks also to Michael Jeffries for reviewing the document for technical content, flow, and verbiage. Furthermore, the author would like to extend his thanks to the Sun BluePrints team for assisting with technical writing, final review, and publication.

References

Sun Storage 2500 series arrays:
http://dlc.sun.com/pdf/820-6247-10/820-6247-10.pdf
http://www.sun.com/storage/disk_systems/workgroup/2510/
http://www.sun.com/storage/disk_systems/workgroup/2530/
http://www.sun.com/storage/disk_systems/workgroup/2540/

Sun Storage 6140 array:
http://www.sun.com/storagetek/disk_systems/midrange/6140/

Sun Storage 6580 and 6780 arrays:
http://www.sun.com/storage/disk_systems/midrange/6580/
http://www.sun.com/storage/disk_systems/midrange/6780/

Anvin, H. Peter. The Mathematics of RAID-6, online paper, July 2008.
http://kernel.org/pub/linux/kernel/people/hpa/raid6.pdf

Schroeder, Bianca and Gibson, Garth A. Disk failures in the real world: What does an MTTF of 1,000,000 hours mean to you? FAST '07: 5th USENIX Conference on File and Storage Technologies, USENIX Association.
http://www.usenix.org/events/fast07/tech/schroeder/schroeder.pdf

Ordering Sun Documents

The SunDocs(SM) program provides more than 250 manuals from Sun Microsystems, Inc. If you live in the United States, Canada, Europe, or Japan, you can purchase documentation sets or individual manuals through this program.

Accessing Sun Documentation Online

The docs.sun.com web site enables you to access Sun technical documentation online. You can browse the docs.sun.com archive or search for a specific book title or subject. The URL is http://docs.sun.com/

To reference Sun BluePrints Online articles, visit the Sun BluePrints Online Web site at: http://www.sun.com/blueprints/online.html

Sun Microsystems, Inc. 4150 Network Circle, Santa Clara, CA 95054 USA Phone 1-650-960-1300 or 1-800-555-9SUN (9786) Web sun.com

© 2009 Sun Microsystems, Inc. All rights reserved. Sun, Sun Microsystems, the Sun logo, BluePrints, Java, MySQL, Solaris, and SunDocs are trademarks or registered trademarks of Sun Microsystems, Inc. or its subsidiaries in the United States and other countries. Information subject to change without notice. Printed in USA 02/09
